Get the latest AI news, understand why it matters, and learn how to apply it in your work, all in just 5 minutes a day. Join 2,000,000+ subscribers.

Ads are officially coming to ChatGPT

Read Online | Sign Up | Advertise

Good morning, AI enthusiasts. Sam Altman once called ads in ChatGPT a "last resort" — but now, they're officially on the way.

OpenAI will begin testing targeted ads for free and budget tier users in the U.S., marking a controversial monetization shift that could set the tone for how the entire AI industry balances user trust with revenue pressures.

In today’s AI rundown:

OpenAI officially bringing ads to ChatGPT

The Rundown Roundtable: Our AI use cases

Code from your phone with OpenAI’s Codex

Musk, OpenAI trade (more) public blows

4 new AI tools, community workflows, and more

LATEST DEVELOPMENTS

OPENAI

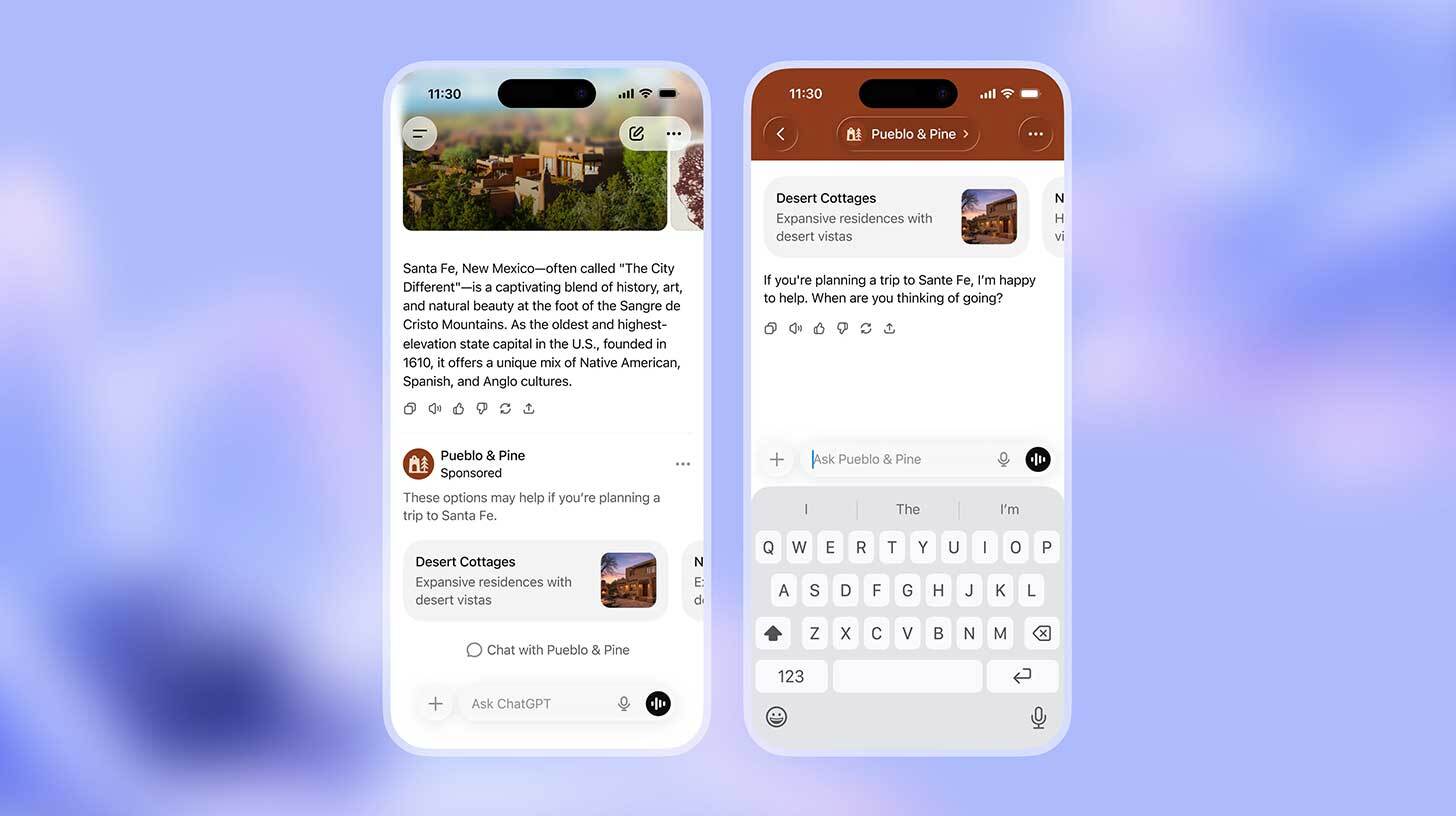

📢 OpenAI officially bringing ads to ChatGPT

Image source: OpenAI

The Rundown: OpenAI just announced it will begin testing targeted advertisements in ChatGPT for free and Go tier users in the U.S. — putting into motion a major (and controversial) monetization shift for the AI giant as it eyes a late-2026 IPO.

The details:

Ads will appear below responses as "Sponsored Recommendations," targeted based on conversations but kept out of health and politics conversations and not shown to underage users.

The move coincides with the company’s $8/month ChatGPT Go tier launching globally, with ads included to offset the lower price point.

Premium tiers (Plus, Pro, Business, Enterprise) remain ad-free, with OAI pledging to never sell user data or let ads influence ChatGPT's answers.

Sam Altman had said in 2024 that ads in ChatGPT would be a “last resort”, but more recently said he “wasn’t totally against it” if it didn’t violate user trust.

Why it matters: We’ve heard conflicting statements from OAI’s leadership in the past on ads, but the March hiring of Instacart’s Fidji Simo hinted at both the IPO and advertising route. Ads in AI assistants are a slippery slope, so the execution will be a nuanced moment to watch — potentially setting the tone for the industry as a whole.

TOGETHER WITH THOUGHTWORKS

📈 Unify data, delivery and human expertise

The Rundown: AI/works is Thoughtworks’ agentic development platform that unifies expert technologists and decades of engineering excellence so you can build, modernize, and evolve enterprise systems faster.

Discover how it enables you to:

Move from spec to code

Build once, reuse everywhere, compound speed over time

Integrate seamlessly with your stack

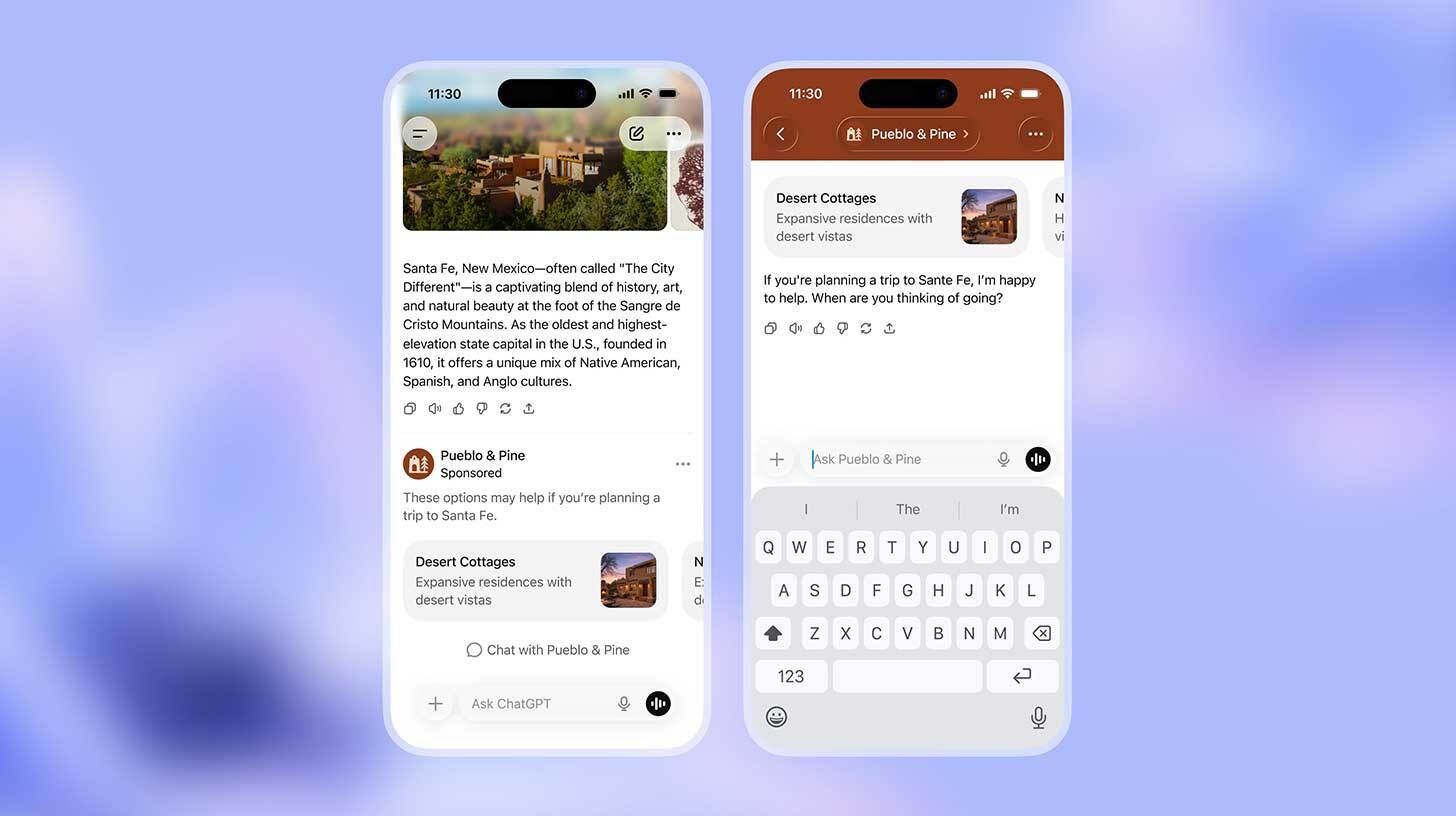

THE RUNDOWN ROUNDTABLE

💡 The Rundown Roundtable: Our AI use cases

Image source: Ideogram / The Rundown

The Rundown: The Rundown Roundtable is a weekly feature in which we poll members of The Rundown staff about how we use AI in our work and daily lives.

Rowan, Founder & CEO: Granola AI has become my meeting recorder outside of just Zoom meetings. I recently had a 3-hour meeting with legal for business structuring, and I turned on Granola AI on my phone (with other parties' consent), and it picked up the entire transcript nearly word for word (for over 3 hours!), which I later pasted into ChatGPT to go back and forth on things that I didn't fully grasp in the moment.

Joey, Head of Partnerships: I have been looking around at new apartment development projects, looking to potentially buy in the next two years. I used ChatGPT Deep Research to help compare the cost, the quality of the appliances and materials the builders are using, and chart out the overall cost.

Jennifer, Tech & Robotics Writer: I use Ideogram and Reve for newsletter images, and they’re impressively good even with minimal prompts. I’ll set a general style and tone, and they consistently deliver solid renderings of the tech personalities we cover — the style has become part of our storytelling.

AI TRAINING

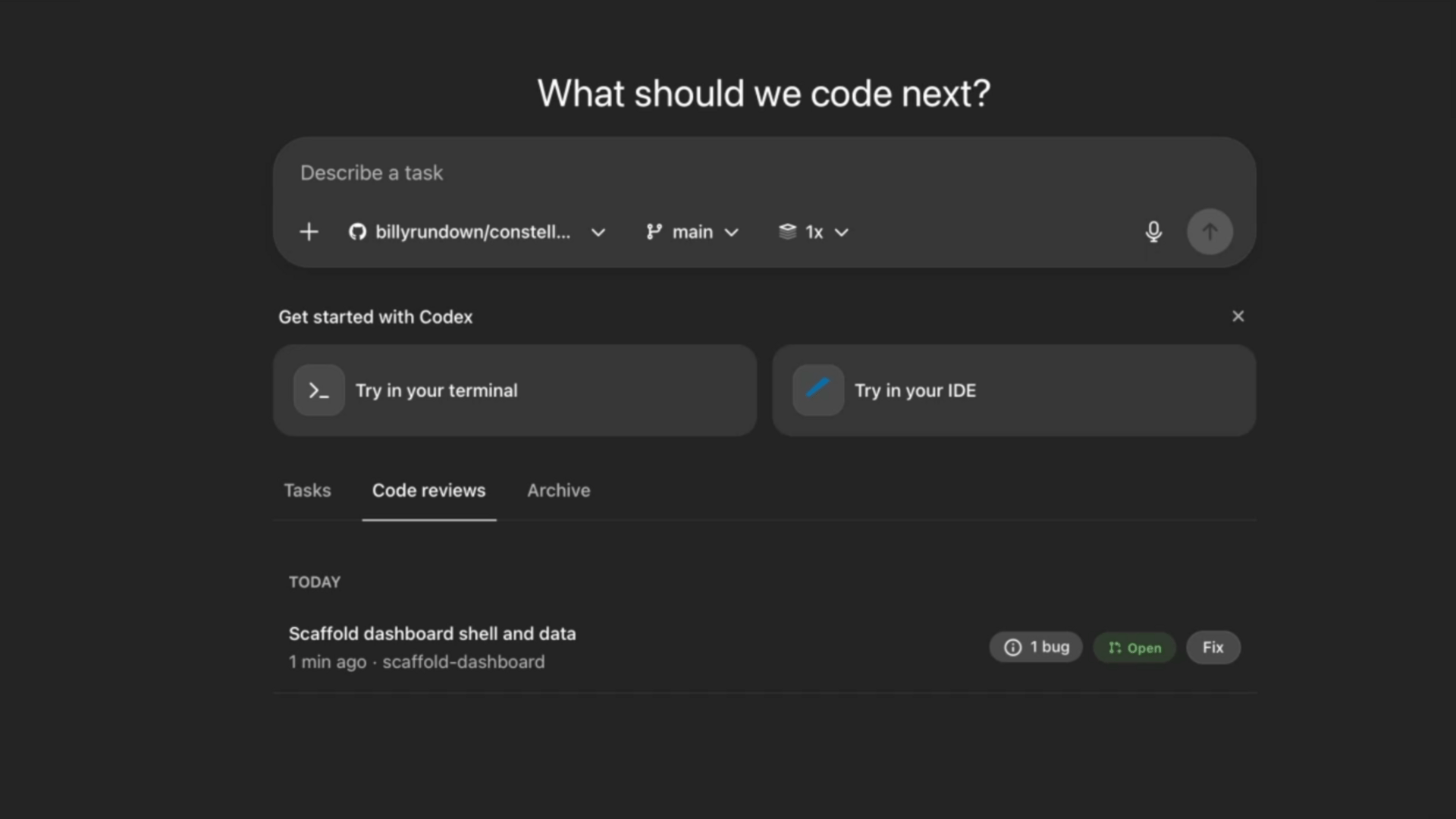

📱 Code from your phone with OpenAI’s Codex

The Rundown: In this tutorial, you’ll learn how to 10× your code output with Codex by setting it up in Cursor, running it in the cloud from anywhere (even your phone), and configuring an agent that automatically reviews your code for you.

Step-by-step:

Install Codex by going to the Codex quickstart, selecting your IDE (Cursor), and launching a new Codex Agent inside that editor

Ask Codex to generate an agents.md file from your project (or a PRD/spec if starting fresh), then initialize Git so Codex understands your codebase and rules

Explore slash commands (like /review, /status, and /context) to review changes, manage context, and control how Codex reasons across your files

Push the project to GitHub and connect it to Codex to access from anywhere, and enable automatic PR reviews so every pull request is reviewed in the cloud

Pro tip: Code locally in Cursor, then hand off reviews to cloud Codex — cloud runs don’t count against your local usage.
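Before pushing to GitHub, it can help to confirm the local setup steps are done. Here is a minimal sketch of such a check. The helper name `codex_preflight` is hypothetical and not part of Codex; it only looks at the filesystem for an agents.md file and a Git repository, and it assumes both live at the project root.

```python
from pathlib import Path


def codex_preflight(project_dir: str) -> dict[str, bool]:
    """Report which of the tutorial's local setup steps look complete.

    Hypothetical helper: it only inspects the filesystem and never calls Codex.
    """
    root = Path(project_dir)
    # Match the agents file case-insensitively, since filename casing may vary.
    has_agents_file = any(
        p.is_file() and p.name.lower() == "agents.md" for p in root.iterdir()
    )
    # `git init` creates a .git directory at the repository root.
    has_git_repo = (root / ".git").is_dir()
    return {"agents_file": has_agents_file, "git_initialized": has_git_repo}
```

Calling `codex_preflight(".")` from the project folder returns a small dictionary you can glance at before connecting the repo to cloud Codex.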

PRESENTED BY GURU

🧠 Your AI source of truth

The Rundown: Guru is the AI Source of Truth that connects all of your company’s tools and delivers cited, permission-aware answers everywhere you work. With one governed knowledge layer powering both your people and your AIs, teams move faster — with fewer blind spots and mistakes.

Guru allows you to:

Connect all knowledge with permission-aware access

Get trusted, cited answers in chat and everywhere else you work

Experience knowledge that improves and verifies itself

ELON MUSK & OPENAI

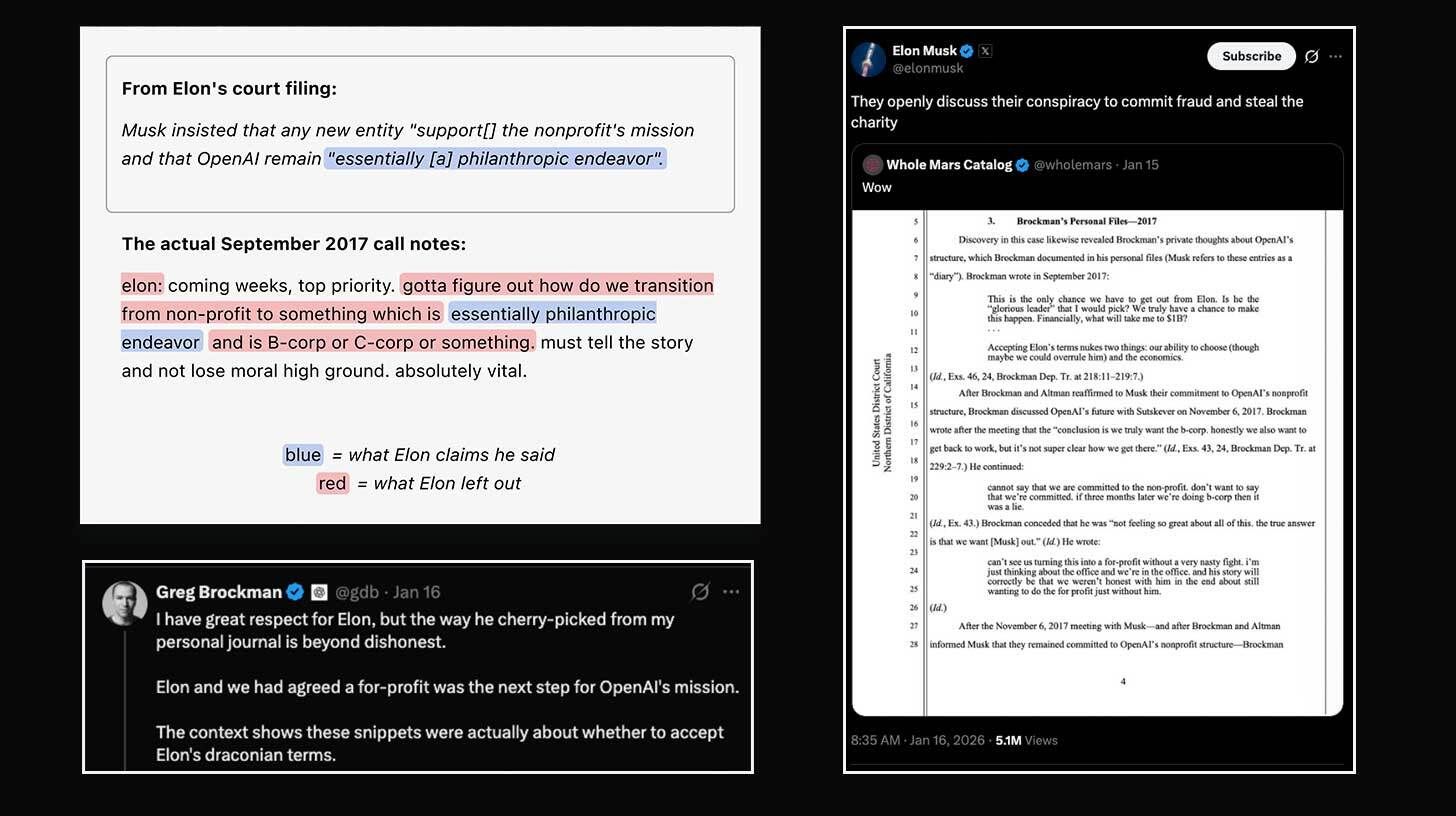

⚔️ Musk, OpenAI trade (more) public blows

Image source: Screenshots from X, OpenAI

The Rundown: Elon Musk and OpenAI continued to spar ahead of their April trial, with Musk sharing anecdotes from Greg Brockman’s 2017 private journal and Sam Altman accusing Musk of "cherry-picking" and OAI releasing correspondence of its own.

The details:

The file details Brockman’s convo with Ilya Sutskever on OAI’s structure and their desire to become a B-Corp, along with concerns over Musk’s involvement.

Altman posted notes of Musk wanting to “accumulate $80B for a self-sustaining city on Mars” and a succession plan for his children to control AGI.

OpenAI published a blog post of its own highlighting context and discrepancies between Musk’s filing and Brockman’s notes, calling it “The truth Elon left out”.

Musk tweeted, "Can't wait to start the trial. The discovery and testimony will blow your mind", and is reportedly seeking $134B in damages in the lawsuit.

Why it matters: Get the popcorn ready, folks. If the early discovery nuggets are any indication, we're in for the messiest, most expensive AI lawsuit ever — and a front-row seat to the origin story of tech's biggest current arch-rivalry. Both sides clearly think the full record helps them, which means April is about to get VERY entertaining.

QUICK HITS

🛠️ Trending AI Tools

🧠 Scroll.ai - Turn any knowledge base into enterprise-grade chatbots, with accuracy and depth far beyond generic models*

💼 Claude Cowork - Claude Code’s agentic abilities, now available to Pro tier

⚙️ Replit - New capabilities to easily build and deploy mobile apps

📸 FLUX.2 Klein - BFL’s new ultra-fast, powerful AI image editing model

*Sponsored Listing

📰 Everything else in AI today

Black Forest Labs released FLUX.2[klein], a new speed-focused variant of the company’s powerful AI editing model.

DeepMind CEO Demis Hassabis said that China’s models “may be only a matter of months behind” U.S. labs, but have yet to show innovation surpassing the frontier.

Elon Musk announced that xAI’s Colossus 2 supercomputer powering Grok is now live, marking the world’s first operational gigawatt cluster.

The Wikimedia Foundation announced new AI partnerships with Amazon, Meta, Microsoft, Perplexity, and Mistral, enabling training on the nonprofit’s 65M+ articles.

OpenAI CFO Sarah Friar published a blog post revealing the company hit $20B+ in annualized revenue for 2025, tripling YoY, with compute expanding 10x since 2023.

COMMUNITY

🤝 Community AI workflows

Every newsletter, we showcase how a reader is using AI to work smarter, save time, or make life easier.

Today’s workflow comes from reader Forest in Texas:

"I was sick of ‘Scam Likely’ ruining my dinner. So, instead of getting mad, I got even. I built a digital bodyguard named Forest. He uses OpenAI and a custom voice model to sound exactly like a confused elderly man.

His instructions are simple: be polite, be interested, but never, ever let them close the deal. The Outcome: Sweet, sweet revenge. I get to listen to recordings of scammers losing their minds arguing with an AI about armadillos, and I didn't have to lift a finger."

How do you use AI? Tell us here.

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Murati’s co-founders return to OpenAI

Read our last Tech newsletter: Wikipedia inks deals with Amazon, Meta

Read our last Robotics newsletter: 1X now has a world model

Today’s AI tool guide: Code from your phone with OpenAI’s Codex

RSVP to next workshop @ 4PM EST Friday: AI Foundations Bootcamp pt. 3

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — the humans behind The Rundown

Wikipedia inks deals with Amazon, Meta

Good morning, tech enthusiasts. Wikimedia just put a price tag on one of the internet’s most scraped resources.

The nonprofit behind Wikipedia has signed licensing deals with Amazon, Meta, and Microsoft, turning what was once a free-for-all (65M articles, zero compensation) into a paid offering. As AI chatbots siphon off human readers, the question now is whether Wikipedia can turn this leverage into a sustainable future in the AI age.

In today’s tech rundown:

Wikipedia inks deals with Big Tech

Amazon’s data centers get copper from bacteria

News Corp brings AI into the newsroom

Tiny scanner detects allergens in minutes

Quick hits on other tech news

LATEST DEVELOPMENTS

WIKIPEDIA

🤝 Wikipedia inks deals with Big Tech

Image source: Wikipedia / Reve

The Rundown: Wikimedia just announced licensing deals with Amazon, Meta, Microsoft, Perplexity, and Mistral AI, formalizing what had long been a one-sided relationship where tech giants scraped its 65M articles without compensation.

The details:

The foundation reported an 8% decline in human traffic, attributed to AI chatbots that answer questions directly without sending users to sources.

Disguised bots have been heavily taxing Wikipedia’s servers as they scrape massive amounts of content to train large language models.

Founder Jimmy Wales endorsed the arrangement, noting he’s happy AI trains on Wikipedia's human-curated content compared to alternatives like X.

Wikipedia is also exploring AI tools that could reduce tedious editor tasks, like automatically updating dead links by scanning surrounding text.

Why it matters: The new partners join Google, which signed a deal with Wikimedia in 2022, and should help offset infrastructure costs for the nonprofit, which relies on small public donations. Meanwhile, Wikipedia’s editors are pushing back on its testing of generative AI, calling it a “ghastly idea” that could undermine trust in the platform.

AMAZON

🦠 Amazon's data centers get copper from bacteria

Image source: Nuton (harvesting copper cathode)

The Rundown: Amazon Web Services is buying copper extracted by bacteria from an Arizona mine that recently became the U.S.’s first new source of the metal in more than a decade, locking in a lower-carbon supply to feed its voracious AI data centers.

The details:

Amazon has signed a two-year deal to buy copper from Excelsior Mining’s Gunnison project in Arizona, the first new U.S. copper output in 10+ years.

The copper will be produced using Rio Tinto’s Nuton bioleaching tech, which is billed as having a smaller environmental footprint than conventional mining.

The project targets approximately 30K tonnes of refined copper over four years, perhaps enough to power a single mammoth data center.

AI sector growth is expected to boost global copper demand 50% by 2040, though analysts warn supplies could fall far short.

Why it matters: Amazon is reaching past utilities and chipmakers into the dirty, capital-intensive world of mining — a sign that Big Tech now sees raw materials as strategic assets. AI data centers are copper-hungry beasts, and with EVs and clean energy already straining supply, expect more cloud giants to lock in deals before rivals do.

SYMBOLIC AI

🗞️ News Corp brings AI into the newsroom

Image source: Symbolic AI

The Rundown: Rupert Murdoch’s News Corp just signed a major deal with Symbolic, a stealthy AI startup founded by former eBay CEO Devin Wenig and Ars Technica co-founder Jon Stokes, to slash newsroom research time by up to 90%.

The details:

Symbolic says it functions as an automated AI editor that unifies research, writing, and publishing into a single platform.

Dow Jones Newswires — the service behind the Wall Street Journal, Barron’s, and MarketWatch — will be the first to deploy the tech as part of its workflow.

The platform tackles everything from audio transcription and document extraction to fact-checking, headline optimization, and SEO guidance.

Wenig calls the partnership “a watershed moment” and predicts no media or communications business will stay competitive without a similar approach.

Why it matters: Major outlets like the New York Times, BBC, and Reuters have already built proprietary AI tools into their workflows — and News Corp itself signed a multi-year licensing deal with OpenAI in 2024. If Symbolic’s 90% productivity claim holds at scale, it could reshape how publishers think about AI investment.

TECH FOR GOOD

😋 Tiny scanner detects allergens in minutes

Image source: Allergen Alert

The Rundown: A French diagnostics firm has turned a decade of allergy research into a pocket-sized lab called Allergen Alert, a battery-powered scanner that gives people with severe allergies a way to verify their food instead of trusting packaging or waitstaff.

The details:

Launched at CES, the device analyzes a tiny food sample in a disposable pouch and returns allergen results in about two minutes via screen or app.

The first-gen model, due for pre-orders later this year, detects milk and gluten using immunoassay tech borrowed from clinical labs.

The roadmap targets all nine major allergens by 2028, adding peanuts, tree nuts, eggs, shellfish, wheat, soy, and sesame to the list.

Test pouches run under $10 each, with a subscription tier aimed at high-volume users like restaurants.

Why it matters: Beyond the obvious lifesaving potential, Allergen Alert signals a future where portable biosensors become standard kitchen gear — driven by rising allergy rates and brutal medical costs pushing both restaurants and households toward always-on food safety. Water and environmental testing could be next.

QUICK HITS

📰 Everything else in tech today

Customers affected by Verizon’s wireless outage can access a $20 account credit via the myVerizon app, after the disruption left around 170K users without service.

Amazon is fighting Saks Global’s bid for up to $1.75B in bankruptcy financing, telling a judge the deal would leave its own $475M equity stake effectively worthless.

Spotify is hiking U.S. Premium subscription prices again in February, raising the individual plan from $11.99 to $12.99 a month.

Elon Musk posted on X that Tesla will stop selling its Full Self‑Driving (Supervised) package outright after Feb. 14 and will only offer it as a monthly subscription.

Billionaire tech investor Peter Thiel made his biggest political donation in years, writing a $3M check to fight California’s proposed 2026 Billionaire Tax Act.

Indian launch startup EtherealX quintupled its valuation to $80.5M as it races to build a fully reusable, Falcon 9–class rocket that can return its booster and upper stage.

YouTube is adding new parental controls that let you set daily time limits or block access entirely for kids watching Shorts on supervised accounts.

German investor DTCP is raising a €500M (~$580M) fund that it says will be Europe’s largest dedicated defense-tech vehicle for dual‑use and military startups.

NASA cut short the Crew-11 mission and brought four astronauts back from the ISS on SpaceX Dragon in the agency’s first-ever medical evacuation from space.

Telecoms giant Ericsson plans to cut about 1,600 jobs in Sweden as part of a cost-saving drive.

Thailand approved 96.9B baht (~$3.1B) in new data center and data-hosting projects, reinforcing the country’s push to become a regional tech hub.

COMMUNITY

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Murati's co-founders return to OpenAI

Read our last Tech newsletter: A moon hotel is taking reservations

Read our last Robotics newsletter: 1X now has a world model

Today’s AI tool guide: Run parallel media tasks with Claude Cowork

RSVP to next workshop @ 4PM EST today: AI Foundations Bootcamp pt. 2

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — The Rundown’s editorial team

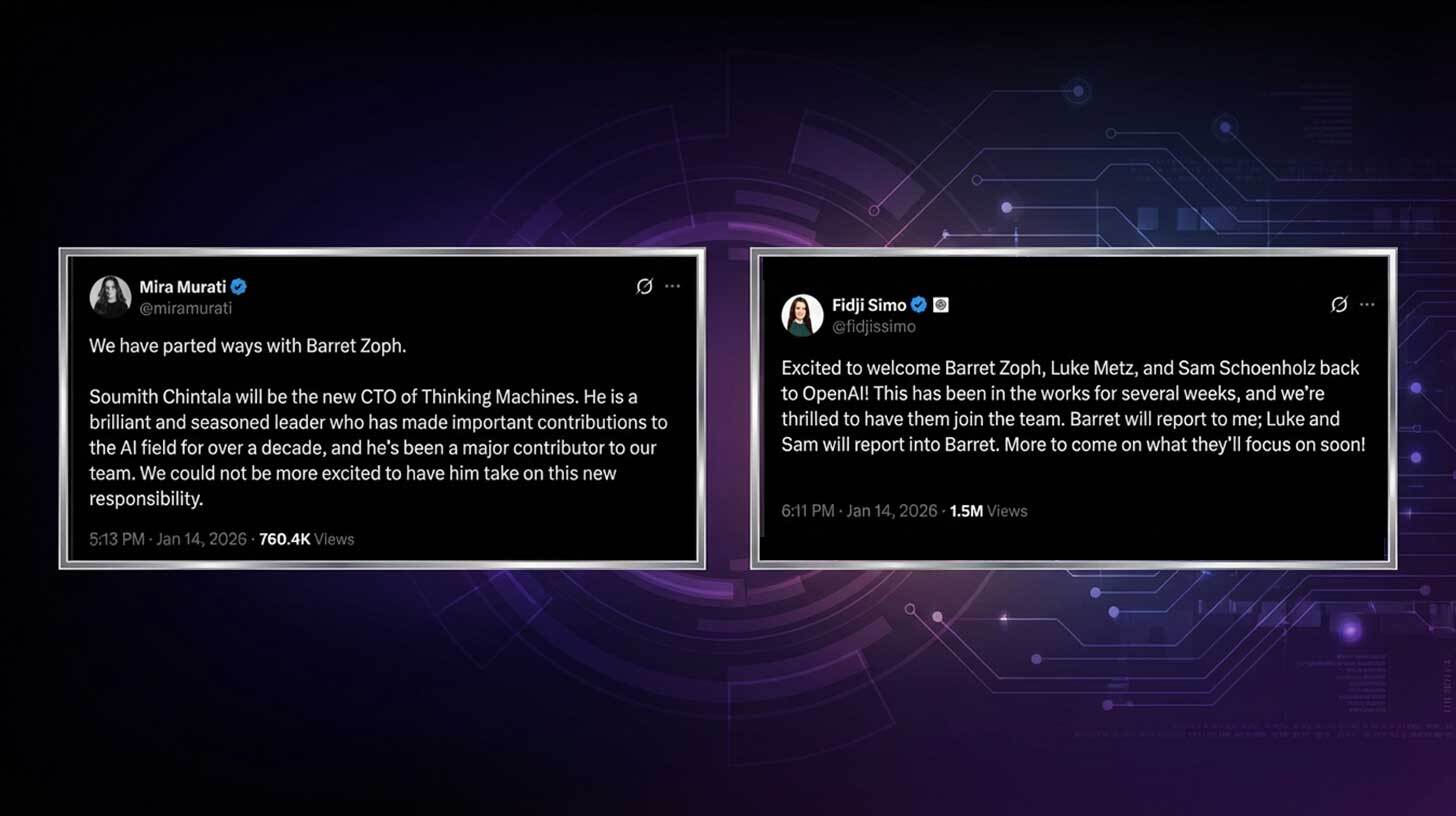

Murati's co-founders return to OpenAI

Good morning, AI enthusiasts. OpenAI used to say it was "nothing without its people" — and now it's getting a few big ones back in dramatic fashion.

Former CTO Mira Murati’s Thinking Machines just fired co-founder and CTO Barret Zoph over alleged misconduct, only for him and several others to land back at OAI in the industry’s latest AI talent shakeup.

Reminder: Our next workshop is today at 4 PM EST — join and learn how to leverage Projects and Deep Research in your workflows to upgrade working with AI. RSVP here.

In today’s AI rundown:

Thinking Machines loses co-founders to OAI

Cursor builds browser with AI agents

Run parallel media tasks with Claude Cowork

OpenAI invests in Altman’s Neuralink rival

4 new AI tools, community workflows, and more

LATEST DEVELOPMENTS

THINKING MACHINES & OPENAI

🚨 Thinking Machines loses co-founders to OAI

Image source: Lovart / The Rundown

The Rundown: Mira Murati's Thinking Machines Lab just parted ways with co-founder and CTO Barret Zoph amid misconduct allegations (H/T to Kylie Robison for breaking the news), with Zoph and several other former staffers returning to OAI just hours later.

The details:

Murati announced the split at an all-hands meeting and on X, with Zoph reportedly accused of sharing proprietary information with competitors.

OAI applications CEO Fidji Simo welcomed the trio back in a post on X, revealing the company had been in discussions with Zoph for weeks.

Murati named longtime Meta AI researcher and PyTorch framework creator Soumith Chintala as Thinking Machines' new CTO.

The departures mark Thinking Machines’ third co-founder exit in under a year, following Andrew Tulloch's move to Meta in October.

Why it matters: In 2024, Murati's OAI departure was a shock to the AI world, and now her own co-founders are boomeranging back to her former employer under ugly circumstances. OAI is “nothing without its people,” as the old saying goes, and now it’s getting a few big ones back (and potentially more) — albeit in a dramatic fashion.

TOGETHER WITH HUBSPOT

🤑 100 ways to diversify your income stream

The Rundown: Whether you're looking to supplement your 9-5 or pursue passion projects, HubSpot's curated side hustle database provides 100 vetted opportunities with the strategic insights you need to match opportunities with your goals.

HubSpot’s list gives you:

100 carefully selected side hustle ideas for every skill level

Investment and skill breakdowns to guide your decisions

Opportunities designed to complement your existing goals

CURSOR

🤖 Cursor builds browser with AI agents

Image source: Cursor CEO Michael Truell (@mntruell on X)

The Rundown: Cursor published a new blog post revealing it ran hundreds of AI coding agents autonomously for weeks on end, with one experiment producing a 3M+ line web browser built entirely from the ground up using GPT-5.2.

The details:

Cursor organized agents into planners, workers, and judges (similar to the viral Ralph Wiggum technique), allowing hundreds to run and collaborate together.

The browser was built in under a week by the agents from scratch, with the final output actually loading simple websites correctly.

Other experiments included a Windows 7 emulator, an Excel clone, and an internal migration of Cursor's codebase, each spanning a million+ lines of code.

The team also noted that GPT-5.2 handled long autonomous runs significantly better than Claude Opus 4.5, which tended to take shortcuts.

Why it matters: The current frontier generation of coding agents has broken through an invisible capability wall, and we’re seeing it everywhere from viral Claude Code use cases to AI agent swarms crushing 1M line projects over weeks. With increased agent coordination and durations of work, the entire economics of development start to shift.

AI TRAINING

🤓 Run parallel media tasks with Claude Cowork

The Rundown: In this tutorial, you’ll learn how to use Claude Cowork to process images and videos in parallel — compressing files, extracting audio, and handling media-heavy tasks automatically on your computer.

Step-by-step:

Update your Claude desktop app (requires a Claude Max subscription at $100/month) and open the new Cowork tab

Put one or two images and videos in a new folder, then click "Work in a folder" and select that folder

Give Claude this prompt: "Save copies of the images in this folder, then reduce the file size of each by at least 50%"

Before it finishes, add another prompt: "Extract the audio from the small steps video and save it as an MP3" — Claude will power through both tasks in parallel

Pro tip: Add the Context7 extension to give Cowork access to open-source documentation.
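For a sense of what the two prompts above amount to if done by hand, here is a rough sketch. It is not how Cowork works internally (the tutorial does not say); it assumes ffmpeg with the libmp3lame encoder is installed, and the function names `audio_extract_command` and `meets_size_target` are hypothetical.

```python
from pathlib import Path


def audio_extract_command(video: str, mp3_out: str) -> list[str]:
    """Build (but do not run) an ffmpeg command that saves a video's audio as MP3."""
    return [
        "ffmpeg",
        "-i", video,               # input video file
        "-vn",                     # drop the video stream
        "-codec:a", "libmp3lame",  # encode audio as MP3
        "-q:a", "2",               # variable bitrate quality level
        mp3_out,
    ]


def meets_size_target(original: str, reduced: str, min_reduction: float = 0.5) -> bool:
    """Return True if `reduced` is at least `min_reduction` smaller than `original`."""
    original_size = Path(original).stat().st_size
    reduced_size = Path(reduced).stat().st_size
    if original_size == 0:
        return False  # nothing to reduce
    return (original_size - reduced_size) / original_size >= min_reduction
```

The command list can be passed to `subprocess.run(...)` once you are happy with it, and `meets_size_target` gives a quick way to confirm each compressed copy really hit the 50% reduction the prompt asked for.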

PRESENTED BY TELY

🔎 Are you invisible in AI search?

The Rundown: You’re in a niche industry. Customers search on Google, ChatGPT, and Perplexity, but your company doesn’t show up because your website doesn’t answer their questions. Tely AI analyzes the questions your customers ask and automatically creates and publishes content that answers them on your website, bringing high-quality leads on autopilot.

With Tely AI, you can:

Have +20% monthly organic growth.

Get indexed on Google, ChatGPT, and Perplexity in as little as 1 week

Enjoy full automation for topics, writing, and publishing

Get discovered by buyers already searching for your solution

MERGE LABS & OPENAI

🧠 OpenAI invests in Altman’s Neuralink rival

Image source: Lovart / The Rundown

The Rundown: OpenAI announced a new seed investment into Merge Labs, a brain-computer interface startup co-founded by Sam Altman that emerged from stealth alongside its $252M raise, with the AI giant becoming the company’s largest backer.

The details:

Merge is aiming to boost BCI bandwidth using ultrasound and engineered proteins, skipping the surgical brain implants required by rivals like Neuralink.

The founding team includes World/Tools for Humanity's Alex Blania and Sandro Herbig, plus researchers from Caltech and the nonprofit Forest Neurotech.

OAI will work on AI models and tools to help Merge read brain signals and ramp R&D, saying BCIs will be a “natural, human-centered way” to interact with AI.

Why it matters: OAI’s investment continues the circular merry-go-round of dealmaking, this time on a more personal level for Altman. But the bigger story may be the turf he’s encroaching on, bringing his feud with Elon Musk into the brain-computer interface sector — and likely making for a whole new round of drama in the process.

QUICK HITS

🛠️ Trending AI Tools

🗣️ Speechmatics - Build voice-enabled apps with Speechmatics’ Startup Program and get up to $50k in credits to deploy to production*

💬 ChatGPT Translate - Translate text, voice, or images across 50+ languages

🔊 TranslateGemma - Google’s new family of open translation models

💼 Claude Cowork - Bring Claude Code’s agentic capabilities to everyday tasks

*Sponsored Listing

📰 Everything else in AI today

ByteDance released SeedFold, a new open-source protein structure prediction model that outperforms Google’s AlphaFold3 across several benchmarks.

Elon Musk revealed that Grok 4.20 will lag behind Claude in coding, saying Anthropic has “done something special” but that cutting off xAI was “not good for their karma”.

Replit launched mobile app creation, letting users build, test via QR code, and publish directly to the App Store from a single platform.

Airbnb appointed Ahmad Al-Dahle as Chief Technology Officer, bringing in Meta's former head of generative AI and the leader behind the Llama model family.

AI creative platform Higgsfield announced a new $130M funding round at a $1.3B valuation, with the company claiming to be the fastest scaling AI company in history.

COMMUNITY

🤝 Community AI workflows

Every newsletter, we showcase how a reader is using AI to work smarter, save time, or make life easier.

Today’s workflow comes from reader John D. in Daytona Beach, FL:

"As the IT guy, I enjoy showing coworkers what AI can do. For our accounting team, I fed a CSV file of our annual Business Prime account activity into Claude, and it created a colorful summary with charts & graphs and broke down spending by month, who spent what, product categories we spent most on, and where we can save money."

How do you use AI? Tell us here.

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Gemini’s ‘Personal Intelligence’ upgrade

Read our last Tech newsletter: A moon hotel is taking reservations

Read our last Robotics newsletter: 1X now has a world model

Today’s AI tool guide: Run parallel media tasks with Claude Cowork

RSVP to next workshop @ 4PM EST today: AI Foundations Bootcamp pt. 2

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — the humans behind The Rundown

1X now has a world model

Good morning, robotics enthusiasts. 1X wants its Neo humanoids to learn the way humans do: by watching. The Norwegian startup just unveiled a physics-based world model that lets robots learn tasks from video instead of code.

Right now, that means Neo is learning high fives and handling air fryer baskets, but the approach puts 1X in serious company.

In today’s robotics rundown:

1X releases a world model for Neo

Skild AI hits $14B for building robot brains

Motional robotaxis target Vegas comeback

Robot learns to lip sync by watching YouTube

Quick hits on other robotics news

LATEST DEVELOPMENTS

1X

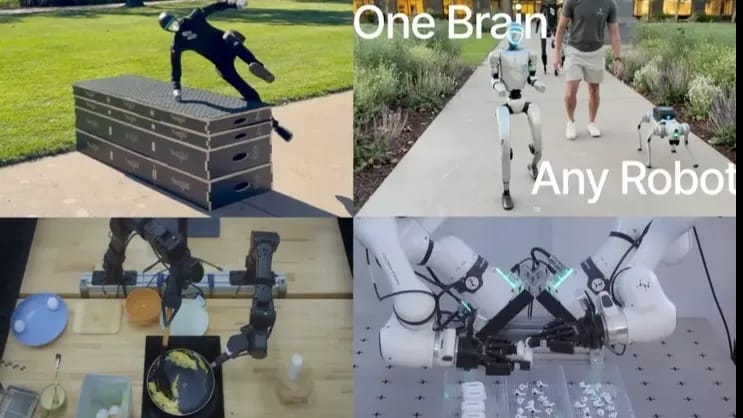

🌎 1X releases a world model for Neo

Image source: 1X / YouTube

The Rundown: Humanoid maker 1X just released a physics-based world model that uses video and prompts to teach its Neo robots new tasks, moving closer to bots that can learn by observation rather than pre-programming.

The details:

The company unveiled its 1X World Model as it prepares to ship Neo to customers who preordered the home robots in October.

Neo captures video data linked to prompts and feeds it back into the model, which then distributes learned behaviors across the entire network of bots.

1X joins competitors like Skild AI (more on it below) and Field AI in pursuing adaptive robotic software that learns from observation.

The world model represents a step toward robots that teach themselves rather than requiring exhaustive pre-programming for each action.

Why it matters: While 1X claims Neo can “transform any prompt into new actions” and “master nearly anything you could think to ask,” the actual learned tasks remain limited to basics like removing air fryer baskets and making toast. Still, the ability to learn simple tasks from observation puts 1X in direct competition with major players.

SKILD AI

🧠 Skild AI hits $14B for building robot brains

Image source: Skild AI

The Rundown: Skild AI, a Pittsburgh-based startup building foundation models to let robots learn by watching humans, tripled its valuation to $14B in seven months on the promise of general-purpose robot brains.

The details:

Skild builds foundation models that retrofit existing robots with adaptive capabilities, allowing machines to learn new tasks without extensive retraining.

Its valuation jumped from $4.5B last summer, reflecting investor confidence that learn-as-you-go software represents the breakthrough needed for robots.

The startup’s models learn by observing human demos, addressing the core bottleneck that keeps robots locked into narrow, pre-programmed routines.

Skild was founded in 2023 by Carnegie Mellon roboticists Deepak Pathak and Abhinav Gupta, and has raised over $300M in a single Series A round.

Why it matters: The sheer amount of training required for robots to learn each new task remains the biggest hurdle to adoption. Adaptive software that lets machines learn as they go could clear the path for broader deployment, though Skild AI faces growing competition from rivals like Field, Physical Intelligence, Figure, and (now) 1X.

MOTIONAL

🎰 Motional robotaxis target Vegas comeback

Image source: Hyundai Motor Group

The Rundown: Motional, the struggling autonomous vehicle company born from Hyundai and Aptiv’s $4B joint venture, is rebooting its robotaxi ambitions with an AI-first self-driving system and a plan to launch driverless service in Las Vegas.

The details:

The company paused operations in 2024 after missing deadlines and cutting 40% of staff, prompting Hyundai to inject $1B after Aptiv withdrew backing.

Motional ditched its complex web of machine learning models for an end-to-end foundation model that adapts to new cities through retraining.

The company will launch IONIQ 5 robotaxi rides with safety drivers later this year, then remove human operators by December 2026 for driverless service.

Test rides showed progress in navigating chaotic Las Vegas hotel pickup zones, though the system still struggled around double-parked delivery vans.

Why it matters: Motional’s promise arrives as Zoox already operates a free public robotaxi service on the Las Vegas Strip and Waymo offers commercial rides in other cities. Whether foundation models can compress years of development into months will determine if Motional survives or becomes another pricey cautionary tale.

ROBOTICS RESEARCH

👄 Robot learns to lip sync by watching YouTube

Image source: Columbia / Reve

The Rundown: The team at Columbia Engineering’s Creative Machines Lab developed a flexible silicone robotic face with 26 tiny motors, then let it learn lip syncing by watching YouTube videos rather than preprogrammed rules.

The details:

The robot first spent hours making random expressions in front of a mirror to learn how its own face moves.

It then watched videos of humans talking and singing to map those movements to speech sounds, an approach dubbed a "vision-to-action" language model.

The system outperformed five existing approaches and generalized across 11 languages without requiring language-specific training data.

When paired with ChatGPT, the lip-syncing skill adds what the researchers call “a whole new depth to the connection” between robots and humans.

Why it matters: Robots like Hanson’s Sophia have been lip-syncing to speech for nearly a decade, but rule-based systems still deliver stiff, uncanny results. Columbia’s learning-based approach could finally narrow that gap — though making robots this emotionally convincing raises concerns of its own.

QUICK HITS

📰 Everything else in robotics today

German auto supplier Schaeffler inked a deal with London-based startup Humanoid to deploy hundreds of bipedal machines across its factories over the next five years.

New York Governor Kathy Hochul is moving to legalize robotaxis statewide, except in New York City, where Waymo and others remain stuck in regulatory limbo.

NYU engineers developed fluid-driven gears that use spinning liquid instead of interlocking teeth, a breakthrough that could make robots more immune to wear.

Shanghai-based AgiBot reportedly captured 39% of the global humanoid robot market in 2025 with over 5,100 units already shipped.

Autonomous trucking startup Kodiak is tapping automotive supply giant Bosch to build production-grade sensors and steering systems for its driverless rigs.

A Nature review of 47 ray-inspired underwater robots found that researchers are still struggling to find effective actuators for mid-sized designs.

China’s Matrix Robotics unveiled MATRIX-3, a humanoid with synthetic skin, 27 DoF hands, and a brain that learns new tasks from spoken commands without training.

Dutch robotics firm Vitestro released its first public video demonstrating Aletta, an autonomous robot that performs diagnostic blood draws without human intervention.

Japanese researchers developed a machine learning system that lets robots learn human grasping techniques from minimal training data, cutting motion errors by 74%.

The Association for Advancing Automation released a 403-page safety standard consolidating U.S. and international rules for industrial robots into one document.

COMMUNITY

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Gemini’s ‘Personal Intelligence’ upgrade

Read our last Tech newsletter: A moon hotel is taking reservations

Read our last Robotics newsletter: Walmart expands drone empire

Today’s AI tool guide: Get the most out of Google’s Gemini in Gmail

RSVP to next workshop @ 4PM EST Friday: AI foundations bootcamp pt. 2

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — The Rundown’s editorial team

Gemini's ‘Personal Intelligence’ upgrade

Good morning, AI enthusiasts. As frontier models converge on capability, the differentiator is becoming personal context — and no one has more of it across the internet than Google.

The company's latest ‘Personal Intelligence’ upgrade lets Gemini pull from Gmail, Photos, and YouTube automatically, turning the apps billions already use into an AI moat rivals will struggle to cross.

In today’s AI rundown:

Gemini’s new ‘Personal Intelligence’ upgrade

McConaughey trademarks himself to fight deepfakes

Get the most out of Google’s Gemini in Gmail

Z AI’s open image model trained on Huawei chips

4 new AI tools, community workflows, and more

LATEST DEVELOPMENTS

🧠 Gemini’s new ‘Personal Intelligence’ upgrade

Image source: Google

The Rundown: Google just launched Personal Intelligence, a new beta feature that lets Gemini reason across apps like Gmail, Photos, YouTube, and Search data to deliver more personalized responses without users needing to specify which app to pull from.

The details:

Personal Intelligence connects Google’s app suite to Gemini, letting the assistant understand, locate, and proactively use personalized details.

The tool can reason across text, images, and videos, with VP Josh Woodward detailing the AI referencing his photos and emails to help at a tire shop.

Personal Intelligence is off by default, with Google saying it won’t train its AI models directly on connected info like inboxes or photo libraries.

The feature is rolling out to Gemini AI Pro and Ultra subscribers in the U.S. first, with plans to expand to free tiers and AI Mode in the future.

Why it matters: This feels like Google is finally playing the card its AI rivals will always struggle to match: billions of users already living inside Gmail, Photos, and YouTube. As the frontier models all become extremely capable for the average user, deep integrations with personal context from widely used apps will be the big differentiator.

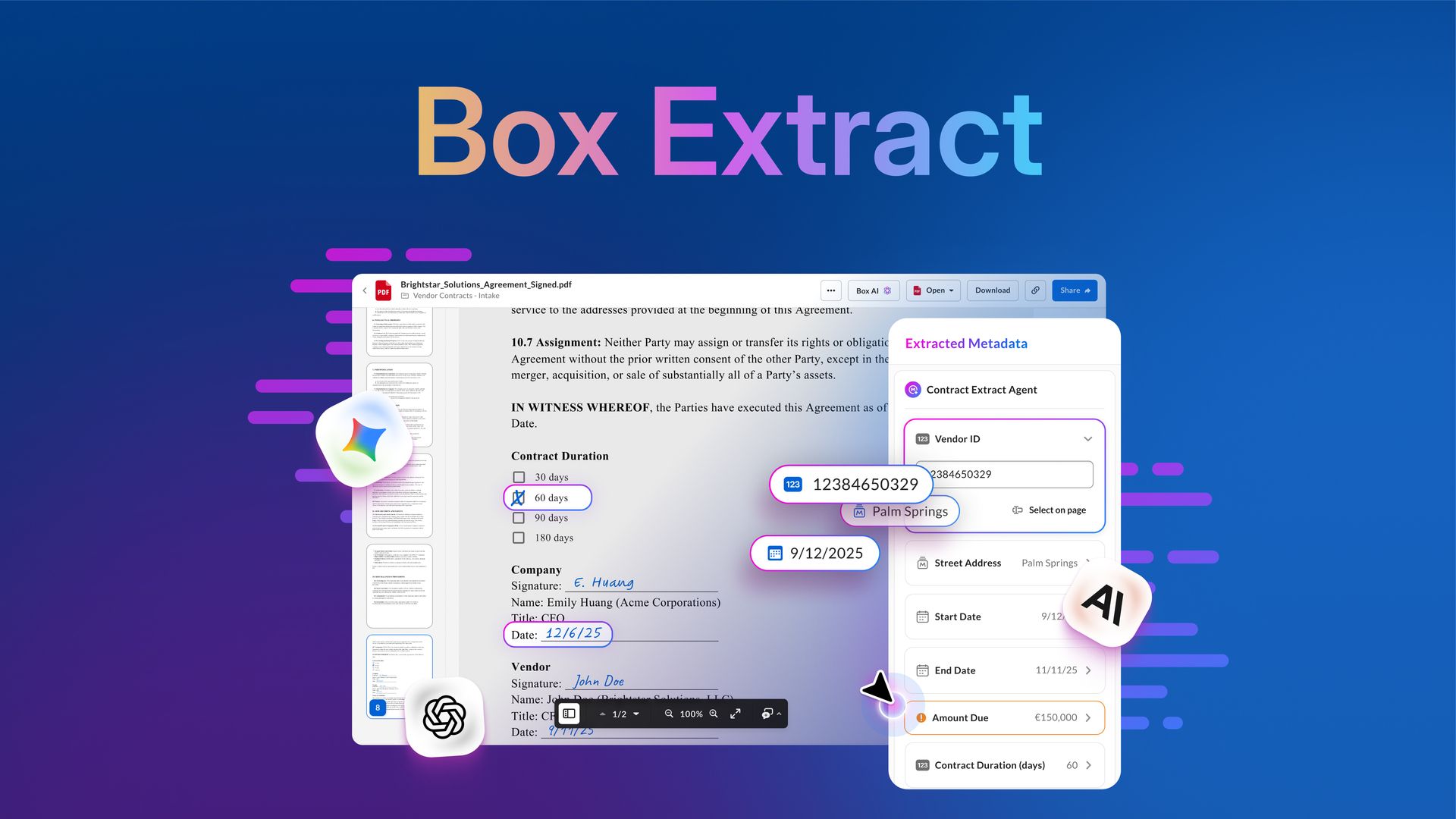

TOGETHER WITH BOX

📦 Turn enterprise content into actionable data

The Rundown: Enterprises sit on gold mines of data locked in content. Box Extract changes that, securely extracting structured data at scale to power faster decisions and automated workflows.

Box is a game-changer for regulated industries, with use cases like:

Financial services: extract loan terms and payment dates automatically

Government: pull permit details and inspection dates to streamline compliance

Insurance: distill claims info from reports and images to accelerate processing

AI & COPYRIGHT

⭐️ McConaughey trademarks himself to fight deepfakes

Image source: Lovart / The Rundown

The Rundown: Oscar-winning actor Matthew McConaughey secured eight trademark approvals from the U.S. Patent and Trademark Office covering his voice, likeness, and video clips, according to the WSJ, citing the need to combat AI deepfakes and misuse.

The details:

The approved trademarks include audio of his famous "Alright, alright, alright" catchphrase and short clips of him speaking and staring into a camera.

McConaughey told the WSJ that they “want to create a clear perimeter around ownership with consent and attribution the norm in an AI world.”

McConaughey's lawyers say the filings give them a federal court avenue to pursue AI misuse, rather than relying on state-by-state publicity laws.

The actor is an investor in AI voice startup ElevenLabs, as well as the face of Salesforce’s Agentforce TV campaigns.

Why it matters: The capabilities of AI image and video models are blurring reality more than ever, and newer, powerful releases have been far more lax on creating real likenesses and IP. McConaughey is right to push against the unclear ownership rules in the AI era, but thus far, it’s been a tough legal game to play without strong results.

AI TRAINING

📧 Get the most out of Google’s Gemini in Gmail

The Rundown: In this tutorial, you will learn how to use Google’s new Gemini features in Gmail, what is available right now, and the most beneficial and disappointing parts about this initial rollout.

Step-by-step:

With a Google Workspace or “AI Pro” personal account, go to gmail.com and click on settings in the top right. Make sure all the "smart features" are enabled

In your inbox, click the “Ask Gemini” button next to settings and ask anything about your inbox, like: “What emails do I have about [subject]?”

In any draft, you can press Option + H (Mac) or Alt + H (Windows), or click "Help me write" to have Gemini draft a reply based on the email’s context

After a reply is written, you can click “Recreate” to regenerate it or click “Refine” to change the tone or length of the reply.

Pro tip: Use “help me schedule” in the draft pane to have Gemini read the email thread, check the calendars, and suggest meeting times to whomever you’re emailing.

PRESENTED BY SLACK FROM SALESFORCE

📈 The real ROI of AI agents in collaboration

The Rundown: For all the talk of AI's transformative power, are companies actually seeing a tangible return? A new Metrigy global study of over 1,100 companies confirms that over 90% of organizations investing in AI are already achieving or expect positive ROI.

Research reveals that early adopters of agentic AI in particular are seeing:

21% reduction in operating costs

35% increase in customer satisfaction

31% improvement in employee efficiency

ZHIPU AI

🎇 Z AI’s open image model trained on Huawei chips

Image source: Z AI

The Rundown: Chinese AI startup Zhipu AI just released GLM-Image, an open-source image generator hailed as the first major model trained entirely on Huawei hardware — though early testing has not been positive despite strong benchmark scores.

The details:

The 16B-parameter model was developed using Huawei's Ascend chips and software, with zero reliance on US semiconductors.

Z AI claims GLM-Image excels at text-heavy images and beats Nano Banana Pro on accuracy benchmarks, though early user tests have not backed that up.

The model trails top options like Nano Banana Pro and Seedream on overall image quality, but is released fully open-source under a permissive license.

Why it matters: Zhipu made its AI presence felt with a powerful GLM-4.7 release in December (and a recent HK IPO), and now brings a capable, open image model debut trained entirely without Nvidia to the table. While it may not beat out closed rivals, it’s a sign that China's AI industry isn't waiting around for the chip war to end.

QUICK HITS

🛠️ Trending AI Tools

👨‍💻 Thesys - Make AI apps respond with interactive UI instead of walls of text. Try one of the world's first Generative UI APIs for free*

🧠 Personal Intelligence - Personalize Gemini with info from apps like Gmail

🎇 GLM-Image - Z AI’s new open-source image generation model

🩻 MedGemma 1.5 - Google’s newly updated open medical models

*Sponsored Listing

📰 Everything else in AI today

OpenAI announced a deal with chipmaker Cerebras to deploy 750MW of dedicated processing power for faster AI responses, with capacity rolling out through 2028.

ElevenLabs is partnering with Deutsche Telekom to deploy AI voice agents for customer service, offering 24/7 support without wait times via app and phone.

Slack updated its Slackbot, now acting as a personal AI agent that taps into messages, channels, and files to answer and leverage context throughout work.

OpenAI made its GPT-5.2-Codex model available to developers via the Responses API, extending the model’s availability beyond the company’s Codex platform.

Microsoft reportedly became one of Anthropic’s top customers, according to The Information, ramping spending on its models to nearly $500M annually.

COMMUNITY

🤝 Community AI workflows

Every newsletter, we showcase how a reader is using AI to work smarter, save time, or make life easier.

Today’s workflow comes from reader Jon M. in Wake Forest, NC:

"I coach youth basketball and always struggled with substitutions: how to give every kid fair playing time while keeping balanced lineups on the court… I built a simple roster table, rated each player on height, offense, and defense (1–10), and asked ChatGPT to design a single-page HTML app that would generate substitutions.

The first version worked, but I refined it using Claude for hands-on code edits, improving mobile usability and flow. Now I can toggle who’s present, choose starters, and instantly get a quarter-by-quarter substitution plan with clear tracking."

How do you use AI? Tell us here.

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Meta’s massive AI compute push

Read our last Tech newsletter: A moon hotel is taking reservations

Read our last Robotics newsletter: Walmart expands drone empire

Today’s AI tool guide: Get the most out of Google’s Gemini in Gmail

RSVP to next workshop @ 4PM EST Friday: AI foundations bootcamp pt. 2

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — the humans behind The Rundown

Meta's massive AI compute push

Good morning, AI enthusiasts. Meta spent the summer poaching top AI talent, and now it's building up the compute power to match.

Mark Zuckerberg just unveiled Meta Compute, an initiative to build tens of gigawatts of new capacity this decade and hundreds over time — making clear that infrastructure won't be what stands between Meta and the frontier.

In today’s AI rundown:

Zuckerberg’s massive AI infrastructure push

Microsoft’s ‘good neighbor’ data center initiative

Never lose vibe coding progress again with Git

AI learns from 1M species to design new medicine

4 new AI tools, community workflows, and more

LATEST DEVELOPMENTS

META

🏗️ Zuckerberg’s massive AI infrastructure push

Image source: Lovart / The Rundown

The Rundown: Meta just announced Meta Compute, a new “top-level initiative” to build AI infrastructure at unprecedented scale — with plans to add tens of gigawatts of capacity this decade and hundreds of gigawatts over time.

The details:

Infrastructure chief Santosh Janardhan will co-lead the effort with Daniel Gross, who came over from AI safety startup SSI last year.

Meta has committed $600B in U.S. infrastructure spending by 2028 and recently locked in 20-year nuclear power agreements for its data centers.

Newly appointed president and former Trump national security official Dina Powell McCormick will handle government deals to finance and build capacity.

The announcement comes amid reported major layoffs to Meta’s Reality Labs and metaverse/VR divisions, with a roughly 10% cut expected this week.

Why it matters: Zuck and co. splashed some serious cash on poaching top AI talent in the summer, and now they are doubling down on the compute front as well. With the AI race increasingly becoming an infrastructure one, Meta’s initiative aims to ensure that scale won’t be the bottleneck in leveling up to the frontier.

TOGETHER WITH CONCENTRIX

🔮 Are humans in the loop still needed?

The Rundown: Concentrix’s guide on the Missing Piece in AI Systems combines research-based reasoning and hands-on experience to redefine the role of human expertise in building and deploying strong AI systems.

Inside, you’ll find:

Analysis of the business impact of human oversight in AI systems development

Practical tips for improving the performance of your AI systems

Real-world examples of AI success in practice

MICROSOFT

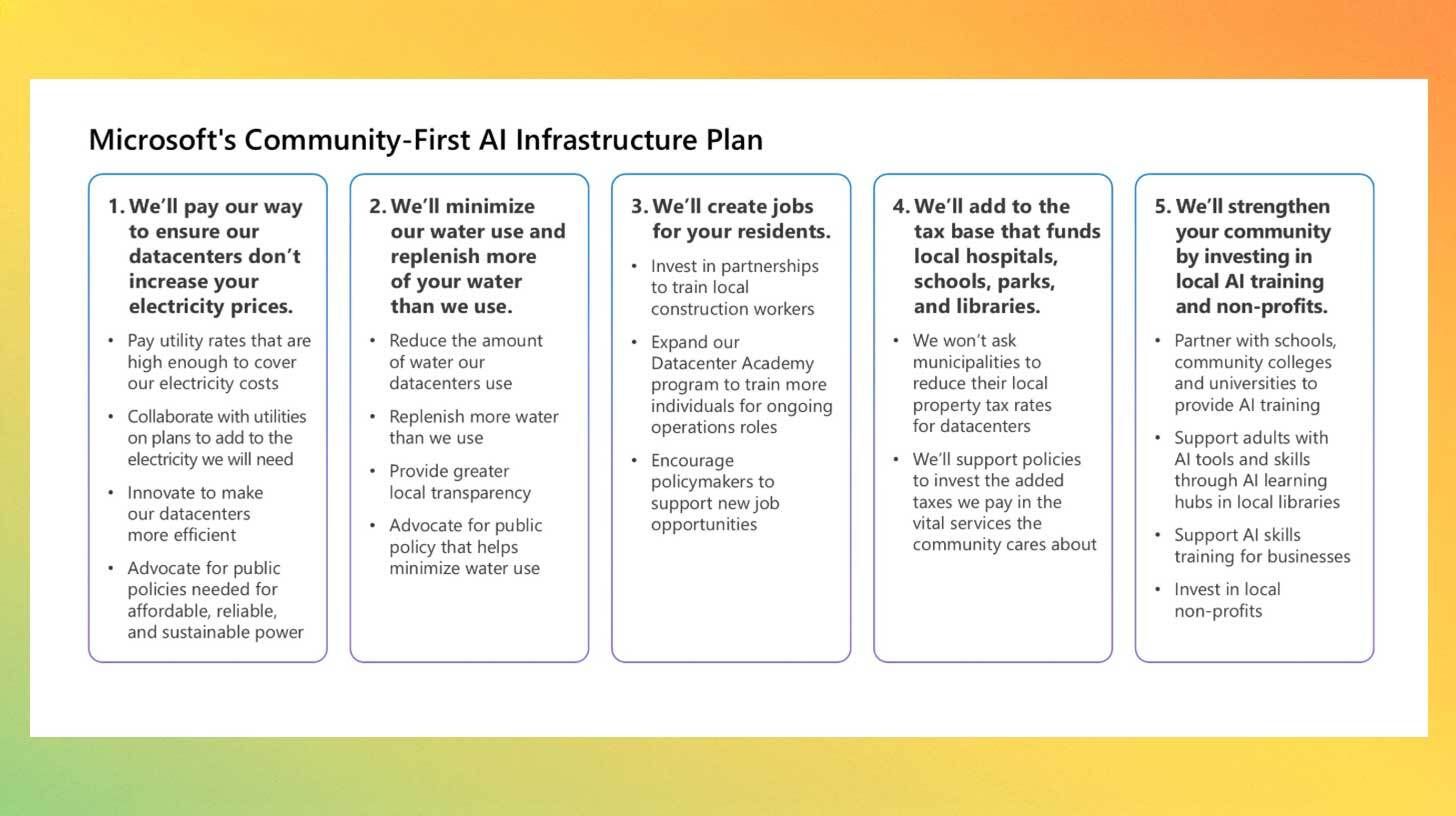

😇 Microsoft’s ‘good neighbor’ AI data center initiative

Image source: Microsoft

The Rundown: Microsoft just launched ‘Community-First AI Infrastructure’, a new plan promising that its data centers won't raise local electricity prices, will replenish more water than they use, and will invest in jobs and training for nearby residents.

The details:

The company says it will ask utilities to charge rates high enough to cover the full cost of powering its data centers, so residential bills aren't affected.

Microsoft is committed to a 40% reduction in water-use intensity by 2030, with new facilities using closed-loop cooling that doesn't tap local drinking water.

The tech giant also pledged to pay full property taxes without breaks, and announced new programs to train locals for data center construction jobs.

The announcement follows pressure from senators and comments from President Trump that tech companies need to "pay their own way" on energy.

Why it matters: AI’s infrastructure surge shows no signs of slowing down, but data centers have become divisive in communities across the country over spiking power bills and water supply concerns (some valid, others overblown). Microsoft’s pledge is a good start, but it’ll likely take more than this to turn the PR tide against the current backlash.

AI TRAINING

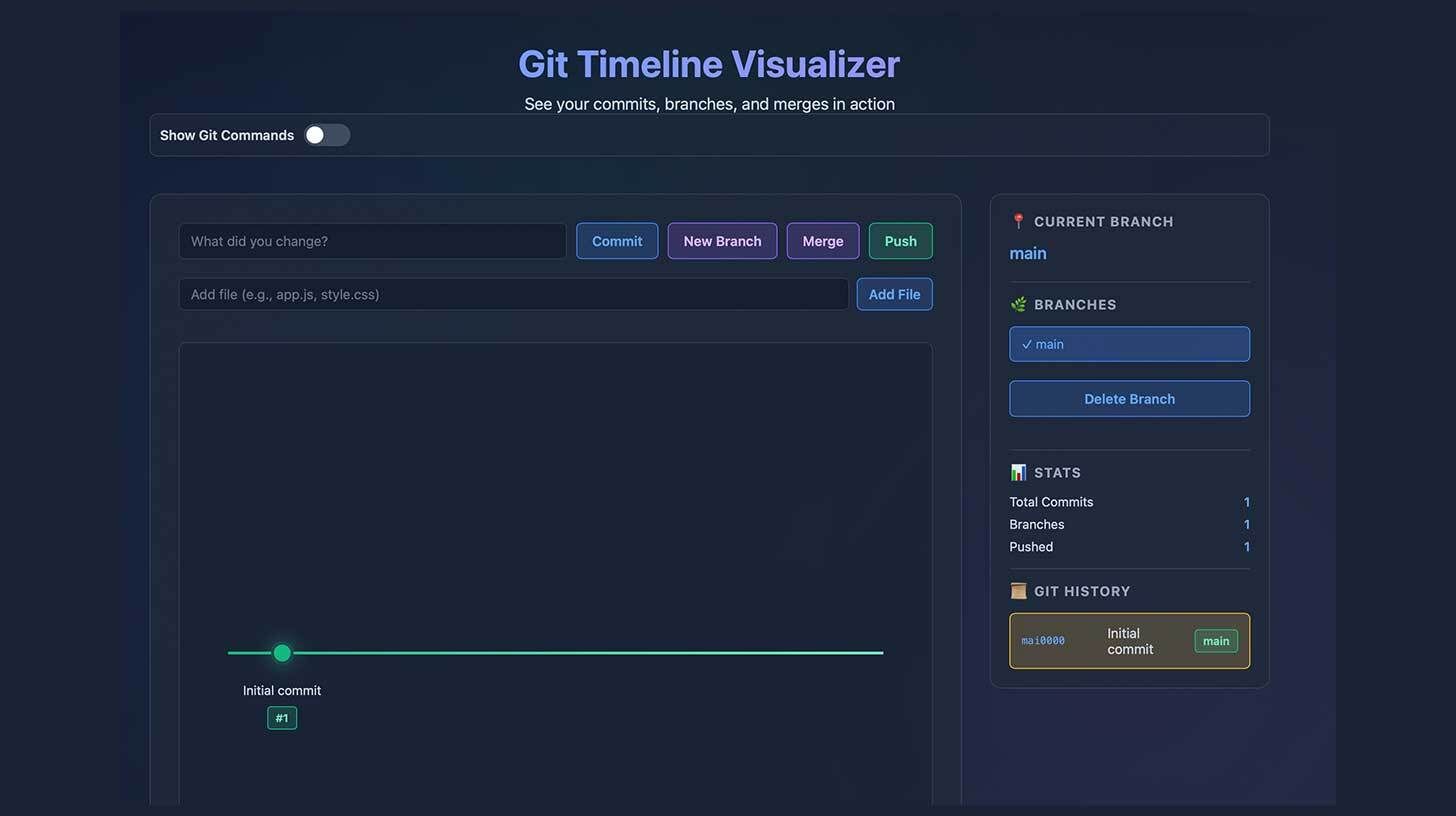

💾 Never lose vibe coding progress again with Git

The Rundown: In this tutorial, you will learn how to save your vibe coding progress with Git so you never lose your work again — covering eight practical git commands and writing your first git commit.

Step-by-step:

Install git from git-scm.com and verify with git --version in terminal, then open a coding project in your IDE and start a terminal instance in that folder

Use git init to initialize git tracking, then git add . to snapshot all files (or git add [filename].[extension] for individual files)

Save your snapshot with git commit -m 'first commit!' and view all commits with git log

For new features, create a branch with git checkout -b feature-[name], then merge changes to main with git merge [branch name]

Pro tip: Add git rules to your coding assistant: (1) Create a feature branch: git checkout -b feature-[name], (2) Commit after each logical change, (3) Ask before merging, (4) Wait for approval, (5) Never commit directly to main.
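To see the steps above end to end, here is a minimal sketch of one terminal session run in a throwaway folder. The folder, the file name index.html, the branch name feature-title, and the demo identity are all placeholders chosen for illustration. The sketch also adds two steps the tutorial does not spell out: setting a local name and email so commits work on a fresh install, and switching back to main before merging.

```shell
#!/bin/sh
set -e

# Hypothetical scratch project: directory and file names are placeholders.
demo_dir="$(mktemp -d)"
cd "$demo_dir"

git init -q                               # start tracking this folder
git config user.name "Demo User"          # local identity (assumption: none is set globally)
git config user.email "demo@example.com"

echo "<h1>v1</h1>" > index.html
git add .                                 # snapshot all files
git commit -q -m "first commit!"
git branch -M main                        # make sure the base branch is named main

git checkout -q -b feature-title          # new work goes on a feature branch
echo "<h1>v2</h1>" > index.html
git add index.html                        # snapshot a single file
git commit -q -m "update title"

git checkout -q main                      # switch back to main before merging
git merge -q feature-title                # bring the feature work into main
git log --oneline                         # list every saved commit
```

If something breaks on the feature branch, main still holds the last working snapshot, which is the point of committing before each new experiment.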

PRESENTED BY GLEAN

💡 100 ideas from leaders redefining work with AI

The Rundown: AI has moved fast — but transformation hasn’t. Too many organizations are still stuck between hype and results. This report, brought to you by Glean’s Work AI Institute, distills insights from 100+ leaders into practical, evidence-backed plays for using AI to transform work.

In this report, you will discover:

Five big lessons for leading through AI change

Nine themes around how AI is rewiring the core of how organizations function

Real-world AI examples from orgs. like Time, Workday, Deloitte, and more

AI RESEARCH

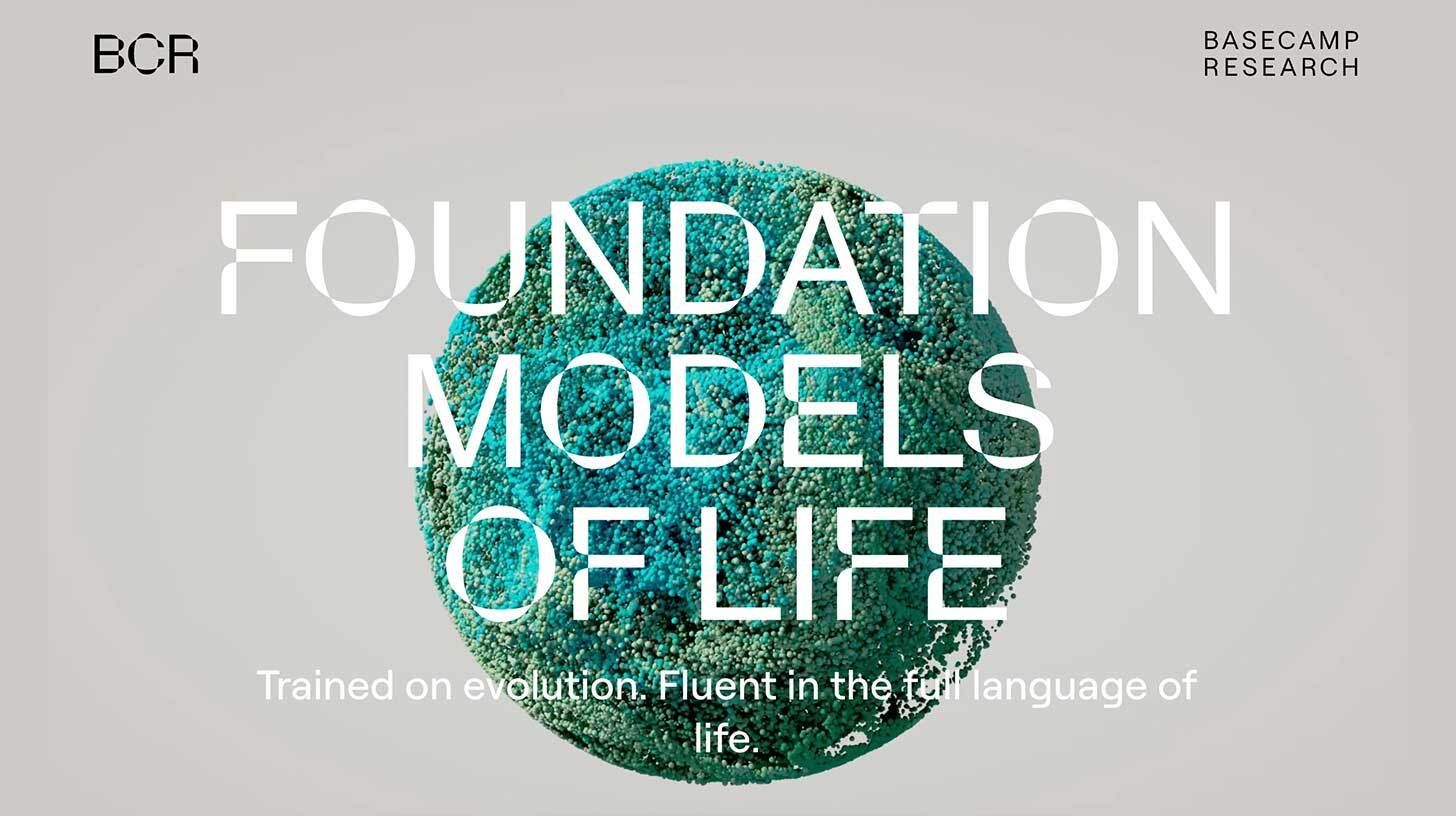

🧬 AI learns from 1M species to design new medicine

Image source: Basecamp Research

The Rundown: UK startup Basecamp Research introduced Eden, a new family of AI models developed with Nvidia that learned from evolutionary data across 1M species to design potential new treatments for genetic diseases and drug-resistant infections.

The details:

Eden learned from DNA collected across 28 countries, studying how organisms evolved to solve biological problems over billions of years.

The AI designed a new type of gene-editing tool that can insert therapeutic DNA without cutting it, a potentially safer approach than methods like CRISPR.

In lab tests for diseases like muscular dystrophy and hemophilia, over 63% of the AI-designed treatments were functional.

Eden also created new antibiotic candidates, with 97% proving effective against dangerous 'superbugs' that don't respond to existing drugs.

Why it matters: Most people don’t think about where new medicines come from until they need one that doesn't exist. Basecamp's approach of teaching AI to learn from billions of years of evolution could help speed up treatments for genetic diseases and a growing crisis of antibiotic-resistant infections that current drugs can't address.

QUICK HITS

🛠️ Trending AI Tools

🗣️ Unwrap Customer Intelligence - Get AI-driven insights from your unstructured customer feedback to build your product roadmap*

🤝 Claude Cowork - Bring Claude’s agentic capabilities to everyday tasks

🎥 Veo 3.1 - Google’s video AI, with upgrades to references, vertical outputs

💬 Slackbot - Slack’s upgraded personal AI agent

*Sponsored Listing

📰 Everything else in AI today

Anthropic introduced Anthropic Labs, a new team focused on building prototypes of AI tools, with CPO and Instagram co-founder Mike Krieger shifting to lead the division.

Google updated its open-source MedGemma medical AI with abilities for interpreting scans like CT and MRIs, also releasing an open MedASR speech-to-text tool.

Claude Code creator Boris Cherny revealed that Anthropic’s new Claude Cowork feature was entirely built using the agentic coding tool in just 1.5 weeks.

U.S. Defense Secretary Pete Hegseth said the Pentagon is adding xAI’s Grok into military networks by the end of the month to accelerate AI use across the department.

Japanese lab Sakana AI announced that its ALE-Agent for coding took first place in the AtCoder Heuristic Contest, the first time an AI has won the event.

McKinsey CEO Bob Sternfels said the consulting giant counts 25k AI agents among its 60k “person” workforce, with plans to pair every consultant with at least one agent.

COMMUNITY

🤝 Community AI workflows

Every newsletter, we showcase how a reader is using AI to work smarter, save time, or make life easier.

Today’s workflow comes from reader David G. in Lakewood Ranch, FL:

"AI is all over my workday. It is my WordPress assistant, which solves minor issues and lets me tackle tasks beyond my technical reach. It helps me every time I enter a new, unfamiliar dashboard, answers multiple technical questions during my workday, and supports our marketing and strategy.

Away from work, it also helps manage diabetes by guiding me on the best eating practices and, with a picture, estimating carb counts. I'm hooked!"

How do you use AI? Tell us here.

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Apple, Google go official for Siri revamp

Read our last Tech newsletter: A moon hotel is taking reservations

Read our last Robotics newsletter: Walmart expands drone empire

Today’s AI tool guide: Never lose vibe coding progress again with Git

RSVP to next workshop @ 4PM EST Friday: AI Foundations Bootcamp pt. 2

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — the humans behind The Rundown

A moon hotel is taking reservations

Good morning, tech enthusiasts. A 22-year-old Berkeley-trained engineer says he wants to build a luxury hotel on the moon — and he’s taking six-figure deposits from anyone willing to book a room.

His startup, called GRU Space, has already raised money from Y Combinator. It’s audacious even by Silicon Valley standards, but for a certain class of techno-optimist, the moon is starting to look less like a celestial body and more like a lifestyle upgrade.

In today’s tech rundown:

This moon hotel is taking reservations

Google’s founders are leaving California

Trump wants Big Tech to eat AI’s power bills

How much Tim Cook made last year

Quick hits on other tech news

LATEST DEVELOPMENTS

SPACE TECH

🌝 This moon hotel is taking reservations

Image source: GRU / YouTube

The Rundown: A YC-backed startup called GRU Space says it wants to build the first lunar hotel, taking deposits of $250K–$1M from wealthy true believers who are willing to reserve a bed on the moon years before the infrastructure exists.

The details:

The tiny startup plans to launch its first hotel, an inflatable structure, in 2032, giving SpaceX travelers an option off the Starship, Ars Technica reports.

The first tech demo is a 10kg payload slated for a commercial lunar lander in 2029, meant to prove out inflatable structures and on-site manufacturing.

Founder Skyler Chan, a 22-year-old UC Berkeley engineer, previously interned at Tesla and worked on a NASA-funded 3D printer flown to space.

GRU pitches itself as an infrastructure company that will mine outer space to support long-term human settlements.

Why it matters: Chan says the first hotel would be launched in 2032 and capable of supporting up to four guests, with the next iterations, built from moon bricks, taking on the style of San Francisco’s Palace of Fine Arts. It’s an audacious vision bordering on absurd, but it has already received Y Combinator’s validation.

🧳 Google’s founders are leaving California

Image source: Wikimedia Commons

The Rundown: Google co-founders Larry Page and Sergey Brin are quickly severing many of their remaining financial ties to California just as the state weighs a one-time 5% wealth tax on billionaires.

The details:

In December, Brin-linked entities dissolved or shifted 15 California LLCs to Nevada, covering assets such as his superyacht and a private jet terminal.

Page has likewise moved or wound down dozens of entities and is positioning himself in lower-tax states.

The proposed wealth tax would apply retroactively to anyone living in California as of January 1 and could affect roughly 200 ultrawealthy residents.

The plan has split Silicon Valley: LinkedIn co-founder Reid Hoffman opposes the levy, while Nvidia CEO Jensen Huang says he is “perfectly fine” paying it.

Why it matters: When two tech legends worth $300B each start shuffling superyachts out of state, California has reason to worry. The state pulls roughly 40% of its income tax revenue from the top 1% of earners. But apparently, most of the ultrawealthy stayed put past the January 1 deadline, so the rumored mass exodus hasn’t happened yet.

DATA CENTERS

⚡️ Trump wants Big Tech to eat AI’s power bills

Image source: Ideogram / The Rundown

The Rundown: President Donald Trump says he'll force Microsoft and other tech giants to cover the soaring electricity costs of their AI data centers instead of letting those power bills hit voters’ wallets.

The details:

Trump said Americans should never pay higher electricity bills “because of data centers” and vowed to announce new measures in the coming weeks.

He singled out Microsoft as the first company expected to implement “major changes” to ensure consumers don’t “pick up the tab” for its AI network.

Analysts estimate U.S. data centers could nearly triple their share of national electricity use to around 12% by 2028.

A recent PJM grid auction attributed roughly $23B in capacity costs to data centers from 2025 to 2028 — about half the total.

Why it matters: AI data centers are driving power demand so quickly that Washington now treats electricity prices as a political flashpoint, with Trump leaning on Big Tech not to pass rising costs to voters. Yet he previously fast‑tracked data‑center hookups, leaving him both hyping the AI buildout and scrambling to contain its backlash.

APPLE

🍎 How much Tim Cook made last year

Image source: Ideogram / The Rundown

The Rundown: Apple’s latest proxy filing shows that CEO Tim Cook was paid about $74.3M in 2025, a hair below his 2024 package and still overwhelmingly tied to stock awards and performance bonuses rather than salary.

The details:

Breakdown: $3M salary, about $57.5M in stock awards, $12M in performance-based cash, and roughly $1.76M in other compensation.

“Other” compensation includes 401(k) contributions, life insurance, vacation cash-out, security, and personal air travel costs.

Apple spent around $887,870 on Cook’s personal security and about $789,991 on private air travel in 2025 alone.

Senior execs like Sabih Khan, Deirdre O’Brien, and Kate Adams each made about $27M, while new CFO Kevan Parekh received roughly $22.5M.

Why it matters: Cook’s pay package puts him near the top of the U.S. CEO ranks, alongside Microsoft’s Satya Nadella, whose compensation recently hit about $96.5M — but well below the most extreme outliers, which keeps Apple in the broader debate over whether executive pay is tethered to performance or simply market power.

QUICK HITS

📰 Everything else in tech today

Paramount Skydance sued Warner Bros. Discovery in Delaware court to force more disclosure about WBD’s planned sale of its studio and streaming assets to Netflix.

Meta reportedly plans to cut about 10% of jobs in its 15K-person Reality Labs, shifting investments from metaverse and VR projects into AI-powered wearables.

Disney+ is adding a TikTok-style vertical feed of clips from its content library to boost daily engagement and compete with short-form platforms for users’ attention.

Alphabet, Google’s parent company, became the latest tech giant to surpass a $4T market valuation, as investor AI optimism drives the stock to record highs.

The FCC authorized SpaceX to launch and operate another 7.5K Starlink satellites, bringing its approved Gen2 constellation to 15K spacecraft.

Netflix dominated the 83rd Golden Globes with seven awards, including four for breakout limited series “Adolescence” and two for animated hit “KPop Demon Hunters.”

Meta shut down nearly 550K Australian accounts belonging to under‑16s across Instagram, Facebook, and Threads to comply with the country’s new social media ban.

Apple led the 2025 global smartphone market with a 20% shipment share, narrowly beating Samsung and Xiaomi, per Counterpoint data.

Uber faces a Phoenix trial over a woman’s sexual-assault claim against a driver, a case that could expose serious holes in the company’s safety practices.

The EU is exploring a minimum-price scheme for Chinese EVs as an alternative to tariffs, aiming to curb state subsidies without completely choking off imports.

Tesla reportedly just started producing a new solar panel at its Buffalo Gigafactory, a sign its struggling solar business might not be completely dead yet.

COMMUNITY

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Apple, Google go official for Siri revamp

Read our last Tech newsletter: Lego’s iconic brick just got a brain

Read our last Robotics newsletter: Walmart expands drone empire

Today’s AI tool guide: Exploring Perplexity’s Comet browser

RSVP to next workshop @ 4PM EST Friday: AI Foundations Bootcamp pt. 2

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — The Rundown’s editorial team

Walmart expands drone empire

Good morning, robotics enthusiasts. Walmart’s parking lots are quietly turning into airfields. Wing is strapping 150 drone launchpads onto hundreds of big-box stores, laying the groundwork for a nationwide aerial delivery network that could reshape how everyday goods move through U.S. cities and suburbs.

With new FAA approvals and heavy automation, flying snacks might be ready to go mainstream.

In today’s robotics rundown:

Walmart adds drone delivery to 150 stores

A 17-ft. robot makes human embryos

Humanoids battle it out at CES

This AI robot is actually a Tamagotchi

Quick hits on other robotics news

LATEST DEVELOPMENTS

WALMART

🛍️ Walmart adds drone delivery to 150 stores

Image source: Wing

The Rundown: Wing is bolting 150 launchpads onto Walmart stores as part of a race to build a 270-site drone network blanketing the U.S. by 2027 — creating what the company calls the world’s largest commercial drone-delivery operation.

The details:

The network will target coverage for over 40M Americans, expanding from Dallas–Fort Worth pilots into LA, Miami, Cincinnati, and smaller metros.

Customers will be able to order thousands of Walmart items for delivery “in roughly 30 minutes” via Wing’s app or Walmart’s platform.

Wing’s aircraft fly autonomously under a centralized fleet management system, coordinating dozens of drones at once and lowering packages on a tether.

Wing now completes thousands of weekly deliveries with an average fulfillment time under 19 minutes and flight times averaging 3 minutes and 43 seconds.

Why it matters: FAA approval for beyond-visual-line-of-sight flights has unlocked Wing’s expansion. For Walmart, wiring drone infrastructure into its 4K-plus stores transforms big-box parking lots into an anti-Amazon aerial grid — one that can drop Advil and Doritos straight into suburban backyards without touching a delivery van.

IVF ROBOTICS

👶🏼 A 17-ft. robot makes human embryos

Image source: Conceivable

The Rundown: A 17-foot AI-powered robot in a Mexico City fertility clinic is now making human embryos that have already resulted in 19 babies, raising the question of whether machines are better at IVF than people, Bloomberg reports.

The details:

Conceivable’s Aura handles over 200 microscopic steps — from pipetting sperm to injecting it into eggs — with minimal human intervention.

The company is offering free robotic IVF to couples in clinical trials at a Mexico City fertility clinic.

Conceivable raised $50M last year; rival Overture Life has also deployed automated systems that have produced babies.

The 17-foot machine addresses IVF’s core bottlenecks: high costs, inconsistent outcomes, and a global shortage of trained embryologists.

Why it matters: End-to-end automation could transform fertility treatment from a labor-intensive service into an industrialized process, making it accessible to far more people. But delegating embryo creation to machines is already triggering regulatory and ethical scrutiny about who — or what — should control human reproduction.

CES

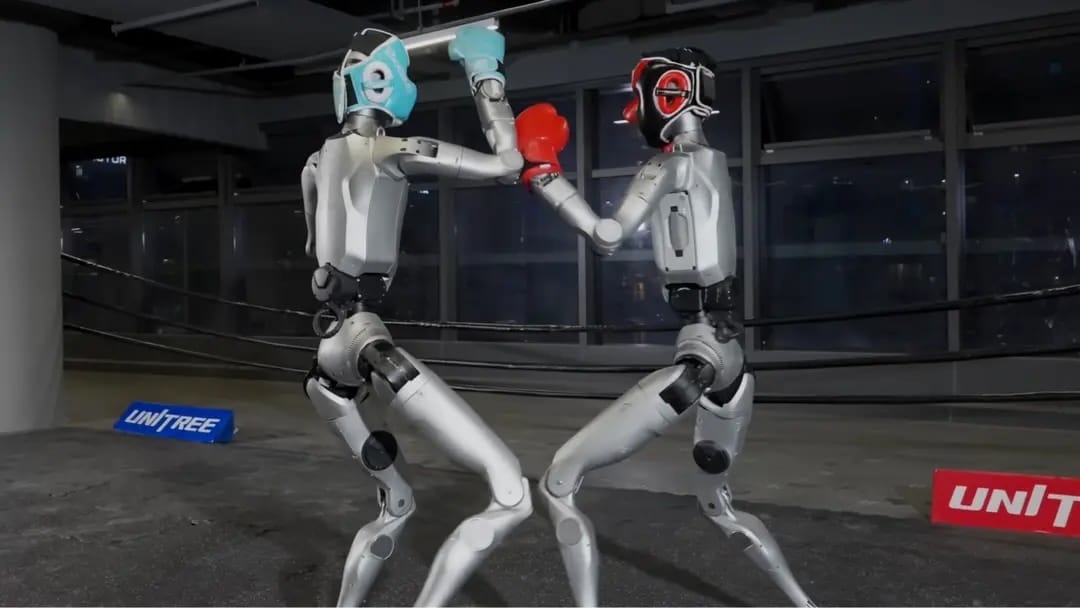

🥊 Humanoids battle it out at CES

Image source: Unitree / YouTube

The Rundown: Ultimate Fighting Robot staged live bouts between child-sized Unitree humanoids at CES, complete with human pilots, motion controllers, and a ringside referee — positioning it as a legitimate combat-sports franchise in the making.

The details:

Founders Vitaly and Xenia Bulatov are selling UFB as “the sport of the future,” betting fans will connect to robot backstories the way they do MMA fighters.

Pilots control the robots ringside using cameras and motion-sensing Nintendo-style controllers while a referee monitors for fouls and knockdowns.

Robots resembled blindfolded boxers at times, reportedly triggering laughter with wild misses and cheers when blows landed.

Each bout doubles as a data-collection operation — UFB is mining motion data from every match to refine humanoid control systems and movement models.

Why it matters: UFB is stress-testing control interfaces and balance algorithms that robotics companies are racing to perfect, turning Vegas spectacle into practical R&D. Previous sold-out events in San Francisco suggest the format has traction among tech professionals at least, but its widespread entertainment value is still an open question.

ROBOPETS

🥺 This AI robot is actually a Tamagotchi

Image source: Takway

The Rundown: Remember Tamagotchi? It’s back, and it’s way smarter. Takway’s Sweekar, unveiled at CES, is a palm-sized AI robot that learns your voice, remembers conversations, and evolves a personality of its own, with intelligence baked in.

The details:

You keep it “happy” by feeding and playing with it, and its mood and expressions change in response to how much attention you give it.

The pet moves through four life stages — egg, baby, teen, and adult — becoming less needy over time and eventually able to entertain itself.

An adult Sweekar can’t die from neglect; it will keep itself alive and later tell you stories about what it “did” while you were away.

Takway plans to launch Sweekar on Kickstarter later this year with a projected price of around $100–$150.

Why it matters: Takway is positioning it as the first step toward becoming the “Nintendo of the AI robot era.” The original Tamagotchi sold over 80M units worldwide by tapping into our impulse to nurture something small and needy — now that impulse comes with machine learning and actual memory.

QUICK HITS

📰 Everything else in robotics today

Musk’s xAI is burning billions of dollars but is pitching investors on a plan to make its Grok models the “brain” for Optimus, becoming the software layer that powers the bot.

Hyundai and Boston Dynamics’ Atlas humanoid, which debuted at CES last week, was named “Best Robot” in CNET Group’s Official Best of CES 2026 awards.

HD Hyundai Robotics, South Korea’s largest industrial robot maker, hired UBS, Korea Investment & Securities, and KB Securities to lead a planned Seoul IPO.

X Square Robot raised about $140M in a round backed by ByteDance, Alibaba, and Meituan, making it one of China’s best-funded general-purpose robotics startups.

Tuya Smart unveiled Aura, an AI-powered mobile pet companion robot that roams the home to monitor animals’ emotions, play with them, and capture photos and videos.

CMR’s Versius robot just won EU and UK safety marks for pediatric abdominal surgery, while India’s SS Innovations brings smaller tools to its SSi Mantra system.

Brolan debuted ClearX at CES, a robot that washes, dries, and optionally sanitizes shoes using sensor‑guided micro‑bubble cleaning instead of harsh detergents.

Zero Zero Robotics unveiled HOVERAir AQUA, a fully waterproof, floating self-flying camera drone that uses AI tracking to film 4K/100 fps watersports footage hands‑free.

E-commerce logistics company Radial says its Kentucky warehouse has used Locus’s robots to pick more than 25M items so far.

COMMUNITY

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Anthropic blocks xAI's Claude access

Read our last Tech newsletter: Lego’s iconic brick just got a brain

Read our last Robotics newsletter: Hyundai mass-producing Atlas robots

Today’s AI tool guide: Find dozens of free AI tools with Google Labs

RSVP to next workshop @ 4PM EST Friday: AI Foundations Boot Camp Pt. 2

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — The Rundown’s editorial team

