Get the latest AI news, understand why it matters, and learn how to apply it in your work, all in just 5 minutes a day. Join 2,000,000+ subscribers.

OpenAI reclaims the image crown

Read Online | Sign Up | Advertise

Good morning, AI enthusiasts. After OpenAI’s DALL-E and GPT Image 1 paved early ground in image generation, Google's Nano Banana has topped the leaderboards for the better part of a year. That run just ended.

OpenAI's new ChatGPT Images 2.0 is the first image model that plans, searches the web, and self-checks its outputs before generating, and the results show — with an upgrade that Sam Altman says is like "going from GPT-3 to GPT-5 all at once."

In today’s AI rundown:

OpenAI breaks new ground with Images 2.0

Meta logging employee keystrokes to train AI

Build a command center with Claude Live Artifacts

Google pushes Deep Research Agent to the max

4 new AI tools, community workflows, and more

LATEST DEVELOPMENTS

OPENAI

🎆 OpenAI breaks new ground with Images 2.0

Image source: OpenAI

The Rundown: OpenAI just rolled out ChatGPT Images 2.0, the upgraded image generation model that had been going viral in testing over the last few weeks, calling it the “smartest image generation model ever built.”

The details:

Images 2.0 thinks before generating, allowing it to plan, search the web for info and references, and check its outputs for errors before delivery.

The model takes the No. 1 spot on Arena AI’s text-to-image leaderboard by a wide margin over Nano Banana 2, sweeping every category.

Other features include 2K resolution, producing up to 8 images at a time, aspect ratios from 3:1 ultrawide to 1:3 tall, plus multilingual text rendering.

Sam Altman called the release “like going from GPT-3 to GPT-5 all at once,” with the model now available in ChatGPT, Codex, and the API.

Why it matters: It’s been a while since OpenAI topped the image world, and this release brings it back in a big way. The model not only feels like it ‘solves’ image and text issues like no other model has, but also changes workflows yet again, with thinking capabilities that open up brand new creative avenues.

TOGETHER WITH ALGOLIA

🧩 A practical guide to building AI agents that work

The Rundown: The next step in AI isn't better chat; it's agents that can query databases, update systems, and make decisions. Does that mean more custom connectors? Not sure.

Whether you’re a developer or data leader, Algolia's guide helps you understand:

Challenges in building AI Agents

How MCP servers connect Agents with search

Best practices & real cases

META

🕵️ Meta logging employee keystrokes to train AI agents

Image source: Images 2.0 / The Rundown

The Rundown: Meta is running a Model Capability Initiative (MCI) to record screenshots, keystrokes, and mouse activity on U.S. employees' work laptops, with no opt-out, to capture real data for AI training, sparking backlash within the organization.

The details:

MCI's capture scope skews towards developers, logging activity in apps like VS Code, Metamate (Meta's internal AI assistant), Google Chat, and Gmail.

Business Insider published the internal memo, with CTO Andrew Bosworth reportedly responding to concerns by saying there is “no option to opt out.”

About 8,000 Meta staffers are set to exit on May 20, with MCI starting to log their workflows a month before their end date.

The memo presented the move as a way for all Meta employees to help the company’s “models get better simply by doing their daily work.”

Why it matters: Robotics labs have spent years recording humans doing physical tasks to teach their systems when and how to grab, walk, or stack boxes. Meta just brought that playbook to software and computer use, except the demo subjects are its own staff — and the backdrop of layoffs gives it a very dystopian feel.

AI TRAINING

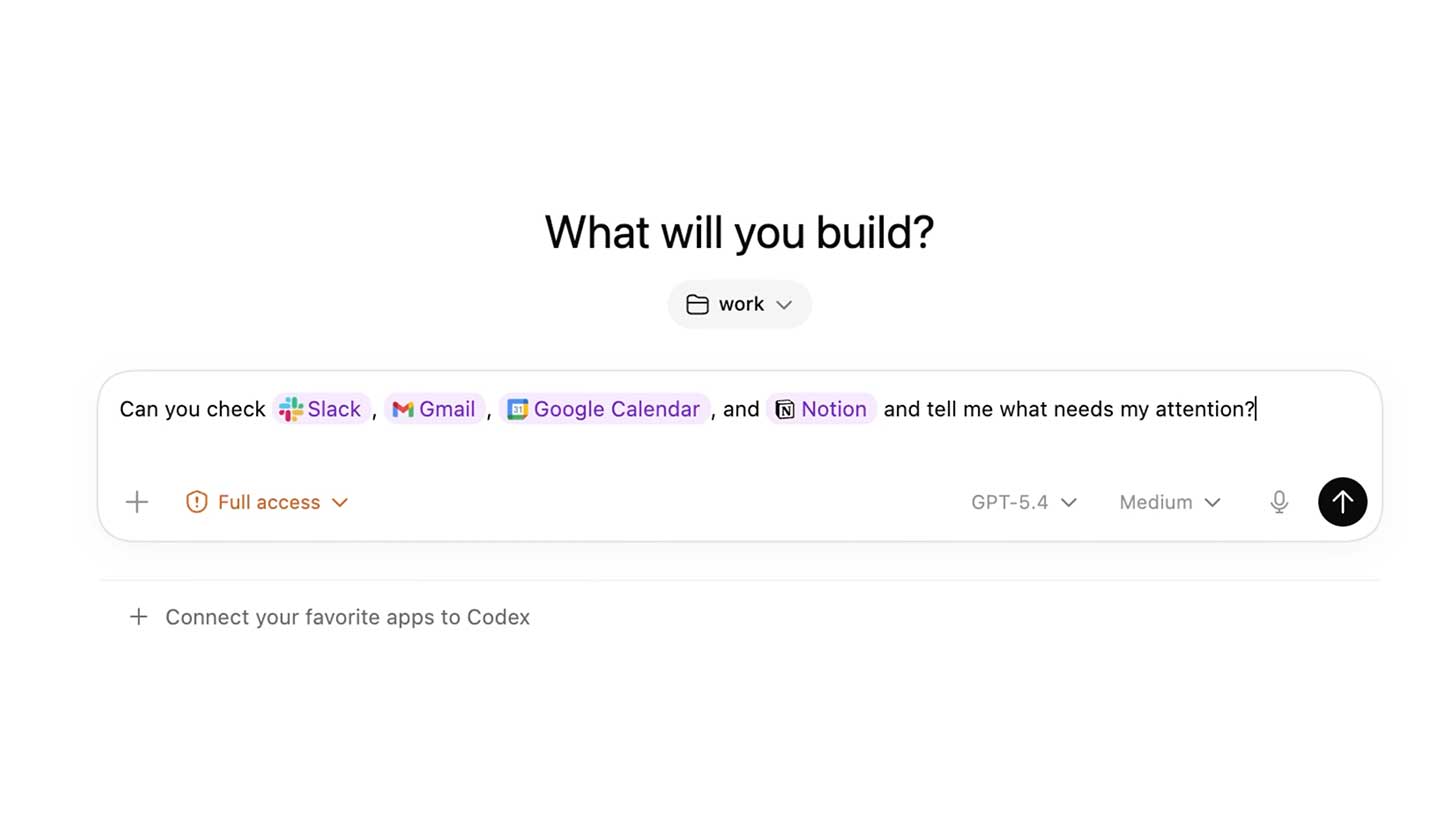

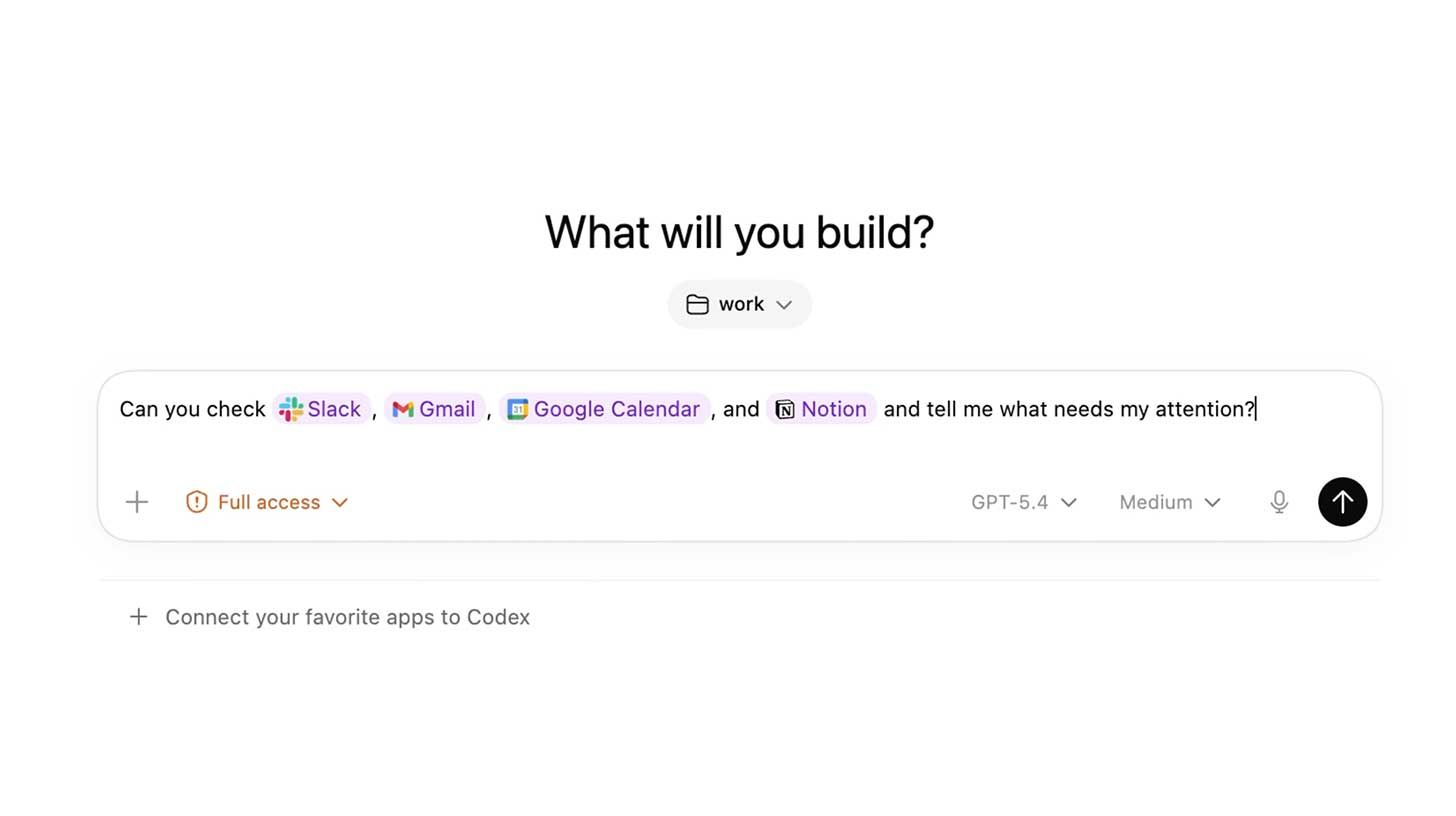

🎛️ Build a command center with Claude Live Artifacts

The Rundown: In this guide, you will learn how to build a daily command center in Claude Cowork with Live Artifacts. Instead of opening Slack, email, calendar, tasks, docs, and dashboards one by one, you will get one live view all in one place.

Step-by-step:

Open Claude Cowork and prompt: “Interview me about my connected apps, daily workflow, KPIs, and what counts as urgent. Then propose the modules for a daily command center before creating the artifact”

Answer the questions, then ask Claude to build a modular Live Artifact dashboard with Today, This Week, and This Month views, including KPI cards, stats, charts, and app feeds

Ask to add priority labels and ranking so updates are categorized (urgent, review, FYI, blocked) and sorted by impact, deadlines, and decisions needed

Prompt to add skills with dedicated buttons, like “Plan my day,” “Draft replies,” or “Prep meetings,” so you can take action from the dashboard itself

Pro tip: Try additional upgrades like dark mode, animations, a settings panel for update frequency, manual override, an archive button, and click to open any update.
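
For a sense of what the priority step is asking for, here is a minimal Python sketch of one possible ranking rule. It is not how Claude builds the artifact: the `Update` fields, the 1-5 impact score, the label order, and the sample items are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import date

# Assumed ordering of the four labels from the guide; adjust to taste.
LABEL_RANK = {"urgent": 0, "blocked": 1, "review": 2, "FYI": 3}

@dataclass
class Update:
    title: str
    label: str
    impact: int           # hypothetical 1-5 score, higher means more impact
    deadline: date
    needs_decision: bool

def sort_updates(updates):
    """Order by label, then higher impact, earlier deadline, and pending decisions first."""
    return sorted(
        updates,
        key=lambda u: (LABEL_RANK[u.label], -u.impact, u.deadline, not u.needs_decision),
    )

# Hypothetical sample data.
updates = [
    Update("Weekly metrics digest", "FYI", 1, date(2026, 5, 1), False),
    Update("Contract redlines", "review", 3, date(2026, 4, 28), True),
    Update("Checkout outage", "urgent", 5, date(2026, 4, 24), True),
    Update("Vendor invoice on hold", "blocked", 2, date(2026, 4, 30), False),
]

for u in sort_updates(updates):
    print(f"[{u.label}] {u.title}")
```

Describing a rule like this in plain language in your prompt (which label wins, and what breaks ties) tends to give a more predictable ordering than asking for "priority" alone.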

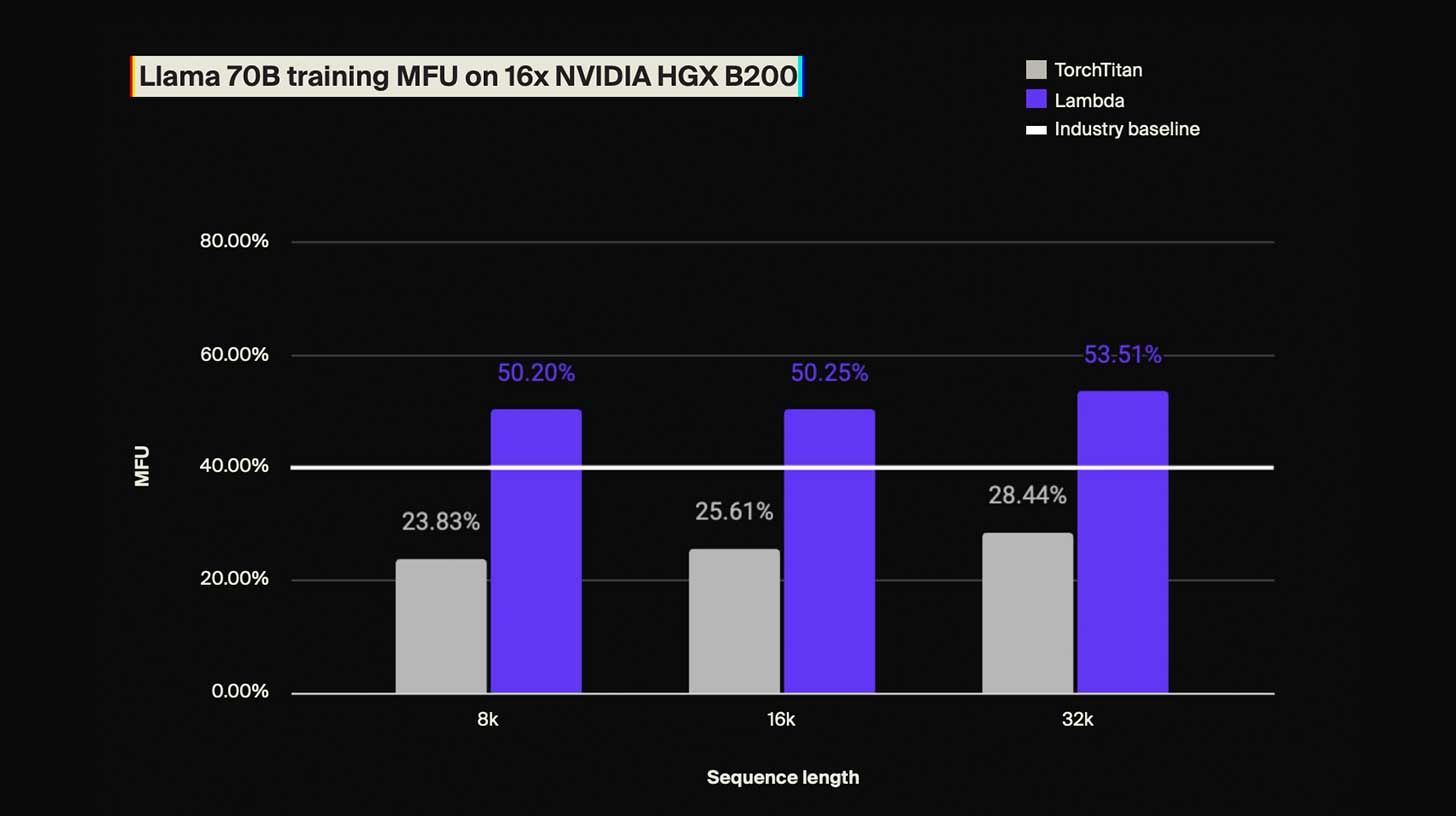

PRESENTED BY LAMBDA

✂ Cut your AI training costs by over 25%

The Rundown: Most large-scale AI training runs use less than half the computing power they're paying for. Lambda's team found the root causes and built a reproducible framework that boosted efficiency by over 25%, without changing the model itself.

Lambda’s whitepaper shows you how to address:

Memory inefficiencies silently inflating your costs

Training configurations that aren't making full use of your hardware

Bottlenecks that slow down GPU communication

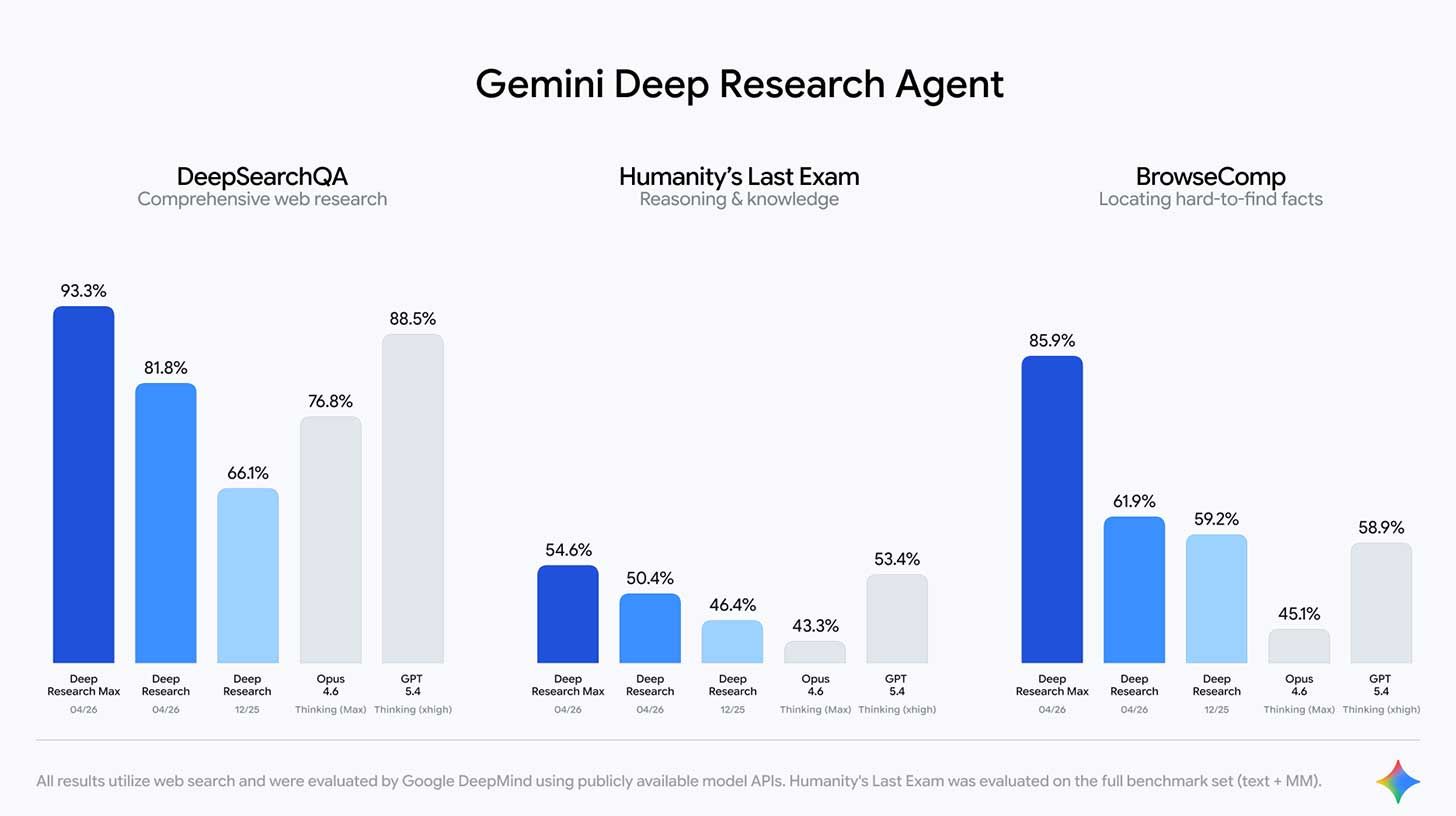

📚 Google pushes Deep Research Agent to the max

Image source: Google

The Rundown: Google released Deep Research and Deep Research Max, two SOTA agents that use Gemini 3.1 Pro to generate research reports from the web, uploaded files, or any Model Context Protocol server, complete with charts and infographics.

The details:

Both agents use Gemini 3.1 Pro and run on the same research engine inside NotebookLM, replacing Google's December preview of Deep Research.

Google's benchmarks show jumps for Max on retrieval and reasoning over both previous versions and rival models like Opus 4.6 and GPT-5.4.

Users can also combine open-web search with MCP servers and file uploads, or cut off external web access to search only their private data.

Google is already working with firms like PitchBook, S&P, and FactSet to build MCP servers that pipe paid financial data directly into the research workflow.

Why it matters: The research-heavy work of analysts, consultants, and lawyers has long been an obvious target for AI automation. Google's move turns that threat into a priced API call any developer can wire into a product. Expect more partnerships to follow as every vertical figures out which parts of its research workflow just became automatable.

QUICK HITS

🛠️ Trending AI Tools

🔒 Incogni - Remove your personal data from the web so scammers and identity thieves can’t access it. Use code RUNDOWN to get 55% off.*

🎆 ChatGPT Images 2.0 - OpenAI’s new next-generation image model

📚 Deep Research Max - DeepMind's research agent with MCP, native charts

🔎 Deep Max - Exa's new SOTA agentic search tool

*Sponsored Listing

📰 Everything else in AI today

Former OpenAI research VP Jerry Tworek launched Core Automation, a new AI lab building "an AI to build AI" with founders from OpenAI, Anthropic, and DeepMind.

Meta poached three more employees from Mira Murati's Thinking Machines Lab, bringing the total number of founding members who departed to the tech giant to 7.

Google open-sourced its DESIGN.md feature from Stitch, a portable file that lets AI agents understand a project's colors, accessibility, and brand rules.

Exa released Deep Max, a new agentic search tool that tops existing rivals on accuracy while running 20x faster.

Genspark launched Build, a new Claude Opus 4.7-powered agentic vibe-coding tool that generates apps and websites from text prompts.

Deezer reported that 75K AI tracks are now published on its platform daily (44% of uploads), but draw just 1-3% of streams, with 85% of them labeled as fraudulent.

COMMUNITY

🤝 Community AI workflows

Every newsletter, we showcase how a reader is using AI to work smarter, save time, or make life easier.

Today’s workflow comes from reader Matthew S. in the U.K.:

"I used Claude to build my own exercise tracking app and exported the code to Bolt to make a web app. I have a specific set of exercises that I do each day that other trackers don’t map or give me streaks for. It lets me input each set into each of the four sections and tells me when I’ve met my target for the day.

It only lets me build my streak after I have completed all exercise targets and keeps a daily record of what I achieved. Much easier!"

How do you use AI? Tell us here.

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: DeepMind commits to a Claude catch-up

Read our last Tech newsletter: Apple gets a new boss

Read our last Robotics newsletter: Humanoid smokes half-marathon record

Today’s AI tool guide: Build a daily command center with Live Artifacts

RSVP to workshop April 30 @ 2PM EST: Codex for non-technical operators

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer—the humans behind The Rundown

Apple gets a new boss

Good morning, tech enthusiasts. John Ternus spent 25 years building the hardware that made Apple the world’s most valuable company. On September 1, he takes the CEO chair and inherits a company that has spent the AI era playing catch-up.

Tim Cook leaves behind a $4T empire — and a product roadmap still searching for its breakout AI device.

In today’s tech rundown:

John Ternus replaces Tim Cook as Apple CEO

Blue Origin stuck the landing, lost the satellite

California says Amazon fixed prices across the web

WhatsApp is testing a new paid tier

Quick hits on other tech news

LATEST DEVELOPMENTS

APPLE

🍎 John Ternus replaces Tim Cook as Apple CEO

Image source: Apple

The Rundown: Apple is naming longtime hardware chief John Ternus as its next CEO, with Tim Cook relinquishing day-to-day control after 15 years to move upstairs as executive chairman starting September 1.

The details:

Ternus will become CEO and join the board on Sept. 1, after more than two decades shaping flagship products from the iPhone to the Mac.

Cook, who took over from Steve Jobs in 2011, oversaw Apple’s rise from about $350B to $4T in market value, and will shift to executive chairman.

Apple’s shakeup comes as it faces pressure to catch up on AI-first hardware, with Ternus cast as the product-driven steward of that shift.

OpenAI CEO Sam Altman responded by calling Cook “a legend,” while Anduril founder Palmer Luckey reacted with a tongue‑in‑cheek “RIP Tim Apple.”

Why it matters: Cook’s legacy is less about redefining consumer tech than about turning Apple into a hyper-efficient machine, from AirPods and Apple Silicon to the supply chain that powers them. Apple’s handoff to Ternus comes as the next hardware cycle shifts to on-device AI, putting a product-first insider in charge of that pivot.

TOGETHER WITH AWS MARKETPLACE

🤖 Your AI agents can’t share state yet

The Rundown: Multiple agents sharing mutable state, passing messages, and recovering from silent failures — that’s a distributed systems problem. AWS’s free technical workshop on April 28th shows you how to solve it using LangGraph, Step Functions, EventBridge, and Amazon MQ.

The session covers:

How to connect multiple agents so they can coordinate and communicate reliably

Building in safeguards so one agent failing doesn't take down the whole system

Moving from prototype to production-grade multi-agent infrastructure on AWS

BLUE ORIGIN

🚀 Blue Origin stuck the landing, lost the satellite

Image source: Blue Origin

The Rundown: Blue Origin finally proved it can reuse its massive New Glenn booster. But an underpowered upper stage stranded a customer satellite in a dead-end orbit, turning a showcase flight into a high-profile failure.

The details:

New Glenn’s third launch marked the first successful recovery of its reusable first stage, which touched down cleanly on a droneship in the Atlantic.

The upper stage misfired, stranding an AST SpaceMobile BlueBird satellite in a low, unsustainable "off-nominal" orbit instead of its planned circular one.

Blue Origin pointed to a weak-thrust engine failure in the upper stage; AST SpaceMobile said additional BlueBirds are already manifested for later this year.

An FAA anomaly investigation is now underway, clouding New Glenn’s pitch to NASA, national security agencies, and high-value commercial customers.

Why it matters: Booster recovery was the hard part, and Blue Origin did it. But an upper-stage miss is exactly the kind of failure that haunts a rocket’s reputation when defense agencies and constellation operators are picking their next launch provider. SpaceX’s grip won’t loosen until New Glenn proves the whole stack works, every time.

AMAZON

📦 California says Amazon fixed prices across the web

Image source: Ideogram / The Rundown

The Rundown: California is accusing Amazon of a years-long price-fixing scheme in which the company pressured brands to raise prices on rival platforms, effectively ensuring Amazon would always look like the better deal.

The details:

Newly unsealed filings say Amazon leaned on brands to “fix” or “increase” prices at Walmart, Target, etc., to keep Amazon’s own listings looking cheapest.

The state alleges Amazon issued threats to cut ads, seek compensation, or delist products if vendors did not pressure rival sites to raise prices.

California says the scheme reached major brands like Levi’s, Hanes, and big pet-food suppliers, elevating prices across much of online retail.

Prosecutors point to a Home Depot case where, after Amazon flagged cheaper pricing to a vendor, the vendor agreed to raise prices on that site.

Why it matters: If California prevails, it could set a landmark precedent for how antitrust law applies to platforms that police prices not just on their own storefronts but across the broader e-commerce market — and whether Amazon’s vendor agreements, long treated as standard retail practice, constitute illegal price-fixing at scale.

💵 WhatsApp is testing a new paid tier

Image source: Ideogram / The Rundown

The Rundown: WhatsApp is rolling out a test of “WhatsApp Plus,” a paid tier that turns the world’s biggest messaging app into another subscription experiment in Meta’s quest to squeeze more cash out of its user base of 3 billion people.

The details:

WhatsApp Plus mirrors Instagram Plus and Snapchat+ in scope, offering cosmetic perks like custom app icons, new chat themes, and ringtones.

Power users get a handful of practical upgrades: the ability to pin up to 20 chats (vs. the current three) and expanded custom lists for managing inboxes.

Meta is running the pilot in select markets, with pricing calibrated to local economies — around €2.49 per month in Europe and roughly $0.82 in Pakistan.

A broader rollout could bring tiered monetization — cosmetic, then productivity, then who knows — across Meta’s app family.

Why it matters: Meta is testing where users draw the line between free features and paid extras on WhatsApp and Instagram. If WhatsApp Plus catches on, it could open the door to more paid features across Meta’s messaging apps, and change what billions of people expect from a service that has long felt free.

QUICK HITS

📰 Everything else in tech today

Elon Musk reportedly bought about $1.4B worth of SpaceX shares from current and former employees last year, increasing his stake ahead of the company’s planned IPO.

Apple hardware chief Johny Srouji is carving Apple’s hardware org into five teams to sharpen focus on future iPhone, iPad, and Watch hardware.

Elon Musk failed to appear for a voluntary interview with Paris prosecutors probing X and its Grok chatbot over sexually explicit deepfakes and Holocaust‑denying content.

A SpaceX filing showed President and COO Gwynne Shotwell made about $85.8M last year, mostly in stock, ahead of the company’s planned IPO.

Google Photos is rolling out new AI-powered face touch-up tools that let users make edits like smoothing skin, removing blemishes, and whitening teeth directly in the app.

The Onion struck a court-backed deal to take over Alex Jones’ InfoWars, aiming to relaunch it as a Tim Heidecker–led comedy network.

Uber is spending about €270M (around $318M) to buy another 4.5% stake in German food delivery group Delivery Hero.

Blue Energy raised $380M in equity and debt to build large, modular nuclear power plants manufactured in shipyards, starting with a 1.5-gigawatt project in Texas.

An experimental mRNA vaccine for pancreatic cancer showed durable immune responses in a small phase 1 trial, with most responders still alive up to six years later.

The Pentagon canceled the long‑delayed, over‑budget GPS OCX ground system, killing a flagship space program before it could threaten existing GPS service.

Tech companies have already cut more than 73K jobs in 2026, according to a new report, with many of the layoffs explicitly tied to AI-driven automation and restructuring.

Rivian’s factory in Normal, Illinois, was hit by an EF-1 tornado that damaged its R2 logistics building, forcing a temporary pause in operations. No injuries were reported.

COMMUNITY

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Sergey Brin commits to Claude catch-up

Read our last Tech newsletter: SpaceX buys up a lot of Cybertrucks

Read our last Robotics newsletter: Humanoid smokes half-marathon record

Today’s AI tool guide: Create landing pages that convert in Claude Design

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — The Rundown’s editorial team

Sergey Brin commits DeepMind to a Claude catch-up

Good morning, AI enthusiasts. Catching Anthropic's lead in coding just became Sergey Brin's personal project.

The once-retired Google co-founder is now reportedly running a DeepMind “strike team” focused on closing Gemini's internal coding gap with Claude — with Brin framing the push as the shortest route to the holy grail of self-improving AI.

In today’s AI rundown:

Brin mobilizes DeepMind to chase Anthropic on code

Moonshot AI’s Kimi K2.6 closes open-source gap

Create high-converting landing pages in Claude Design

Adobe’s new agentic AI platform for enterprises

4 new AI tools, community workflows, and more

LATEST DEVELOPMENTS

💻 Brin mobilizes DeepMind to chase Anthropic on code

Image source: Lovart / The Rundown

The Rundown: Google co-founder Sergey Brin is personally rallying DeepMind to out-code Anthropic with Gemini, according to The Information — creating a new “strike team” and pitching the effort to staff as the shortest route to self-improving AI systems.

The details:

Research engineer Sebastian Borgeaud, who previously ran DeepMind's pretraining, is leading the group under CTO Koray Kavukcuoglu and Brin.

In an internal memo, Brin told staff the real prize is AI that trains the next AI, with coding being the capability that gets Gemini there.

DeepMind researchers reportedly rate Claude's code-writing above Gemini's internally, which kicked off Brin's push for a dedicated team.

Gemini engineers now have to use Google's internal agent tools on complex tasks, with usage tracked on a company leaderboard called Jetski.

Why it matters: After dominating the AI conversation towards the end of 2025, Google has had a slow start to 2026. But Brin's push isn't a product response — it's an internal one, and the strike team's real job is to automate Google itself, closing the gap with deeply embedded AI systems already operating inside Anthropic and OpenAI.

TOGETHER WITH YOU.COM

🐌 Is your API latency metric lying to you?

The Rundown: Most teams pick an API by checking a benchmark table and calling it done—a shortcut that could miss what really matters in production. This guide from You.com explains why raw latency is a misleading signal and what to measure instead.

What you'll get:

Why p50 latency hides the failures your users actually experience

The "time-to-useful-result" framework that captures what benchmarks leave out

Four hidden cost drivers that show up in your logs, not vendor tables

How to evaluate APIs at your actual concurrency levels, not the demo conditions

Stop optimizing for the wrong number. Learn what to measure instead.
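
To see why a median alone can mislead, here is a minimal Python sketch. It is not taken from the You.com guide: the `percentile` helper uses a simple nearest-rank rule, and the latency samples are made up for illustration.

```python
def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it."""
    ordered = sorted(samples)
    # Integer ceiling of pct * n / 100, which avoids float rounding surprises.
    rank = max(1, (pct * len(ordered) + 99) // 100)
    return ordered[rank - 1]

# Hypothetical latencies in milliseconds: 90 fast requests and 10 slow ones.
latencies_ms = [120] * 90 + [2500] * 10

p50 = percentile(latencies_ms, 50)
p95 = percentile(latencies_ms, 95)
p99 = percentile(latencies_ms, 99)
print(f"p50={p50} ms, p95={p95} ms, p99={p99} ms")
```

In this made-up sample the median stays at 120 ms even though one request in ten takes 2.5 seconds, and that slow tail only shows up at p95 and p99.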

MOONSHOT AI

🔓 Moonshot AI’s Kimi K2.6 closes open-source gap

Image source: Moonshot AI

The Rundown: Moonshot AI open-sourced Kimi K2.6, a new agentic coding model that nears or outperforms models like GPT-5.4, Opus 4.6, and Gemini 3.1 Pro across top benchmarks for reasoning, coding, and more at a fraction of the cost.

The details:

K2.6 beats GPT-5.4, Opus 4.6, and Gemini 3.1 Pro on benchmarks including Humanity’s Last Exam w/ tools (reasoning) and SWE-Bench Pro (coding).

On long-horizon work, K2.6 can work for 12+ hours straight across 4,000+ tool calls, with Kimi showing it refactoring an 8-year-old codebase in demos.

Always-on agents like OpenClaw and Hermes now run on K2.6, with Kimi reporting one internal agent operated autonomously for five days straight.

K2.6’s agent swarms can now spin up 300 parallel sub-agents to complete tasks, triple the number of its K2.5 predecessor.

Why it matters: Dario Amodei just said open-source and China are 6-12 months behind frontier labs, and while that may be true of internal releases, public systems are looking a lot closer. Given frustrations over usage rates and the rise of autonomous agents, K2.6 looks like a powerful, cost-effective new option for agentic workflows.

AI TRAINING

🧑‍🎨 Create high-converting landing pages in Claude Design

The Rundown: In this guide, you will learn how to use Claude Design, Anthropic’s new design tool, to generate four mockup variations of your website’s landing page.

Step-by-step:

Go to claude.ai/design, select the wireframe option, click create, and write who the page is for, what you’re selling, and the action a visitor should take

Find and screenshot a landing page you like (try searching “top landing pages in [your niche]”), plus the checkout pane of a site that handles millions of daily transactions, like Amazon, eBay, or PayPal

Add the screenshots to your brief, and tell Claude to create four variations of the mockup. Answer any follow-ups, and wait 2-5 minutes for results

Click any element and leave a note like "rewrite this CTA to be outcome-specific" or "add a testimonial." Claude applies the changes to refine outputs

Pro tip: Click Share > Handoff to Claude Code > Send to Claude Code Web to get Claude Code to build and deploy the final website for you.

PRESENTED BY SLACK

🤖 Work smarter, not harder with Slackbot

The Rundown: Your team is already using AI tools. But are they actually getting smarter results? Slackbot, Slack's built-in AI, turns your entire workspace into a productivity engine — surfacing answers, drafting content, and automating the busywork so your team can focus on what matters.

In this free guide, you'll discover:

How Slackbot synthesizes conversations, files, and apps for instant answers

Ways to automate routine tasks without writing a single line of code

How teams are using AI to move faster and collaborate more effectively

ADOBE

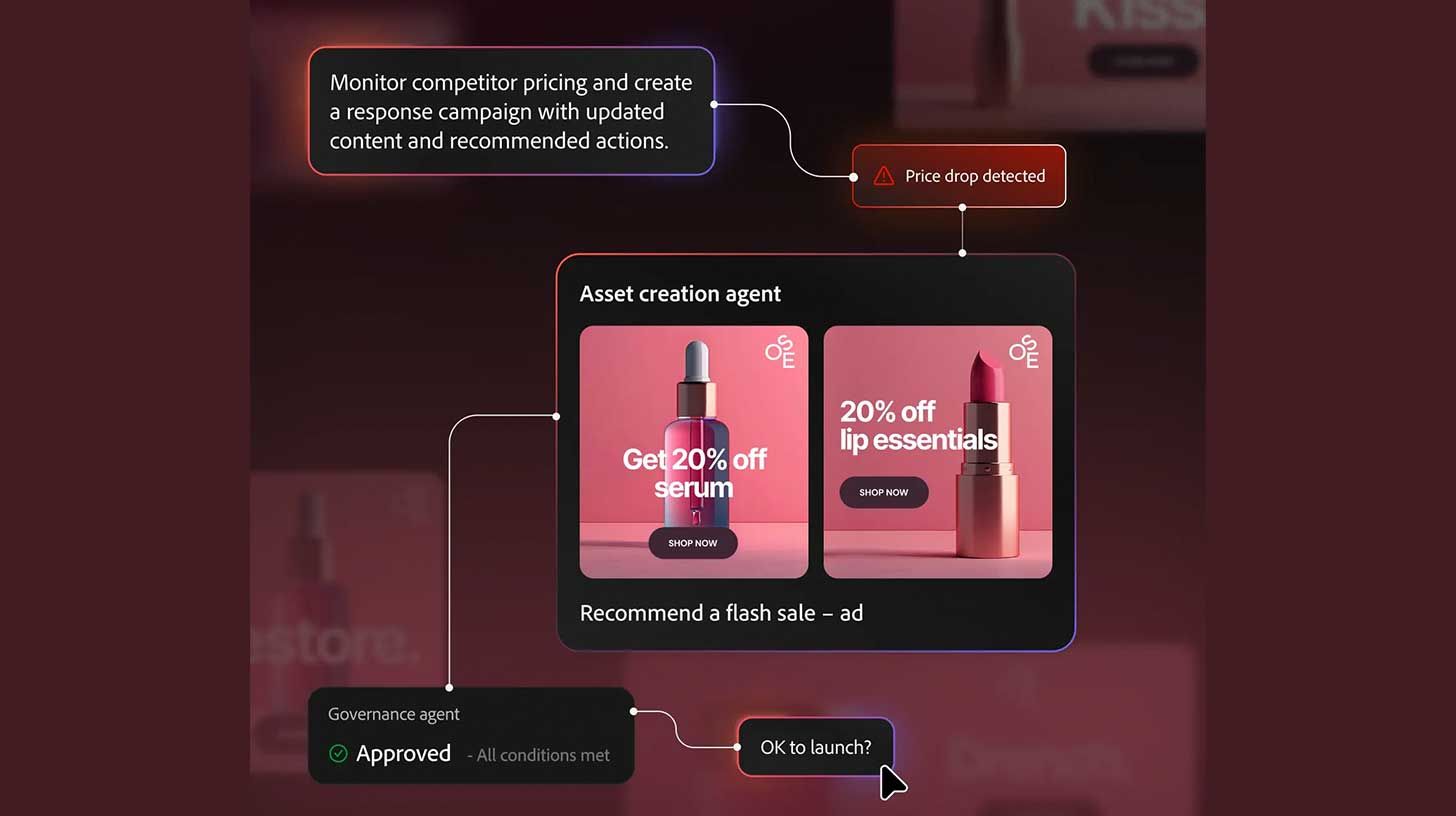

🤖 Adobe’s new agentic AI platform for enterprises

Image source: Adobe

The Rundown: Adobe just introduced CX Enterprise at its Adobe Summit, a new agentic platform built to help businesses coordinate marketing, content, and customer interactions through networks of AI agents.

The details:

CX Enterprise weaves three pillars under one agentic orchestration layer: brand visibility, content supply chain, and customer engagement.

CX Enterprise Coworker assembles the correct agents and tools based on a specific user goal, creating a plan and executing multi-step actions.

Adobe’s Marketing Agent now plugs into systems like ChatGPT, Claude, Gemini, and Copilot, coordinating between the agents and Adobe apps.

The company is also launching an agent skills catalog, enabling enterprises to create reusable, customizable workflows within the platform.

Why it matters: The entire design world is moving toward agentic workflows, with Figma Agents, Canva Agents, and Adobe all jockeying for position. The bigger threat is the labs cutting out the middleman: Launches like Claude Design and every subsequent improvement will make legacy orchestration paths more difficult.

QUICK HITS

🛠️ Trending AI Tools

🤖 Scrunch - See how AI interprets your site, run a free audit, and unlock the new way to reach customers*

⚙️ Kimi K2.6 - Moonshot AI's powerful open-source coding and agent model

🧠 Chronicle - OpenAI's Codex feature that uses screen context for memory

🚀 Qwen3.6-Plus - Alibaba's new AI with a 1M context window, strong coding

*Sponsored Listing

📰 Everything else in AI today

OpenAI rolled out Chronicle, a Codex preview feature that runs background agents capturing your screen to build persistent memories, limited initially to Pro users on Mac.

Ex-Meta chief AI scientist Yann LeCun said people shouldn’t listen to Dario Amodei about AI’s impact on labor markets, or “Sam, Yoshua, Geoff, or me,” saying economists have the most important perspective.

Lovable denied reports that it suffered a data breach after users flagged that public project chats were visible, saying the issue was a documentation failure.

Tinder and Zoom partnered with Sam Altman's World, letting users get "proof of humanity" badges via iris scans to combat AI bots and deepfakes.

Anthropic expanded its Amazon deal for 5 GW in compute, with the tech giant investing up to $25B more into Anthropic in exchange for its $100B+ AWS commitment.

Recursive Superintelligence raised $500M at a $4B valuation, with the four-month-old startup founded by OpenAI and DeepMind alumni building AI that improves itself.

COMMUNITY

🤝 Community AI workflows

Every newsletter, we showcase how a reader is using AI to work smarter, save time, or make life easier.

Today’s workflow comes from reader Julie N. in Grand Junction, CO:

"As a digital marketer, I use ChatGPT as my assistant. I will feed all of my information about a client's Google Ads needs into it, including budget, landing page, and let it design keyword-appropriate headlines and descriptions, set the ad strategy, and recommend additional campaign options.

Also, I will take my stream-of-consciousness thoughts about a potential SEO package and create a front-facing & admin-side PDF that I can share with potential clients."

How do you use AI? Tell us here.

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Claude comes for the design stack

Read our last Tech newsletter: SpaceX buys up a lot of Cybertrucks

Read our last Robotics newsletter: Humanoid smokes half-marathon record

Today’s AI tool guide: Design landing pages that convert in Claude Design

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — the humans behind The Rundown

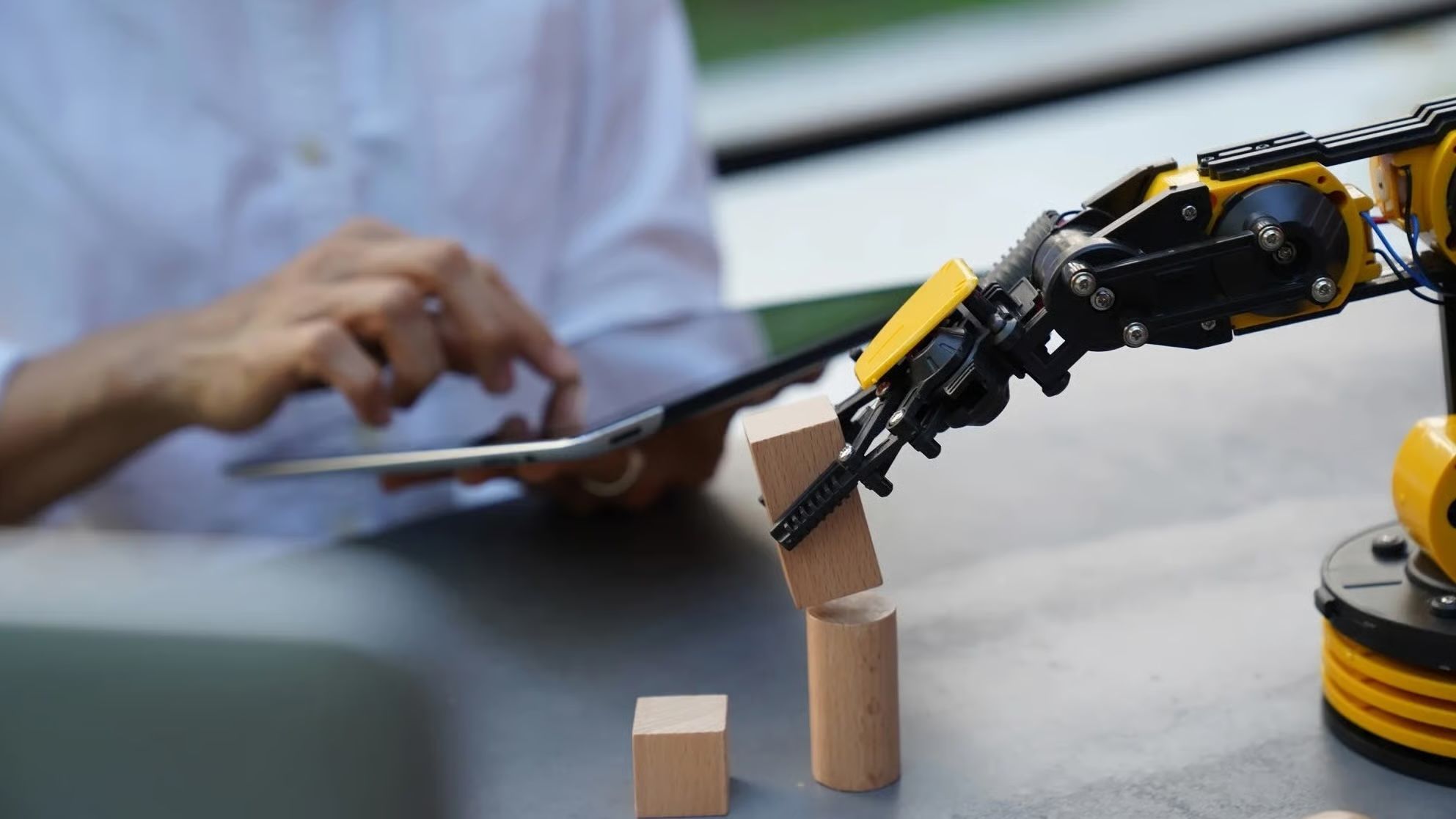

Humanoid smokes half-marathon record

Good morning, robotics enthusiasts. On Sunday, Honor’s bright-red Lightning bot crossed Beijing’s half-marathon finish line in 50:26 — nearly seven minutes faster than the human world record — as more than 100 humanoids raced alongside 12K people.

The contrast from last year’s race tells you everything: the winner took 2 hours and 40 minutes, and most of the field couldn’t even make it past the first kilometer.

In today’s robotics rundown:

Robot beats the human half-marathon record

Tesla launches robotaxis in Dallas and Houston

Coco’s delivery bots are now accessibility scouts

Physical Intelligence’s robot brain can wing it

Quick hits on other robotics news

LATEST DEVELOPMENTS

HUMANOIDS

👟 Robot beats the human half-marathon record

Image source: Kevin Frayer / Getty Images

The Rundown: Humanoids just outran human runners at Beijing’s half marathon, with Honor’s bright-red “Lightning” bot finishing the 21km course in 50:26 — nearly seven minutes faster than Jacob Kiplimo’s freshly minted human world record.

The details:

More than 100 Chinese-made humanoids ran in a dedicated lane alongside 12K humans, in only the second year robots have joined the race.

Honor’s Lightning platform slashed last year’s robot-winning time from 2:40:00 to 50:26, and at least four bots broke the one-hour mark.

Roughly 40% of the robots ran fully autonomously while the rest were teleoperated, with organizers weighting the scoring to favor self-driving systems.

The race doubled as a showcase for China’s 150-plus humanoid startups, proving their robots’ balance, battery life, and navigation prowess.

Why it matters: A year after clunky, closely supervised bots jogged only part of the course, this race showed quieter, real progress. Most robots finished, a few fell, and their recovery systems mostly worked. That messy but steady evolution hints at how fast humanoids are being shaped for unpredictable real-world work.

TESLA

🚖 Tesla launches robotaxis in Dallas and Houston

Image source: Tesla

The Rundown: Tesla is debuting its Robotaxi service in tight geofenced pockets of Dallas and Houston, tiptoeing into markets where rival Waymo has been operating since February with no safety monitors.

The details:

The launch follows pilots in Austin and San Francisco, but there is no word yet on fleet size, pricing, or how many rides, if any, will run without safety drivers.

Mapping of the initial service areas shows Houston limited to roughly 25 square miles and Dallas constrained to a small patch around Highland Park.

The launches are part of a planned 7-city expansion, including Phoenix, Miami, Orlando, Tampa, and Las Vegas in 2026.

Waymo is already live in both Texas cities with fully driverless vehicles and now claims around 500K paid robotaxi rides each week across 10 U.S. markets.

Why it matters: Tesla’s ‘Robotaxi’ expansion into Dallas and Houston opens with geofences a fraction of the size of its Austin footprint, which took nearly a year to grow from 20 to 245 square miles. The company has logged 15 crash incidents since its Austin launch, and faces increased federal scrutiny.

COCO ROBOTICS

🥡 Coco’s delivery bots are now accessibility scouts

Image source: Coco Robotics

The Rundown: Delivery bot startup Coco is turning its 10K sidewalk robots into a live sensor network for BlindSquare, feeding real-time hazard alerts to blind pedestrians in six cities across the U.S. and Europe, Fortune reports.

The details:

Coco’s robots now stream live obstacle data, like fallen e-scooters or bad curb cuts, into BlindSquare’s self-voicing navigation app.

The partnership, born out of an EU-backed pilot in Helsinki, taps Coco’s constantly updated sidewalk map to fill in the accessibility gaps.

A two-way feedback loop lets BlindSquare users flag cleared hazards, helping Coco instantly reroute robots over newly accessible paths.

One goal is to use the stack for smarter cities, from bots “pressing” crosswalks to lights that extend green when robots and pedestrians bunch up.

Why it matters: Sidewalk delivery bots are usually cast as cute nuisances or job-stealing automatons, but this deal reframes them as infrastructure, quietly building live accessibility maps. If it works, every robot dodging tipped scooters and busted sidewalks becomes a roaming accessibility probe.

PHYSICAL INTELLIGENCE

🧠 Physical Intelligence’s robot brain can wing it

Image source: Physical Intelligence

The Rundown: Physical Intelligence’s new π0.7 model is showing early signs that a single generalist system can improvise, combining learned skills and web-scale training to handle real-world tasks it was never explicitly trained on.

The details:

π0.7 shows compositional smarts, remixing prior skills and web-scale pretraining to run an air fryer that it was effectively never trained on.

With verbal coaching, π0.7 robots completed multi‑step chores like cooking a sweet potato, and matched or beat specialist models on coffee and laundry.

The team says they were “genuinely surprised” when π0.7 handled unfamiliar setups, like new appliances, beyond what their training data should allow.

Physical Intelligence published the findings as a research paper, positioning π0.7 as an early-stage result, not a finished system.

Why it matters: Backed by over $1B in funding and reportedly in talks for a new round that would raise its valuation to $11B, Physical Intelligence is building toward a software-first foundation model for robotics. If generalist intelligence holds up, the field could stop building bespoke stacks and converge on a few shared intelligence layers.

QUICK HITS

📰 Everything else in robotics today

Figure’s new Vulcan AI balance policy lets the Figure 03 robot stay upright and limp itself to a repair bay even after losing up to three lower‑body actuators.

Siemens trialed Humanoid’s Nvidia-powered HMND 01 Alpha at its Erlangen electronics factory, where it autonomously handled tote logistics for 8+ hours.

MIT built a soft ion-conducting gel that becomes roughly 400x more conductive under light, enabling adaptive wearables, human–machine interfaces, and soft robotics.

Neptune Robotics is investing $12M in a new Singapore manufacturing and R&D facility to scale its AI-powered autonomous ship hull-cleaning operations.

Chef Robotics said its AI-powered kitchen robots have assembled 100M product servings in commercial production, marking a major scale milestone.

Agibot is deploying its G2 semi-humanoid robots on Longcheer’s tablet production lines in China, moving embodied AI into full-time electronics manufacturing.

Scientists developed a slime-like artificial muscle that can be reconfigured on demand, heal itself, and be reused, so one soft robot can morph for multiple tasks.

Chinese researchers tested a ground-based microwave system that beams power to drones in flight, letting them stay aloft for hours without landing to recharge.

German retailer Rossmann is running a year-long trial of UBTECH’s Walker S2 humanoid at its Burgwedel logistics hub to handle repetitive warehouse tasks.

Researchers built a leg-mounted robotic diving exoskeleton that uses waist motors and smart sensors to sync with a diver’s kicks, cutting oxygen use by 40%.

COMMUNITY

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Claude comes for the design stack

Read our last Tech newsletter: SpaceX buys up a lot of Cybertrucks

Read our last Robotics newsletter: Uber’s $10B robotaxi pivot

Today’s AI tool guide: Run your own free coding agent on your laptop

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — The Rundown’s editorial team

Claude comes for the design stack

Good morning, AI enthusiasts. Every few weeks, an Anthropic launch seems to rattle a new industry. This time it's design.

Claude Design turns prompts, screenshots, and codebases into shippable prototypes — the latest layer of the software stack Anthropic is quietly pulling under one roof.

In today’s AI rundown:

Anthropic rolls out Claude Design

The Rundown Roundtable: Our AI use cases

Run a free coding agent on your laptop

Three OpenAI leaders exit amid reshuffle

4 new AI tools, community workflows, and more

LATEST DEVELOPMENTS

ANTHROPIC

🎨 Anthropic rolls out Claude Design

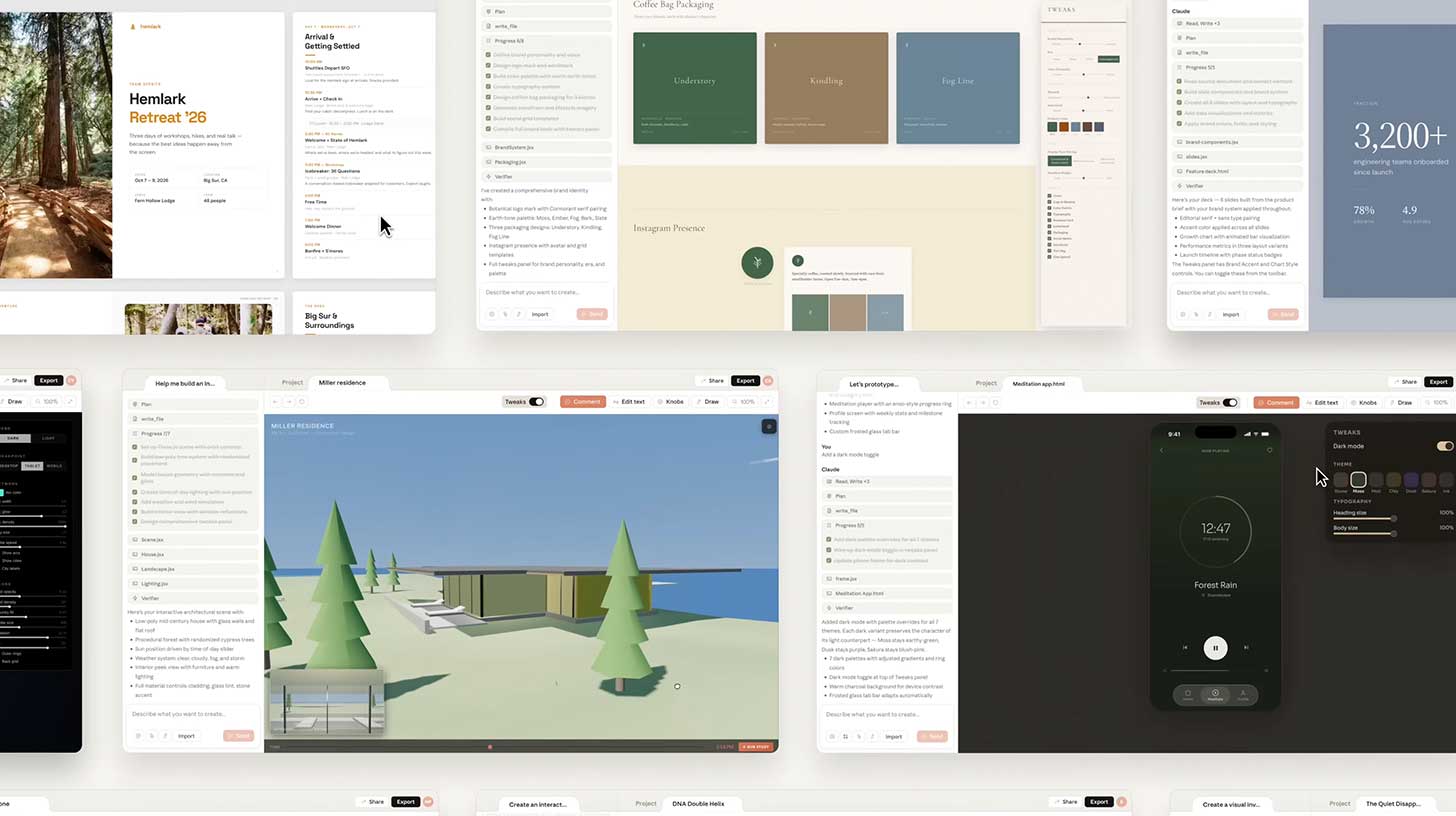

Image source: Anthropic

The Rundown: Anthropic just launched Claude Design, a new tool that turns prompts, screenshots, and codebases into interactive prototypes, slide decks, and marketing collateral powered by the company’s new Opus 4.7 vision model.

The details:

Claude can read users’ codebases and existing mockups during setup to build a brand system that auto-applies to every future project.

Users can refine designs through chat, inline comments, direct edits, or custom sliders Claude generates for spacing, color, and layout.

Finished work can be handed off to Claude Code as a build-ready bundle or exported to Canva, PPTX, PDF, or standalone HTML for further editing.

Anthropic CPO Mike Krieger resigned from Figma's board on April 14, three days before launch, amid rumors of a competing product from the company.

Why it matters: Every few weeks, an Anthropic launch shakes a new industry — and this time it is design. With the new tool, Anthropic is closing the loop from first sketch to shipped product inside a single ecosystem. Add in Cowork, browser agents, and office integrations, and every layer of the software stack is moving under one umbrella.

TOGETHER WITH SONAR

💡 Redefine the “Shift Left”

The Rundown: The speed of autonomous agents requires a transition from reactive testing to proactive engineering. Sonar’s CEO has coined the “Agent Centric Development Cycle” framework that challenges how verification works in an agentic world — by embedding it at every stage of the engineering process, inside each reasoning cycle, and at sandbox exit.

This workflow includes:

Guiding agents with project-specific context

Generating code with LLM-based tools within your architecture and standards

Verifying code integrity inside the sandbox - automatically, at agent speed

Solving bugs and vulnerabilities autonomously

THE RUNDOWN ROUNDTABLE

💡 The Rundown Roundtable: Our AI use cases

Image source: Ideogram / The Rundown

The Rundown: The Rundown Roundtable is a weekly feature where we poll members of The Rundown staff about how we use AI in our work and daily lives.

Zach, AI Writer: My father takes a ton of different medications every day for various conditions, and also sees a lot of different doctors. I was concerned about how they were all interacting with each other and if the doctors at different practices were even getting the full picture.

I gave Claude a comprehensive list of all of the drugs and his medical history, and asked for a deep research report about potential interactions. Claude provided a thorough list of issues to bring up to his doctors about dangerous interactions and situations that might be getting missed, referencing up-to-date journals, guidelines, and research that would’ve taken me days.

It also laid everything out in an easy-to-read format, and provided specific instructions on who to contact and what specific questions to ask — helping turn a potentially stressful and disjointed doctor conversation into a strong action plan.

Joey, Head of Partnerships: I have Claude connected to all my communication platforms (Slack, Notion, calendars, Granola, etc.), and it pulls a morning brief for me each day. I've curated it so it gives me an interactive page each day with call prep, priority tasks, recommendations of tools to use to solve XYZ, a recap of overnight activity, etc.

AI TRAINING

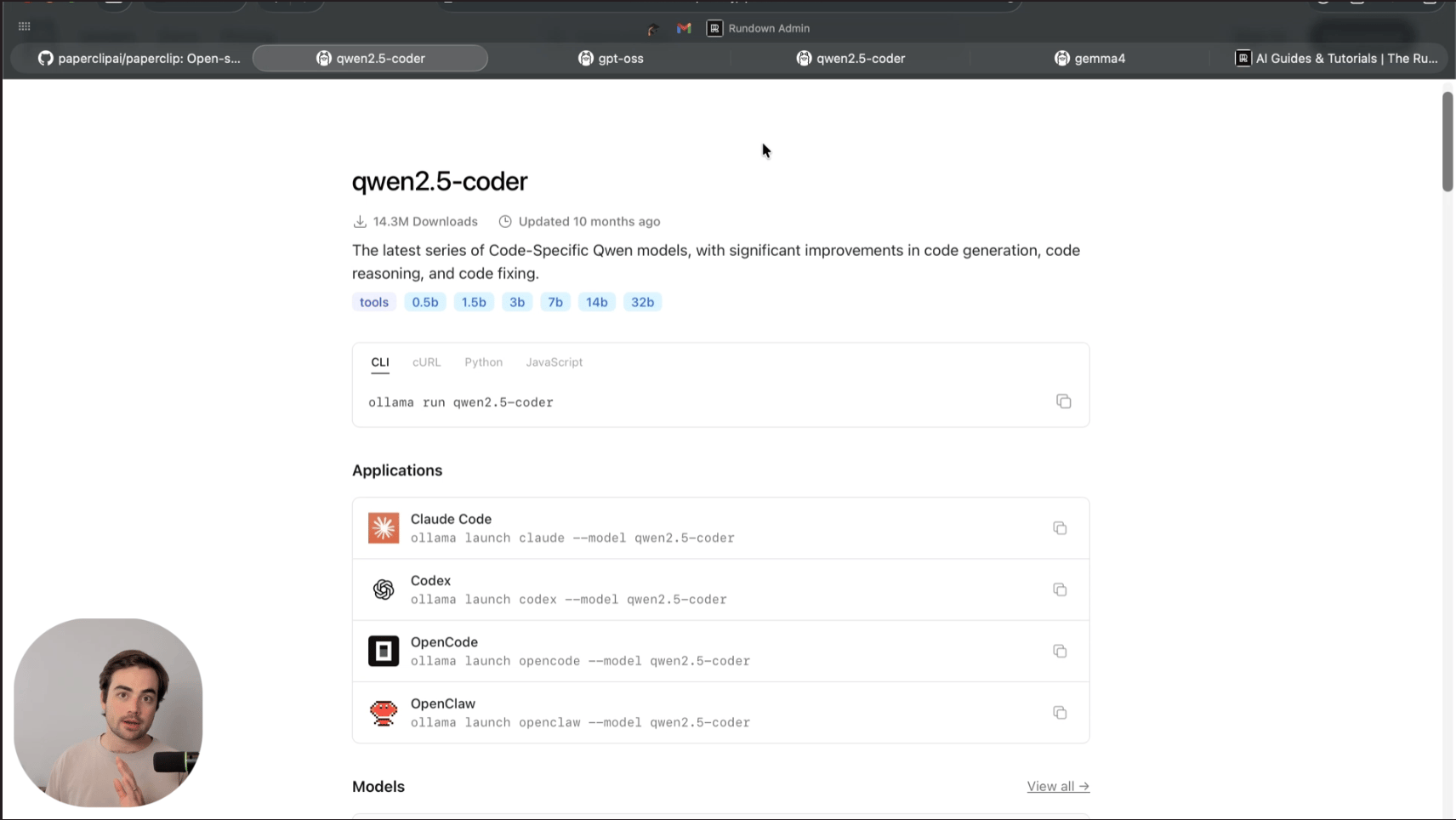

🤓 Run your own free coding agent on your laptop

The Rundown: In this guide, learn how to download a coding LLM to your laptop using Ollama. You will be able to run it for free inside popular coding tools like Claude Code or Codex.

Step-by-step:

Screenshot your PC hardware specs, drop them into Claude or ChatGPT, and ask which Ollama coder model you can run

Browse coder models, choose the best-suited one, and grab its launch command from Ollama. Example: “ollama launch claude --model gemma4:e4b”

Open a terminal in your project folder, paste the command, download, and wait. Once done, Ollama drops you into Claude Code, pointed at the local model

Go to Ollama > Settings > Context. Bump the default 4K to 32K or higher. If Claude Code refuses tasks or drops tool calls, try OpenCode the same way

Pro tip: Local AI is not a replacement for paid Claude Code/Codex. Treat it as practice or basic debugging. You can wire it as a cheap subagent under the paid Claude Code.
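For readers who want to script the steps above, here is a minimal Python sketch. It assumes Ollama is serving on its default local address and that "32K" means 32,768 tokens; the helper names (launch_command, chat_payload, ask_local_model) are made up for illustration, and the model tag is simply the one from the example command. It builds the launch command with the correct spacing and shows one way to request a larger context window per call through Ollama's chat endpoint.

```python
import json
import shlex
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # assumption: Ollama's default local address


def launch_command(tool: str, model: str) -> str:
    """Build the launch command from step 2 (note the space before --model)."""
    return shlex.join(["ollama", "launch", tool, "--model", model])


def chat_payload(model: str, prompt: str, num_ctx: int = 32768) -> dict:
    """Request body for /api/chat that asks for a larger context window."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
        "options": {"num_ctx": num_ctx},  # assumption: 32K = 32,768 tokens
    }


def ask_local_model(model: str, prompt: str) -> str:
    """Send one prompt to the local server. Requires Ollama to be running."""
    body = json.dumps(chat_payload(model, prompt)).encode("utf-8")
    request = urllib.request.Request(
        f"{OLLAMA_URL}/api/chat",
        data=body,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        return json.loads(response.read())["message"]["content"]


if __name__ == "__main__":
    # Printing only, so this runs even when no Ollama server is up.
    print(launch_command("claude", "gemma4:e4b"))
    print(json.dumps(chat_payload("gemma4:e4b", "Summarize this repo"), indent=2))
```

Calling ask_local_model("gemma4:e4b", "...") only works once the model has been downloaded and the server is running. Setting the context in the Ollama settings, as in the last step, applies it globally instead of per request.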

PRESENTED BY SLACK FROM SALESFORCE

📅 Make meetings optional with Slack

The Rundown: Want to make more progress with fewer meetings? Find out how Slack can help your team move faster and keep calendars clear.

In this guide, you'll discover:

How to replace status meetings with AI-generated summaries and recaps

Use huddles for quick, searchable conversations instead of scheduled calls

Record and share async clips so teammates can review on their own schedule

Read the full guide and reclaim your calendar.

OPENAI

🚪 Three OpenAI leaders exit as reshuffle continues

Image source: LinkedIn / X / X

The Rundown: OpenAI lost three senior execs in a day, with ex-CPO Kevin Weil, Sora lead Bill Peebles, and enterprise apps chief Srinivas Narayanan departing — capping a month of leadership changes as the company kills ‘side quests’ for a narrower focus.

The details:

Former CPO Weil led OpenAI for Science, which is being ‘decentralized’ into other teams, with the Prism app for scientists also being woven into Codex.

Peebles, who led Sora until OAI killed the video app last month over cost, called its development the “honor and adventure of a lifetime”.

Narayanan ran OAI's enterprise apps for three years after 13 at Facebook, and said on X he's heading to India to care for aging parents.

Sam Altman wrote in a recent blog that OpenAI is "now a major platform, not a scrappy startup" and needs to "operate in a more predictable way."

Why it matters: Last month, we covered OAI scrapping "side quests" to catch Anthropic, and a month in, the changes are certainly visible. Whether these departures are actually a result of that shake-up or just personal movements, they are big ones — particularly Weil, who has been the face of science-related efforts at the company.

QUICK HITS

🛠️ Trending AI Tools

🎨 Claude Design - Anthropic’s new tool for creating polished visual work

🚀 Codex - OAI's coding agent, now with computer use, in-app browser, more

🤖 Claude Opus 4.7 - Anthropic’s top AI with advanced agentic coding

🖥️ Perplexity Personal Computer - Agent orchestrator for files, apps, more

📰 Everything else in AI today

Anthropic CEO Dario Amodei told the Financial Times that he believes open-source and Chinese models will be able to reach Mythos capabilities in just 6-12 months.

An AI artist named Inga Rose hit No. 1 on iTunes’ global charts with the single “Celebrate Me”, with the music created with Suno and the lyrics written by a human.

Google is reportedly working with Marvell to help design a custom TPU and memory processing unit for AI inference, aiming to cut its longtime reliance on Broadcom.

Nous Research introduced Tool Gateway, a subscription that powers its Hermes Agent without requiring multiple APIs, amid surging usage of the agentic platform.

Salesforce launched Headless 360, exposing its full platform as MCP tools, APIs, and CLI commands so coding agents can act on customer data.

Vercel disclosed a breach that began with a hacked AI tool connected to Google accounts, impacting a “limited subset” of customers and prompting an investigation.

COMMUNITY

🤝 Community AI workflows

Every newsletter, we showcase how a reader is using AI to work smarter, save time, or make life easier.

Today’s workflow comes from reader David P. in New Zealand:

"I have a small recording studio that focuses on acoustic folk and roots music. I used ChatGPT to write a plug-in-style app based on Cubase plugins. I can run my mix through the app, and it can identify each instrument and make EQ and editing suggestions for each that will bring the track into a mastering-ready state. It creates waveforms and tables to present the information in an easy-to-read graphic UI.

I then load a reference track, and it will advise what EQ is required to essentially match the reference track's vibe. This has given me great technical information about each recording so I can manually make changes to the mix to suit what I want to achieve, but ensure it is technically excellent."

How do you use AI? Tell us here.

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: OpenAI’s superapp hiding inside Codex

Read our last Tech newsletter: SpaceX buys up a lot of Cybertrucks

Read our last Robotics newsletter: Uber’s $10B robotaxi pivot

Today’s AI tool guide: Run your own free coding agent on your laptop

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — the humans behind The Rundown

Exclusive: Inside Canva AI 2.0 with CPO Cameron Adams

Good morning, AI enthusiasts. Most AI design tools give you a result and stop there. You get a finished image, and then you're on your own, prompting in circles to get it closer to what you actually meant.

Canva is betting the real value isn't in generation — it's in what comes after. The company just launched Canva AI 2.0, turning its platform into an AI-native environment where generated designs are fully editable, and the AI refines alongside you rather than handing off a flat image and stepping back.

We sat down with Cameron Adams, Canva's co-founder, CPO, and a designer himself, in an exclusive Q&A to understand what this actually unlocks, where it still falls short, and what it means for the people whose jobs revolve around making things.

In today’s AI rundown:

Closing the gap between words and vision

Being 'great' when AI makes everyone good

Where the model surprised its creators

The ‘last mile’ of creative work

What changes for teams worldwide

Quick hits with Cameron

LATEST DEVELOPMENTS

DESIGN INTELLIGENCE

💪 Closing the gap between words and vision

The Rundown: Adams says understanding what a user actually means, not just what they type, is a major problem in creative AI. Canva is tackling this by training its model not just on language, but also on the sequence of actions that lead to a finished design.

Cheung: If I prompt it to create a minimal ad with “tension in the negative space,” does Canva AI 2.0 actually understand what tension in negative space means?

Adams: Most AI systems learn from finished outputs... What they can't see is everything that happened before it. We trained our foundation model, the Canva Design Model, on structured data, millions of designs, and the actual sequence of edits used to build them.

Understanding how people actually get to good work (Canva has 265M+ monthly users), the hesitations, the pivots, the moments of clarity, is what separates creative intelligence from generation. Rather than sitting outside the creative process, the Canva Design Model lives in the editor itself, shaped by the same typography systems, layout rules, brand kits, and collaborative workflows our users work within every day.

If you were to use this prompt in Canva AI 2.0, you'd see it share back its thought process on how to tackle the design. It's combining language model reasoning to interpret the prompt with its design training to execute it... And it's not re-assembling templates, it's generating entirely new editable elements (coming from Canva’s 2024 acquisition of Leonardo.ai) to fulfill your vision.

Why it matters: While it remains to be seen how good Canva AI 2.0 really is at capturing design nuances professionals often use, this is a step toward a future where you spend less time on the loop of prompting again and again, and get to a usable outcome with far less effort.

DIFFERENTIATION IN THE AI AGE

💯 Being 'great' when AI makes everyone good

The Rundown: As AI-driven design makes creation accessible to anyone, Adams says there will always be room for “greats” to stand out with their judgment, empathy, and knowing what will strike a chord with the audience.

Cheung: Canva AI 2.0 can now generate and edit at the layer level — text, elements, colours. That means anyone with a vision can execute it without touching a tool. Does the gap between a great designer and an average one get smaller?

Adams: When it comes to creativity, there will always be room for “greats” to stand out from the “goods”, but the skills that you need to stand out are constantly evolving. When you think about other eras of creative change, democratisation always makes room for more expression but also enables the best in the field to push even further.

When anyone can produce something polished, what separates the work is the thinking behind it and the message it contains. Judgement and empathy become more important: the strength of the idea, the sensitivity to context, the instinct about what will resonate, and the fundamentals of creating connection with other people. These things only humans can bring, and that’s why we’ve built an agentic experience that keeps the user at the center.

This is powerful for designers, but even more so for those who need to create visual materials but aren’t designers: a marketer creating campaign materials, a wedding planner designing a seating chart, or a student putting together a school project.

Why it matters: For anyone in a creative role wondering what AI leaves for them and how to shine — this is the answer. AI handles the execution. What it can’t replicate is the harder stuff: knowing your audience, trusting your instinct, and sensing what will actually work. The better you are at that, the more successful you become in the age of AI.

AI CREATIVE PARTNER

🧠 Where the model surprised its creators

The Rundown: From scavenger hunts to cleaning up docs and slides, Adams says he’s using Canva AI to handle both personal and professional creative tasks, along with the small fixes around them — with the AI sometimes catching issues well before he even notices.

Cheung: You're a designer. What’s something you built with Canva AI that surprised you?

Adams: I love making scavenger hunts for my kids, but it’s often time-consuming... Canva AI is actually really brilliant at doing a scavenger hunt if you give it a list of places around your house. It can design a cryptic visual clue for each of them and deliver it as a finished design ready for me to print and place around the house.

Then, in my work, having that design partner on call is invaluable for tweaking the decks and docs that I’m already working on. Being able to call up Canva AI and ask it to tidy up some mess I’ve made as I’ve been brainstorming a slide, or inject a table into a doc I’m writing with research on the latest market stats, is a huge time saver.

Adams added: It consistently upskills itself and handles tasks we’ve never tested or optimised for. One example was when it started to get really good at converting ASCII diagrams into designs almost flawlessly.

Why it matters: When AI starts catching what you missed before you notice it, and keeps improving over time, the relationship with the tool changes. Less effort goes into spotting errors and fixing inconsistencies. But that same trust can also create blind spots, where both the user and the AI miss something — and the output quietly breaks.

AI & ACCURACY

🔍️ The ‘last mile’ of creative work

The Rundown: Adams says Canva is actively stress-testing its AI model by deliberately breaking designs, while also positioning the platform as the “last mile” of the creative process, completing the ideas started by AI assistants like ChatGPT and Claude.

Cheung: In creative work, a small error can spiral into a long back-and-forth. How are you improving the accuracy of your AI when it comes to designing?

Adams: We "perturb" designs, purposely breaking the spacing or hierarchy, to train the model to recognize and score those errors. Plus, we evaluate against large-scale patterns from real-world usage, like alignment, readability, and brand consistency. This all culminates in what we call agentic editing: a system designed to catch small errors and refine them alongside you.

Cheung: As AI assistants like ChatGPT now generate, edit, and publish campaigns from chat, where does the design canvas still matter, and how does Canva stay the destination?

Adams: We don’t see the rise of AI assistants as a bypass; we see it as a massive expansion of how people start their creative journey. By embedding Canva into AI ecosystems people use – ChatGPT, Claude, Copilot, and now Google Gemini – we’ve established Canva as the definitive visual layer for the AI ecosystem.

The design canvas will remain essential because most AI assistants are great for thinking, but they’re often a dead end for doing. If you want to make a precise change, collaborate with your team, or ensure every pixel is strictly on-brand, you eventually hit a wall in a chat box. You end up in a loop of prompts instead of just grabbing an element and moving it.

Why it matters: While AI enthusiasts may position chatbots as the go-to for creative tasks, the reality is that these tools are great for starting ideas, but not finishing them. The gap between a prompt and a publish-ready design is still wide, and that's where Canva is planting its flag, stress-testing its AI to pick up where assistants leave off.

FUTURE OF WORK

🧑‍💼 What changes for teams worldwide

The Rundown: Adams argues the real story isn’t teams getting smaller, it’s that every team in a company suddenly gains design capability they never had before. The roles that matter most shift toward creative strategy and brand stewardship.

Cheung: Do you see design teams shrinking with AI in the loop? Which roles do you think will become vital in this age of AI?

Adams: I’d actually flip the premise. This isn’t really a story about design teams getting smaller. It’s about every team in a company suddenly having design capability they never had before.

The marketing coordinator, the sales lead, or the founder pulling together a pitch at 11 pm. They’re already doing this work in Canva, and now they have an always-on partner to produce work that spans a far greater breadth, without waiting on a design queue.

The ability to think about campaigns and projects with far greater scope and higher impact is now what teams should be striving for, and it’s letting teams stretch beyond their traditional boundaries and constraints.

Adams added: The roles that become vital are the ones focused on creative strategy and brand stewardship. You need people who can set the vision and craft the brand kit ingredients that the AI will then use to scale your capabilities.

We’ve already been anticipating this overlap and building Canva up as a platform where anyone can create visual work, while remaining plugged into a full productivity suite that includes your team’s context, connects with customer data, and integrates with your other work tools like Slack.

Why it matters: As agent teams handle execution, the professional edge goes from producing the work to directing it. Adams’ framing is useful: it’s not about teams shrinking, but design capability spreading across the entire company. The people who thrive are those who move up to creative strategy — setting a vision that AI then scales.

LIGHTNING ROUND

⚡️ Quick hits with Cameron

The one design task that AI will never do better than a human?

Adams: Deciding not to do something. Knowing which idea to go with and which to leave on the table is a purely human insight. AI can give you options, but it will never have the gut feeling to tell you that none of them are as good as the one idea you have.

Who keeps you up at night — Adobe, Figma, or OpenAI?

Adams: People often ask me about Adobe & Figma, but we’re in a totally different (and much bigger) market to them. We’re bringing design to the entire world, not just a subset of professionals.

On the flipside, as the only worksuite that genuinely brings creativity and productivity together with AI, we’re also more than just a chatbot. People need somewhere where they can collaborate with their team and turn AI-generated content into real, usable work. So I’m sleeping pretty soundly at the moment.

Things Canva usage data tell you about how people use AI in design?

Adams: The biggest surprise is how much people want to stay in control of the output. Not full automation, not a magic “make it for me” button. They want options, they want to suggest edits, they want to describe what they’re after in their own words, often quite subjective ones. “Make it feel more premium” or “something a bit warmer,” and they expect the tool to understand that.

The other thing that’s become really clear is that most people genuinely don’t care what model is running under the hood. They’re not shopping for AI, they’re trying to get something done. What matters is whether it fits into how they already work.

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — the humans behind The Rundown

SpaceX buys up a lot of Cybertrucks

Good morning, tech enthusiasts. Elon Musk’s own companies are reportedly buying a lot of Cybertrucks — and without them, Tesla’s sales numbers would look a lot worse.

SpaceX alone accounted for more than 18% of all U.S. Cybertruck registrations in Q4 2025, and without purchases from Musk’s other ventures, sales would have fallen 51% year-over-year. SpaceX may not need 1,279 stainless-steel pickups — but Tesla certainly did.

In today’s tech rundown:

Tesla Cybertruck’s biggest customer is Musk

Reed Hastings is leaving Netflix

YouTube adds an off switch for Shorts

Amazon buys Globalstar for $11.57B

Quick hits on other tech news

LATEST DEVELOPMENTS

TESLA

🛻 Tesla Cybertruck’s biggest customer is Musk

Image source: Ideogram / The Rundown

The Rundown: Elon Musk’s own companies bought nearly one in five Cybertrucks registered in the U.S. last quarter, reportedly masking a demand collapse that would have otherwise sent sales down 51% year-over-year.

The details:

SpaceX alone accounted for 1,279 of the 7,071 Cybertrucks registered in the U.S. during Q4 2025 — more than 18% of the total, according to Bloomberg.

The rest went to xAI, Neuralink, and The Boring Company, bringing the Musk-company total to 1,339 units, or roughly 19% of all U.S. registrations for the quarter.

Bloomberg noted it remains unclear what Musk’s non-rocket companies are doing with the trucks — or why an AI firm would need 50 of them.

The pattern has continued into 2026, with Musk-owned entities adding another 158 Cybertrucks in January and 67 in February.

Why it matters: The figures put hard numbers on what had been anecdotal — Cybertrucks piling up at SpaceX’s Starbase — and raise questions about how Tesla accounts for sales to companies its CEO controls, without the disclosure standards expected in comparable fleet transactions.
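For anyone who wants to check the math, the shares follow directly from the registration counts above. A quick sketch in Python, where the variable names are ours and the only inputs are the three Bloomberg figures quoted in this story:

```python
# Figures quoted above (Bloomberg, Q4 2025 U.S. registrations)
total_registrations = 7_071
spacex_units = 1_279
musk_total_units = 1_339  # SpaceX plus xAI, Neuralink, and The Boring Company

# Shares of all U.S. Cybertruck registrations for the quarter
spacex_share = spacex_units / total_registrations
musk_share = musk_total_units / total_registrations

# Units bought by Musk companies other than SpaceX, and by everyone else
other_musk_units = musk_total_units - spacex_units
non_musk_units = total_registrations - musk_total_units

print(f"SpaceX share: {spacex_share:.1%}")            # 18.1%
print(f"All Musk companies: {musk_share:.1%}")        # 18.9%
print(f"Non-SpaceX Musk units: {other_musk_units}")   # 60
print(f"Registrations to everyone else: {non_musk_units}")  # 5732
```

That lines up with the "more than 18%" and "roughly 19%" figures, and leaves 60 trucks split among the three smaller companies.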

TOGETHER WITH FIN

📅 Fin and Attio on agentic GTM

The Rundown: Join Rati Zvirawa, Sr. Director of AI Product at Fin, the best-performing AI Agent for customer experience, and Nicolas Sharp, Founder & CEO of Attio, for a live discussion on how the best revenue teams are rebuilding the sales funnel with agentic AI.

What you’ll learn:

How AI is reshaping modern GTM experiences

The importance of shared context across interactions

How to design AI GTM for trust and control

NETFLIX

💼 Reed Hastings is leaving Netflix

Image source: Getty Images

The Rundown: Reed Hastings, the co-founder who transformed Netflix from a DVD mailer into a $12B-a-quarter streaming empire, is leaving the board in June after 29 years — as the company he built moves fully into the Sarandos-Peters era.

The details:

Hastings, currently serving as chairman, will not stand for reelection at the June annual meeting, citing a desire to focus on philanthropy.

Netflix posted Q1 2026 revenue of ~$12.25B, up ~16% year over year, with a net income of ~$5.3B — beating expectations.

Hastings backed the failed Warner bid, but execs say his exit is unrelated; Netflix shares still slid about 8–9% in after‑hours trading on the news.

The board’s nominating committee will now move to select a new chairman in the coming months.

Why it matters: Hastings not only founded Netflix but invented the playbook that forced Hollywood to reinvent itself around streaming, data, and subscriber-first economics. His exit closes a 29-year chapter that helped reshape an entire industry. What comes next belongs entirely to co-CEOs Ted Sarandos and Greg Peters.

YOUTUBE

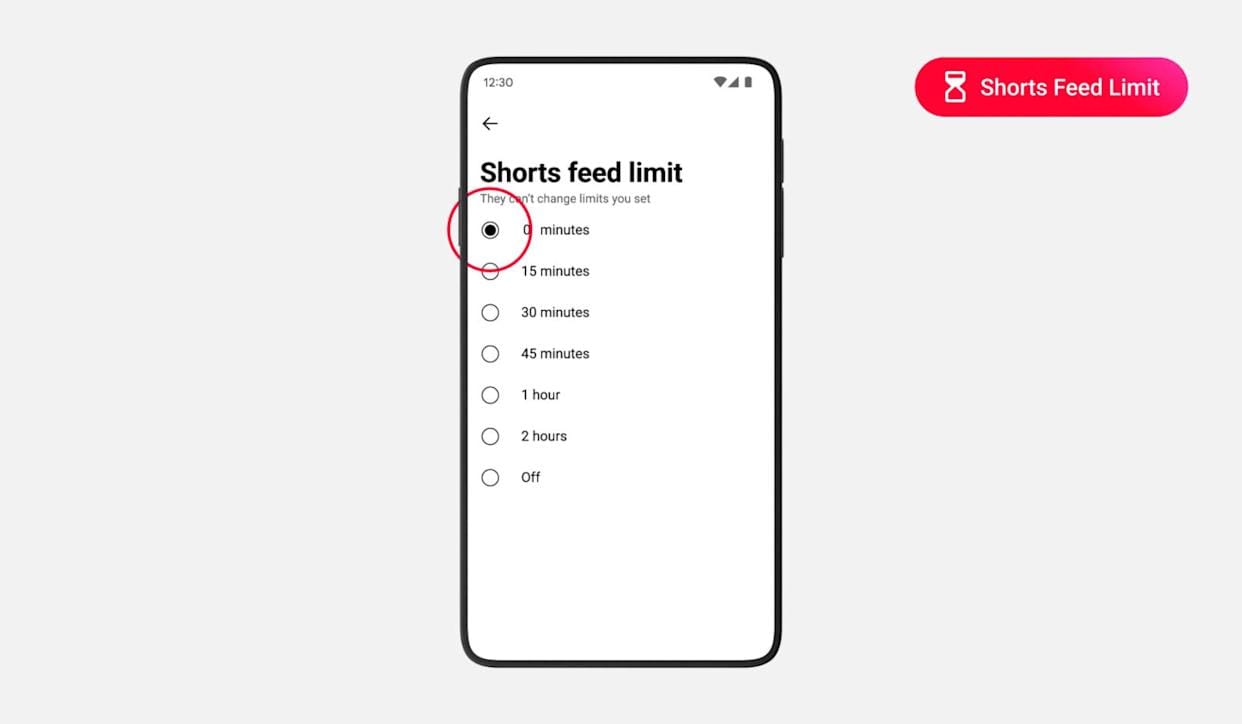

🧘🏽 YouTube adds an off switch for Shorts

Image source: YouTube

The Rundown: YouTube is giving users a genuine off switch for Shorts, adding a zero-minute limit to its existing time management tools so you can effectively strip the TikTok-style feed out of the Android and iOS app.

The details:

The zero-minute option extends an existing Shorts timer that previously only let users cap scrolling between 15 minutes and two hours per day.

Hit your limit — including zero — and the Shorts tab stops serving video, replacing the feed with a full-screen notice that you’ve reached your daily cap.

The feature originated inside parental controls but is now rolling out to all adult accounts on Android and iOS through YouTube’s time management settings.

To enable it: Settings → Time management → Shorts feed limit, then set your daily cap anywhere from zero to two hours.

Why it matters: The zero-minute limit is a rare instance of a major platform giving users a real off switch for an addictive engagement surface, rather than just more nudges and reminders. It tests how serious YouTube is about digital well‑being, and whether users (and regulators) will start expecting this level of control from rivals.

AMAZON

🛰️ Amazon buys Globalstar for $11.57B

Image source: Amazon

The Rundown: Amazon is spending $11.57B to acquire satellite operator Globalstar, giving its fledgling Amazon Leo satellite network the spectrum, infrastructure, and direct-to-device capabilities it needs to challenge SpaceX’s Starlink.

The details:

The $90-per-share deal will enable Amazon to flesh out its satellite business, Amazon Leo, with direct-to-device services ahead of its launch later this year.

Apple’s Emergency SOS and Find My features will keep running on Globalstar’s network under a new long-term agreement with Amazon.

Globalstar also brings two-way satellite IoT capability and government and defense accounts to Amazon’s portfolio.

Amazon is targeting a constellation of 3,200 satellites by 2029; Starlink currently has about 10K in orbit.

Why it matters: The deal hands Amazon a ready-made spectrum position and operational satellite infrastructure that would have taken years to build independently. For consumers, it sets up a race against Starlink in satellite connectivity that could ultimately drive broader, faster, and cheaper coverage beyond the reach of a cell tower.

QUICK HITS

📰 Everything else in tech today

Google is teaming up with Gucci to launch fashion-focused AI smart glasses in 2027, following its 2026 Android XR “Project Aura” glasses.

Alphabet’s early 6.11% stake in SpaceX could translate into roughly a $100B-plus windfall if the rocket company debuts at around a $2T valuation, Bloomberg reports.

OpenAI scrapped its Norway data-center lease, and Microsoft is taking over the capacity while still powering OpenAI via Azure.

AI data center startup Fluidstack is reportedly negotiating a new $1B funding round at an $18B valuation.

Slash, a business-banking and corporate card startup founded five years ago by then-teenage college dropouts, raised a $100M Series C at a $1.4B valuation.

The U.S. will now require data centers nationwide to disclose detailed information about their energy use through a forthcoming mandatory survey, Wired reports.

French President Emmanuel Macron is urging EU leaders to adopt a unified set of rules to limit minors’ access to social media and better protect children online.

Spotify now lets users in the U.S. and UK buy physical books from audiobook pages in its app via a “Get a copy for your bookshelf” button that redirects to Bookshop.org.

Amazon-backed nuclear startup X-energy is eyeing up to $814M in its U.S. IPO at a $7.5B valuation to fund deployment of its small modular reactors.

A lightweight Van Rysel skinsuit with an integrated airbag rapidly inflates to protect cyclists’ upper bodies in crashes and could reach consumers within two years.

The FCC granted Netgear an exemption from its ban on foreign-made routers, allowing the company to sell overseas-manufactured devices in the U.S. through 2027.

Blue Origin is rolling out a new stock-option plan that replaces its annual cash bonus program, but many employees see it as unfair treatment of current and former staff.

A cancer-drug compound, JQ1, temporarily shuts down sperm production in mice without hormones, and normal fertility returns after treatment stops.

COMMUNITY

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: OpenAI's superapp hiding inside Codex

Read our last Tech newsletter: Meta closing in on Google ad crown

Read our last Robotics newsletter: Uber’s $10B robotaxi pivot

Today’s AI tool guide: Run an LLM on your laptop for free with Ollama

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — The Rundown’s editorial team

OpenAI's superapp hiding inside Codex


Good morning, AI enthusiasts. OpenAI has been teasing a superapp for months. Today, it shipped a major first piece.

With a major Codex update bringing new features like background computer use, an in-app browser, parallel agents, and more, the rollout is OpenAI's clearest step yet toward the all-in-one platform it's been building out in the open.

In today’s AI rundown:

OpenAI’s superapp shift with Codex update

Anthropic's Opus 4.7 tops rivals, trails Mythos

Run an LLM on your laptop for free with Ollama

OpenAI’s first science domain-specific model

4 new AI tools, community workflows, and more

LATEST DEVELOPMENTS

OPENAI

🧰 OpenAI’s superapp shift with Codex update

Image source: OpenAI

The Rundown: OpenAI just updated its Codex platform, shifting it from a coding agent to a cohesive ChatGPT + Atlas + Codex app with features like background computer use, parallel agents, an in-app browser, image generation, and more.

The details:

Background computer use lets Codex operate any Mac app on its own, with several agents also able to work at once, even in apps without APIs.

Memory (in preview) now retains preferences and context across sessions, while automations let Codex pick up long-running tasks days later.

An Atlas-powered in-app browser lets developers mark up pages to direct Codex, while inline gpt-image-1.5 creates mockups without switching apps.

Codex hit 3M weekly users with 70% month-over-month growth, and Codex head Thibault Sottiaux said OpenAI is "building the super app out in the open."

Why it matters: Anthropic hit a home run with Claude Code and Cowork, and this is OpenAI’s biggest challenge to it yet, putting Codex on a similar playing field with capabilities that extend far beyond an agentic coding assistant. With the company building a ‘superapp’, this feels like a big first step toward that vision.

TOGETHER WITH LOVABLE

🛠️ Your app idea doesn't need a dev team

The Rundown: Hiring developers or learning to code used to be the only way to bring a product to life. Lovable removes that barrier entirely — its AI builds real, usable apps and websites from a simple text description.

Millions of users are already building with Lovable to:

Go from idea to a functional, customer-ready app in minutes, not months

Launch Shopify stores, admin tools, landing pages, and more with simple prompts

Launch real businesses, validate ideas, and save thousands in dev costs

ANTHROPIC

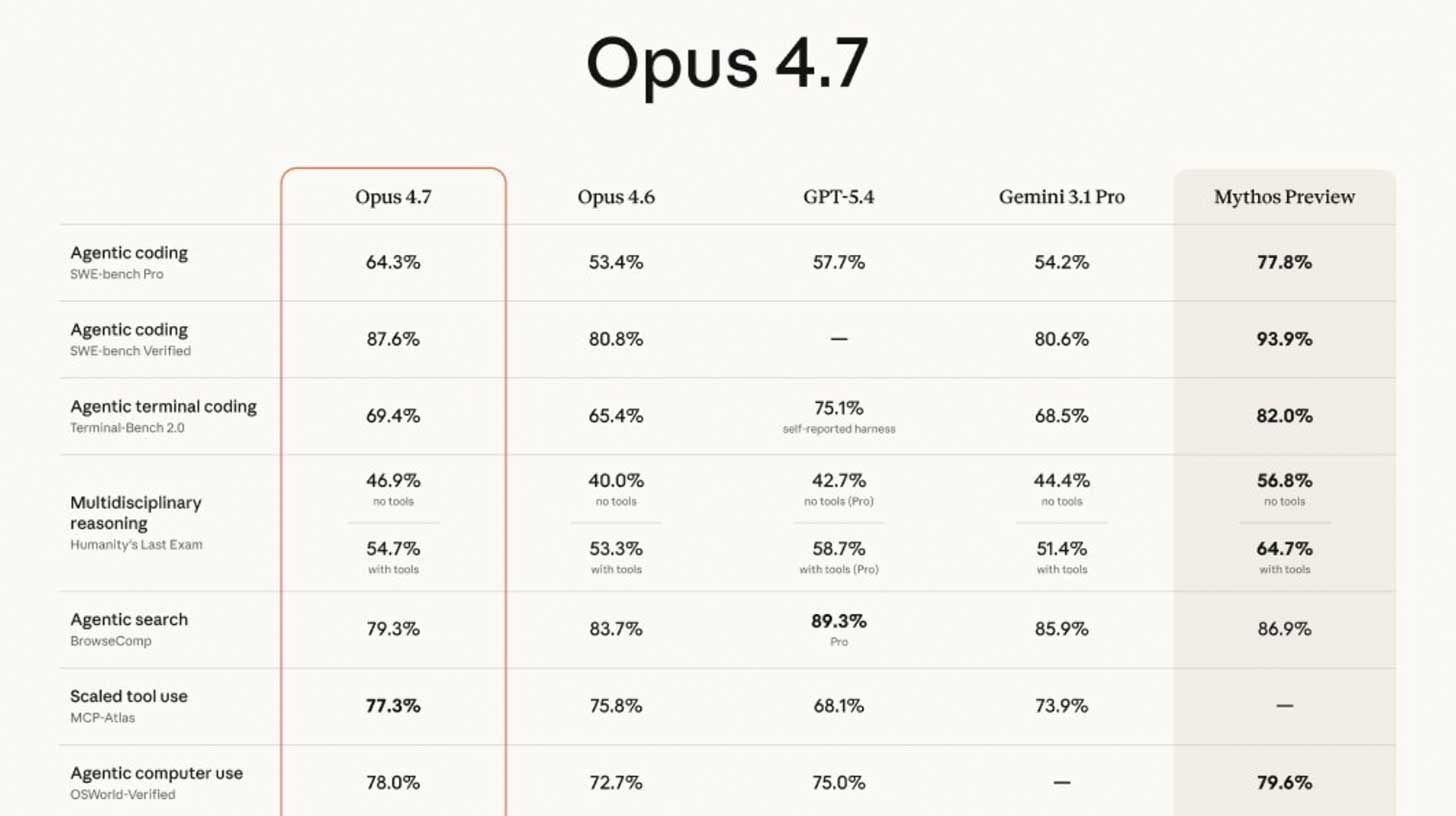

⚙️ Anthropic's Opus 4.7 tops rivals, trails Mythos

Image source: Anthropic

The Rundown: Anthropic just released Claude Opus 4.7, the company’s new flagship publicly available model, which beats GPT-5.4 and Gemini 3.1 Pro on agentic coding but still lags behind the company's own unreleased Mythos Preview.

The details:

Opus 4.7 scores 64.3% on the SWE-bench Pro coding benchmark, up from 4.6's 53.4%, with the gated Mythos Preview still ahead at 77.8%.

The model is priced identically to Opus 4.6 for API usage, though the upgrade uses tokens significantly faster than its predecessor.

Other rollouts include an ‘xhigh’ effort level, between high and max, as the Claude Code default, and an /ultrareview slash command that flags bugs and design issues.

The release comes amid user complaints of degraded performance on 4.6, with early reactions to 4.7 also divided on its capabilities despite the benchmarks.

Why it matters: Anthropic is now running two parallel tracks: a fast 2-month public release cadence and a gated frontier line in Mythos, accessible only to exclusive partners. That split lets the company stress-test its most powerful models, but also marks one of the first times public access feels behind the true frontier.

AI TRAINING

🦙 Run an LLM on your laptop for free with Ollama

The Rundown: In this guide, you will learn how to install Ollama and run a real AI model on your laptop for free. No subscription, no account, and no data leaving your machine.

Step-by-step:

Go to ollama.com/download, get the installer for your Mac, Linux, or Windows PC, and run it. Open the app once it's installed.

In the app, go to New Chat, select a lightweight model like gemma3 (about 3GB, suitable for any 8GB RAM laptop), and wait for it to download

After the model downloads, type a prompt and hit enter. That's it. You're chatting with a real AI running entirely on your laptop

Try turning on airplane mode and sending another message to watch it work with no internet at all

Pro tip: You can use Ollama's API to give your model access to the web and other tools. You can also point a coding agent like Claude Code at the model and run it for free.
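For readers who want to try the API mentioned in the pro tip, here is a minimal Python sketch of the simplest possible call: one prompt in, one reply out. It assumes the Ollama app is running with its local server on the default port (11434) and that gemma3 has already been downloaded. The helper names `build_payload` and `ask` are our own, chosen for illustration, and this covers only a basic text request, not web or tool access.

```python
import json
import urllib.request

# Assumption: Ollama's local server is listening on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_payload(prompt: str, model: str = "gemma3") -> dict:
    """Build the JSON body for a single, non-streaming completion."""
    # "stream": False asks for one complete JSON reply instead of chunks.
    return {"model": model, "prompt": prompt, "stream": False}


def ask(prompt: str, model: str = "gemma3", url: str = OLLAMA_URL) -> str:
    """Send the prompt to the local Ollama server and return the reply text."""
    body = json.dumps(build_payload(prompt, model)).encode("utf-8")
    request = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        # The generated text is returned under the "response" key.
        return json.loads(response.read())["response"]


# Example (needs the Ollama app running in the background):
# print(ask("Explain what a local LLM is in one sentence."))
```

Because the request goes to localhost, it should keep working in airplane mode, just like the chat window in the steps above.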

PRESENTED BY FIDDLER

💸 Cut the trust tax of evaluating AI agents at scale

The Rundown: Evaluating agents with external LLMs looks affordable. Until your agent traffic grows. Fiddler’s guide breaks down how to reduce Total Cost of Ownership while eliminating risk gaps in production.

Learn how to:

Evaluate every trace without sampling

Evaluate agents in-environment with batteries-included Trust Models

Reduce API costs at scale

OPENAI

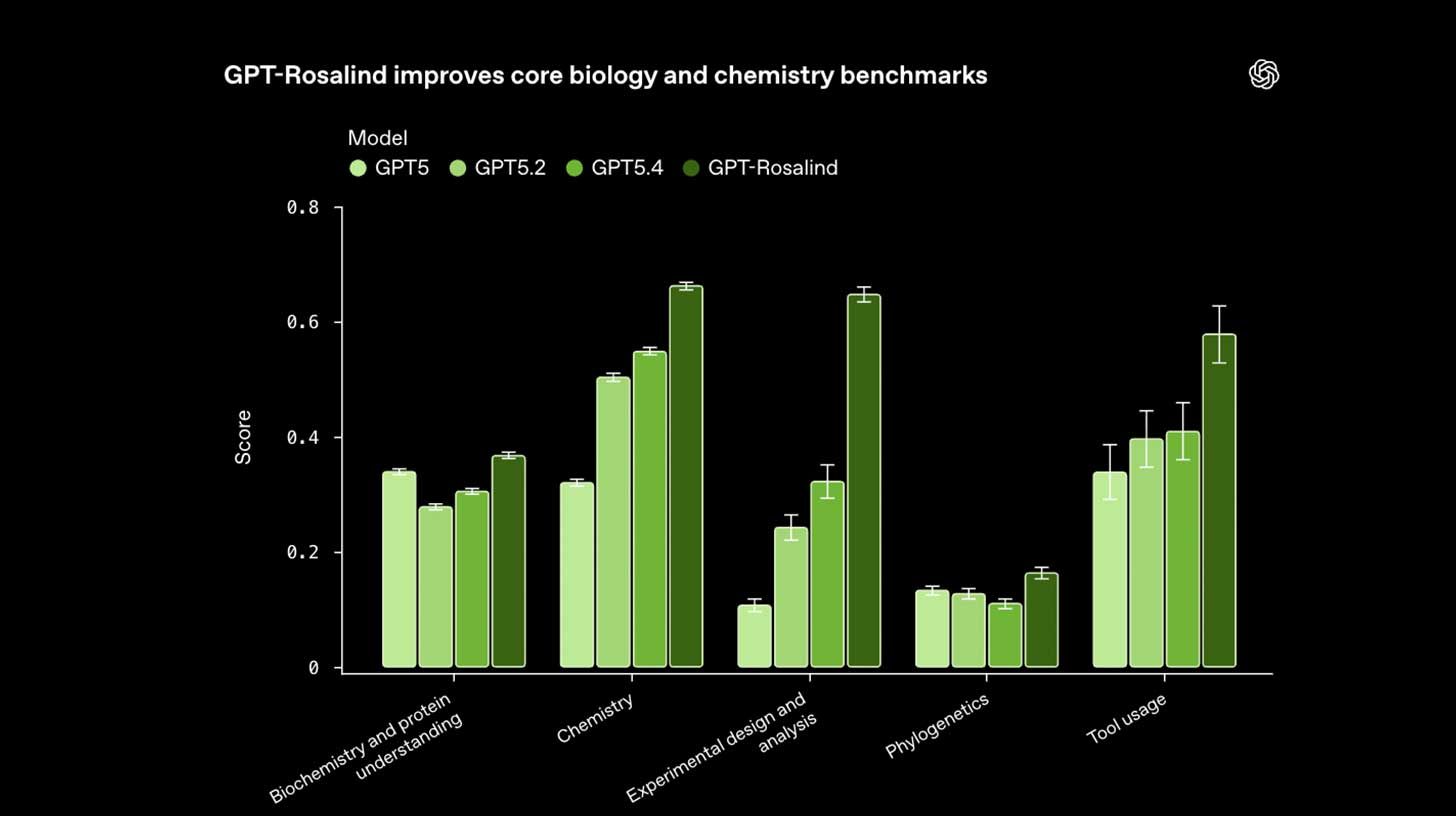

🧬 OpenAI’s first science domain-specific model

Image source: OpenAI

The Rundown: OpenAI launched GPT-Rosalind, the first model in a new life sciences series built for drug discovery and biological research, and the company's first real step into domain-specialized reasoning — following Tuesday's GPT-5.4-Cyber.

The details:

Rosalind can read scientific papers, query lab databases, design experiments, and generate biological hypotheses, simplifying the research process.

The model shows strong gains over GPT-5.4 on science-specific benchmarks covering biochemistry, experiment design, tool usage, and more.

On a blind RNA test from gene therapy lab Dyno Therapeutics, Rosalind's answers scored better than 95% of human scientists on prediction tasks.

The model is available to qualifying enterprise users during the test phase, with companies like Amgen, Moderna, and the Allen Institute already using it.

Why it matters: Tuesday, it was GPT-5.4-Cyber, and today, it's GPT-Rosalind. That's two domain models in three days, and it points to a trend: the flagship may be good at everything, but the highest-value work at the top of fields like network defense or drug design may need purpose-built models.

QUICK HITS

🛠️ Trending AI Tools

🤖 Claude Opus 4.7 - Anthropic’s new top AI with advanced agentic coding

⚙️ Windsurf 2.0 - Agentic IDE with new command center, Devin cloud agent

🚀 Codex - OAI's coding agent, now with computer use, in-app browser, more

🧠 HY-World 2.0 - Tencent’s world model that creates interactive 3D scenes

📰 Everything else in AI today

Perplexity rolled out Personal Computer, a Max-tier Mac app that runs agents across 20+ frontier models to drive native apps, read files, and pilot its Comet browser 24/7.

Windsurf launched 2.0, adding an Agent Command Center for managing fleets of parallel cloud and local agents and bringing Devin into the IDE.

Tencent's Hunyuan team open-sourced HY-World 2.0, a world model that generates editable 3D scenes with physics-aware movement, pushing directly into 3D pipelines.

The U.S. government is reportedly preparing to give certain agencies access to Anthropic’s Mythos AI, despite the blacklist and current legal battle with the company.

Alibaba’s ATH team introduced Happy Oyster in beta, a new world model that can create interactive 3D environments on the fly from multimodal inputs.

COMMUNITY

🤝 Community AI workflows

Every newsletter, we showcase how a reader is using AI to work smarter, save time, or make life easier.

Today’s workflow comes from reader Jerry G. in Gig Harbor, WA:

"I'm a 73-year-old author and screenwriter. I have been using Claude to build my last two books. Claude helped design the book covers, formatted the interior, and even suggested titles for my stories.

Now that they are printed, Claude helps me market them to book clubs, libraries, and social media sites. Claude helped me build a Substack following and post bi-weekly new stories on Instagram and BlueSky. I'm a Claude convert and won't write any more books without his assistance."

How do you use AI? Tell us here.

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Allbirds ditches sneakers for AI compute

Read our last Tech newsletter: Meta closing in on Google ad crown

Read our last Robotics newsletter: Uber’s $10B robotaxi pivot

Today’s AI tool guide: Run an LLM on your laptop for free with Ollama

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — the humans behind The Rundown

