Get the latest AI news, understand why it matters, and learn how to apply it in your work, all in just 5 minutes a day. Join 2,000,000+ subscribers.

Grokipedia is here, like it or not

Read Online | Sign Up | Advertise

Good morning, tech enthusiasts. Elon Musk’s xAI just rolled out Grokipedia, an AI-forged remix of Wikipedia and his latest move to upend how “neutral” knowledge gets made online.

The site so far features skeletal entries, minimal design, and a mysterious edit system with no names attached. Musk calls it already better than Wikipedia — but what’s really under the hood?

In today’s tech rundown:

Elon Musk’s Grokipedia is here

Amazon to slash 14K corporate jobs

ChatGPT’s accidental suicide hotline problem

Tesla’s end-to-end AI for robotaxis, robots

Quick hits on other tech news

LATEST DEVELOPMENTS

XAI

📖 Elon Musk’s Grokipedia is here

Image source: xAI

The Rundown: Elon Musk’s xAI just launched Grokipedia, a Wikipedia-like encyclopedia and the latest salvo in Musk's culture war against perceived "woke bias" — but it appears to be built on the bones of the very platform he's trying to replace.

The details:

Version 0.1 surfaced more than 885K articles by Monday evening, versus Wikipedia’s 7+ million in English; Musk says rapid iterations are forthcoming.

The site ships with a stark, search-first UI and bare-bones entries that mimic Wikipedia’s structure — yet so far, little to no imagery.

Editing remains gated: an "Edit" button materializes on select pages only, revealing a changelog of completed edits stripped of clear attribution.

Musk is pitching a fast update: a 1.0 “10X better” release, with the claim that the current site already beats Wikipedia.

Why it matters: Musk positions Grokipedia as an AI‑led, less‑biased alternative that will “pursue the truth,” reflecting his critiques of Wikipedia’s alleged left-wing tilt. Yet the launch leans heavily on Wikipedia’s look and content, and some entries are labeled as ‘adapted’ from it. Plus, Grok’s “fact-checking” claim raises some practical questions.

AMAZON

📦 Amazon to slash 14K corporate jobs

Image source: Creative Commons Attribution 4.0

The Rundown: Amazon is set to cut 14K corporate roles starting Tuesday — below the 30K initially reported but still a major downsizing as CEO Andy Jassy's cost-cutting campaign continues. Notifications arrive via email.

The details:

The retail giant’s last major round of job cuts was at the end of 2022 and into 2023, when 27K positions were axed.

Leadership is selling the cuts as cost-cutting and a correction to pandemic-era bloat, while flattening management hierarchies that inflated over the years.

Initial reports stated that 14K roles would be eliminated now, with another wave likely in January once the holiday crunch ends, a claim Amazon disputes.

HR, Devices & Services, and Operations take the heaviest hits as Amazon redirects capital toward data centers and generative AI infrastructure.

Why it matters: CEO Andy Jassy has been telegraphing this for months, telling staffers in June that generative AI would mean "fewer people doing some of the jobs that are being done today." Slashing thousands of corporate staff certainly gets the message across that Amazon thinks AI infrastructure matters more than headcount.

OPENAI

⛔️ ChatGPT’s accidental suicide hotline problem

Image source: Creative Commons Attribution 2.0

The Rundown: OpenAI released new data showing just how many ChatGPT users are in crisis: 0.15% of weekly active users have conversations that include explicit indicators of suicidal planning or intent. With 800M weekly users, that's over a million people every week.

The details:

Another 0.15% display what OpenAI calls "heightened emotional attachment" to ChatGPT, while hundreds of thousands more exhibit signs of psychosis.

OpenAI says the more than 170 mental health experts it brought in to vet the updates found that ChatGPT now "responds more appropriately" than earlier versions.

ChatGPT's default GPT-5 model now includes upgraded distress detection designed to de-escalate and funnel users toward crisis resources, OpenAI says.

OpenAI says high-risk conversations get rerouted to safer models, while marathon chat sessions trigger gentle "take a break" prompts.

Why it matters: OAI is playing therapist-by-proxy at an unprecedented scale, deploying triage protocols, such as crisis hotline redirects and pathways for high-risk users, while insisting ChatGPT isn't a substitute for mental health professionals. But more than a million people in suicidal crisis each week are already treating it like one, and that’s not likely to change.
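
To make the scale concrete, here is a minimal sketch of the arithmetic behind the headline figure. It uses only the two numbers quoted in this story (800M weekly users and a 0.15% share); the function name `users_from_share` is our own, chosen for illustration.

```python
def users_from_share(weekly_users: int, share_percent: float) -> int:
    """Convert a percentage of weekly users into an approximate head count."""
    return round(weekly_users * share_percent / 100)


WEEKLY_USERS = 800_000_000  # weekly active users, as quoted above

# 0.15% of weekly users show explicit indicators of suicidal planning or intent
suicidal_planning = users_from_share(WEEKLY_USERS, 0.15)
print(f"{suicidal_planning:,} people per week")
```

The same function applies to the other 0.15% group (heightened emotional attachment), which by the same arithmetic is roughly as large.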

TESLA

🚘 Tesla’s end-to-end AI for robotaxis, robots

Image source: Tesla / X

The Rundown: Tesla's AI chief revealed at ICCV how the company's camera-only FSD system processes massive fleet data through end-to-end neural nets to control vehicles, with plans to use the same brain for both robotaxis and Optimus robots.

The details:

Tesla's approach is pure vision: millions of cars feeding multi-camera data into a neural net that outputs direct driving commands.

The system includes "auxiliary heads" that expose its reasoning process, plus real-time 3D scene reconstruction that maps the world as it drives.

A learned world simulator generates rare, dangerous scenarios, like pedestrians darting into traffic, that could take years to capture naturally.

Tesla plans to scale the same neural architecture globally for robotaxis and port it directly to the Optimus humanoid.

Why it matters: A single vision‑only brain trained on millions of real‑world drives could let Tesla ship one model that powers robotaxis today and humanoids tomorrow. But if camera‑only perception and simulated edge cases miss safety‑critical failures, those blind spots won’t just affect cars but every Optimus that steps onto a factory floor.

QUICK HITS

📰 Everything else in tech today

PayPal struck a partnership with OpenAI to embed its wallet in ChatGPT, enabling instant in-chat checkout with buyer/seller protections, plus card payment processing.

Apple says it will soon let U.S. iPhone users create a passport‑based Digital ID in Apple Wallet for use at select TSA checkpoints.

Swiss drugmaker Novartis will acquire U.S. biotech Avidity Biosciences for about $12B in cash to bolster its pipeline of therapies for rare muscle disorders.

Apple is planning to place ads on Apple Maps next year, letting local businesses buy search placement, Bloomberg reports.

Qualcomm unveiled new AI accelerator chips to challenge Nvidia, sending its shares up 11% on Monday.

X is killing off Twitter.com and forcing users who log in with hardware security keys or passkeys to re‑enroll those credentials by November 10.

Satya Nadella’s pay jumped 22% to $96.5M — about $84M in performance stock and ~$9.5M in cash — even as Microsoft cut more than 15K jobs this year.

Instagram now lets you revisit previously watched Reels with a new Watch History feature, catching up to TikTok’s long-standing version.

Threads is rolling out “ghost posts,” disappearing updates that auto‑archive after 24 hours to encourage more unfiltered sharing without the pressure of permanence.

Google is releasing a redesigned Fitbit app anchored by a Gemini‑powered Personal Health Coach that adapts plans to your progress and surfaces smarter weekly insights.

OnePlus launched the OnePlus 15 in China with Qualcomm’s Snapdragon 8 Elite Gen 5 and a 165Hz display, promising a global rollout “soon” after months of teasers.

OpenAI will make its sub-$5 ChatGPT Go plan free for one year to new and existing users in India during a limited-time promo starting November 4.

China unveiled BI Explorer (BIE‑1), a desk‑side “brain‑like” computing device that its developers tout as the world’s first supercomputer that can fit under a desk.

COMMUNITY

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Anthropic's Claude comes to Excel

Read our last Tech newsletter: Apple may strike space deal with Musk

Read our last Robotics newsletter: Nike’s robotic sneaker

Today’s AI tool guide: Create a Claude Skill that designs YT thumbnails

RSVP to next workshop @ 4PM Thursday: Build pro PPTs with Claude Skills

See you soon,

Rowan, Jennifer, and Joey—The Rundown’s editorial team

Anthropic's Claude comes to Excel

Good morning, AI enthusiasts. Every AI company wants to conquer spreadsheets, and Anthropic just made a bet on where the real money lives: finance.

With Claude's new Excel integration shipping alongside a slew of connectors and finance-specific Skills, AI’s Wall Street ambitions just gained a powerful new analyst.

In today’s AI rundown:

Anthropic brings Claude directly into Excel

Odyssey-2 turns AI video into real-time interaction

Create a Claude Skill to design YouTube thumbnails

OpenAI’s GPT-5 to better handle mental health crises

4 new AI tools, community workflows, and more

LATEST DEVELOPMENTS

AI RESEARCH

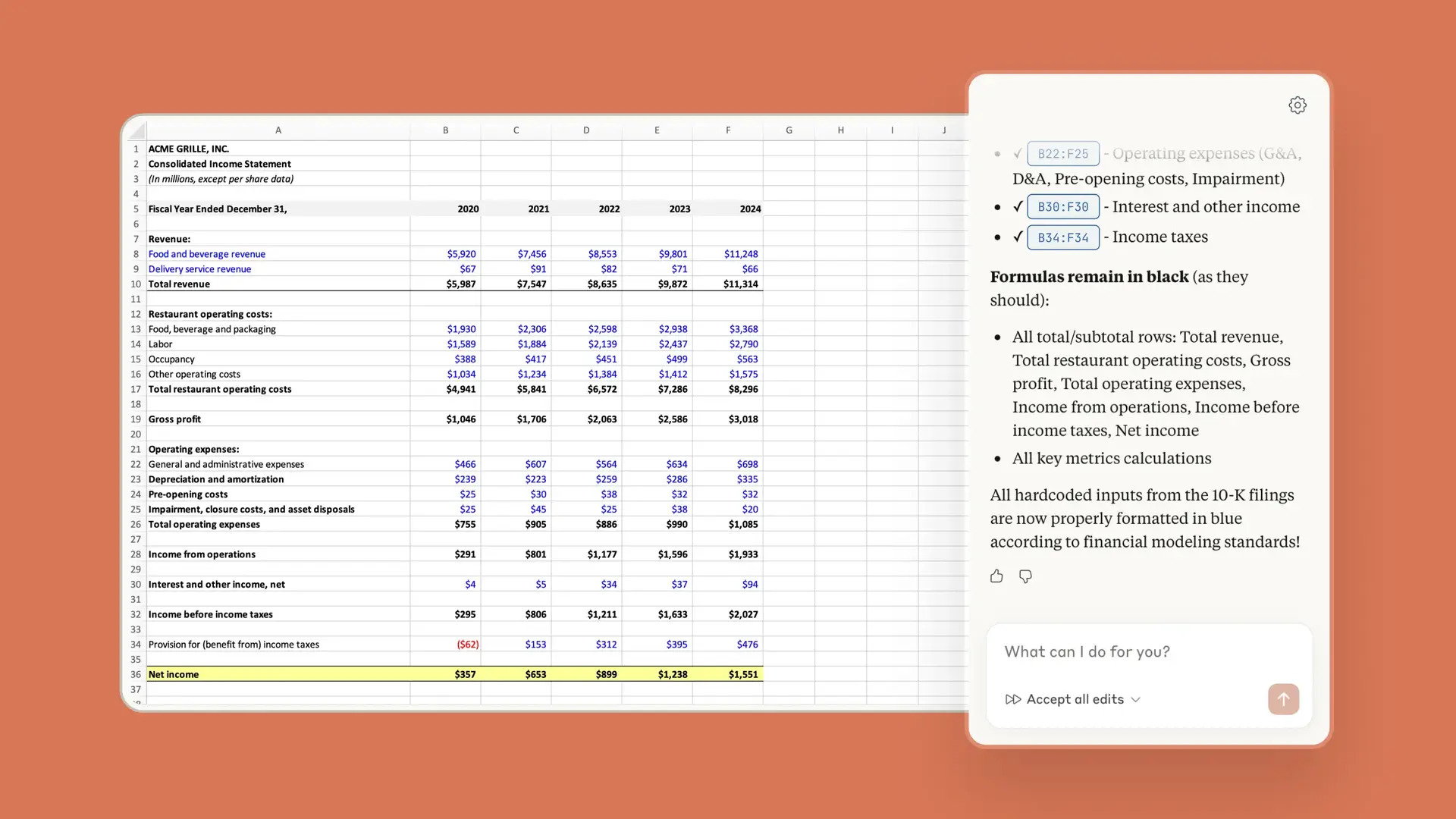

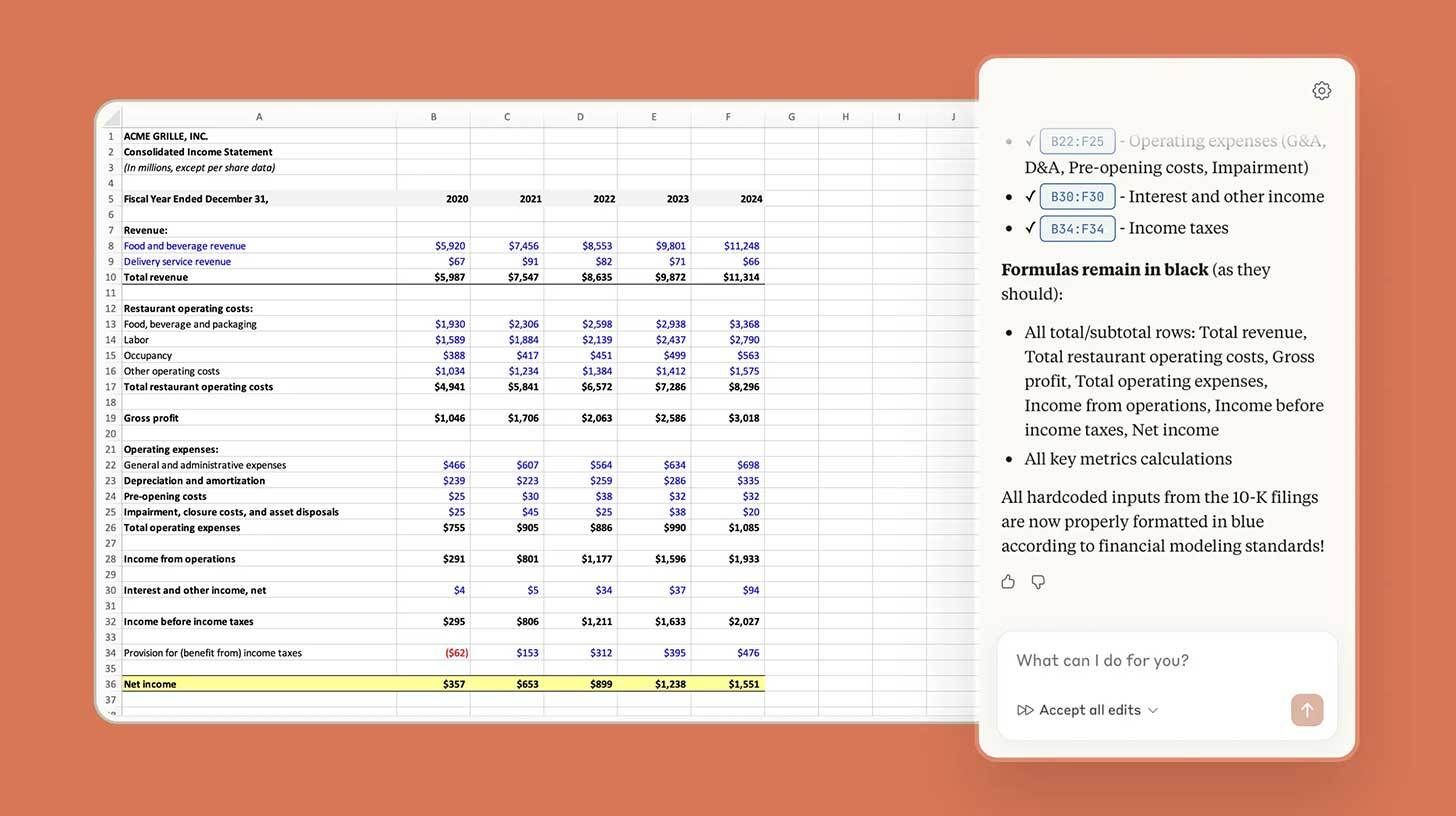

📊 Anthropic brings Claude directly into Excel

Image source: Anthropic

The Rundown: Anthropic just released Claude for Excel in beta, letting users interact with the AI assistant through a sidebar that can read, analyze, and modify spreadsheets, alongside new connectors and Skills for the financial industry.

The details:

The integration allows Claude to explain spreadsheets, fix formulas, populate templates with new data, or build new workbooks from scratch.

Seven new connectors link Claude to financial platforms like Aiera for earnings call transcripts, LSEG for live market data, and Moody's for credit ratings.

Anthropic also debuted several new finance-specific Agent Skills, including building cash flow models, company analysis, coverage reports, and more.

Claude for Excel and the new Skills are rolling out as a research preview to Max, Enterprise, and Teams subscribers before a broader release.

Why it matters: AI for spreadsheets has been a hot topic over the last few months, with ChatGPT, Copilot, and now Claude all pushing solutions. While the others have been more agent-focused, Claude’s native Excel access and financial connectors may bring new reliability to an area still waiting for a true AI breakthrough.

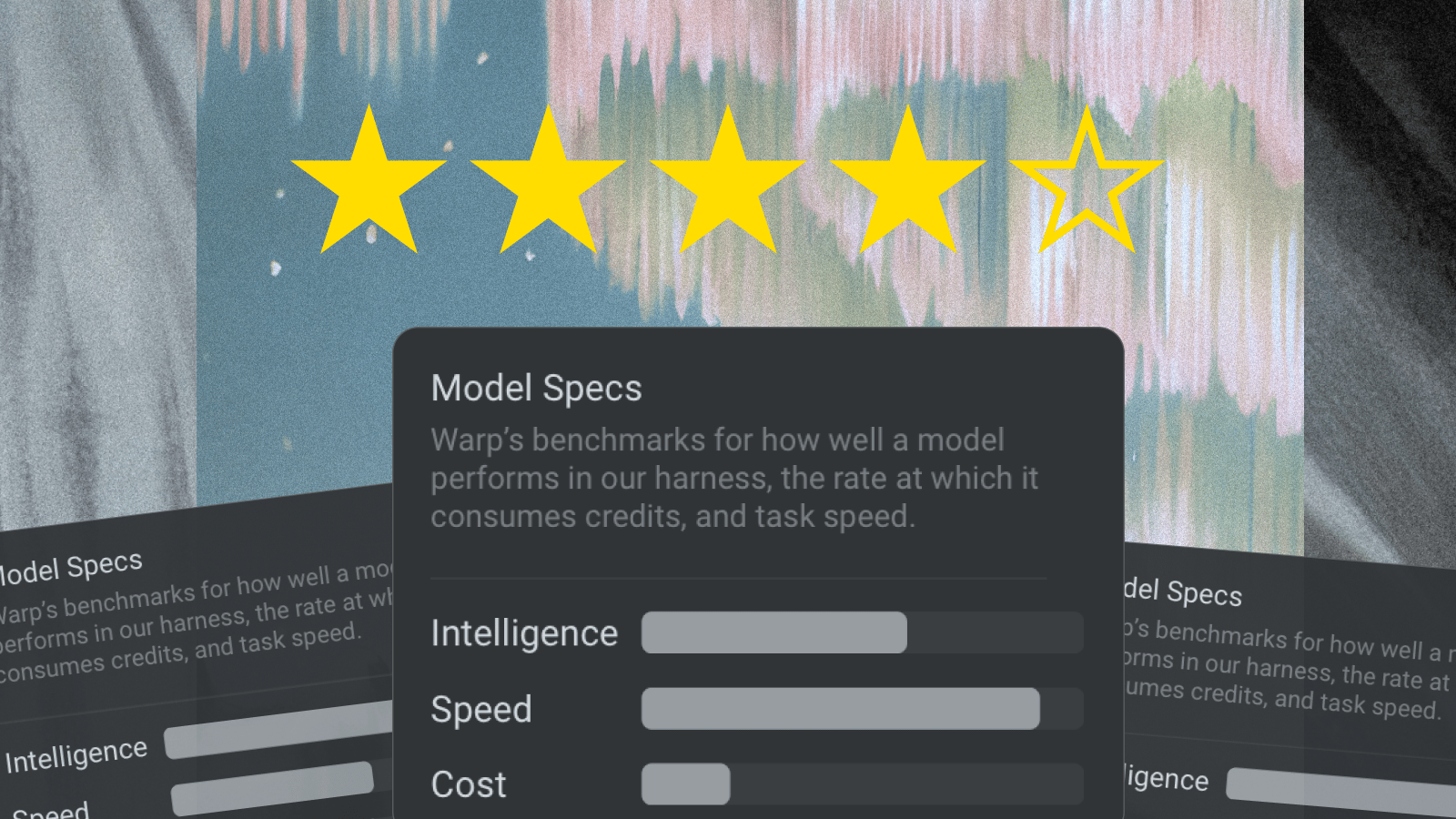

TOGETHER WITH WARP

🧠 Know your model before you code

The Rundown: We tested every top coding model so you don’t have to. Warp rates models by intelligence, cost, and speed, drawing from SWE-bench Verified scores, per-token efficiency, and time-to-first-token. Choose the right model for your specific coding task, without getting lost in the data.

With Warp, you get:

Access to top models like Opus, Sonnet, and GPT-5

Transparent ratings for cost, speed, and quality

Free AI-trial with full model access

Download Warp for free, and get bonus credits for your first week.

ODYSSEY

🎥 Odyssey-2 turns AI video into real-time interaction

Image source: Odyssey

The Rundown: Odyssey just launched Odyssey-2, a new interactive video model that generates streaming AI footage at 20 frames per second, allowing users to shape and control multi-minute videos through text prompts as they explore the scene.

The details:

Unlike other video models that take minutes to produce short clips, Odyssey-2 streams footage immediately, with new frames appearing every 50 milliseconds.

The system generates video segments without planning, with each new frame based on what has already happened and what users prompt in real-time.

Users can direct the video's evolution through natural language prompts while it plays via a chat box, with the AI continuously adapting to each input.

The model learns physics and dynamics from video data, enabling it to simulate realistic behaviors like waves moving across water or light shifting on surfaces.

Why it matters: Odyssey-2 feels very different from interactions with other AI video platforms out there, closer to a hybrid world generator than a Veo or Sora. While the quality may not be as impressive as other models, the real-time, open-ended exploration feels like a concept with a ton of potential for new content experiences.
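
The 20 frames per second and 50 millisecond figures above are two views of the same number. A quick sketch of the conversion, assuming a constant frame rate (the helper names are ours, not Odyssey's):

```python
def frame_interval_ms(fps: float) -> float:
    """Time between consecutive frames, in milliseconds."""
    return 1000.0 / fps


def frames_for_duration(fps: float, seconds: float) -> int:
    """Number of frames needed to fill a clip of the given length."""
    return int(fps * seconds)


print(frame_interval_ms(20))         # interval at 20 frames per second
print(frames_for_duration(20, 120))  # frames in a two-minute stream
```

At that rate, a two-minute interactive session means the model has to produce a couple of thousand frames back to back, each within its 50 ms budget.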

AI TRAINING

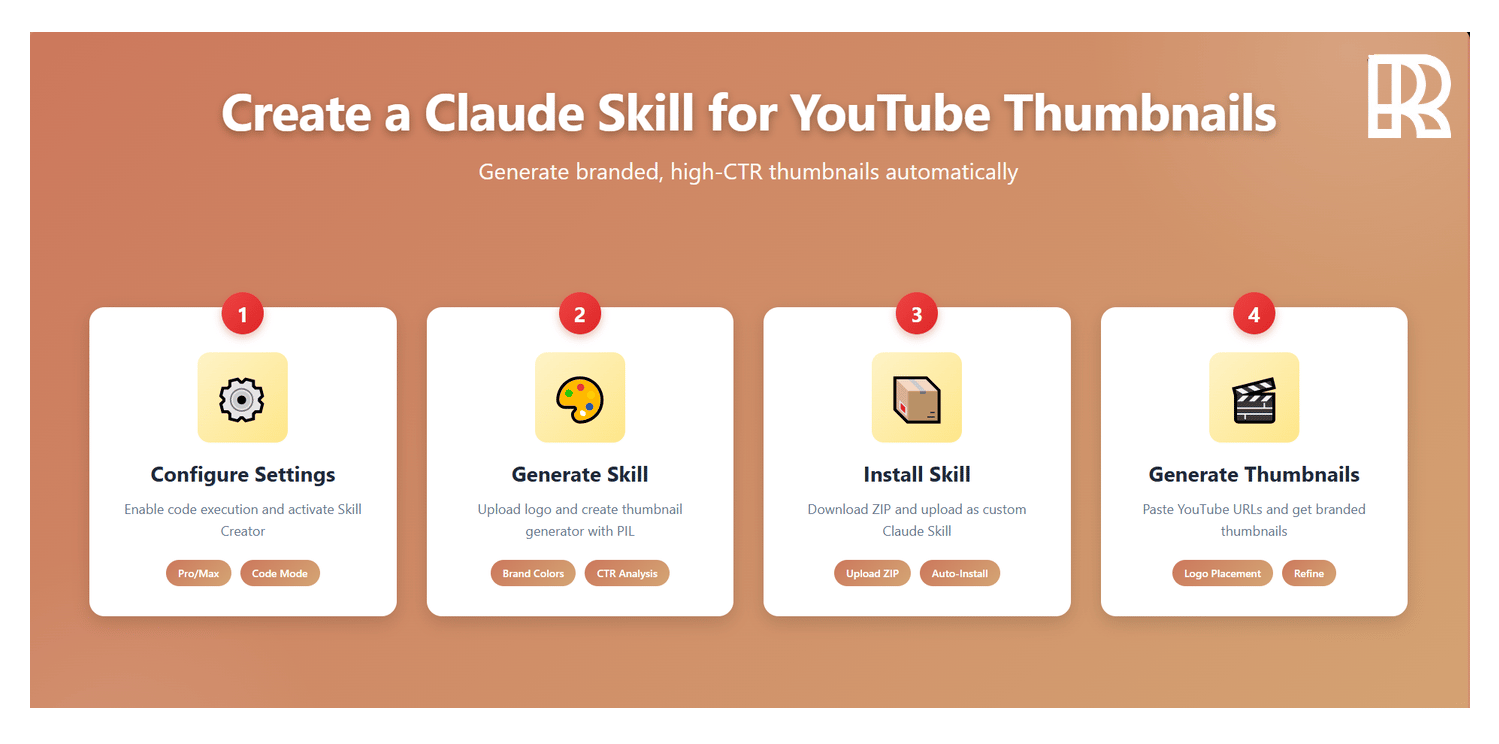

🤩 Create a Claude Skill to design YouTube thumbnails

The Rundown: In this tutorial, you will learn how to build a custom Claude Skill that automatically generates branded YouTube thumbnails by analyzing top-performing videos and applying your brand guidelines.

Step-by-step:

Go to Claude Settings → Capabilities, enable "Code execution & file creation", then scroll to Skills section, toggle on "skill-creator" and click "Try in Chat"

Start chat, upload your logo, prompt: "Create a skill that generates high CTR thumbnails. It should analyze YouTube video links, generate thumbnails using the PIL library with a logo in the top-right corner. Use Black, White, Blue colors"

Download the generated ZIP file, go to Profile → Settings → Capabilities → Upload Skill, drop the ZIP to install your Thumbnail Generator Skill

Start new chat, prompt: "Generate YouTube thumbnail for 'AI Productivity Tools 2025'. Here are the top videos: [paste URLs]. Create a high-CTR thumbnail with logo top-right using brand colors"

Refine output with follow-ups like "Use Roboto font" or "Make text bolder with Sans Serif" until you get the perfect thumbnail

Pro tip: Use this approach to create other productivity Skills, such as a presentation generation Skill for automated slide decks or an email marketing campaign Skill.
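
For a sense of what the generated Skill might do under the hood, here is a rough sketch using PIL (the Pillow package). It is not the code Claude produces. The 1280x720 canvas, the RGB values for the brand colors, the bottom accent bar, and the `make_thumbnail` helper are all assumptions for illustration, and a plain blue square stands in for your uploaded logo.

```python
from PIL import Image, ImageDraw, ImageFont

# Brand palette from the prompt (black, white, blue); exact RGB values are assumed.
BLACK, WHITE, BLUE = (0, 0, 0), (255, 255, 255), (0, 102, 255)


def make_thumbnail(title, logo, size=(1280, 720), margin=40):
    """Draw a title on a black canvas and paste the logo in the top-right corner."""
    width, height = size
    canvas = Image.new("RGB", size, BLACK)
    draw = ImageDraw.Draw(canvas)
    # Blue accent bar along the bottom edge.
    draw.rectangle([0, height - 60, width - 1, height - 1], fill=BLUE)
    # The default bitmap font keeps the sketch self-contained;
    # swap in ImageFont.truetype(...) with a real font file for brand typography.
    draw.text((margin, height // 2), title, fill=WHITE, font=ImageFont.load_default())
    # Top-right placement: measure x from the right edge.
    x = width - logo.width - margin
    mask = logo if logo.mode == "RGBA" else None  # respect logo transparency
    canvas.paste(logo, (x, margin), mask)
    return canvas


# Stand-in logo so the sketch runs without an uploaded file.
logo = Image.new("RGBA", (120, 120), BLUE + (255,))
thumb = make_thumbnail("AI Productivity Tools 2025", logo)
# thumb.save("thumbnail.png")  # uncomment to write the file
```

Follow-up requests like "Use Roboto font" would map to changing the font passed to `draw.text`.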

PRESENTED BY YOU.COM

💸 Learn how to make every AI investment count

The Rundown: Successful AI transformation starts with deeply understanding your organization’s most critical use cases. You.com’s AI Use Case Discovery Guide walks through a proven framework to identify, prioritize, and document high-value AI opportunities.

In the guide, you’ll learn how to:

Map internal workflows and customer journeys to pinpoint where AI can drive measurable ROI

Ask the right questions when it comes to AI use cases

Align cross-functional teams and stakeholders for a unified, scalable approach

Get the Guide.

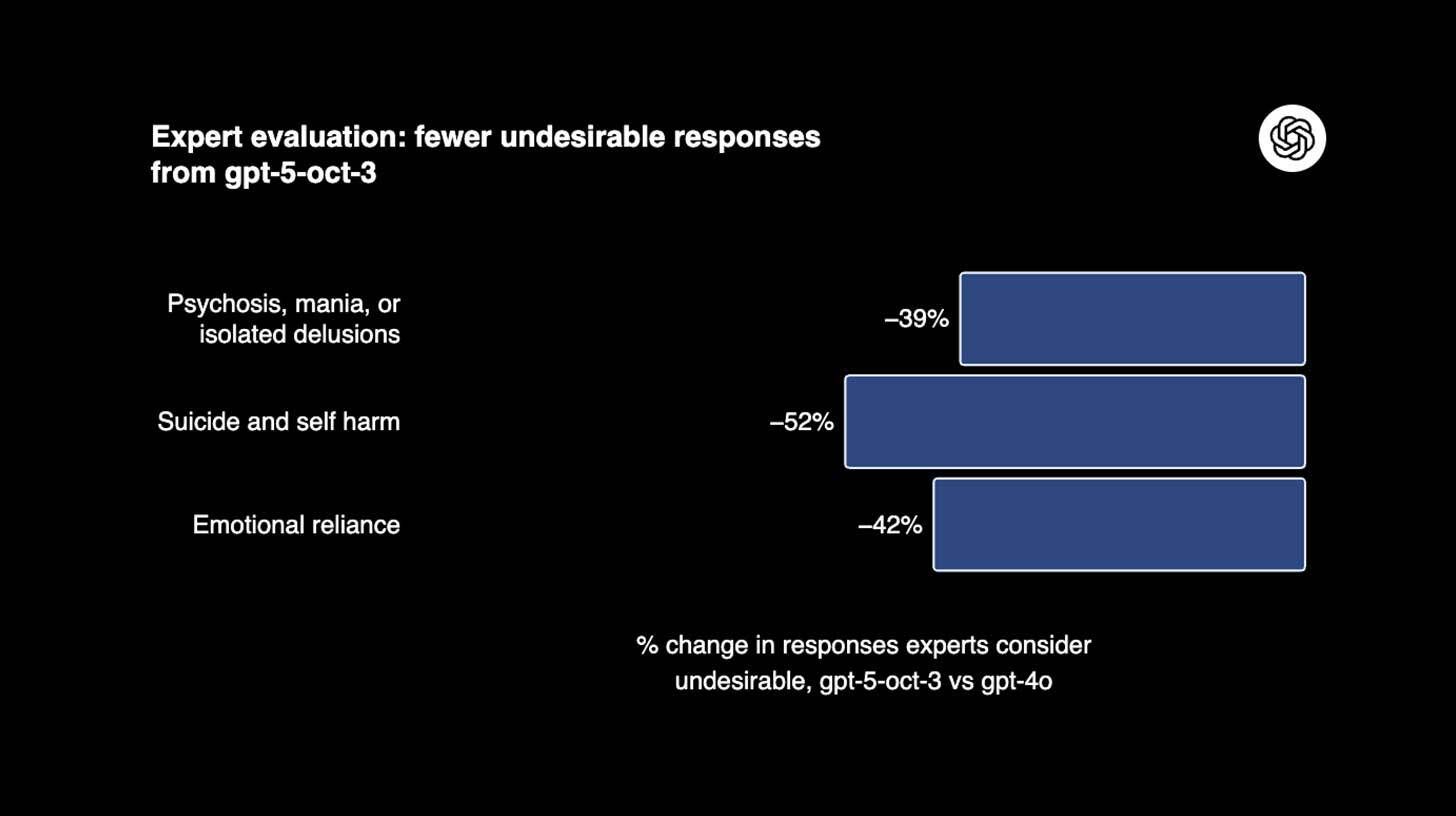

OPENAI

🧠 OpenAI’s GPT-5 to better handle mental health crises

Image source: OpenAI

The Rundown: OpenAI just rolled out major updates to GPT-5 designed to better recognize and respond to users experiencing mental health emergencies, after consulting with over 170 mental health professionals across dozens of countries.

The details:

The updated model significantly reduces problematic responses, with clinicians rating GPT-5 as 91% compliant with mental health protocols vs. 77% for 4o.

OpenAI said it trained GPT-5 to express empathy without reinforcing delusional beliefs, also fixing an issue where safeguards degraded during long chats.

OAI said roughly 0.07% of its 800M weekly active users show signs of potential psychosis or mania, translating to millions of concerning conversations.

The updates come amid mounting legal pressure, including lawsuits from families and warnings from state officials about protecting vulnerable users.

Why it matters: The amount of mental health chats being fielded by AI is staggering, and is a difficult situation for OAI to moderate, with some cases likely benefiting from the tool and others leading to even worse spirals. While it’s clear positive steps are being taken, it’s a segment of AI use without a clear-cut ‘correct’ path forward.

QUICK HITS

🛠️ Trending AI Tools

🔐 Incogni - Remove your personal data from the web so scammers and identity thieves can’t access it. Use code RUNDOWN to get 55% off for annual plans*

💼 Claude for Excel - Anthropic’s new research preview for AI in spreadsheets

💭 Odyssey 2 - Instant and interactive AI video generation

🏗️ Mistral AI Studio - New platform for going from AI prototypes to production

*Sponsored Listing

📰 Everything else in AI today

MiniMax released M2, an AI that ranks as the strongest open model (and #4 on Artificial Analysis’ Intelligence Index), excelling in agentic tasks, tool use, and STEM.

Qualcomm unveiled its AI200 chip for data centers that aims to compete with Nvidia, with Saudi AI startup Humain revealed as the first customer for the new accelerators.

AMD and the U.S. Department of Energy announced a $1B partnership to build two supercomputers to accelerate research in areas like energy, medicine, and security.

AI hiring startup Mercor raised $350M in funding at a $10B valuation, benefiting from a pivot to data-labeling following Meta's acquisition of a stake in competitor Scale AI.

Expense management platforms report that AI-faked receipts now account for up to 14% of fraudulent submissions, with companies detecting over $1M in falsified invoices.

COMMUNITY

🤝 Community AI workflows

Every newsletter, we showcase how a reader is using AI to work smarter, save time, or make life easier.

Today’s workflow comes from reader Christopher S. in Houston, TX:

"I recently came across a sales development agent. As an independent business loan broker, prospecting and lead nurturing take 60% of my time. This SDR, at no cost for now, prospects, researches, emails, and follows up with 150 new contacts a day. My pipeline of applications has grown from 5-10 to over 45-50 a month, and the SDR found and helped me close my first and second multimillion-dollar loans."

How do you use AI? Tell us here.

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: The ‘Meta-fication’ of OpenAI

Read our last Tech newsletter: Apple may strike space deal with Musk

Read our last Robotics newsletter: Nike’s robotic sneaker

Today’s AI tool guide: Create a Claude Skill that designs YT thumbnails

RSVP to next workshop @ 4PM Thursday: Build pro PPTs with Claude Skills

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — the humans behind The Rundown

Nike's robotic sneaker

Good morning, robotics enthusiasts. Nike just powered up walking. Project Amplify wraps your leg in a robotic brace that adds motor-driven push to every step — think pedal-assist, but for your stride.

It's clunky, conspicuous, and might actually make your walking commute a lot more fun.

In today’s robotics rundown:

Nike’s robot sneaker for power walks

Humanoids have a big ‘hands problem’

SoftBank on the ‘hunt’ for humanoids

AgiBot’s zero-code tool turns video into motions

Quick hits on other robotics news

LATEST DEVELOPMENTS

NIKE

👟 Nike’s robot sneaker for power walks

Image source: Nike

The Rundown: Nike just debuted its first “powered footwear” with Project Amplify, a robotic exoskeleton designed to boost your walking speed for faster commutes, like having a second set of calf muscles, Nike says.

The details:

The powered footwear system integrates a lightweight motor, drive belt, and rechargeable cuff battery with a carbon-fiber-plated runner.

A calf-mounted battery feeds a hinged arm into a heel socket, delivering assist at the ankle to help you go faster with less effort in walking and running.

Nike is aiming at everyday athletes rather than elites, targeting roughly 10–12-minute-mile paces and commuter mileage, not race-day PRs.

Still in testing, Amplify is slated for a broad consumer release in the coming years, with one roadmap pointing to a 2028 launch window.

Why it matters: If Amplify reaches scale, Nike aims to do for walking what e-bikes did for cycling — normalizing motor-assist as everyday infrastructure. The collaboration with robotics startup Dephy marks the jump from shoes that return energy to shoes that inject it, potentially developing a new category of propulsion you strap to your feet.

HUMANOIDS

👌🏼 Humanoids have a big ‘hands problem’

Image source: Ideogram / The Rundown

The Rundown: Humanoids are moving from demos to factory pilots, but the bottleneck isn’t walking — it’s a “hands problem” that separates show bots from robots that can wrench, wire, and swap tools on real lines, reports the Wall Street Journal.

The details:

Current humanoids can walk and lift, but lack the finger‑level dexterity, tactile sensing, and in‑hand control needed to wrench, wire, and swap tools.

Teams are splitting on strategy: some chase five-finger dexterity with touch sensing, others bet on optimized three-finger grippers or two-finger pincers.

Engineers point to hard limits in actuators, tendon routing, compliant joints, tactile "skin," and AI that can fuse vision with millisecond-accurate control.

Morgan Stanley estimates that cracking the hands problem could unlock a $5 trillion humanoid market by 2050, spanning industrial and service roles.

Why it matters: Even as bots like Agility's Digit haul bins in warehouses, researchers say hands capable of skilled tool use are years away, not months. Grasping and in-hand control — not locomotion — will decide which humanoids actually scale beyond logistics into the work that requires real manipulation.

SOFTBANK

🤖 SoftBank on the ‘hunt’ for humanoids

Image source: Agility Robotics

The Rundown: SoftBank is reviving its robotics ambitions with targeted stakes in both humanoid hardware and AI — joining Agility Robotics’ reported $400M round, backing platforms like Skild AI, and agreeing to acquire ABB’s robotics unit for $5.4B.

The details:

It explored a $900M Agility Robotics buyout but instead joined a funding round reportedly near $400M at a ~$1.75B valuation.

SoftBank agreed to buy ABB’s robotics unit for about $5.375B, with closing targeted for mid‑to‑late 2026.

SoftBank’s Masayoshi Son is reportedly intensifying his pursuit of investments in humanoid startups as the company’s next growth engine beyond software.

Competitive pressure is mounting as Tesla, Figure, and Boston Dynamics head into pilots while Agility’s Digit scales enterprise trials and capability upgrades.

Why it matters: A decade after SoftBank's "emotional" bot Pepper flopped, the conglomerate is hunting for AI-robotics bets that can actually scale — this time hedging with equity stakes and supply agreements instead of outright acquisitions. If pilots turn into purchase orders, SoftBank sits first in line for units and partnerships.

AGIBOT

📱 AgiBot’s zero-code tool turns videos into motions

Image source: AgiBot

The Rundown: China’s AgiBot launched LinkCraft, a zero‑code “robot director tool” that turns smartphone videos of dances, martial arts, or everyday gestures into precise humanoid motions — no specialized gear or coding required.

The details:

It blends AI motion capture, intelligent retargeting, and cloud imitation learning to map 2D video into 3D robot control trajectories for live performances.

A timeline editor lets creators sequence actions, audio, and expressions, while ‘Speech Choreography’ auto-syncs facial movements from text or voice input.

Multi-robot ‘Group Control’ deploys synchronized routines across multiple units, and a built-in library supplies 180+ actions and 140 expression templates.

It launches on the X2 humanoid and will roll out to A2 and other platforms, aligning with AgiBot's push from demos to real-world deployments.

Why it matters: LinkCraft removes the programming barrier that has confined humanoids to labs and controlled demos. Now, just a smartphone video is enough to program motion. If the tool works as advertised, it shifts the model from engineers scripting every gesture to end users in the driver’s seat.

QUICK HITS

📰 Everything else in robotics today

Meta’s AI chief, Yann LeCun, warns the humanoid boom is prioritizing hardware over cognition, saying most startups have “no idea” how to make robots generally useful.

GM promised “eyes-off” driving for 2028, debuting on the Cadillac Escalade IQ, backed by 600K mapped hands‑free miles and 700M crash-free Super Cruise miles.

Grubhub is rolling out a Jersey City pilot with Avride to deliver Wonder orders via sidewalk robots, the company’s first autonomous delivery effort beyond campuses.

A UK-based 16‑year‑old and his father built a four‑finger, tendon‑driven Lego hand that uses a clutch‑gear differential to synchronize digits for adaptive grasps.

Chinese researchers unveiled an “underwater phantom” jellyfish robot that can blend in to conduct covert missions, intelligent detection, and real-time monitoring.

Korean researchers built OCTOID, a bilayer soft robot using elastomers that fuses color-shifting camouflage with programmable shape-morphing for modular locomotion.

Bonsai Robotics unveiled the Amiga Flex, its first unified platform since the July farm-ng acquisition, bringing accessible autonomy to growers who couldn't afford it before.

A Harvard-led study shows how living muscle, integrated with synthetic scaffolds via advanced biofabrication, could power biohybrid robots that flex and adapt like humans.

Russia’s Rosatom unveiled a “spider robot” that ultrasonically inspects reactor and steam‑generator welds up to 30 cm thick three times faster than traditional methods.

Unitree launched an in-person education program built around its Go2 robot dog to teach operation, maintenance, and applications.

Liuyang, China, set a world record with a 16K-drone night show that drew millions and showed swarms can outdo fireworks on complexity, runtime, and emissions.

COMMUNITY

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: The 'Meta-fication' of OpenAI

Read our last Tech newsletter: Apple may strike space deal with Musk

Read our last Robotics newsletter: Amazon’s massive robot hiring spree

Today’s AI tool guide: Build a 30-day plan with Manus 1.5 to achieve goals

Watch our last live workshop: Learn Context Engineering

See you soon,

Rowan, Jennifer, and Joey—The Rundown’s editorial team

The 'Meta-fication' of OpenAI

Good morning, AI enthusiasts. OpenAI's transformation from research lab to consumer giant is coming with an unexpected side effect: a massive influx of Meta DNA.

With a fifth of OpenAI's workforce now ex-Zuck employees (including key leadership positions) and previously rebuffed advertising initiatives now in the works, the company’s meteoric growth might be coming with a heavy culture tax.

In today’s AI rundown:

OpenAI’s ‘Meta-fication’ sparks culture clash

Survey: Artificial Analysis ‘State of Generative Media’

Build a 30-day plan with Manus 1.5 to achieve any goal

OpenAI’s AI models for music generation

4 new AI tools, community workflows, and more

LATEST DEVELOPMENTS

OPENAI

👀 OpenAI’s ‘Meta-fication’ sparks culture clash

Image source: Reve / The Rundown

The Rundown: A new report from The Information just revealed that one in five OpenAI employees now comes from Meta, bringing Facebook-style growth tactics that are reshaping the AI startup's culture and product strategy.

The details:

Over 600 of OAI’s 3,000 staffers are former Meta employees, including applications CEO Fidji Simo, and the group has its own internal Slack channel.

Internal surveys asked whether OAI was becoming "too much like Meta," with former CTO Mira Murati reportedly leaving over user growth disagreements.

Teams are exploring using ChatGPT's memory for personalized ads, despite CEO Sam Altman previously calling a similar idea "dystopian."

The report also details internal criticism surrounding the Sora 2 rollout, with employees skeptical of the social app’s direction and the ability to moderate it.

Why it matters: It’s hard to maintain a startup identity while going from research lab to one of the world’s biggest consumer products in a few years. The influx of Meta DNA may be a double-edged sword: OpenAI gains growth-centric talent but risks losing the scrappy, small-team vibe that fostered its initial success.

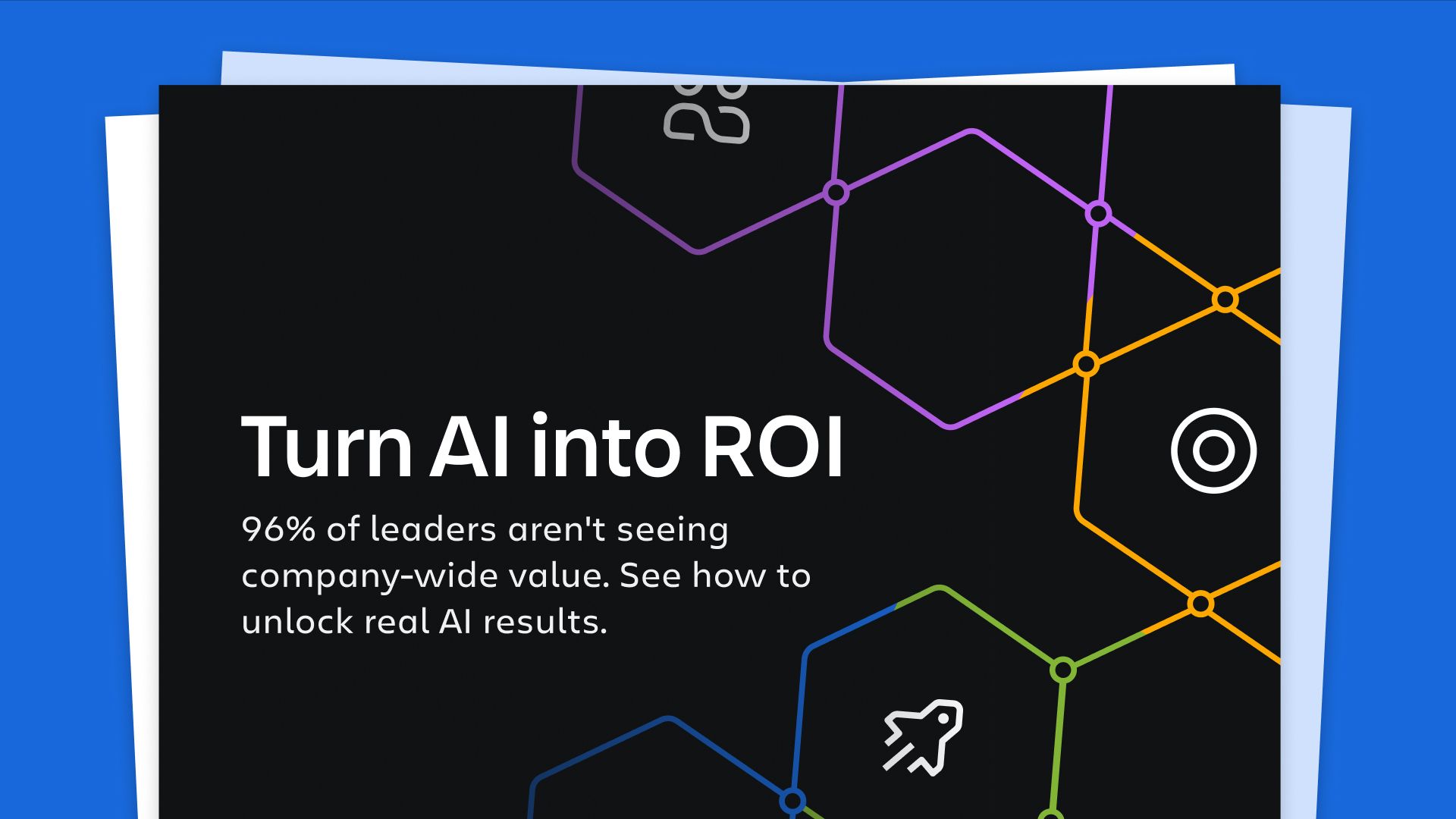

TOGETHER WITH ATLASSIAN

📊 96% of companies aren't seeing AI ROI — here's why

The Rundown: AI can write code, draft emails, and analyze data — but if it's not transforming your business, you're not alone. Atlassian's AI Collaboration Index shows that 96% of companies haven't seen organization-wide improvements despite broader AI adoption.

The report reveals:

Workers feel 33% more productive, but work is fragmented across applications

Organizations are treating AI as a personal tool, not a collaboration enabler

The 4% seeing results build connected bases for AI-powered team collaboration

Get the full report to discover what's holding teams back with AI.

AI RESEARCH

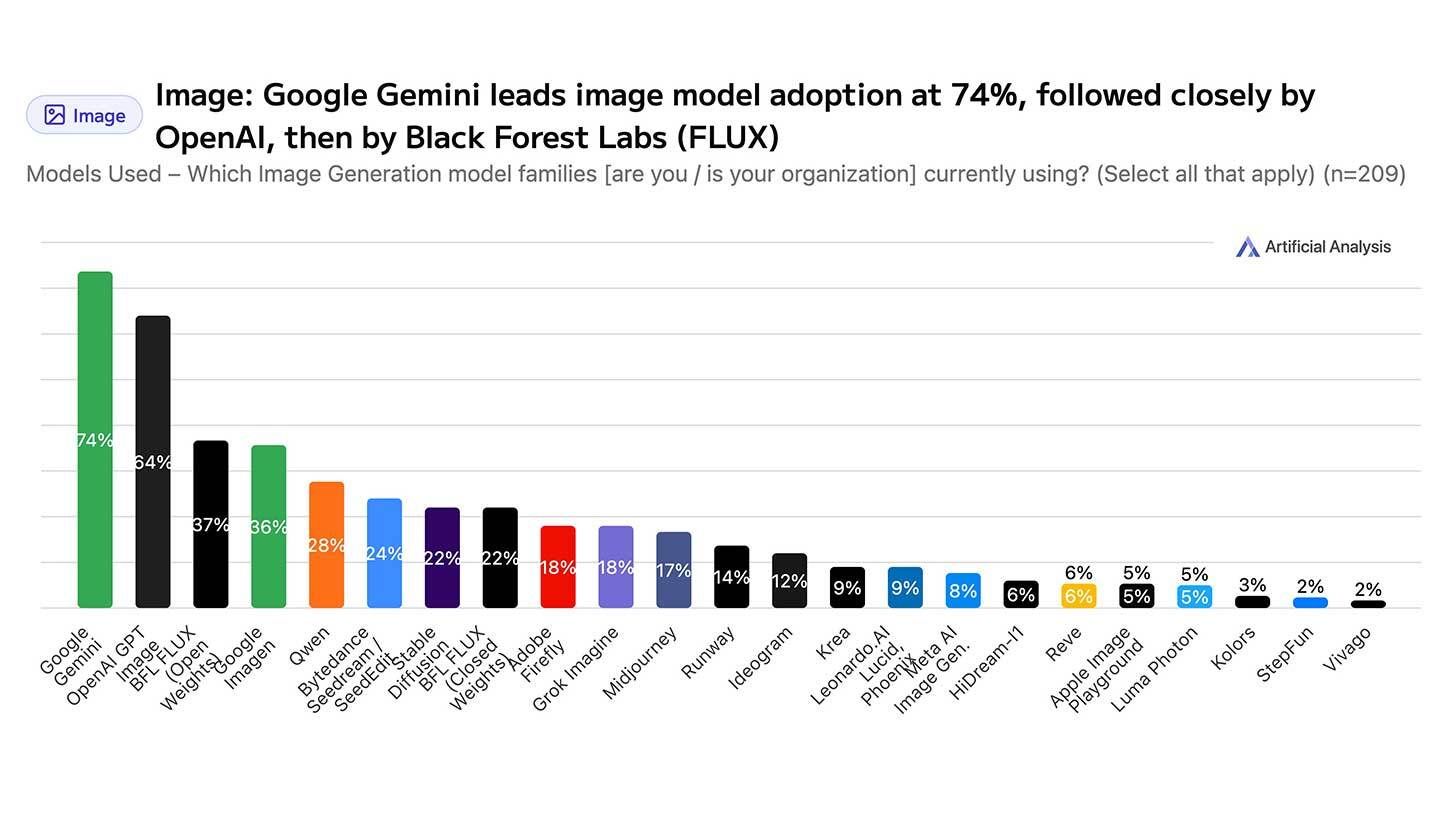

📖 Survey: Artificial Analysis ‘State of Generative Media’

Image source: Artificial Analysis

The Rundown: Benchmarking platform Artificial Analysis just released its 2025 ‘State of Generative Media’ report, which polled 300 developers and creators to track personal and enterprise AI adoption levels, model preferences, and more.

The details:

Google’s Gemini captured 74% of AI image use, and Veo took 69% of video creators, beating out rivals OAI, Midjourney, and Chinese options like Kling.

Personal creators have integrated image tools into workflows at an 89% adoption rate, with video still at just 58% of users despite rapid growth.

Organizations report surprisingly quick returns, with 65% achieving ROI within 12 months and 34% already seeing profits from their AI media initiatives.

Model quality was the most important criterion at 76% for personal users, with enterprises prioritizing cost reduction (57%) when choosing an AI platform.

Why it matters: Google is dominating on both the AI image and video front, which may come as a surprise given OpenAI’s typically high adoption in other AI use cases. While the sample size wasn’t huge, the ROI numbers for AI image and video usage are far stronger than the doom and gloom from other surveys of AI’s success in enterprise.

AI TRAINING

🗓️ Build a 30-day plan with Manus 1.5 to achieve any goal

The Rundown: In this tutorial, you will learn how to use Manus 1.5 to reverse-engineer any goal into a 30-day execution plan with daily inputs, then import it directly to Google Calendar as a color-coded schedule.

Step-by-step:

Go to manus.im, select "Agent" mode (not standard chat) to enable autonomous file outputs and planning capabilities

Craft a detailed prompt: "Reverse-engineer [$X goal] into a 30-day execution plan with time-blocked daily inputs. Color-code by priority: 🔴Outreach 🟠Content 🟡Fulfillment 🟢Community 🔵Planning. Output calendar-ready ICS file in EST timezone"

Submit and let Manus generate your files: 30-day markdown plan, daily checklist, ICS calendar file, and summary with power hours and weekly anchors

Download the ICS file, open Google Calendar, click "+" under "Other calendars", select "Import", choose your ICS file, and destination calendar

Review your imported calendar with color-coded, time-blocked daily inputs ready to execute

Pro tip: If daily quotas feel off, tweak inputs in the prompt (e.g., raise follow-ups, reduce content time) and regenerate a new .ics. Inputs drive outcomes.
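
If you are curious what the ICS file from the steps above contains, here is a minimal sketch that builds one by hand. It is not what Manus outputs. The event data, the UID scheme, and the helper names are invented for illustration, and times are written as "floating" local times, so the importing calendar applies its own timezone.

```python
from datetime import datetime, timedelta, timezone


def escape_text(value: str) -> str:
    """Escape characters that are special in iCalendar TEXT values."""
    return value.replace("\\", "\\\\").replace(";", "\\;").replace(",", "\\,")


def make_ics(events):
    """Build a minimal iCalendar string.

    events: list of (summary, start, minutes, category) tuples, where
    start is a naive datetime treated as local ("floating") time.
    """
    stamp = datetime.now(timezone.utc).strftime("%Y%m%dT%H%M%SZ")
    lines = ["BEGIN:VCALENDAR", "VERSION:2.0", "PRODID:-//example//30-day-plan//EN"]
    for index, (summary, start, minutes, category) in enumerate(events):
        end = start + timedelta(minutes=minutes)
        lines += [
            "BEGIN:VEVENT",
            f"UID:plan-{index}@example.invalid",  # hypothetical UID scheme
            f"DTSTAMP:{stamp}",
            f"DTSTART:{start:%Y%m%dT%H%M%S}",
            f"DTEND:{end:%Y%m%dT%H%M%S}",
            f"SUMMARY:{escape_text(summary)}",
            f"CATEGORIES:{escape_text(category)}",
            "END:VEVENT",
        ]
    lines.append("END:VCALENDAR")
    # iCalendar content lines are separated by CRLF.
    return "\r\n".join(lines) + "\r\n"


plan = [
    ("Send 10 outreach emails", datetime(2025, 11, 3, 9, 0), 60, "Outreach"),
    ("Draft one post", datetime(2025, 11, 3, 13, 0), 45, "Content"),
]
ics_text = make_ics(plan)
```

Writing `ics_text` to a `.ics` file gives you something to import the same way as in the steps above. The sketch only labels each event with a category; how (or whether) a calendar app turns that label into a color is up to the app.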

PRESENTED BY INVISIBLE

💡 FDEs: Where AI ambition meets execution

The Rundown: The difference between AI leaders and laggards isn't the technology — it's execution. Invisible's latest guide shows how Forward Deployed Engineers embed within operations to make AI actually work at scale.

Invisible's new guide reveals:

Why most AI initiatives fail and how the top 5% actually deliver ROI

The difference between FDEs, consultants, and integrators

Real deployment stories of speed, precision, and measurable outcomes

When to use forward deployment, how it scales, and what it really costs

OPENAI

🎵 OpenAI’s AI models for music generation

Image source: Reve / The Rundown

The Rundown: OpenAI is reportedly developing AI models for music creation, enlisting students from the prestigious Juilliard School to annotate musical scores while positioning itself against startups like Suno and Udio.

The details:

OAI is working with Juilliard students to create musical annotations, helping to build training datasets for audio generation across instruments and styles.

The tech would enable text-to-song creation, with use cases like layering tracks onto existing vocals or creating soundtracks for video content.

OAI previously explored AI music with MuseNet and Jukebox in 2019-20 before abandoning the projects, with the new effort now marking their third attempt.

Internal discussions suggest advertising agencies could leverage the platform for campaign jingles, soundtrack composition, and style-matching capabilities.

Why it matters: Another branch of OAI’s everything AI strategy has emerged, and this time, they are coming for the music front. Audio generation was already the biggest improvement of Sora 2, and a music model directly accessible to ChatGPT’s nearly 1B users would be a major adoption moment for the AI audio sector as a whole.

QUICK HITS

📰 Everything else in AI today

Google unveiled Google Earth AI, a platform that combines satellite imagery with AI models to help organizations tackle environmental challenges like floods and wildfires.

Anthropic and Thinking Machines published a study showing that AI models have distinct "personalities", with Claude prioritizing ethics, Gemini emotional depth, and OpenAI models focusing on efficiency.

Mistral AI launched Studio, a platform for companies to move from AI prototypes to production with built-in tools for performance tracking, testing, and security.

Oreo-maker Mondelez is reportedly using a new AI tool developed with Accenture to cut marketing content costs by 30-50%, with plans to create TV-ready ads next year.

Anthropic announced a multibillion-dollar expansion with Google Cloud to access up to 1M TPU chips for over 1 GW of compute power.

Pokee AI released PokeeResearch-7B, a new open-source deep research agent that tops benchmarks compared to other similarly sized rivals.

Meta added new AI tools to Instagram Stories, allowing users to restyle, edit, and remove objects from photos and videos directly within the platform.

COMMUNITY

🤝 Community AI workflows

Every newsletter, we showcase how a reader is using AI to work smarter, save time, or make life easier.

Today’s workflow comes from reader Anonymous in Lincoln, NE:

"I use Perplexity’s Comet browser to automatically queue new prompts for Sora generated using Grok. When the maximum number of simultaneous videos is generated, it autonomously feeds a new prompt into Sora. It can successfully post videos generated with Sora on the platform, and I’m hoping to expand to YouTube, which I’ll use Comet for to optimize my channel SEO, so I can monetize my videos."

How do you use AI? Tell us here.

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Microsoft’s ‘Mico’ personality upgrade

Read our last Tech newsletter: Apple may strike space deal with Musk

Read our last Robotics newsletter: Amazon’s massive robot hiring spree

Today’s AI tool guide: Build a 30-day plan with Manus 1.5 to achieve goals

Watch our last live workshop: Learn Context Engineering

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — the humans behind The Rundown

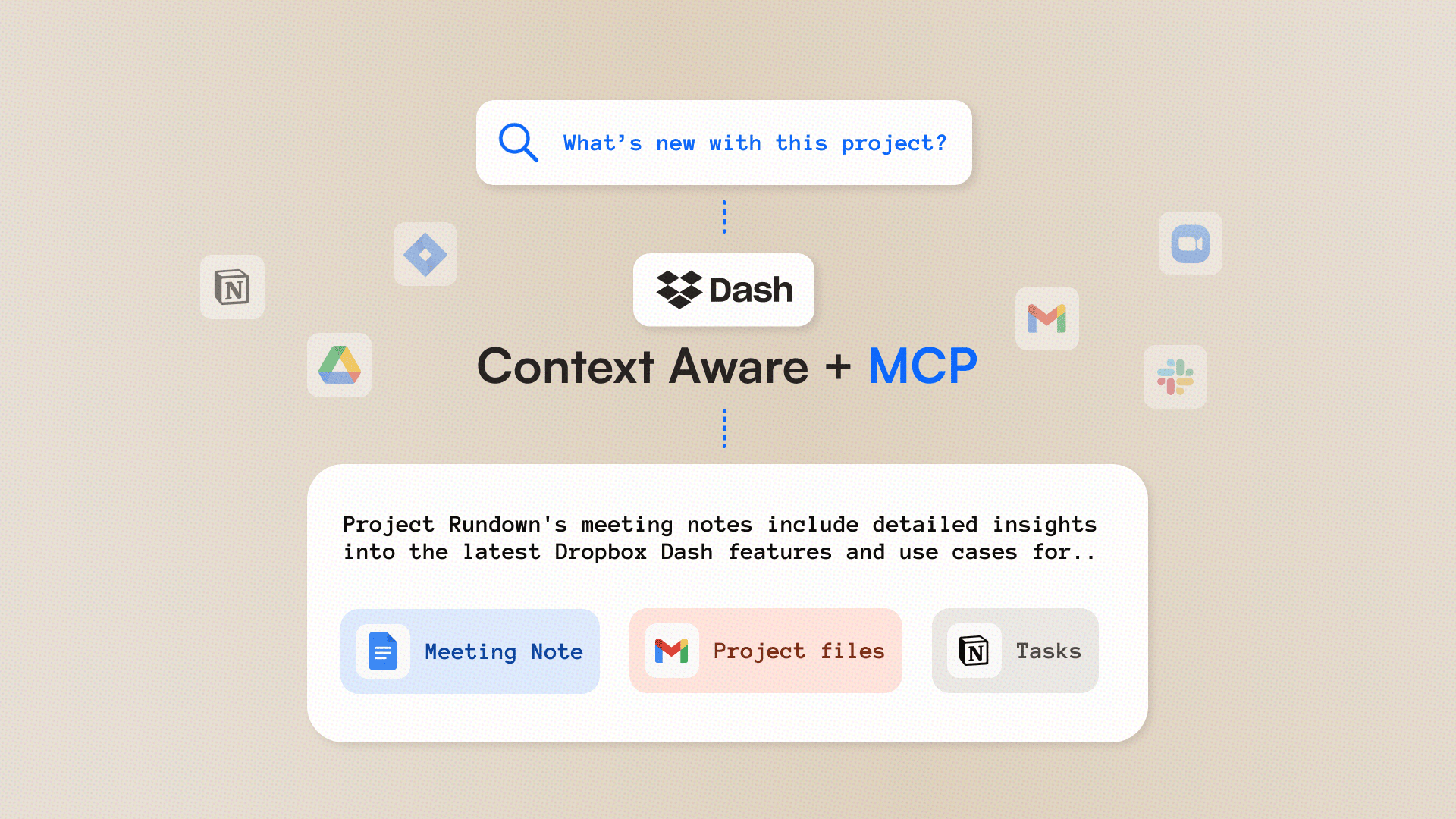

Dropbox redefines the future of work with Dash

Good morning, AI enthusiasts. Dropbox has dropped its Fall 2025 release, pushing AI deeper into teams’ workflows with an enhanced Dash AI experience.

Built as a context-aware AI teammate, Dash connects scattered apps, files, and conversations into one intelligent workspace, helping teams cut through information overload and find what matters faster.

To unpack how this new release is reshaping the way businesses organize, discover, and act on their data, we sat down with Manik Singh, VP and GM of Dropbox Dash, for an exclusive Q&A.

In today’s AI rundown:

An ‘AI teammate’ for work

Context-awareness: The next frontier

Going beyond text with Mobius Labs

Building for enterprise confidence

Dash experience inside Dropbox

LATEST DEVELOPMENTS

DROPBOX DASH

🤖 An ‘intelligent teammate’ for work

The Rundown: Dash acts as a context-aware AI teammate that understands not only how users work but also how their teams operate — helping organize and search across massive datasets to deliver relevant, grounded answers instantly.

Cheung: Teams deal with massive volumes of data from different apps. How does Dash understand how users work across these systems and what’s relevant to them?

Singh: For most people, work happens across multiple apps and file types, from Zoom calls and Slack threads to client decks and meeting transcripts. Dash bridges those silos by understanding both your intent and your workflow.

It cuts through information overload by indexing all of your cloud content in connected apps to provide context-aware intelligence. While other tools pull information from the web, Dash stays grounded in all your data and its context, knowing the difference between what’s merely related and what’s actually relevant.

So when you ask something like, “Can you summarize all the reporting from last year’s brand campaign?” Dash knows exactly what project you’re referring to, where the relevant files live, and what’s changed since you last checked. It turns scattered data into cohesive, actionable insights to make it easier for people to focus on bringing impact that only humans can do.

Why it matters: By understanding how and where teams actually work (not just what they search for), Dropbox Dash transforms knowledge from chaos into clarity. It bridges the gap between data and decisions, cutting through app sprawl and information overload to deliver the right answers in real time.

CONTEXTUAL INTELLIGENCE

🧠 Context-awareness: The next frontier

The Rundown: Dropbox is doubling down on context-awareness with Dash, putting everything users need for work into one place — and adding more automated actions to help teams work faster and smarter.

Cheung: How do you see context-awareness shaping enterprise knowledge work, and which workflows will benefit the most?

Singh: Rather than isolated chatbots, context-aware systems like Dash can understand a user’s goals, tools, and content in real time. Dash evolves with your tools by connecting and building a work context knowledge graph that surfaces unique insights to help people do their best work. It already connects to the most critical apps, like Slack, Notion, and Microsoft 365, and we’re continually exploring new connectors.

The biggest impact of context-aware systems will be in media and creative workflows that handle a variety of content types, where connecting insights across images, docs, charts, and meetings provides richer context for faster, smarter decisions.

Cheung: As Dash gets more context-aware, will it start taking actions — letting users hand off tasks to AI?

Singh: Dash can already automate many workflows today and is continuing to bring more and more advanced workflows to the market. By using our deeper app integrations and tools like MCPs, Dash acts as an AI teammate that doesn’t just answer questions but also takes action, whether that’s drafting a summary or organizing content within your workspace.

Why it matters: Context-aware AI marks the shift from intelligent retrieval to action. By understanding the full context of work, Dash delivers faster answers as well as meaningful automation. As it evolves, it will become a proactive collaborator, anticipating needs, running tasks, and freeing teams to focus on high-value decisions.

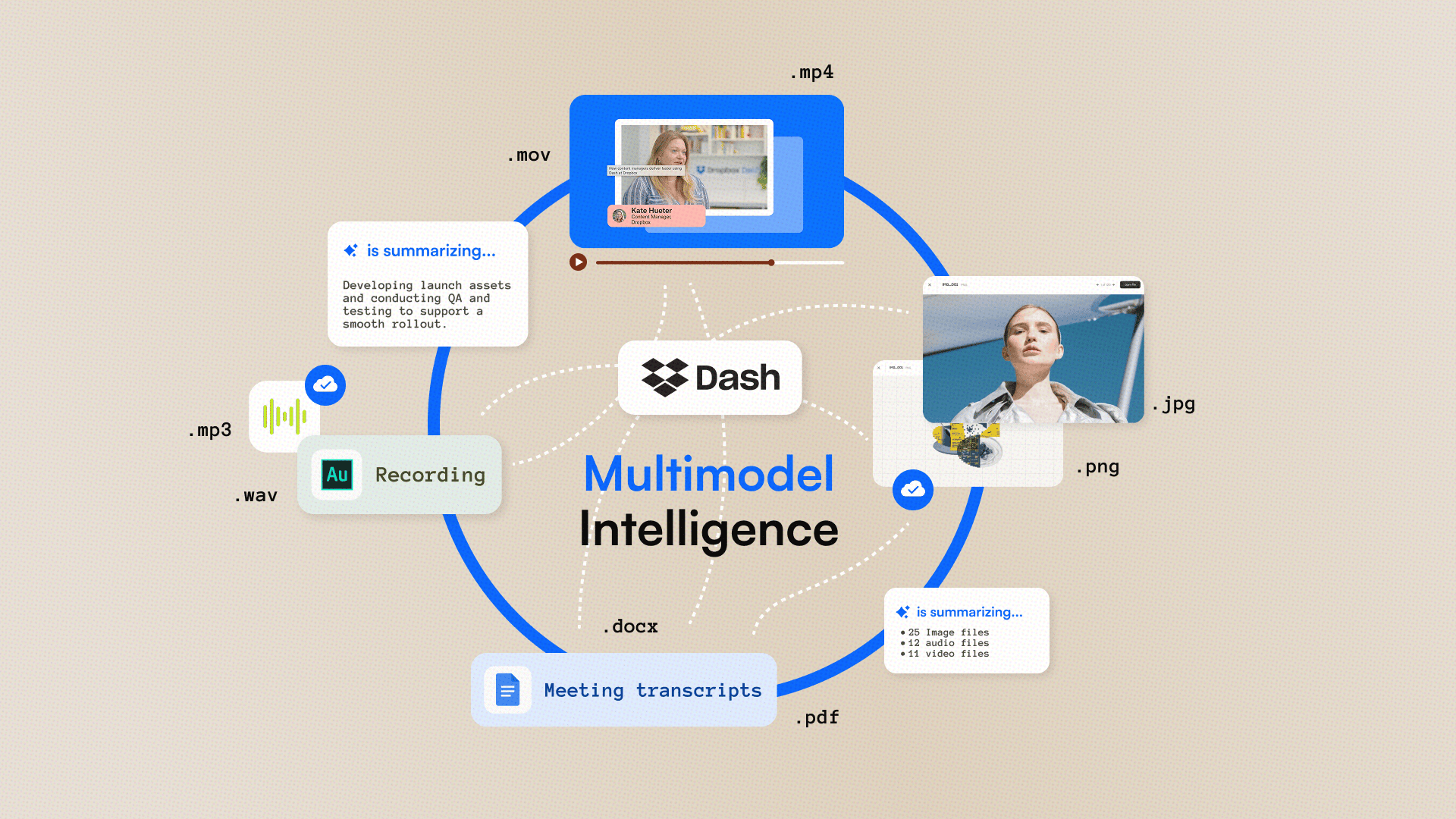

MULTIMODALITY

🎥 Going beyond text with Mobius Labs

The Rundown: Dash’s ability to connect the dots at work is also getting a major boost with Dropbox’s acquisition of Mobius Labs technology, whose custom AI models process multimedia at scale, enabling smarter search across text, images, audio, and video.

Cheung: Can you tell us a bit about the tech acquired from AI startup Mobius Labs and how it adds to Dash’s capabilities?

Singh: Not all work happens in writing. That’s why Mobius Labs has joined Dropbox to help us take Dash’s multimodal capabilities to the next level. The Mobius Labs team has been developing custom AI models optimized for large-scale multimedia processing.

Dropbox already processes and stores Petabytes of data, so this acquisition will unlock massive value for our customers. Whether your work involves video, audio, or images, we’ll be able to support even more complex workflows for search and answers in Dash.

Singh added: Multimodality will directly benefit creative and marketing teams, content producers, and enterprise professionals with content-heavy workflows. Dash’s intelligence will simplify everything from asset management to campaign retrospectives, enabling teams to find, reference, and repurpose multimedia assets faster, all while maintaining brand and data integrity.

Why it matters: Work today goes beyond documents, with valuable insights living inside video and audio files. By expanding Dash with Mobius’ tech, Dropbox is building a truly multimodal AI workspace that sees the full picture of how teams create and share knowledge, making every file searchable, connected, and actionable.

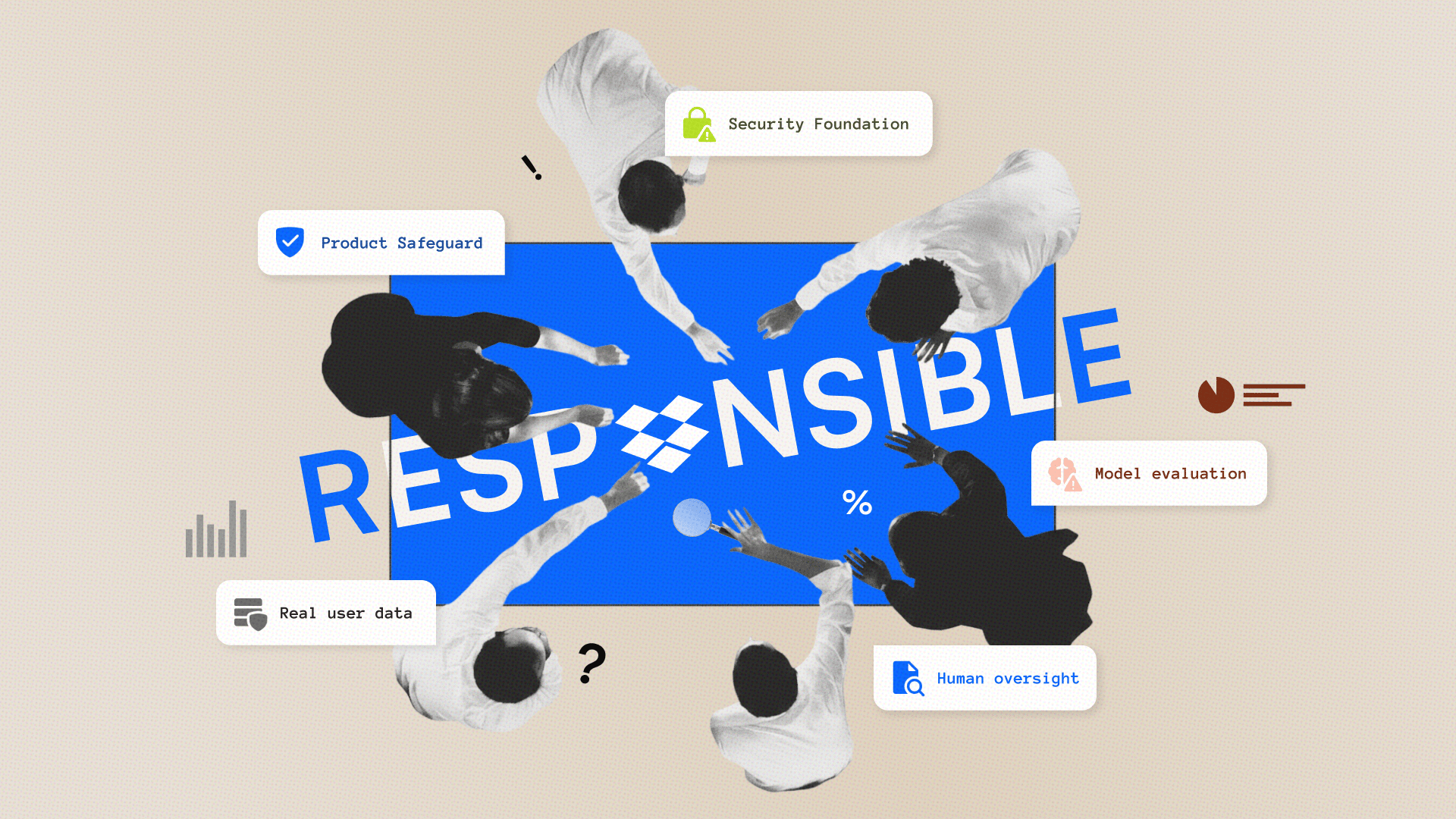

TRUST

👍️ Building AI for confidence

The Rundown: Dropbox is reinforcing trust and accuracy by not only grounding Dash in users’ data but also running a rigorous governance process spanning model checks, ethical reviews, and user feedback to keep reliability baked in from the start.

Cheung: As users will use Dash for business-critical work, what steps are you taking to detect and minimize AI hallucinations?

Singh: Dash grounds responses in users’ actual data, rather than open-ended inference, to give relevant insights and facts. This reduces the risk of hallucinations and ensures that every answer can be traced back to a real source.

We’re also investing heavily in quality assurance and responsible model evaluation to ensure we’re measuring the quality of our responses. You can’t fix what you don’t know, so we’re doing very robust measurements in order to avoid hallucinations.

Internally, Dropbox follows a rigorous AI governance process that spans model evaluation, ethical review, and user feedback. We emphasize transparency, accountability, and human oversight at every stage of product design. This ensures that innovation and responsibility advance hand in hand.

Why it matters: AI is only as powerful as it is trustworthy. By grounding insights in actual, live data and building transparency into every layer of development, Dropbox is ensuring Dash delivers reliable intelligence that teams can confidently act on, right from the word go.

DROPBOX UPGRADE

📦️ Dash experience inside Dropbox

The Rundown: Dash features are also making their way into Dropbox, turning the storage solution into an intelligent hub with AI search, automated organization, and contextual summaries built in. The new experience is already live for select customers, with a broader rollout expected in the coming months.

Cheung: How does bringing Dash directly into Dropbox change the user experience? What’s next for Dash and Dropbox as you expand your AI capabilities?

Singh: Integrating Dash capabilities directly into Dropbox transforms the way users interact with their content.

Instead of just storing files, users can now search, summarize, and get contextual answers within Dropbox itself. Existing users will experience smarter search, automatic organization, and AI summaries within the interface they already know and trust.

Singh added: Dropbox Dash acts as an AI teammate that actually has context on your work, and we want to continue to evolve the ways Dash can help people cut through the busy work and focus on what matters most.

Looking ahead, we’ll continue to expand Dash’s integrations, deepen multimodal understanding, and develop more features that help users take action directly from their workspace while maintaining context-aware intelligence that consumer AI lacks.

Why it matters: Dropbox’s integration of Dash signals a shift from passive storage to active collaboration. By weaving AI into the core experience, Dropbox is creating a workspace that understands content, connects it, and helps users act on it in ways simpler and more fluid than ever before.

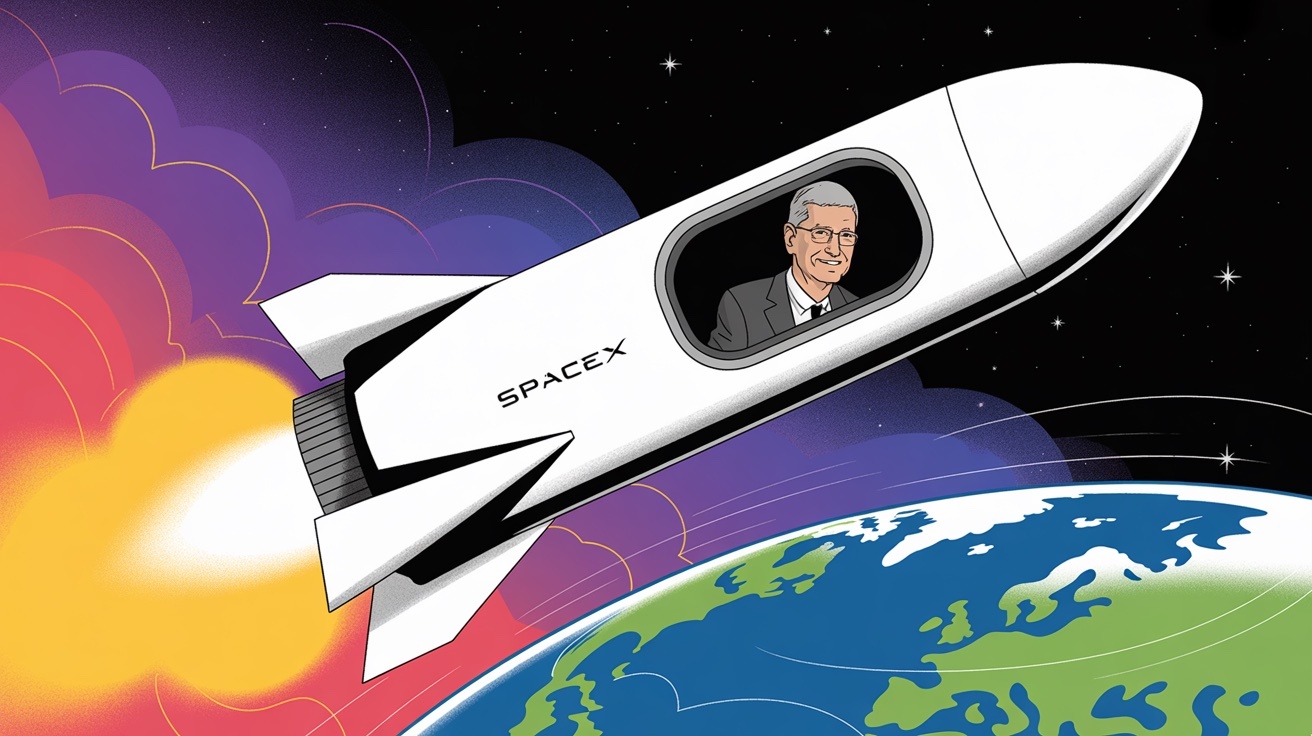

Apple may strike space deal with Musk

Good morning, tech enthusiasts. Apple’s dream of texting from anywhere is running into a satellite problem. The Information reports that the Cupertino giant may need to strike a deal with Elon Musk’s Starlink after its go-to partner started showing cracks.

Suddenly, the iPhone maker that avoids dependencies might have to phone a rival.

Reminder: Our next live workshop is today at 4 PM EST — join and learn the importance of context engineering and how to incorporate the core skill into your AI workflows. RSVP here.

In today’s tech rundown:

Apple’s orbital problem has a SpaceX solution

Rivian’s spinoff debuts wild new e-bike

A new blood test screens for 50 types of cancer

Google bets on carbon-capture gas power plant

Quick hits on other tech news

LATEST DEVELOPMENTS

APPLE/SPACEX

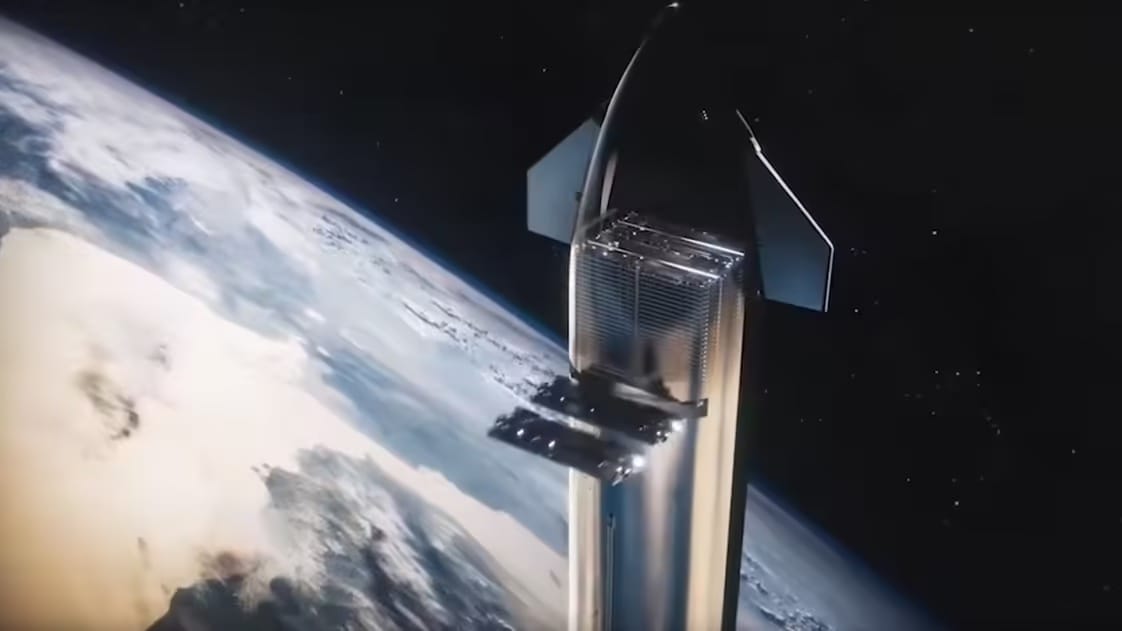

🚀 Apple’s orbital problem has a SpaceX solution

Image source: SpaceX

The Rundown: Apple’s dream of beaming data straight to iPhones is colliding with orbital reality. According to The Information, the Cupertino giant may need to partner with Starlink after its go-to satellite partner, Globalstar, started showing cracks.

The details:

Next-gen Starlink satellites are said to support the same spectrum Apple uses for Emergency SOS, making direct-to-device compatibility plausible.

Globalstar, Apple’s existing partner, has warned of heavy dependence on Apple, and chair James Monroe is reportedly discussing a sale for $10B.

Apple has already pumped $1.5B into Globalstar to keep its satellite texting feature alive, but the orbital infrastructure remains fragile and limited.

A Starlink tie-up could extend coverage, add capacity, and provide redundancy for safety features, likely complementing rather than replacing Globalstar.

Why it matters: Neither company has formally commented on the report, but a Starlink partnership would give Apple access to thousands of low-Earth-orbit satellites instead of Globalstar's sparse constellation. The deal would also mean handing leverage to Musk at a time when the two companies are increasingly competitive.

RIVIAN/ALSO

🚲 Rivian’s spinoff debuts wild new e-bike

Image source: ALSO

The Rundown: Rivian's new micromobility spinoff, ALSO, just pulled the cover off its long-awaited e-bike, the TM-B, along with a matching helmet and a pedal-assist quad aimed at shaking up how people move around cities.

The details:

The TM-B runs on DreamRide, ALSO's proprietary pedal-by-wire drivetrain that ditches the mechanical chain for software-controlled pedaling.

Its removable battery packs come in 538 Wh or 808 Wh options, claiming an impressive 100-mile range, plus dual USB-C ports to juice up your phone.

A modular top frame lets you swap between a solo seat, cargo hauler, or tandem bench in seconds without digging through a toolbox.

The $4,500 TM-B Launch Edition is open for preorders with spring 2026 delivery, while the $4K base version arrives later in the year.

Why it matters: DreamRide is the real headline — pedal-by-wire cuts the mechanical chain and lets software shape every aspect of the ride, delivering up to 20 mph on throttle alone and 28 mph with pedal assist. If ALSO can nail the execution, it's betting this digital-first approach will make e-bikes feel more like the EVs Rivian’s known for.

BIOTECH RESEARCH

🩸 A new blood test screens for 50 types of cancer

Image source: Kateryna Hliznitsova / Unsplash

The Rundown: A single blood draw that screens for more than 50 cancers just delivered encouraging trial data across North America, flagging tumors with no existing screening and catching more than half at early stages.

The details:

In a yearlong follow‑up of roughly 25K adults across the U.S. and Canada, about 1% returned a positive signal on the blood test, called the Galleri test.

Of those who tested positive, about 62% were later confirmed to have cancer after diagnostic work‑ups — a solid hit rate, but far from perfect.

Layered on top of standard screenings, Galleri boosted overall cancer detection more than sevenfold compared to routine tests alone.

False positives remain a problem: 38% of alerts were false alarms, and 196 cancers slipped through undetected during follow-up.

Why it matters: If multi-cancer blood tests like Galleri scale, they could catch deadly tumors — pancreatic, ovarian, esophageal — that currently have no early detection tools. It’s promising, but still in early days, and experts say that Galleri is meant to complement, not replace, established tests like mammograms or colonoscopies.
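To see how those percentages translate into people, here is a rough back-of-envelope calculation using only the rounded figures above. The counts are approximations derived from "roughly 25K" and "about 1%", not the trial's exact numbers. The implied sensitivity assumes the 196 missed cancers are the only cancers the test failed to flag, which is our assumption rather than something the report states.

```python
def screening_counts(participants, positive_rate, confirmed_share, missed_cancers):
    """Turn the rounded trial percentages into approximate head counts."""
    positives = participants * positive_rate         # people with a positive signal
    true_positives = positives * confirmed_share     # later confirmed as cancer
    false_positives = positives - true_positives     # false alarms
    # Share of all cancers found during follow-up that the test flagged.
    sensitivity = true_positives / (true_positives + missed_cancers)
    return positives, true_positives, false_positives, sensitivity


positives, confirmed, false_alarms, sensitivity = screening_counts(
    participants=25_000,    # "roughly 25K adults"
    positive_rate=0.01,     # "about 1%" returned a positive signal
    confirmed_share=0.62,   # "about 62%" of positives were confirmed
    missed_cancers=196,     # cancers that slipped through during follow-up
)

print(f"Positive signals:    ~{positives:.0f}")
print(f"Confirmed cancers:   ~{confirmed:.0f}")
print(f"False alarms:        ~{false_alarms:.0f}")
print(f"Implied sensitivity: ~{sensitivity:.0%}")
```

On these rounded inputs, a positive result is more likely than not to be real, yet the test still misses more cancers than it catches. That fits the framing of Galleri as a complement to established screening rather than a replacement.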

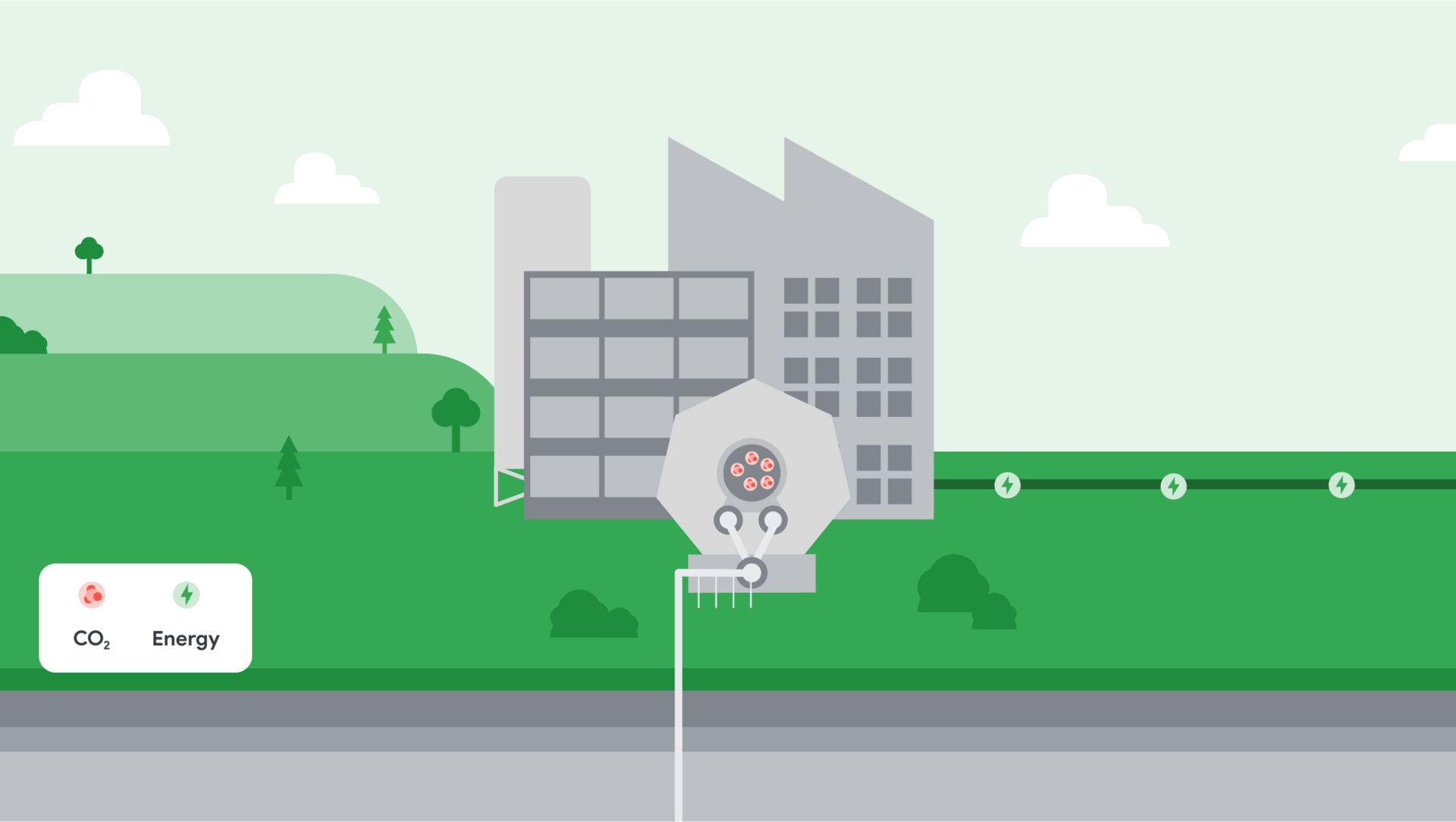

🏭 Google bets on carbon-capture gas power plant

Image source: Google

The Rundown: Google is backing a new gas-fired power plant in Illinois that will strip CO₂ from its smokestacks and pump it underground. It’s a bet on “clean firm” power to feed AI-hungry data centers when wind and solar aren’t available.

The details:

The play is “clean firm” power: always-on capacity to feed AI-scale data centers and support Google’s 24/7 carbon-free energy goal.

Google has committed to buy the majority of the Broadwing Energy Center’s planned 400 MW output once the plant comes online in 2030.

But capture systems aren’t perfect; they burn extra energy, and they don’t eliminate local air pollutants or upstream methane leaks from the gas supply.

Past marquee projects have stumbled, pipelines and storage bring regulatory and community risks, and critics see fossil lock-in by another name.

Why it matters: AI’s electricity appetite is colliding with the hard reality that renewables can't always deliver on demand, forcing tech giants to place risky bets on unproven low-carbon technologies. If carbon capture stumbles again, Google’s climate targets — and the promise of “clean” natural gas — go up in smoke.

QUICK HITS

📰 Everything else in tech today

Uber will now pay drivers $4K to swap gas cars for zero‑emission vehicles in a reversal of its 2020 stance to directly accelerate EV adoption.

Valthos, a NY-based biosecurity software startup, emerged from stealth with $30M from OpenAI and others, to build AI tools to thwart AI-enabled biological attacks.

GM will roll out a Google Gemini–powered conversational assistant across its cars, trucks, and SUVs starting next year, the automaker announced at a NYC event.

MD Anderson reports mRNA COVID shots given with checkpoint inhibitors are linked to significantly longer survival in advanced lung and skin cancers.

Airbus, Thales, and Leonardo are merging key satellite units into a 25K‑employee “European space champion,” slated to launch in 2027 to challenge Starlink.

Microsoft’s AI chief, Mustafa Suleyman, said on Thursday the company won’t build erotica‑focused AI, marking a clear split from longtime partner OpenAI.

Apple began shipping U.S.-made AI servers from its new Houston factory, ahead of schedule, as part of the company's $600B domestic buildout.

Denver-based AI data center startup Crusoe said Thursday it is raising $1.38B at a roughly $10B valuation in an oversubscribed Series E round.

Silicon Valley AI researchers are reportedly working what’s jokingly dubbed “0-0-2” schedules, from midnight to midnight with only two hours off on the weekend.

Seattle’s Basel Action Network says a two-year probe found at least 10 U.S. firms shipping used electronics to Asia and the Middle East, in a “hidden tsunami” of e-waste.

COMMUNITY

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Microsoft's 'Mico' personality upgrade

Read our last Tech newsletter: ‘ChatGPT for doctors’ hits $6B

Read our last Robotics newsletter: Amazon's massive robot hiring spree

Today’s AI tool guide: Turn data into insights with Copilot Vision

RSVP to our next workshop @ 4PM EST today: Learn Context Engineering

See you soon,

Rowan, Jennifer, and Joey—The Rundown’s editorial team

Microsoft's 'Mico' personality upgrade

Good morning, AI enthusiasts. Microsoft’s Clippy virtual paperclip wasn’t the most appreciated assistant, but it may have just been ahead of its time.

Microsoft just introduced ‘Mico’, a companion that aims to bring a new visual personality to Copilot, alongside new features built for its “human-centered” AI push that help carve out the company’s own identity beyond OpenAI’s shadow.

Reminder: Our next live workshop is today at 4 PM EST — join and learn the importance of context engineering and how to incorporate the core skill into your AI workflows. RSVP here.

In today’s AI rundown:

Copilot’s personality upgraded with ‘Mico’

OpenAI acquires Mac automation startup

Turn spreadsheet data into insights with Copilot Vision

Netflix ‘all in’ on AI for advertising, production

4 new AI tools, community workflows, and more

LATEST DEVELOPMENTS

MICROSOFT

👋 Copilot’s personality upgraded with ‘Mico’

Image source: Microsoft

The Rundown: Microsoft just hosted its Fall Release event and introduced ‘Mico’, an animated blob avatar that gives Copilot a visual personality — alongside the launch of new personalization features, health initiatives, browser automation, and more.

The details:

Mico appears as an animated orb that shifts colors based on tone, with an Easter egg that morphs it into the classic ‘Clippy’ when repeatedly tapped.

Copilot introduces features like Memory & Personalization for recall, connectors for new data links, and Proactive Actions for more hands-on help.

The assistant is also getting Groups, allowing for real-time collaboration with up to 32 people across AI tasks.

Health upgrades include medical responses based on Harvard Health sources, along with the ability to help locate doctors based on specific preferences.

Copilot Mode gets several new features in Microsoft’s Edge browser, including Actions for multi-step workflows and Journeys for returning to old projects.

Why it matters: Microsoft returns to its Clippy roots (love the Easter egg) with a new mascot/companion for the AI era. With its new personalization and ‘human-centered’ efforts, the tech giant is forging even more of an AI identity separate from the company’s hot and cold relationship with OpenAI. Watch the full Fall Release here.

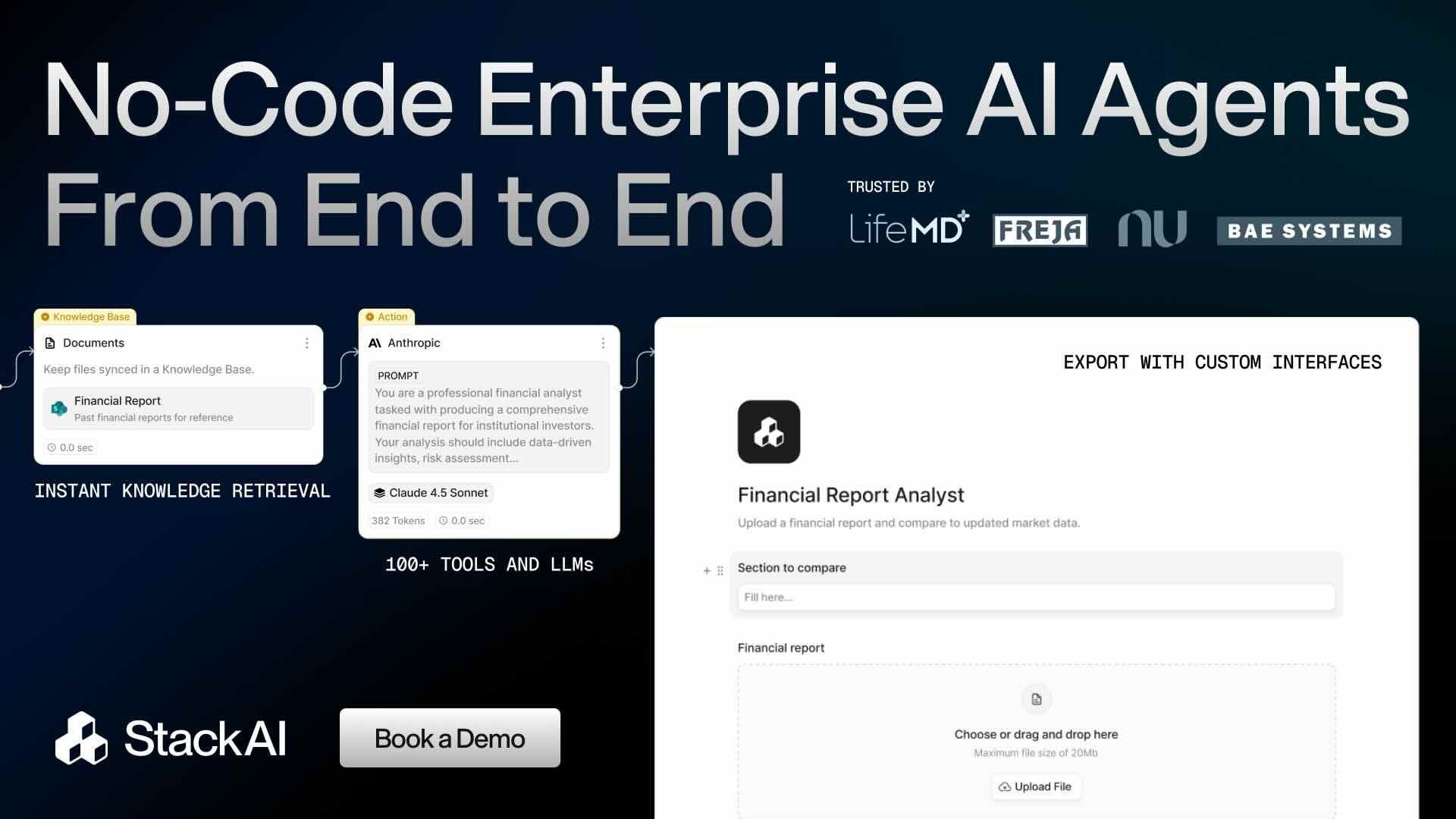

TOGETHER WITH STACKAI

⌛️Enterprise AI with the fastest time to value

The Rundown: StackAI is the no-code, drag-and-drop building platform for enterprise AI. Easily design and deploy powerful AI agents to automate tedious tasks, all with white-glove support from AI experts.

StackAI allows you to:

Leverage integrations with 100+ enterprise tools

Export as a chatbot, form, API, and more

Manage roles, control feature access, and monitor analytics

OPENAI

💻 OpenAI acquires Mac automation startup

Image source: OpenAI

The Rundown: OpenAI just acquired Software Applications Incorporated, the startup behind unreleased Mac automation tool Sky — bringing on the team that created the iOS app (Workflow) that eventually became Apple Shortcuts after a 2017 acquisition.

The details:

Sky operates as a floating AI interface on Mac desktops, analyzing screen content and executing tasks across applications.

OAI plans to integrate Sky's macOS capabilities into ChatGPT, potentially enabling the assistant to control desktop apps and automate workflows natively.

The acquisition comes on the heels of OpenAI’s Atlas browser release this week, which is currently a Mac-only application.

The move also adds to OpenAI’s list of recent acqui-hires that includes startups Statsig, Context AI, Roi, Multi, Crossing Minds, and Alex.

Why it matters: While the initial reception to Atlas has been mixed, OAI is clearly moving to position itself as the AI layer for Mac users, all before Apple even gets its own AI strategy together. The move also follows the acqui-hire trend that has seen AI labs bring on full, specialized teams to add talent in specific product areas.

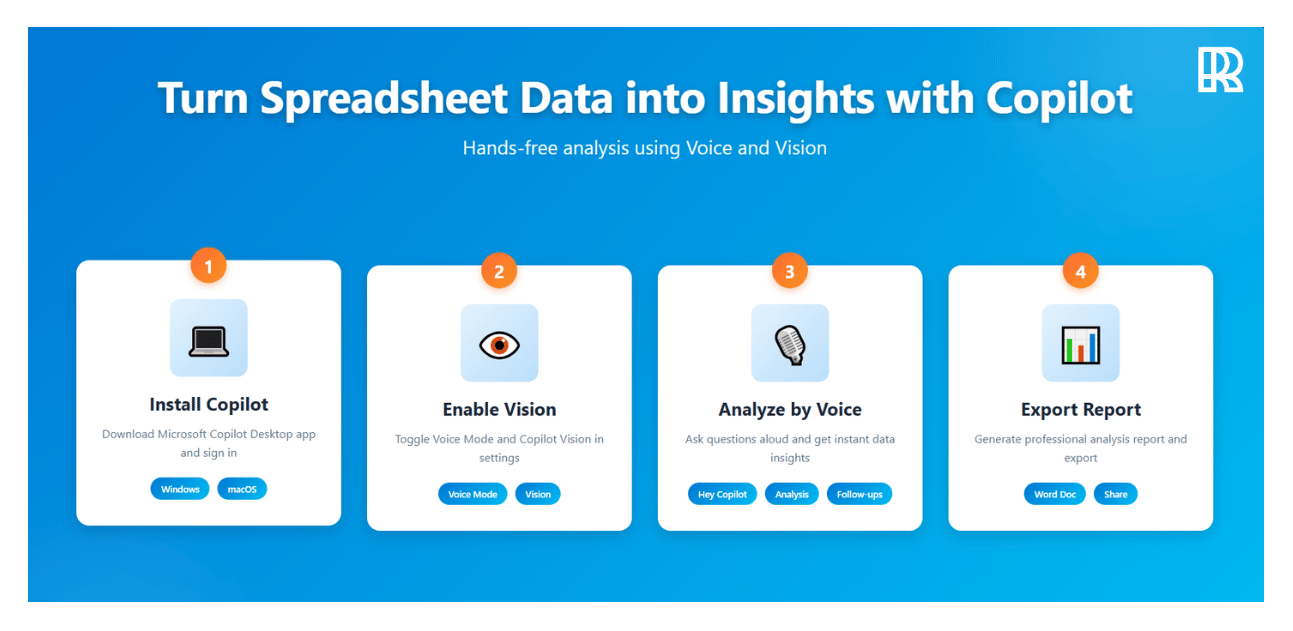

AI TRAINING

📊 Turn spreadsheet data into insights with Copilot Vision

The Rundown: In this tutorial, you will learn how to use Microsoft Copilot Desktop's Voice and Vision features to analyze Google Sheets or Excel data hands-free, asking questions aloud and getting instant insights without typing formulas.

Step-by-step:

Install Microsoft Copilot from Microsoft Store (Windows) or App Store (macOS 14.0+/M1 chip), open the app, and sign in with your Microsoft account

Go to Settings via profile icon, toggle on "Voice Mode" and "Copilot Vision", then open your Google Sheets/Excel file in the browser

Say "Hey Copilot", then click the glasses icon on the toolbar to enable Vision mode; Copilot scans and confirms it sees your data

Ask analysis questions: "What's the most revenue-generating product?" or "Calculate total revenue" - Copilot highlights cells and explains calculations

Close the toolbar, then prompt: "Draft a professional analysis report with Executive Summary, Top Performers table, and Key Insights"

Pro tip: Use this workflow for learning new skills, reading technical documents, or studying articles.
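Spoken answers are easy to accept at face value, so it can help to cross-check them. The sketch below computes the same two answers from the analysis step (top revenue-generating product and total revenue) from a CSV export of the sheet. The `product` and `revenue` column names, the sample rows, and the `revenue_by_product` helper are hypothetical; adjust them to match your own data.

```python
import csv
import io
from collections import defaultdict

# Hypothetical export of the spreadsheet; replace with your own file contents.
SAMPLE_CSV = """product,revenue
Widget,1200.50
Gadget,950.00
Widget,300.25
Gizmo,2100.00
"""


def revenue_by_product(csv_text: str) -> dict[str, float]:
    """Sum the revenue column for each distinct product."""
    totals: defaultdict[str, float] = defaultdict(float)
    for row in csv.DictReader(io.StringIO(csv_text)):
        totals[row["product"]] += float(row["revenue"])
    return dict(totals)


totals = revenue_by_product(SAMPLE_CSV)
top_product = max(totals, key=totals.get)   # product with the highest summed revenue
total_revenue = sum(totals.values())

print(f"Top product: {top_product}")
print(f"Total revenue: {total_revenue:.2f}")
```

If the numbers Copilot reads back differ from a check like this, the usual culprits are hidden rows, filtered views, or cells formatted as text.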

PRESENTED BY MONGODB

🤖 Build AI Agents with MongoDB

The Rundown: AI agents are reshaping how startups build intelligent products, and MongoDB is the engine that makes it possible. As a unified vector and document database, MongoDB helps your agents think, plan, and remember.

MongoDB gives your AI agents:

Vector & Hybrid Search for smarter context

Short & long-term memory in one place

Unified platform from prototype to scale

Turn your next big idea into an intelligent product with MongoDB.

NETFLIX

📺 Netflix ‘all in’ on AI for advertising, production

Image source: Midjourney

The Rundown: During the company’s earnings call this week, Netflix executives declared that the streaming giant is going “all in” on AI across both business operations and content production, despite the broader industry’s continued skepticism.

The details:

Netflix outlined plans to deploy AI across recommendations, advertising, and production workflows, saying the company is “well positioned” for the AI boom.

Several Netflix productions have already incorporated the tech for uses like age-reversing and experimenting with wardrobe and set concept ideation.

CEO Ted Sarandos said he isn’t worried about AI replacing creativity, believing the tech will help creators “tell stories better, faster, and in new ways.”

He also said, “AI can give creatives better tools to enhance experiences for our members, but it doesn’t automatically make you a great storyteller if you’re not.”

Why it matters: Between AI actors, feuds with OpenAI over Sora, constant battles with Hollywood unions, and backlash from fans, AI’s transition into entertainment has been anything but smooth. But as advances continue, the balancing act between companies like Netflix, talent, and fans will only get more difficult.

QUICK HITS

🛠️ Trending AI Tools

👨‍💻 Averi - The AI Marketing Workspace combining strategy, content creation, and expert collaboration in one unified platform*

🌎 HunyuanWorld-Mirror - Create 3D worlds from text, image, or video

📚 PokeeResearch - Open-source, SOTA 7B parameter deep research agent

✍️ Spiral - Every’s new AI writing partner with taste

*Sponsored Listing

📰 Everything else in AI today

OpenAI’s head of Sora, Bill Peebles, announced new upgrades coming to the AI video tool, including character cameos, video editing, and community improvements.

Stability AI unveiled a new partnership with Electronic Arts, bringing AI models and tools to the company’s game design process.

OpenAI introduced Company Knowledge, a new feature for ChatGPT Enterprise, Business, and Edu users that helps consolidate information across apps.

Anthropic rolled out Claude’s memory feature to Max users, allowing the assistant to remember previous conversations and create specific context for individual projects.

Lightricks launched LTX-2, an open-source AI video model with native 4K generations, synchronized audio, and up to 15-second outputs.

COMMUNITY

🤝 Community AI workflows

Every newsletter, we showcase how a reader is using AI to work smarter, save time, or make life easier.

Today’s workflow comes from reader Canaan M. in Sprague River, OR:

"I own a small upholstery business and specifically use ChatGPT to generate ideas for blog posts, competitor analysis of my business compared to other shops in the area, and image generation for custom seat designs and vehicle additions."

How do you use AI? Tell us here.

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Open letter demands ASI freeze

Read our last Tech newsletter: ‘ChatGPT for doctors’ hits $6B

Read our last Robotics newsletter: Amazon's massive robot hiring spree

Today’s AI tool guide: Turn data into insights with Copilot Vision

RSVP to our next workshop @ 4PM EST today: Learn Context Engineering

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — the humans behind The Rundown

Amazon's massive robot hiring spree

Good morning, robotics enthusiasts. Amazon is planning to replace more than half a million workers with robots by 2033, according to leaked documents that outline automating 75% of operations while saving billions.

The warehouse of the future, if Amazon’s plan holds, looks to be a robot empire. But the fate of the humans who once filled them? That’s a harder question.

In today’s robotics rundown:

Amazon to replace 600K U.S. jobs with robots

Musk says he wants control over Tesla’s ‘robot army’

Water-blasting drones fight fires autonomously

Noetix debuts ‘family-friendly’ humanoid for $1.4K

Quick hits on other robotics news

LATEST DEVELOPMENTS

AMAZON

📦 Amazon to replace 600K U.S. jobs with robots

Image source: Amazon

The Rundown: Amazon plans to avoid hiring more than 600K U.S. workers by putting robots to work across three-quarters of its operations, according to leaked documents seen by The New York Times. Morgan Stanley says the switch could save the retail giant $4B a year.

The details:

Amazon’s roadmap targets avoiding more than 600K new U.S. hires by 2033, including about 160K roles it would otherwise need as early as 2027.

The company reportedly aims to automate roughly 75% of its operations through next‑gen facilities that already run with skeleton crews.

Projected savings: 30 cents per package and $12.6B from 2025 to 2027, according to the NYT, with Morgan Stanley suggesting $4B a year.

Internal communications advised teams to scrub terms like “automation” and “AI” from public messaging, opting for “advanced technology” and “cobots.”

Why it matters: Amazon is pushing back on the report, noting it plans to recruit 250K workers for the holiday season and has tripled its U.S. headcount since 2018 to nearly 1.2M. Meanwhile, its Shreveport, LA site already deploys 1K robots and runs with 25% fewer workers — a template Amazon plans to replicate across 40 sites by 2027.

TESLA

🤯 Musk says he wants control over Tesla’s ‘robot army’

Image source: Tesla

The Rundown: Elon Musk says he needs investors to approve a $1T payday so he can keep control of Tesla’s “robot army” — yes, really. As the EV business scrapes out a modest rebound, he’s doubling down on Optimus and robotaxis for Tesla’s future.

The details:

On this week’s Q3 earnings call, Musk pressed investors to back a proposed $1T pay package and his authority to “control” Tesla's emerging “robot army.”

Musk says Optimus will become an “incredible surgeon” and, paired with robotaxis, claims Tesla’s robots could help build a world without poverty.

Tesla shares dropped after hours following the earnings report, with Musk hyping robotaxis and Optimus rather than core EV fundamentals.

Musk says Tesla is aiming to demo its next‑gen “Optimus V3” in Q1 2026.

Why it matters: Musk is effectively asking shareholders to fund his pivot from carmaker to robotics empire, betting Tesla’s future on unproven humanoid tech while threatening to walk if they refuse. If investors balk, it could show waning confidence in his ability to deliver moonshots while the core EV business stalls.

SENECA

🔥 Water-blasting drones fight fires autonomously

Image source: Seneca

The Rundown: Sausalito startup Seneca raised $60M to build autonomous, water‑cannon drones that self‑launch to attack wildfires before they spread. After putting out test burns, Seneca is aiming for its first real‑world deployments next year.

The details:

The drones self‑dispatch, fly autonomously, and pummel wildfires with dual water cannons engineered for rapid knockdowns.

Seneca targets sub‑10‑minute response from remote launch sites, using heavy‑lift airframes that haul 100 lb. payloads and blast water at over 100 PSI.

The startup says that five-drone teams can lay around 1,280 feet of foam perimeter with precision, boxing in small fires before they run amok.

A five‑drone kit is priced from the high six figures into the low seven figures, undercutting helicopter sorties for initial attack.

Why it matters: California’s fire season now runs year-round, with the state losing over 4M acres in 2020 alone and climate change pushing ignition risk higher. Seneca’s drones could rewrite response by wiping out blazes in their first critical minutes — that narrow window that separates a contained burn from an unstoppable inferno.

NOETIX ROBOTICS

🤖 Noetix debuts ‘family-friendly’ humanoid for $1.4K

Image source: Noetix

The Rundown: Beijing startup Noetix Robotics just unveiled Bumi, a lightweight, child-sized humanoid priced under CN¥ 10K (about $1,370) that can walk, balance, and even dance — squarely aimed at classrooms and living rooms, not research labs.

The details:

Bumi stands 3' 1" tall, weighs 26 lb., and at $1,370 claims to be the first “high-performance” humanoid under the CN¥ 10K threshold.

Noetix says the robot walks, balances, and dances with bipedal stability and coordinated movement designed for everyday spaces.

Built with lightweight composites and a self-developed motion control stack, Bumi pairs an open programming interface with voice interaction.

Power comes from a 48V battery (over 3.5Ah) delivering roughly 1–2 hours of runtime, as per launch materials; presale kicks off on Singles’ Day, Nov. 11.

Why it matters: Pricing a capable humanoid below $1.5K undercuts even China's cheapest models and costs a fraction of U.S.-available options like the $16K Unitree G1 or Tesla's projected $20K–$30K Optimus. If Bumi finds traction in schools and homes, expect Western robotics firms to scramble for their own sub-$2K response.

QUICK HITS

📰 Everything else in robotics today

Amazon unveiled Blue Jay, a system of robotic arms that merges three warehouse stations into one so it can pick, sort, stow, and consolidate items simultaneously.

Elon Musk predicts that Tesla will remove the safety monitor from its robotaxis and launch a robotaxi service in 8–10 new markets by the end of 2025.

South Korea's massive Future Innovation Technology Expo is showcasing flying taxis, humanoids, and autonomous vehicles across 2K booths in Daegu.

Serve Robotics, a developer of sidewalk delivery bots, agreed to sell more than 6M shares in a registered direct offering expected to raise about $100M.

China’s UBTECH says this year’s orders for its Walker humanoid series have surpassed CN¥ 630M (about $88M).

Walmart has created a new executive role to oversee delivery drones and other autonomous fulfillment tech, naming company veteran Greg Cathey to lead the effort.

Shenzhen-based Leju Robotics raised $207M in pre‑IPO funding to speed humanoid development and scale manufacturing.

NC State researchers 3D‑printed paper‑thin magnetic films that, when attached to origami, act as “muscles” and move under magnetic fields without hindering folding.

COMMUNITY

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Open letter demands ASI freeze

Read our last Tech newsletter: 'ChatGPT for doctors' hits $6B

Read our last Robotics newsletter: Unitree's 'bionic face' humanoid

Today’s AI tool guide: Create an email campaign generator with Build

RSVP to our next workshop @ 4PM EST Friday: Learn context engineering

See you soon,

Rowan, Jennifer, and Joey—The Rundown’s editorial team