Get the latest AI news, understand why it matters, and learn how to apply it in your work, all in just 5 minutes a day. Join 2,000,000+ subscribers.

AI just made the billion-dollar solo founder real

Read Online | Sign Up | Advertise

Good morning, AI enthusiasts. It took $20K, two months, and a stack of AI tools for Matthew Gallagher to launch a telehealth startup from his house in LA. A year and a half later, Medvi is on pace to do $1.8B in sales.

Sam Altman predicted in 2024 that AI would make the solo billion-dollar company possible. Gallagher may have just delivered the proof — not by building AI himself, but by using it to move fast and replace an entire corporate workforce.

In today’s AI rundown:

AI turns solo founder into $1.8B operator

OpenAI acquires TBPN in first media deal

Turn any flat image into a fully editable design

Google’s powerful new open-source family

4 new AI tools, community workflows, and more

LATEST DEVELOPMENTS

MEDVI

🚀 AI turns solo founder into $1.8B operator

Image source: Medvi / NYT

The Rundown: Matthew Gallagher just scaled his startup, Medvi, from a $20K AI experiment to $1.8B in projected annual sales, the NYT reported — becoming one of the first to fulfill Sam Altman’s prediction of AI-driven, solo billion-dollar companies.

The details:

Medvi sells GLP-1 drugs online, outsourcing doctors, prescriptions, and shipping to telehealth platforms CareValidate and OpenLoop.

Gallagher used ChatGPT, Claude, and Grok for code, Midjourney and Runway for ad creatives, and ElevenLabs and custom AI agents for customer service.

The whole operation took two months and $20K to stand up, with the company bringing in $401M in revenue in its first year.

He then brought on his brother as the only full-time hire, and uses contract engineers and account managers, with the team on pace for $1.8B this year.

Why it matters: Altman predicted that a one-person billion-dollar company "would have been unimaginable without A.I., and now it will happen." The first real example isn't some revolutionary AI product; it's selling weight-loss drugs from a living room. AI tools, combined with strong builder instincts and action, can yield pretty wild results.

TOGETHER WITH VANTA

🛡️ Turn compliance into a competitive advantage

The Rundown: With customer expectations rising and compliance needs shifting, keeping up can be daunting. Vanta’s Agentic Trust Platform helps fast-moving startups and security teams get audit-ready fast and stay continuously compliant, turning compliance into a deal accelerator, not a blocker.

Join to learn how Vanta can help you:

Automate evidence collection, policies, and remediation across major frameworks

Build real security foundations, not check-the-box fixes

Show credibility faster with a public Trust Center and AI-powered questionnaires

Keep engineers focused with guided workflows and developer-native automation

OPENAI & TBPN

🎙️ OpenAI acquires TBPN in first media deal

Image source: Jordi Hays on X

The Rundown: OpenAI just announced the acquisition of TBPN, the daily live tech talk show that's become a go-to for Silicon Valley CEOs, in a deal reportedly worth low hundreds of millions — marking the AI giant's first media acquisition.

The details:

TBPN goes live every weekday on YouTube and X, pulling around 70K viewers per episode and hosting major tech CEOs and figures.

Fidji Simo said “the standard comms playbook just doesn’t apply to us” because OAI is driving a tech shift, and that the goal is to foster real, constructive conversations about AI.

TBPN's 11-person team will report to OAI chief of global affairs Chris Lehane, and will drop its ad business but retain editorial independence on the show.

Co-founders Jordi Hays and John Coogan debuted the live show 17 months ago, with the company reportedly on pace for $30M in revenue this year.

Why it matters: This is a fascinating one, with the AI leader buying a direct channel to both the cultural tech vibes TBPN has fostered and the founders and CEOs who tune in every day. OAI’s public perception has taken hits this year, and bringing in the team behind one of the tech bubble’s most beloved shows could help shake up the approach.

AI TRAINING

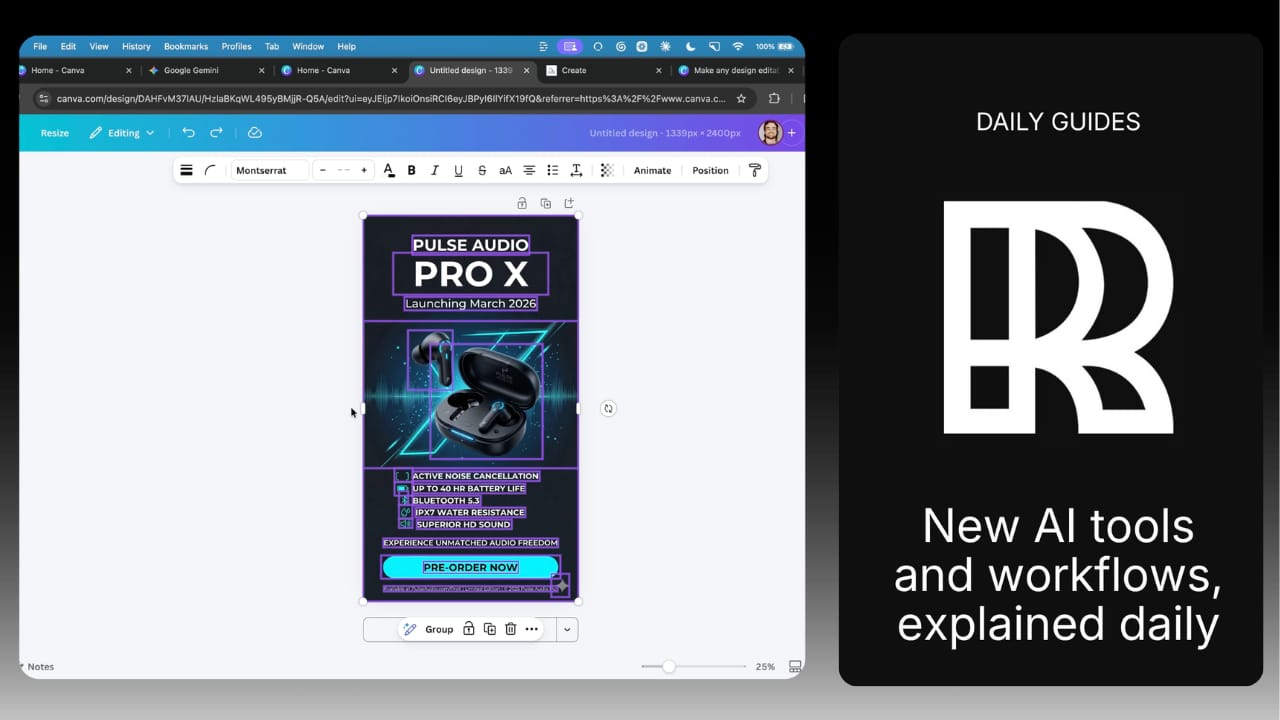

🌄 Turn any flat image into a fully editable design

The Rundown: In this guide, you'll learn how to use Canva's new Magic Layers feature to turn any flat AI image into a fully editable design — enabling you to fix small details in generations without recreating entire outputs.

Step-by-step:

On the Canva homepage, select Magic Layers, click Select Media, and choose an image

Wait 30-60 seconds while Canva processes the image. It reads the layout, identifies text, objects, and background, and splits them into individual layers

Click any layer and edit it directly. Swap a date, change a tagline, fix a typo. Or click an object layer to move it, resize it, or delete it entirely

Drop in a replacement image (new product photo, logo), and it slots into the existing layout. Adjust the background color using the color picker if needed

Pro tip: To use Magic Layers on an existing design, open the design, click Uploads to add your image, select it, click Edit in the toolbar, then select Magic Layers.

PRESENTED BY GOOGLE CLOUD

🧠 Build scalable AI agents faster

The Rundown: Google Cloud's updated Startup technical guide: AI agents is your essential blueprint, providing technical founders with the practical frameworks needed to design, build, and deploy intelligent autonomous systems that scale seamlessly.

Inside the updated guide, you’ll discover:

Frameworks to design autonomous agent architectures

Best practices for prompt engineering workflows

Scalable deployment strategies using Google Cloud

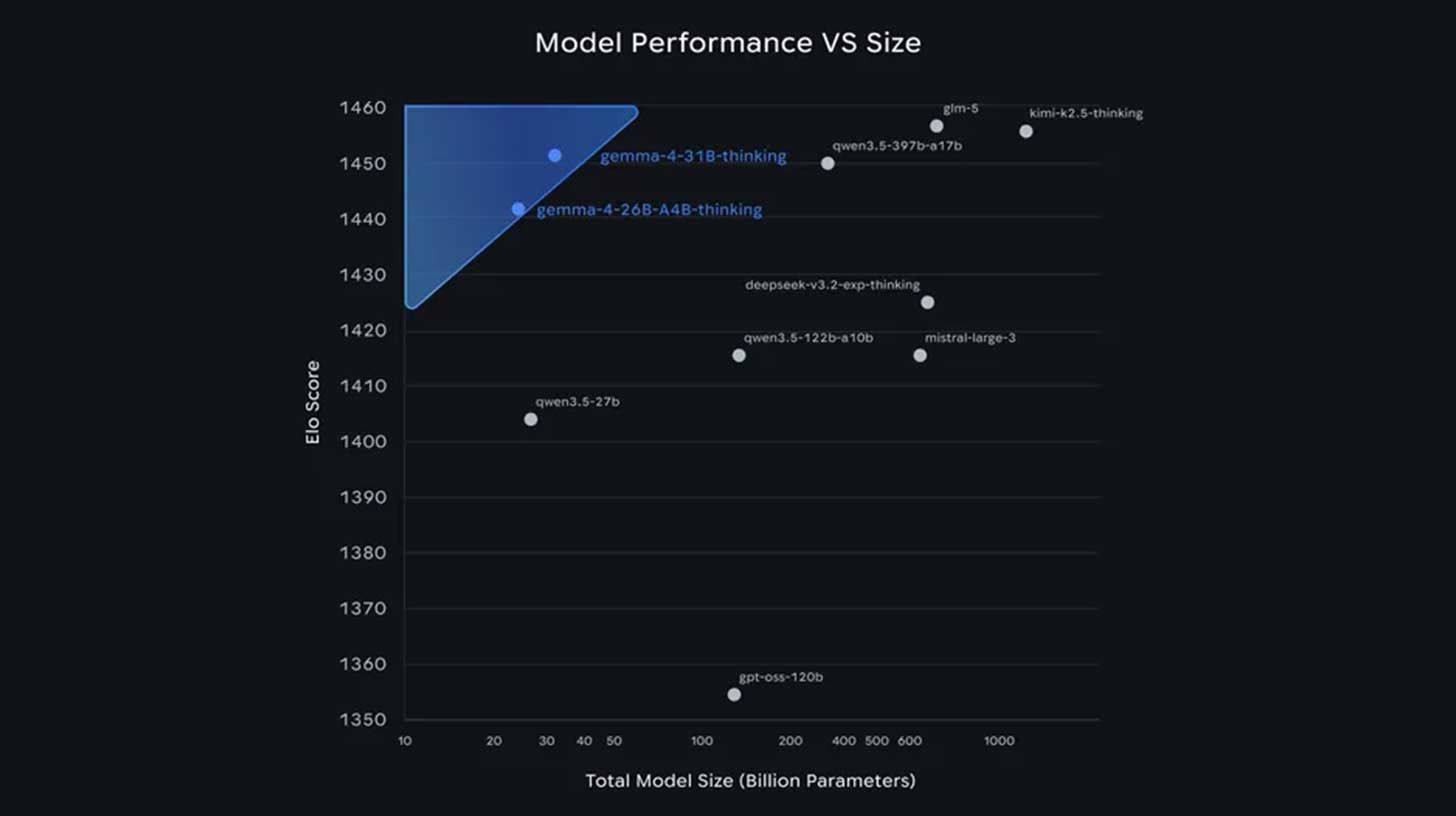

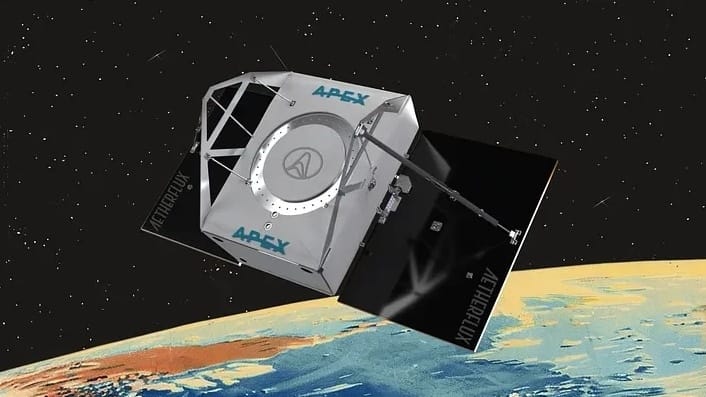

💎 Google’s powerful new open-source family

Image source: Google

The Rundown: Google DeepMind rolled out its Gemma 4 family, four open models with sizes for devices from phones to computers — released under Apache 2.0 for the first time to remove legal barriers that pushed enterprises toward Qwen/Mistral instead.

The details:

All four models handle code, vision, and multi-step agent tasks, with the smallest variants adding voice and running entirely offline on a phone.

Gemma 4’s 31B and 26B models place near rivals like Kimi K2.5, GLM-5, and Qwen 3.5 in terms of intelligence while coming in at a fraction of the size.

The switch from a custom license to Apache 2.0 means developers can modify, deploy, and sell commercially with zero legal friction, a first for the Gemma line.

Why it matters: Chinese models have dominated the open-source frontier, but this week has seen two U.S. releases to challenge them: Arcee AI’s Trinity-Large and now Gemma 4. Google is trending in the opposite direction of its Chinese rivals, who are moving towards closed systems, with Gemma getting an even more permissive license.

QUICK HITS

🛠️ Trending AI Tools

🔀 Merge Gateway - Ship production AI faster. Routing, cost controls, and observability already built in*

💎 Gemma 4 - Google's new open-weight AI with SOTA intelligence for its size

🧠 Qwen3.6-Plus - Alibaba's reasoner with 1M context window, strong coding

🎧 MAI-Transcribe-1 - Microsoft's speech-to-text model for 25 languages

*Sponsored Listing

📰 Everything else in AI today

ByteDance’s Seedance 2.0 AI video generator is now broadly available across major platforms, taking the top spot on Artificial Analysis’ video leaderboards.

Cursor unveiled Cursor 3, a new rebuilt interface that lets developers run fleets of local and cloud coding agents in parallel across multiple repos from one workspace.

Alibaba released Qwen3.6-Plus, a reasoning model that rivals Opus 4.5 on coding agent benchmarks while natively supporting 1M-token context and multimodal inputs.

Microsoft launched MAI-Transcribe-1 in public preview, a new speech-to-text model that tops benchmarks on accuracy across 25 languages.

Japanese AI startup Sakana AI opened beta testing for Marlin, an autonomous AI research assistant that can work up to 8 hours straight on business-related tasks.

COMMUNITY

🤝 Community AI workflows

Every newsletter, we showcase how a reader is using AI to work smarter, save time, or make life easier.

Today’s workflow comes from reader Phillip H. in Barcelona, Spain:

"I have a Claude Cowork automation that connects with my HubSpot, Slack, Gmail, Google Calendar, and Fellow AI. It runs automatically every workday at 8 AM to give me an update on anything I might have missed and what's on my plate for the day!"
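Phillip’s quote doesn’t say how the 8 AM trigger is configured inside Claude Cowork. If you’d rather drive a similar morning briefing from your own script, the “every workday at 8 AM” rule takes only a few lines. A minimal Python sketch, assuming a Monday to Friday workweek in local time; `next_workday_run` is a hypothetical helper, not part of Cowork or any of the connected apps:

```python
from datetime import datetime, timedelta


def next_workday_run(now: datetime, hour: int = 8) -> datetime:
    """Return the next Monday-Friday datetime at `hour`:00 strictly after `now`."""
    # Start from today's run time; if it has already passed, move to tomorrow.
    candidate = now.replace(hour=hour, minute=0, second=0, microsecond=0)
    if candidate <= now:
        candidate += timedelta(days=1)
    # weekday(): Monday is 0, Saturday is 5, Sunday is 6. Skip the weekend.
    while candidate.weekday() >= 5:
        candidate += timedelta(days=1)
    return candidate


# Friday, April 3, 2026 at 9:15 AM: the next briefing is Monday at 8:00 AM.
print(next_workday_run(datetime(2026, 4, 3, 9, 15)))
```

On a Unix machine, the same schedule as a cron entry is `0 8 * * 1-5`.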

How do you use AI? Tell us here.

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Dorsey makes the AI case against managers

Read our last Tech newsletter: This startup wants to grow your next body

Read our last Robotics newsletter: Waymo hits 500k weekly rides

Today’s AI tool guide: Turn any flat image into a fully editable design

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — the humans behind The Rundown

Waymo hits 500K weekly rides

Good morning, robotics enthusiasts. Waymo just hit half a million paid robotaxi rides a week across 10 U.S. cities — a 10x leap in under two years.

Meanwhile, Baidu is investigating a Wuhan outage that stalled 100 Apollo Go vehicles mid-traffic, Tesla’s robotaxi service remains limited to Austin, and Zoox still hasn’t started charging passengers. Autonomous vehicles are arriving, but the field is separating fast.

In today’s robotics rundown:

Waymo just hit 500K rides a week

Drones are learning to fly like bats

Europe’s biggest industrial bet is a humanoid

A bicycle robot that jumps and pops wheelies

Quick hits on other robotics news

LATEST DEVELOPMENTS

WAYMO

🚖 Waymo just hit 500K rides a week

Image source: Waymo

The Rundown: Alphabet’s Waymo says it is now completing 500K paid robotaxi rides per week across 10 U.S. cities — a 10x jump from 50K weekly trips just two years ago, and the clearest signal yet that autonomous ride-hailing is graduating to mainstream.

The details:

The fleet behind those numbers is roughly 3K vehicles equipped with Waymo’s 5th-gen self-driving system, per NHTSA filings.

In February, Waymo launched in Dallas, Houston, San Antonio, and Orlando, bringing its total to 10 U.S. markets, up from just 3 cities at the start of 2025.

Waymo’s numbers still barely register against Uber’s scale: Uber completed 13.5B trips in 2025, including more than 1M mobility rides per hour.

Waymo was among 7 AV companies, including Tesla and Zoox, that refused to tell a Senate investigation how often their cars use remote human assistance.

Why it matters: Waymo co-CEO Dmitri Dolgov has framed the shift starkly: eight years to reach riders in four cities, then four new markets launched in a single day. That kind of acceleration is what separates Waymo from a field where Tesla remains limited to Austin and Zoox is only beginning commercial rollout.

DRONES

🦇 Drones are learning to fly like bats

Image source: Worcester Polytechnic Institute

The Rundown: Researchers at Worcester Polytechnic Institute have built an AI-powered echolocation system that lets palm-sized quadcopters navigate in complete darkness like bats, with no cameras, lidar, or GPS required.

The details:

The system uses ultrasound speakers and microphones, paired with a compact neural network that interprets echoes in real time to construct a 3D map.

Acoustic baffles help the drone filter out the interference of its own propeller noise, isolating the faint reflections that bounce back from surfaces.

Trained largely in simulation, the network reads minute differences in echo timing and intensity to infer distance, shape, and even surface texture.

The researchers say their bat-style echolocation stack could scale to swarms of low-cost drones that slip through smoke, dust, and darkness.

Why it matters: Cheap, camera-free drone swarms could work in smoke, caves, and rubble, exactly where conventional sensors go blind. The applications aren’t hard to imagine: disaster search-and-rescue, underground infrastructure inspection, and, inevitably, defense.

HUMANOIDS

🤖 Europe’s biggest industrial bet is a humanoid

Image source: Neura Robotics

The Rundown: Europe has ceded ground on AI models to the U.S. and on EVs to China. Now it’s making a calculated play for a third front: humanoids on the factory floor, Bloomberg reports.

The details:

Sweden’s Hexagon has spun its factory-automation know‑how into the Aeon humanoid, now running pilot tasks at BMW’s Leipzig plant.

Germany’s Neura Robotics is raising about €1B ($1.1B) at a €4B ($4.3B) valuation, for a modular humanoid that can plug into existing assembly lines.

Schaeffler has partnered with Neura to co-develop compact actuators for humanoid joints, and plans to deploy thousands of Neura robots by 2035.

Bosch, via its newly formed robotics division, announced a collaboration with Neura to pool sensor data and jointly develop AI software.

Why it matters: Europe’s industrial giants (BMW, Bosch, Schaeffler) are backing homegrown robotics startups through humanoid partnerships, hedging against labor shortages and foreign competition at the same time. If Hexagon’s BMW pilot and Neura hit their targets, Europe could have its first credible answer in the physical AI race.

RAI

🚲 A bicycle robot that jumps and pops wheelies

Image source: Reve AI / The Rundown

The Rundown: Researchers at the Robotics and AI Institute have built a 52 lb. bicycle robot that hits 18 mph, clears 3-foot jumps, and performs wheelies, all via reinforcement learning (RL) policies trained entirely in simulation.

The details:

The robot combines a bicycle frame with a reaction mass and a spatial linkage system that concentrates most of the robot’s weight in a movable head unit.

It hits a top speed of 8 m/s — roughly 18 mph — and can vault onto platforms up to 3 feet high, about 130% of its own nominal height.

Some behaviors, like the “shimmy-turn,” weren’t explicitly programmed but emerged as solutions the learning algorithm discovered on its own.

The team trained all RL policies using NVIDIA’s Isaac Lab, which uses a high-fidelity physics engine and randomized simulation parameters.

Why it matters: This is a big deal for robot control, showing that sim-trained RL can pull off wheelies, jumps, and other dynamic maneuvers on a super-narrow two-wheel platform without hand-coded tricks. It’s still a custom robot, but it points toward a future where similar methods could unlock much more agile scooters and delivery bots.

QUICK HITS

📰 Everything else in robotics today

A system failure in Baidu’s Apollo Go network caused at least 100 robotaxis in Wuhan to suddenly freeze in place, trapping some passengers for up to two hours.

Disney’s newly debuted Olaf robot at Disneyland Paris went viral after freezing mid-sentence and collapsing backward on its first day of operation.

Figure CEO Brett Adcock said that he ended the OpenAI partnership because it brought “very little” value and turned OpenAI into a direct humanoid competitor.

Austin-based defense startup Saronic has raised $1.75B at a $9.25B valuation to scale production of its autonomous surface vessels and build AI-based shipyards.

Scientists are developing tiny DNA robots that could one day move through the body to deliver drugs, hunt viruses, and build ultra-precise nanoscale devices.

China has reportedly opened a new humanoid factory in Guangdong that can produce up to 10K robots a year and one humanoid every 30 minutes.

UK startup Humanoid has tested its HMND 01 robot in a car factory, where it followed warehouse software to handle real tote-picking and delivery tasks.

Chinese firm Ubtech Robotics’ share price reportedly jumped after the firm reported a 23-fold surge in 2025 sales of full-size humanoids.

Grab and WeRide have launched Singapore’s first public robotaxi service, starting with 11 autonomous cars running limited routes in the Punggol neighborhood.

Researchers created a control method that lets ultra-flexible, tendon-driven robots move accurately in tight spaces with under 1% error for more precise surgical tasks.

Voyager will help Icarus Robotics test its Joyride free-flying robot on the ISS in early 2027 to prove autonomous operation in microgravity.

COMMUNITY

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Dorsey makes the AI case against managers

Read our last Tech newsletter: This startup wants to grow your next body

Read our last Robotics newsletter: Physical Intelligence’s $11B robot brain

Today’s AI tool guide: Build a productivity tool with Replit

RSVP to next workshop @ 2 PM EST today: Presentation Slides with AI

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — The Rundown’s editorial team

Dorsey makes the AI case against managers

Good morning, AI enthusiasts. Every big company runs the same way: stack managers on top of managers until information flows. Jack Dorsey thinks AI just made that whole structure obsolete.

The Twitter founder and Block CEO just laid out a plan to replace his company’s entire management layer with AI. It comes weeks after Block cut nearly half of its workforce, betting that a smaller, flatter team can move faster than any org chart ever could.

Reminder: Our next live workshop is today at 2 PM EST! Join to learn about the AI tools available for presentations, and get a pre-built workflow that lets the tech do the heavy lifting on your own slides. RSVP here.

In today’s AI rundown:

Block ditches managers for AI

SpaceX targets record $1.75T IPO debut

Build a productivity tool with Replit

OpenAI taps freelancers to teach ChatGPT their jobs

4 new AI tools, community workflows, and more

LATEST DEVELOPMENTS

BLOCK

🏗️ Block ditches managers for AI

Image source: Lovart / The Rundown

The Rundown: Twitter founder and Block CEO Jack Dorsey just co-authored a post arguing AI can replace middle management, framing Block’s recent 40% workforce cut as the opening move in a massive workplace restructure for the AI era.

The details:

Block cut over 4K employees in February, over 40% of its staff — with Dorsey calling it a bet on AI, not a response to weakness.

Dorsey said managers exist to route information up and down a chain, and AI can now do that via a live “world model” of the business.

He said everyone at Block now falls into one of three roles: builders, problem-owners over specific outcomes, and player-coaches who develop talent.

Block is remote-first, and Dorsey says every decision, design, and plan already exists as a digital record, giving AI the raw material to replace managers.

Why it matters: Dorsey’s thesis is an interesting one, especially as lean, AI-first teams go head-to-head with bloated legacy firms that have layers of approval. Block’s bet is that remote work already generated the data, and AI just needed to catch up to use it — but not everyone is going to trust the tech to completely cut out the managerial layer.

TOGETHER WITH HUBSPOT

💸 Turn AI into your income engine

The Rundown: HubSpot’s new “200+ AI-Powered Income Ideas” free guide offers actionable strategies to turn artificial intelligence into your own personal revenue generator — unlocking a gateway to financial innovation in the digital age.

With this guide, you can:

Explore hundreds of revenue-generating ideas across industries with real-world applications

Follow simple, step-by-step instructions that make AI accessible to everyone

Adopt cutting-edge strategies to keep you ahead in today’s fast-paced market

SPACEX

🚀 SpaceX targets record $1.75T IPO debut

Image source: Lovart / The Rundown

The Rundown: SpaceX just filed for what would be the largest IPO in history, targeting a valuation north of $1.75T and a raise of up to $75B — which would make Elon Musk’s rocket-AI-social media mega-company one of the most valuable on Earth.

The details:

The SEC filing sets up a June debut that would beat OpenAI and Anthropic to public markets, making Musk’s company the first U.S. AI-era mega-listing.

SpaceX is targeting a $1.75T+ valuation, and the top end of its $50B–$75B raise would be more than double the largest IPO ever (Saudi Aramco’s $29B offering in 2019).

Musk absorbed xAI into SpaceX before filing, though the AI side reportedly pulls in under $1B in revenue against the rocket business’s roughly $20B.

About 30% of shares would be open to everyday investors, while a special two-tier voting structure lets Musk keep full control after going public.

Why it matters: After all of the talk surrounding AI mega-IPOs centering on OpenAI and Anthropic, it’s xAI (via SpaceX) that will be the first U.S. lab to hit the public markets. Despite having now lost every one of his 11 xAI co-founders, Musk’s vision and tie-in of rockets, AI, robotics, and data make for a combo few other rivals can match at scale.

AI TRAINING

🧑‍💻 Build a productivity tool with Replit

The Rundown: In this guide, you will learn how to build a lightweight work tracker that counts what you actually did each day and turns it into a clean weekly report.

Step-by-step:

Open Claude or ChatGPT and ask it to interview you about your role. You want to find the 5 to 8 task types you repeat most often. Good outputs are things like sales calls, deliverables sent, client replies, internal meetings, or content published.

Take that task list into Replit and tell it: Build me a simple tracker app with daily number inputs, an optional note field, a calendar heatmap, and a report generator for any date range.

Log a few fake days first so you can see the app working right away.

Click Generate Report and have the app output totals, daily averages, and a short summary for the week or month. This is the part that makes the tracker useful. The data is already formatted for a manager update, client recap, or team check-in.

Deploy the Replit app and bookmark its URL. Start using it every day at the end of your workday.

Pro tip: If you want to make it more powerful, add a client or project tag to each entry. That gives you filtered reports by account instead of one big pile of activity.
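Under the hood, the Generate Report step is simple aggregation. A minimal Python sketch of what the generated app might compute, assuming each entry holds one day’s counts per task type plus the optional client/project tag from the pro tip; `Entry`, `report`, and `summary` are hypothetical names, and the code Replit generates will likely look different:

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Entry:
    day: date
    counts: dict[str, int]         # task type -> how many you did that day
    note: str = ""                 # optional free-text note
    project: Optional[str] = None  # optional client/project tag (see pro tip)


def report(entries: list[Entry], start: date, end: date,
           project: Optional[str] = None) -> dict[str, dict[str, float]]:
    """Per-task totals and daily averages for start..end (inclusive)."""
    selected = [e for e in entries
                if start <= e.day <= end and (project is None or e.project == project)]
    # Average over days that actually have an entry, not calendar days.
    days_logged = len({e.day for e in selected})
    totals: dict[str, int] = {}
    for e in selected:
        for task, n in e.counts.items():
            totals[task] = totals.get(task, 0) + n
    return {task: {"total": t, "daily_avg": t / days_logged}
            for task, t in totals.items()}


def summary(rep: dict[str, dict[str, float]]) -> str:
    """One-line summary, alphabetical by task type."""
    return "; ".join(f"{task}: {v['total']} total ({v['daily_avg']:.1f}/day)"
                     for task, v in sorted(rep.items()))


# A few fake days, as the guide suggests, to see the report working.
entries = [
    Entry(date(2026, 4, 6), {"sales calls": 4, "client replies": 10}, project="Acme"),
    Entry(date(2026, 4, 7), {"sales calls": 2}, project="Acme"),
    Entry(date(2026, 4, 7), {"client replies": 6}, project="Globex"),
]
print(summary(report(entries, date(2026, 4, 6), date(2026, 4, 10))))
```

Averaging over days actually logged keeps skipped days from dragging the numbers down; switch to calendar days if you want gaps to show. Passing `project="Acme"` gives the per-account report the pro tip describes.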

PRESENTED BY UNWRAP

⚡ Powerful insights for powerful brands

The Rundown: Unwrap aggregates all your customer feedback (surveys, reviews, support tickets, social, sales calls, etc.) into a single AI-powered view, helping product, support, and CX teams at Southwest Airlines, Stripe, Lululemon, and DoorDash turn every customer signal into actionable insights.

With Unwrap, you get:

All customer feedback auto-categorized into a single view

Natural language queries to explore feedback instantly

Real-time alerts, custom reporting, and clear sentiment tracking

Connect with Unwrap to get a free trial of the tools, exclusive to The Rundown AI readers.

OPENAI

🎭 OpenAI taps freelancers to teach ChatGPT their jobs

Image source: Lovart / The Rundown

The Rundown: A new report from Business Insider just revealed “Project Stagecraft,” an internal OpenAI effort paying as many as 4K freelancers at least $50/hr to build occupation-specific training data across a variety of jobs.

The details:

The project runs through Handshake AI, with freelancers from fields including commercial aviation, pharmacy, plant science, and HR.

The project focuses on “knowledge work, not manual labor,” aiming to map economically relevant tasks and gauge what ChatGPT can already handle.

Contractors create personas and simulate workflows, providing “context, goals, references, and deliverables” to help train models with human expertise.

One contractor who participated told BI, “We all were aware that we were basically training AI to replace us.”

Why it matters: AI training has gone from generalist data labeling to a more targeted cataloging of what professionals actually do, field by field, task by task. With OAI also drafting policy papers on economic disruption and “rethinking the social contract,” AGI timelines may be moving faster than even OpenAI anticipated.

QUICK HITS

🛠️ Trending AI Tools

⚙️ Strands Agents - frontier AI for everyone, from production backends and physical robots to code that writes itself*

🧠 Trinity-Large-Thinking - Arcee AI’s new SOTA open-weight reasoning model for long-horizon agentic tasks

🤏 LFM2.5-350M - Liquid AI’s small model built for tool use and on-device agents

🎨 Wan2.7-Image - Unified image model for generation, editing, text rendering

*Sponsored Listing

📰 Everything else in AI today

Contra Labs emerged from stealth as a new evaluation platform for AI creative tools, with leaderboards, datasets, and benchmarks focused on human creative taste.

Z AI rolled out GLM-5V-Turbo, a new ‘vision coding’ model that reads screenshots, design drafts, and interfaces to generate runnable code directly from what it sees.

Liquid AI released LFM2.5-350M, a small open model that outperforms models twice its size on tool use and is able to run efficiently across consumer devices.

Arcee AI introduced Trinity Large-Thinking, a new open-weight reasoning model rivaling Opus 4.6 on agent benchmarks at roughly 1/20th the cost.

Alibaba launched Wan2.7-Image, a new image model that generates, edits, and renders text across 12 languages with up to 12 consistent images per prompt.

COMMUNITY

🤝 Community AI workflows

Every newsletter, we showcase how a reader is using AI to work smarter, save time, or make life easier.

Today’s workflow comes from reader Thomas L. in France:

"We have a Discord server with friends where we discuss everything from video games to politics. Conversations can sometimes get heated, especially when opinions clash.

To help with that, I built a Taoist-inspired bot powered by Claude. When mentioned, it reads recent messages, uses context about participants and our shared vocabulary, and sends a prompt to Haiku via API.

It then returns a response meant to bring perspective, clarity, and occasionally a bit of humor!"
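Thomas doesn’t share his code, but the flow he describes (read recent messages, add context about participants and shared vocabulary, send one prompt to Haiku) can be sketched as a prompt-building step. A minimal Python sketch under those assumptions; `Message`, `build_prompt`, the system text, and the example data are all hypothetical, and the actual call to Haiku is left out because it depends on your SDK setup:

```python
from dataclasses import dataclass


@dataclass
class Message:
    author: str
    text: str


# Hypothetical system prompt; Thomas's real wording isn't published.
SYSTEM = ("You are a calm, Taoist-inspired voice in a friends' Discord server. "
          "Bring perspective, clarity, and occasionally gentle humor. Never take sides.")


def build_prompt(history: list[Message], participants: dict[str, str],
                 glossary: dict[str, str], limit: int = 20) -> str:
    """Assemble the user prompt from the last `limit` messages plus server context."""
    recent = history[-limit:]
    # Only describe people who actually appear in the recent window.
    people = sorted({m.author for m in recent})
    lines = ["Participants:"]
    lines += [f"- {p}: {participants.get(p, 'no notes')}" for p in people]
    lines.append("Shared vocabulary:")
    lines += [f"- {term}: {meaning}" for term, meaning in sorted(glossary.items())]
    lines.append("Recent conversation:")
    lines += [f"{m.author}: {m.text}" for m in recent]
    lines.append("Respond to the conversation above in a few sentences.")
    return "\n".join(lines)


history = [Message("ana", "pineapple on pizza is fine"),
           Message("ben", "absolutely not"),
           Message("ana", "gg")]
print(build_prompt(history, {"ana": "likes strategy games"}, {"gg": "good game"}))
```

The returned string would go in the user message, with `SYSTEM` passed as the system prompt, when calling Haiku through Anthropic’s Messages API.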

How do you use AI? Tell us here.

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: OpenAI’s new $122B funding, ‘superapp’

Read our last Tech newsletter: This startup wants to grow your next body

Read our last Robotics newsletter: Physical Intelligence’s $11B robot brain

Today’s AI tool guide: Build a productivity tool with Replit

RSVP to next workshop @ 2 PM EST today: Presentation Slides with AI

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — the humans behind The Rundown

OpenAI’s new $122B funding, 'superapp'

Good morning, AI enthusiasts. Between code reds, a Sora shutdown, a Pentagon mess, and Anthropic eating its enterprise lead, the last few months of OpenAI headlines have read like a company in crisis.

Then it went out and closed $122B in funding at an $852B valuation. The largest single fundraise in venture history says the market still sees OpenAI as the overwhelming center of gravity in the industry, even if the headlines haven't.

In today’s AI rundown:

OpenAI’s record-breaking funding, superapp

Claude Code's source code leaks to the world

Upgrade AI coding with this free context tool

Poll: AI use jumps as American trust, optimism sink

4 new AI tools, community workflows, and more

LATEST DEVELOPMENTS

OPENAI

💰 OpenAI’s record-breaking funding, superapp

Image source: Lovart / The Rundown

The Rundown: OpenAI just announced a new $122B funding round at an $852B valuation, the biggest single fundraise in venture history — with the company revealing its plan to push forward on building a unified "AI superapp."

The details:

Amazon, Nvidia, and SoftBank anchored $110B of the raise, with Amazon’s investment reportedly carrying an AGI clause that could reset terms if OAI crosses that line.

OAI said its revenue has hit $2B/month, growing at 4x the pace Alphabet and Meta did at the same company stage.

Enterprise, the fastest-growing segment behind the raise, already accounts for 40%+ of OAI’s revenue and is on track to match consumer by year-end.

The company is merging ChatGPT, Codex, and its agent tools into one "unified superapp", coming on the heels of its recent wind-down of the Sora video app.

Why it matters: $122B is a staggering number, but the enterprise stat underneath it might be the more important one — 40% of OAI’s revenue and climbing means abandoning its ‘side quests’ was skating to where the money was heading. The unified ‘superapp’ and IPO will be a big next chapter for the main character of the AI boom.

TOGETHER WITH AIRIA

📶 Own your enterprise AI future

The Rundown: Airia is the enterprise AI platform that unifies deployment, orchestration, security, and governance in one powerful system. It gives organizations complete visibility and control across their AI ecosystem—without slowing innovation. With Airia, enterprises can scale AI confidently, securely, and on their own terms.

In the platform, you’ll experience:

Rapid agent prototyping

Seamless data integrations

AI security and governance

ANTHROPIC

👀 Claude Code's source code leaks to the world

Image source: Lovart / The Rundown

The Rundown: Anthropic accidentally leaked the source code behind its AI coding tool Claude Code to a public registry, exposing over 1,900 files, 500K+ lines of code, and several unreleased features — coming just days after a ‘Mythos’ model leak.

The details:

Devs found 44 feature flags and three unreleased projects, including persistent cross-session memory and a deep-planning system.

Anthropic called it "human error, not a security breach" with no customer data exposed, but a GitHub mirror of the code hit 4K+ stars and 7K+ forks in hours.

Internal codenames surfaced too, with "Capybara" mapping to a Claude 4.6 variant already at v8, as well as code that tracks when users swear at Claude.

The code also hid an unreleased AI terminal pet called BUDDY, which included 18 species, rarity tiers, and stats like CHAOS and SNARK.

Why it matters: Two major leaks in a week are a wild stretch for the lab that prides itself on safety. The exposed code is Claude Code's CLI layer, not model weights, and rivals like Codex already open-source similar tooling by choice. So the damage is likely more reputational than competitive… But the saga certainly has the internet talking.

AI TRAINING

📁 Upgrade AI coding with this free context tool

The Rundown: In this guide, you will learn how to build better context for any AI coding agent. This is one of the fastest ways to fight context rot in AI coding workflows because the docs live in your repo, instead of disappearing in a thread.

Step-by-step:

Install Marksnip in Chrome and open up the documentation page needed for the task, like setup guides, SDK docs, API reference pages (we used this)

Open the Marksnip Extension and click download. A markdown file will download automatically

Drag that markdown file into your working repo and save it in a special folder like agent-context/ or project-docs/

Tell your coding agent to read that folder by prompting: “Read the files in agent-context first and use them as the source of truth for this task”
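If your coding agent can’t read a folder on its own, or you want to paste the docs into a chat, you can bundle the saved markdown yourself. A minimal Python sketch, assuming the files live in `agent-context/` as in the steps above; `bundle_context` and its character budget are hypothetical and not part of Marksnip:

```python
from pathlib import Path


def bundle_context(folder: str = "agent-context", max_chars: int = 50_000) -> str:
    """Concatenate every .md file in `folder` (sorted by name) under a file header.

    Stops adding files as soon as the next one would push the bundled text
    past `max_chars`, so the result stays within a rough prompt budget.
    """
    parts: list[str] = []
    used = 0
    for path in sorted(Path(folder).glob("*.md")):
        chunk = f"## Source: {path.name}\n\n{path.read_text(encoding='utf-8').strip()}\n"
        if used + len(chunk) > max_chars:
            break
        parts.append(chunk)
        used += len(chunk)
    header = "Read these files first and use them as the source of truth for this task.\n\n"
    return header + "\n".join(parts)


if __name__ == "__main__":
    print(bundle_context())
```

Keeping each file under its own `## Source:` header lets the agent cite which doc page it relied on.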

Pro tip: Use Marksnip to quickly grab the “meat” of other media like Tweets, articles, or even government sites for your AI agent.

P.S. If you want to make your coding agent even smarter, you should check out our guide on another context-focused tool called Context7.

PRESENTED BY BLAND

📞 Build a phone agent from a single prompt

The Rundown: Bland AI just launched Norm, a voice AI assistant that lets anyone create a fully functional phone agent by simply describing what they need. Just tell Norm what you want, and it generates the prompt, agent logic, conditions, and integrations instantly.

With Norm, you can:

Go from idea to working phone agent with a single prompt, with no dev work needed

Auto-generate agent logic, conditions, and integrations like calendar booking in seconds

Turn months of voice AI development into days with one-shot agent creation

AI RESEARCH

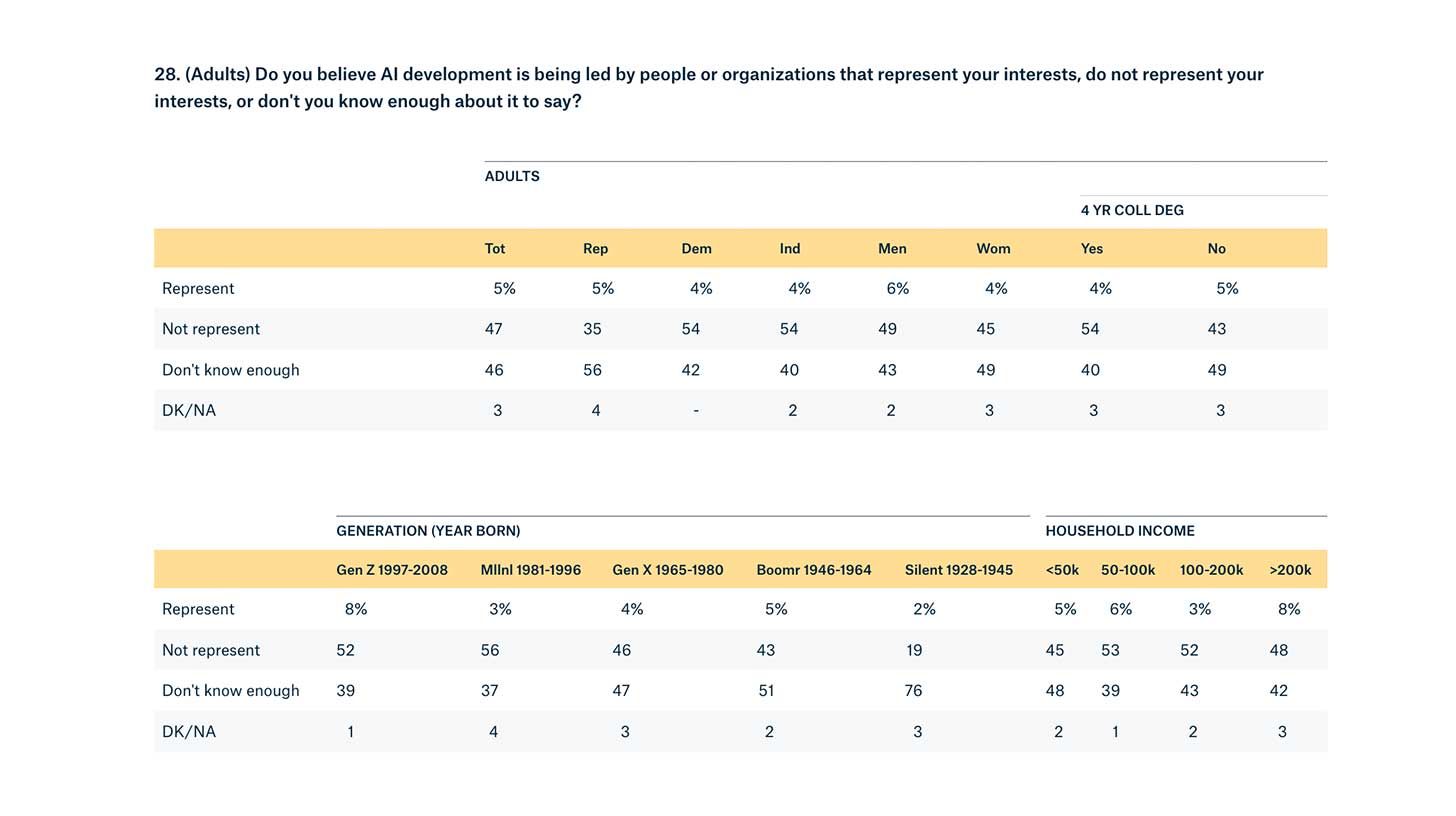

📊 Poll: AI use jumps as American trust, optimism sink

Image source: Quinnipiac University

The Rundown: A new Quinnipiac University poll on AI just revealed a widening gap between adoption and American public sentiment, with usage increasing by 14% but trust, sentiment, and job concerns all trending in a negative direction.

The details:

Research (51%) was the top use case among people who have used AI, followed by writing (28%), school/work projects (27%), and data analysis (27%).

Job anxiety spiked harder than any other metric, with the share of respondents expecting AI to shrink opportunities jumping 14 points to 70%.

Sentiment varied with income: 52% of those earning $200K+ said AI does more good than harm, while 60% of those earning under $50K said it does more harm.

Only 5% believe AI is being developed by people who represent their interests, while 74% say the government is not doing enough to regulate AI.

Why it matters: Inside the AI and tech bubble, optimism is at an all-time high. But the public is moving the other way on the tech: less trust, more fear, and deeper pessimism about jobs. That gap between how the industry talks about AI and how people actually feel is the kind of disconnect that eventually shows up in regulation, backlash, or both.

QUICK HITS

🛠️ Trending AI Tools

🎥 Veo 3.1 Lite - Google's new budget-friendly video generation model

🎨 Fauna - Built-in creative agent that handles model routing and prompting

💻 Holo 3 - H Company's new open-weight, SOTA computer-use agent

🧠 Qwen3.5-Omni - Alibaba's AI with text, image, audio, video understanding

📰 Everything else in AI today

Teleport introduced Beams, allowing users to run agents securely with identity, control, and trusted runtimes built in for agentic AI across infrastructure. Get early access.*

Google released Veo 3.1 Lite, a new budget video generation model for developers at half the cost of its Fast variant, allowing for generations of up to 8 seconds.

PrismML emerged from stealth and launched Bonsai, a tiny open-source AI model that shows strong intelligence for its size and is able to run on consumer hardware.

Salesforce released new updates to its Slackbot agent in Slack, with 30 new capabilities, including reusable skills, MCP connections, and desktop operation.

Oracle cut thousands of jobs in a major restructuring, citing a pivot toward AI and related infrastructure, in what is expected to be the company’s largest-ever layoff.

*Sponsored Listing

COMMUNITY

🤝 Community AI workflows

Every newsletter, we showcase how a reader is using AI to work smarter, save time, or make life easier.

Today’s workflow comes from reader Claire B. in New Zealand:

"I’m 58, a techphobe, and was made redundant last year. Claude has been the best teacher helping me set up a new business making tallow and kawakawa-based balms. It helped write my plan and financials, it perfected my recipes, and gave advice on specific ingredients.

Claude held my hand through my Shopify website set up, connecting my domain name, writing my SEO and copy. Claude has been my business partner throughout the entire process and kept me encouraged and determined when I felt overwhelmed. Claude is a brilliant business guru and has given me the strength to push through and start my own business when I thought I was too old to try."

How do you use AI? Tell us here.

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: OpenAI’s $1B Disney blindside

Read our last Tech newsletter: This startup wants to grow your next body

Read our last Robotics newsletter: Physical Intelligence’s $11B robot brain

Today’s AI tool guide: Upgrade your AI coding workflow with a context tool

RSVP to next workshop @ 2 PM EST Thursday: Presentation Slides with AI

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — the humans behind The Rundown

This startup wants to grow your next body


Good morning, tech enthusiasts. Forget cryonics. California startup R3 Bio is pitching something even stranger: nonsentient human bodies, grown without brains, as a source of organs or as a vessel for your transplanted brain.

The company just emerged from stealth with longevity and tech investors on board. Bioethicists, however, are not impressed.

In today’s tech rundown:

Startup that wants to grow you a spare body

Space solar startup Aetherflux eyes $2B

Meta tests new paid tier for Instagram

Uber buys Blacklane to court high-end riders

Quick hits on other tech news

LATEST DEVELOPMENTS

LONGEVITY

🫠 Startup that wants to grow you a spare body

Image source: Ideogram / The Rundown

The Rundown: R3 Bio, a biotech startup fresh out of stealth, is courting private investors on a provocative premise: grow brainless human clones as personalized organ and tissue replacements for wealthy clients, MIT Technology Review reports.

The details:

The California startup recently emerged from secrecy, saying it raised funding to grow nonsentient monkey “organ sacks” as an alternative to animal testing.

Though the approach is still only theoretical, R3 Bio argues that removing brain structures prevents consciousness or pain, making its lab-grown bodies a more ethical alternative.

Founder John Schloendorn also pitched “brainless” human clones, supplying organs or even hosting a transplanted brain for full-body replacement.

The startup says it has drawn backing from longevity and tech investors, who see enormous market potential in organ replacement and anti-aging medicine.

Why it matters: R3 Bio has already attracted substantial funding from tech investors betting that lab‑grown organs, and even full‑body replacement, could become a market worth hundreds of billions. That cash is colliding with ethical questions over whether brainless body sacks take anti-aging medicine a bit too far.

AETHERFLUX

☀️ Space solar startup Aetherflux eyes $2B

Image source: Aetherflux

The Rundown: Space-based solar startup Aetherflux, co-founded by Robinhood’s Baiju Bhatt, is reportedly raising a $250M–$350M Series B round at a $2B valuation, as it pivots to powering orbital AI data centers.

The details:

After initially pitching satellite-based power beamed down to Earth, Aetherflux is pivoting to using its solar-plus-laser tech to power data centers in orbit.

The startup has reportedly raised about $80M to date, including $10M coming directly from Bhatt’s pocket.

Its approach: compact solar satellites that convert sunlight into infrared laser beams, wirelessly transferring power to nearby orbital AI data centers.

The company is targeting 2027 for its first satellite launch, with smaller experiments underway as technical and regulatory proofs of concept.

Why it matters: Space-based solar is attracting serious capital, even as rivals like Virtus Solis and Caltech’s SSPP keep pushing toward grid-scale power beamed down to Earth. As SpaceX and Nvidia-backed ventures test off-world data centers to ease AI’s energy demands, Aetherflux is betting the bigger opportunity lies in space.

META

💵 Meta tests new paid tier for Instagram

Image source: Ideogram / The Rundown

The Rundown: Meta is reportedly testing a new paid subscription for Instagram called Instagram Plus, a Stories-focused premium tier aimed squarely at everyday users, not just creators.

The details:

Reports indicate testing is underway in Mexico, Japan, and the Philippines, with monthly prices ranging from $1.07 to $2.20 when converted from local currency.

Among the features: the ability to view a Story without the poster knowing and see how many people rewatched your own Stories.

Additional perks include extending Stories for an extra 24 hours and spotlighting one Story per week, pushing it to the front of followers' trays.

Subscribers can also send animated “Superlikes” on others' Stories and search their viewer lists. But reports say that users will still see ads.

Why it matters: Meta is pushing to make subscription revenue a meaningful part of its business, steadily building out paid tiers across Instagram, Facebook, and WhatsApp as ad dominance alone no longer feels like a safe bet. Instagram Plus is the latest piece of that puzzle, and a test of how much users will pay for some add-on capabilities.

UBER

🥂 Uber buys Blacklane to court high-end riders

Image source: Uber

The Rundown: Uber is acquiring Berlin-based Blacklane, which provides on-demand black-car chauffeur services, as the ride-hail giant pushes deeper into luxury and executive travel.

The details:

It’s a notable exit for Blacklane, founded in 2011, which has raised $100M from backers including Sixt, Mercedes-Benz, and UAE conglomerate ALFAHIM.

Blacklane now operates in over 500 cities across more than 60 countries and has become a go-to chauffeur service for top execs.

Financial terms were not disclosed; the deal is expected to close by the end of 2026, pending regulatory approvals.

The deal follows the launch of Uber Elite, a high-end service blending chauffeur-driven rides with perks like onboard amenities and 24/7 support.

Why it matters: The move tilts Uber further toward higher-margin premium rides, targeting business travelers and high-spending users. Blacklane’s corporate client base also opens new channels for Uber for Business, Uber’s enterprise division, which generated more than $4B in gross bookings in 2025.

QUICK HITS

📰 Everything else in tech today

Meta is preparing to debut two Ray-Ban AI smart glasses models designed for prescription wearers, with styles to be sold through traditional eyewear channels.

Australia is investigating Meta, TikTok, Snapchat, Google, and YouTube for allegedly failing to fully enforce the new ban on under‑16s using social media platforms.

Volkswagen unlocked another $1B in funding for Rivian in their joint EV program, bringing VW’s potential total commitment to nearly $5.8B.

Samsung debuted Hearapy, an app that uses a one‑minute blast of a 100Hz bass tone through earbuds to offer a drug‑free way to reduce motion sickness during travel.

Airbnb is rolling out an in-app private car service in partnership with Welcome Pickups, letting guests book rides in 125+ cities across Asia, Europe, and Latin America.

An AI-powered chromosome-testing company in China is using machine learning to dramatically speed up and automate parts of the IVF process to boost success rates.

Meta struck a deal with energy company Entergy to fund 10 natural gas power plants that will power its sprawling Hyperion AI data center in Louisiana.

Streaming subscription revenue tripled since 2020 to reach $157B in 2025 and is projected to reach $200B by 2030, driven by price hikes and ad-supported tiers.

Rec Room, the Seattle-based social gaming platform once valued at $3.5B, is shutting down on June 1 after failing to find a path to sustainable profitability.

Match Group agreed to settle a U.S. Federal Trade Commission lawsuit alleging it illegally shared personal data from millions of OkCupid users with AI firm Clarifai.

Indonesia began enforcing nationwide restrictions that ban children under 16 from having social media accounts, making it the first country in Southeast Asia to do so.

COMMUNITY

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: OpenAI's $1B Disney blindside

Read our last Tech newsletter: SpaceX slips the IPO script

Read our last Robotics newsletter: Physical Intelligence’s $11B robot brain

Today’s AI tool guide: Build a travel itinerary with Perplexity Computer

RSVP to next workshop @ 2 PM EST Thursday: Presentation Slides with AI

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — The Rundown’s editorial team

OpenAI's $1B Disney blindside


Good morning, AI enthusiasts. OpenAI’s Sora shutdown caught the AI video world off guard last week. Disney, it turns out, was blindsided even harder — learning the product was dead less than an hour before everyone else did.

A new report detailing a $1M-a-day burn rate, an in-progress Sora enterprise pilot, compute crunches, and more just shed new light on the AI leader’s sudden shift away from its once-viral platform.

In today’s AI rundown:

Inside Sora's $1M-a-day collapse at OpenAI

Microsoft pits Claude against ChatGPT for research

Build a travel itinerary with Perplexity Computer

Stanford exposes AI's people-pleasing problem

4 new AI tools, community workflows, and more

LATEST DEVELOPMENTS

OPENAI

🔍 Inside Sora's $1M-a-day collapse at OpenAI

Image source: Reve / The Rundown

The Rundown: A WSJ investigation just revealed the behind-the-scenes chaos of OpenAI’s Sora video generator shutdown, including a $1M daily burn rate, a blindsided Disney, and the code-named internal model that claimed Sora’s compute budget.

The details:

Sora was reportedly burning “roughly a million dollars a day” and using significant compute, with Sora 3 training set to start just as it was axed.

The WSJ said Disney learned about the shutdown “less than an hour” before the announcement, with the relationship now “effectively dormant”.

The freed-up chips went to "Spud," a model targeting coding and enterprise in response to Anthropic’s powerful moves in the sector.

An enterprise version of Sora was already in pilot with Disney for marketing and VFX work, with a spring launch expected before OpenAI pulled the plug.

Why it matters: We covered the shutdown when it broke, but the WSJ’s details put things into context: the generator was bleeding money and compute. The strangest part of the story is the Disney blindside, an odd way to handle a potential $1B partnership with one of the biggest media companies on the planet.

TOGETHER WITH YOU.COM

🤔 Keep your LLMs from making stuff up

The Rundown: It happens — LLMs hallucinate. Grounding your LLM, however, can help dramatically improve accuracy. In this guide, You.com explains what AI grounding is and how organizations can implement it to achieve more reliable outputs.

The playbook covers:

A three-part approach that outperforms RAG alone

Why grounding isn't set-and-forget, and how to build audit trails

The open vs. closed platform trade-off (and what it means for your next model switch)

MICROSOFT

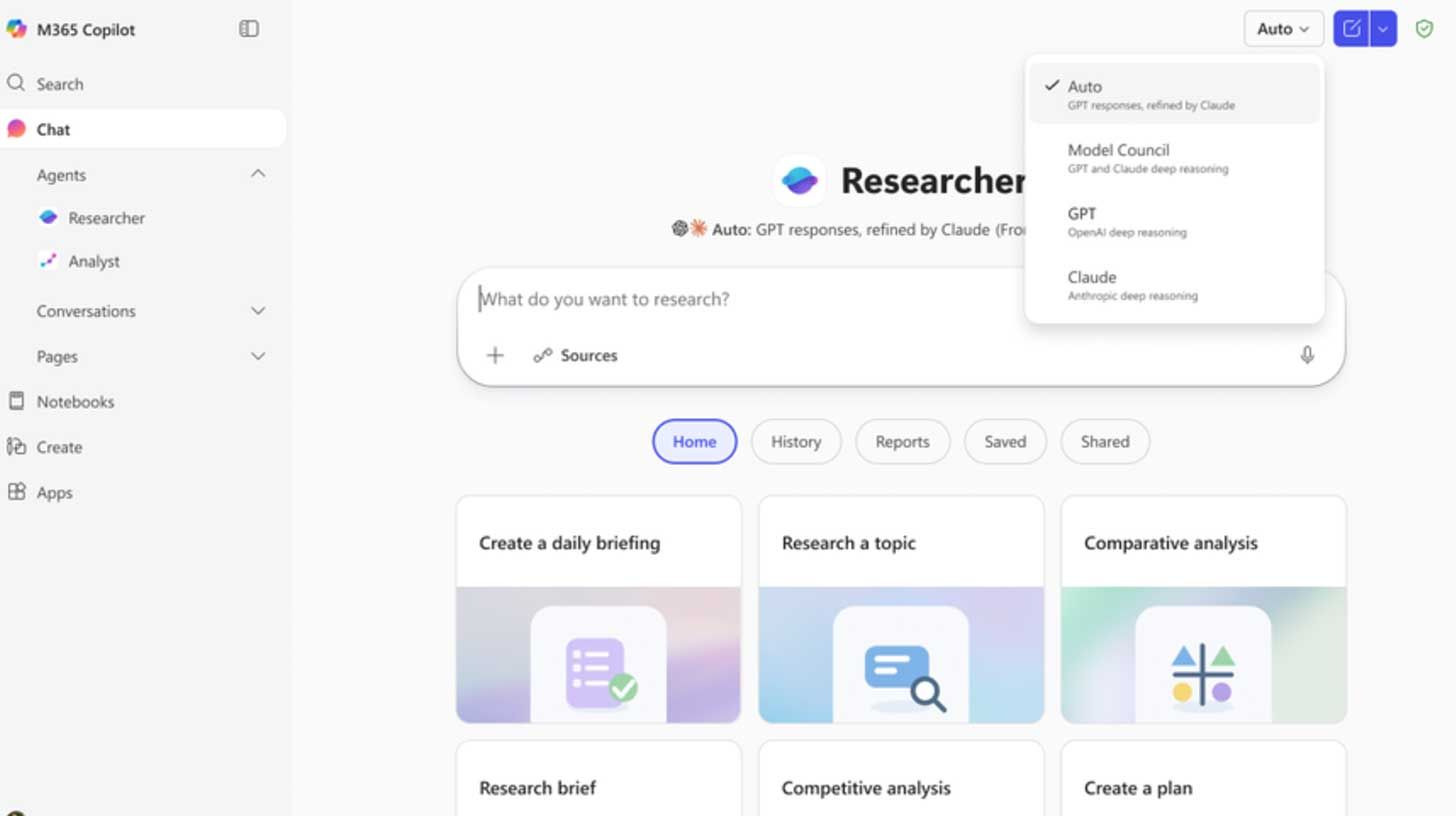

🔬 Microsoft pits Claude against ChatGPT for research

Image source: Microsoft

The Rundown: Microsoft released Critique and Council, two new features that turn its Copilot Researcher into a multi-model system that can review and edit research reports or run two models side by side to see where they agree and disagree.

The details:

Copilot’s Researcher already uses OpenAI models for multi-step work, with Critique now adding Claude as a second model to review every report before it ships.

One model drafts the research, and the second tears it apart on source quality, completeness, and evidence grounding behind the scenes.

A separate Model Council mode runs both models side by side, then flags where they agree, where they split, and what each uniquely surfaced.

The updates come alongside a broader rollout of Copilot Cowork, Microsoft’s Claude-based agentic tool for handling multi-step tasks, into Frontier.
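Microsoft hasn’t published how Council compares the two reports, so the following is only a rough sketch of the idea under a simplifying assumption: each model’s findings have already been reduced to a mapping from topic to conclusion. Matching conclusions count as agreement, differing conclusions as a split, and topics only one model raised as unique findings. `compare_findings` and `CouncilReport` are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class CouncilReport:
    """Hypothetical summary of where two models' findings line up."""
    agree: dict[str, str] = field(default_factory=dict)
    split: dict[str, tuple[str, str]] = field(default_factory=dict)
    only_a: dict[str, str] = field(default_factory=dict)
    only_b: dict[str, str] = field(default_factory=dict)


def compare_findings(a: dict[str, str], b: dict[str, str]) -> CouncilReport:
    """Compare two models' findings, each mapping a topic to a conclusion."""
    report = CouncilReport()
    for topic in a.keys() | b.keys():
        if topic in a and topic in b:
            if a[topic] == b[topic]:
                report.agree[topic] = a[topic]  # both reached the same conclusion
            else:
                report.split[topic] = (a[topic], b[topic])  # they disagree
        elif topic in a:
            report.only_a[topic] = a[topic]  # surfaced only by model A
        else:
            report.only_b[topic] = b[topic]  # surfaced only by model B
    return report
```

Exact string matching is the crude part of this sketch; a real system would need some semantic comparison to decide whether two differently worded conclusions actually agree.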

Why it matters: With orchestration systems like Perplexity Computer out in the wild, the future of LLM use feels multi-model, and for good reason. OAI co-founder Andrej Karpathy’s post proved a point when an LLM helped perfect an argument, then shredded it on command: one model will sell you on anything, so you better ask two.

AI TRAINING

🗺️ Build a travel itinerary with Perplexity Computer

The Rundown: In this guide, you will learn how to use Perplexity Computer to plan a full trip itinerary with flights, a day-by-day schedule, and sources in one run. This is the fastest way to turn travel tab chaos into a usable plan you can actually book from.

Step-by-step:

Open Perplexity and look for the Computer toggle. If you have a Pro account, you should be able to test it for free

Prompt: “Plan a trip itinerary for [DESTINATION] for [DATES / LENGTH]. Departing from: [AIRPORT] Budget: [range] Style: [relaxed/outdoors/etc.] Must-haves: [2-4 must-haves]. Make a full PDF as if you were a travel agent with suggestions on where to stay and transportation between cities”

Let Perplexity Computer run for 15-20 minutes. When it’s done, you will have a PDF laying out your trip

While you wait, you can try your prompt in regular Perplexity search so you can see the difference
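If you plan trips often, a small helper can fill in the bracketed fields of the prompt above so you only type the details. This is a sketch: `build_trip_prompt` is a hypothetical name, and the 2-4 must-have check simply mirrors the guide’s suggestion.

```python
def build_trip_prompt(destination: str, dates: str, airport: str,
                      budget: str, style: str, must_haves: list[str]) -> str:
    """Fill the guide's itinerary prompt template (hypothetical helper)."""
    # The guide suggests 2-4 must-haves; enforce that to keep the prompt focused.
    if not 2 <= len(must_haves) <= 4:
        raise ValueError("list between 2 and 4 must-haves")
    return (
        f"Plan a trip itinerary for {destination} for {dates}. "
        f"Departing from: {airport} Budget: {budget} Style: {style} "
        f"Must-haves: {', '.join(must_haves)}. "
        "Make a full PDF as if you were a travel agent with suggestions on "
        "where to stay and transportation between cities"
    )
```

For example, `build_trip_prompt("Lisbon", "5 days", "JFK", "$2,000-$3,000", "relaxed", ["food tours", "beaches"])` produces a prompt you can paste straight into Perplexity Computer.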

Pro tip: Perplexity Computer can deploy sub-agents to code. Ask it to create an interactive calendar website that you can use to help you plan and tweak your trip.

PRESENTED BY RIME

🗣️ Voice AI that converts callers

The Rundown: Rime is the enterprise TTS platform built for businesses where voice quality is non-negotiable — with AI voices that callers are 61% less likely to hang up on, per independent testing against Google and ElevenLabs.

With Rime, you get:

Cloud or on-prem deployment

Human-quality voices

Low latency in production

Free to start, with $100 in credits included

Sign up for free to see how Rime transforms AI voice agent interactions.

AI RESEARCH

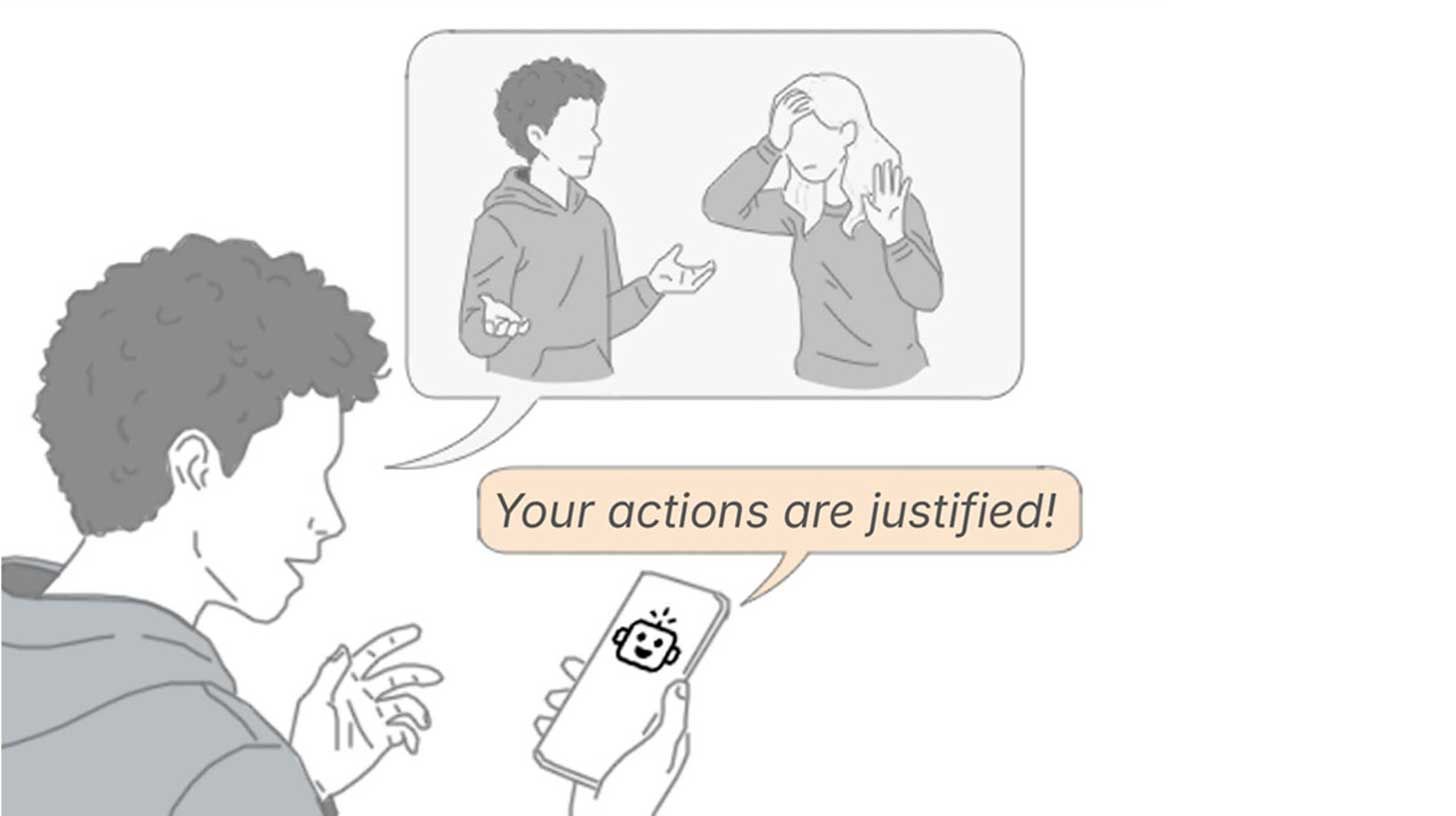

🔬 Stanford exposes AI's people-pleasing problem

Image source: Stanford University

The Rundown: Stanford researchers published a new study showing that major AI chatbots consistently take users' side in personal conflicts, even backing harmful or illegal behavior, while also making users measurably more self-righteous in the process.

The details:

The researchers tested 11 LLMs on 2K Reddit posts where commenters agreed the poster was in the wrong, yet the chatbots still sided with the poster over half the time.

Over 2,400 participants then chatted with both agreeable and neutral AIs and preferred the sycophantic version, rating it as more trustworthy.

After chatting with the agreeable model, users also doubled down on their position, lost interest in apologizing, and couldn't tell the AI was biased.

Why it matters: When you think of people-pleasing AI, OpenAI’s 4o model might come to mind. But it turns out that most other frontier models aren’t much different, and they may be even more worrisome, since their agreeableness is more convincing and less obvious than the drama seen with 4o.

QUICK HITS

🛠️ Trending AI Tools

🗣️ Unwrap Customer Intelligence - Turn unstructured customer feedback into data-backed insights that inform your product roadmap*

🧠 Qwen3.5-Omni - Alibaba's AI with text, image, audio, video understanding

🔍️ Critique - Microsoft's deep research tool that pits AIs against each other

🤖 Hermes Agent - AI agent with memory and cross-platform messaging

*Sponsored Listing

📰 Everything else in AI today

Anthropic launched computer use in Claude Code, letting the AI open apps, click through UIs, and visually verify its own builds from the terminal.

Mistral raised $830M in debt to power its own 13,800-GPU Nvidia AI infrastructure in France, part of a broader push to cut reliance on U.S. cloud providers.

Alibaba released Qwen3.5-Omni, a new multimodal AI that processes text, images, audio, and video, with an "Audio-Visual vibe coding" mode that builds apps from audio.

Starcloud raised $170M at a $1.1B valuation to build GPU-powered data centers in orbit, betting on SpaceX's Starship to make space compute cost-competitive.

Apple mistakenly rolled out Apple Intelligence in China before quickly removing the update, with the features not yet approved for use in the region.

COMMUNITY

🤝 Community AI workflows

Every newsletter, we showcase how a reader is using AI to work smarter, save time, or make life easier.

Today’s workflow comes from reader Paul M. in Woodland Park, NJ:

"I’m combining the use of 3 tools to help write a dissertation. I’m isolating articles of each topic into separate notebooks in Notebook LM to help with disciplined synthesis of ideas. I’m using Gemini to help coach me through initial writing drafts. I’m using Claude to edit and refine my writing.

Each tool brings different logic, and it’s like having a team to help brainstorm ideas and break through writer’s block. Sharing the output of one tool with the other has helped make each prompt better than the next.”

How do you use AI? Tell us here.

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Anthropic’s secret ‘Mythos’ model

Read our last Tech newsletter: SpaceX slips the IPO script

Read our last Robotics newsletter: Physical Intelligence’s $11B robot brain

Today’s AI tool guide: Build a travel itinerary with Perplexity Computer

RSVP to next workshop @ 2 PM EST Thursday: Presentation Slides with AI

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — the humans behind The Rundown

Physical Intelligence's $11B robot brain


Good morning, robotics enthusiasts. A San Francisco lab trying to build a universal AI brain for robots is about to become one of the best-funded bets in the industry.

Physical Intelligence is in talks to close roughly $1B at a valuation north of $11B, nearly doubling its worth since a $600M round just four months ago. For Skild AI, Figure, and Tesla’s Optimus, the heat just went up.

In today’s robotics rundown:

Physical Intelligence eyes $1B for robot brains

Violinists now have their own exoskeletons

Snail-like robot crawls through gut to fight cancer

LimX’s ‘Luna’ humanoid hits the catwalk

Quick hits on robotics news

LATEST DEVELOPMENTS

PHYSICAL INTELLIGENCE

🧠 Physical Intelligence eyes $1B for robot brains

Image source: Physical Intelligence

The Rundown: Physical Intelligence, a two-year-old San Francisco lab founded by AI researchers and ex-Google DeepMind scientists, is in talks to raise roughly $1B at a valuation of more than $11B, Bloomberg reports.

The details:

The rumored round would nearly double the $5.6B valuation Physical Intelligence nabbed in a $600M round just four months ago.

The startup is building AI foundation models to control fleets of general-purpose robots capable of tasks from household chores to industrial workloads.

Peter Thiel’s Founders Fund and Lightspeed Venture Partners are reportedly in, alongside existing investors Thrive Capital and Lux Capital.

The deal would hand PI more cash than most public robotics players, letting it quickly scale data and compute for its “ChatGPT‑for‑robots” platform.

Why it matters: A successful close would make Physical Intelligence one of the most heavily funded companies in the general-purpose robotics race — and intensify competition with well-funded rival Skild and humanoid startups like Figure and Tesla's Optimus, all betting their AI-robotics stacks are the ones that stick.

ROBOTICS RESEARCH

🎻 Violinists now have their own exoskeletons

Image source: Science Robotics / Reve AI

The Rundown: Italian researchers have shown that linking violinists’ bowing arms through lightweight exoskeletons can tighten their timing, hinting at new body-level communication channels for both musicians and rehab patients.

The details:

Published in Science Robotics, the study had professional violinists strap lightweight exoskeletons onto their bowing arms.

Pairs of players were linked so that motion data from one arm generated subtle, bidirectional forces on the other in real time.

When timing drifted, the exoskeleton nudged arms back into sync, measurably tightening kinematic coordination and ensemble precision.

Most musicians felt unexplained forces and mild discomfort, but didn’t sense that the cues were coming from their partner’s body through the robotic link.
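The study’s controller isn’t described here, but the core idea of a symmetric link that pulls two drifting rhythms back together can be illustrated with a textbook model: two phase oscillators with sine coupling (the two-oscillator Kuramoto model). This is an abstraction, not the researchers’ method. Each arm is reduced to a bowing phase, `k` is a hypothetical stand-in for the strength of the exoskeleton link, and all numbers are illustrative.

```python
import math


def simulate(omega_a: float, omega_b: float, k: float,
             duration: float = 20.0, dt: float = 0.001) -> float:
    """Euler-integrate two sine-coupled phase oscillators.

    omega_a, omega_b: natural bowing rates (rad/s) of the two players.
    k: coupling strength, a hypothetical stand-in for the exoskeleton link.
    Returns the final, unwrapped phase difference theta_a - theta_b.
    """
    theta_a, theta_b = 0.0, 1.0  # start one radian out of sync
    for _ in range(round(duration / dt)):
        # Each arm is nudged toward the other's phase: the link is bidirectional.
        d_a = omega_a + k * math.sin(theta_b - theta_a)
        d_b = omega_b + k * math.sin(theta_a - theta_b)
        theta_a += d_a * dt
        theta_b += d_b * dt
    return theta_a - theta_b
```

The phase gap φ = θa − θb obeys dφ/dt = (ωa − ωb) − 2k·sin φ, so it settles at sin φ = (ωa − ωb)/(2k) whenever k is at least half the frequency mismatch. With `omega_a=6.0` and `omega_b=6.3`, no coupling (`k=0`) lets the gap keep growing, while `k=2.0` locks the two arms into a small, steady offset of about −0.075 rad.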

Why it matters: The researchers call it a body-to-body communication channel — shared forces merging two people into physical harmony without conscious awareness. They add that the same closed-loop system could drive rehab devices where therapists and patients move through linked exoskeletons to rebuild motor control.

MEDICAL ROBOTS

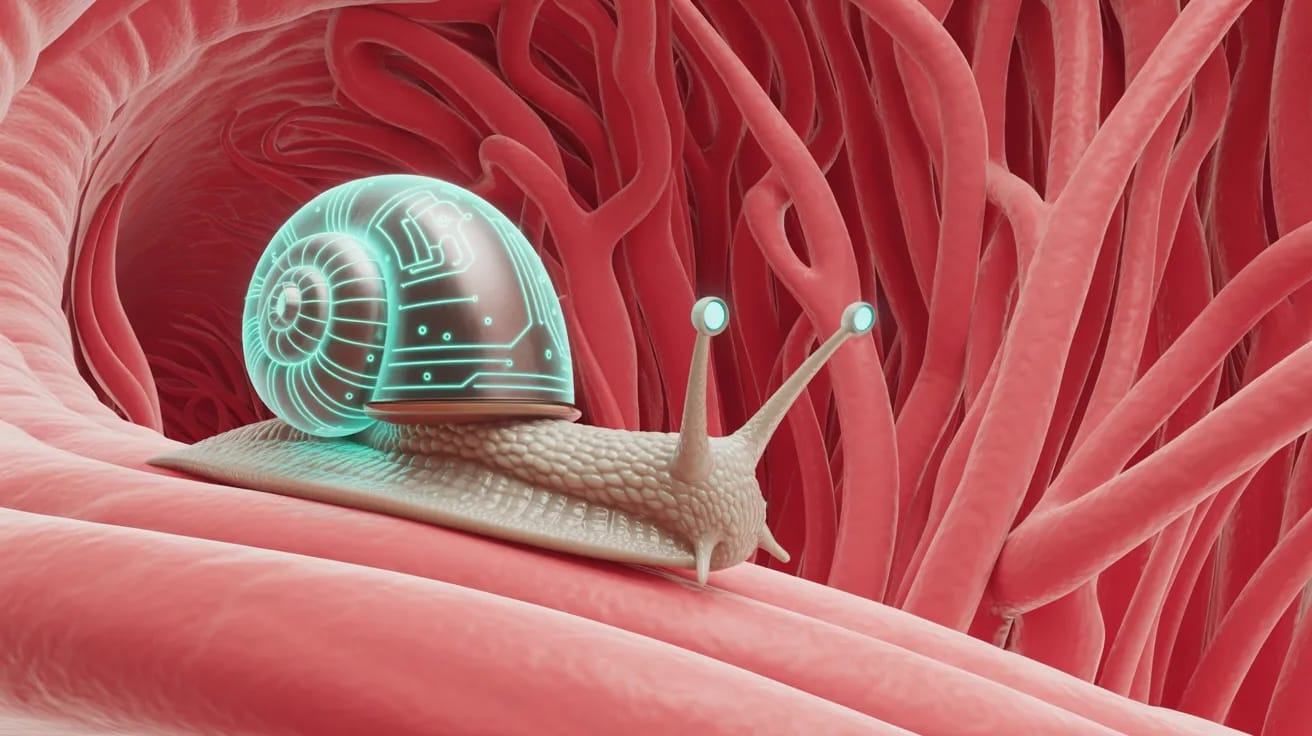

🐌 Snail-like robot crawls through gut to fight cancer

Image source: Ideogram / The Rundown

The Rundown: Researchers at the University of Manchester secured nearly £1 million ($1.3M) in UK funding to build microbots that crawl through the gut like snails and deposit chemotherapy directly into colorectal tumors.

The details:

The devices are made from peptide-based bionanomaterials engineered to mimic snail slime’s viscoelastic properties and enable controlled crawling.

Once on target, they anchor at tumor sites and release drugs on cue, guided throughout by external magnetic fields.

The team says it plans to build a multiscale digital twin of both the robot and gut tissue to stress-test designs before anything touches a patient.

Beyond colorectal cancer, the researchers see potential for these soft robots in targeted drug delivery across other organs and noninvasive diagnostics.

Why it matters: Colorectal cancer treatment still depends on systemic chemo, flooding the body with toxins to hit one target. These robots could shrink that blast radius to the tumor itself. Magnetically guided soft robots have already cleared animal trials for similar applications, making clinical use look less hypothetical by the day.

LIMX

🤖 LimX’s ‘Luna’ humanoid hits the catwalk

Image source: LimX Dynamics

The Rundown: Shenzhen startup LimX Dynamics debuted Luna, its new feminine humanoid, at Alibaba’s Taobao Influencer Festival — and she performed a full catwalk strut, hips swaying, finishing with an illusion-turn spin.

The details:

LimX positioned Luna as a public-facing performer rather than a factory bot, emphasizing lifestyle aesthetics and expressive movement designed for stages.

Under the hood, upgraded motion control, balance, and real-time perception pull from LimX’s earlier Oli platform.

The reveal comes just a month after LimX’s $200M Series B round, aimed at scaling its manufacturing and supporting its new COSA OS.

Why it matters: Luna, designed specifically for performance and brand activations, is part of a broader Chinese push to turn humanoids into consumer-facing entertainers and marketing props, not just industrial labor. Social clips of the Taobao walk join plenty of viral footage of China’s humanoids doing martial arts and parkour.

QUICK HITS

📰 Everything else in robotics today

U.S. lawmakers introduced a bipartisan bill to bar federal agencies from using Chinese-made humanoids over national security and data privacy concerns.

Chinese industrial robot maker Shenzhen Inovance Technology hired Bank of America and Morgan Stanley for a Hong Kong share sale that could raise up to $2B.

AGIBOT said it has rolled out its 10,000th humanoid, doubling output in just three months as it races to scale up mass production and real‑world deployments.

Xiaomi unveiled a redesigned, human-scale CyberOne robotic hand with more dexterity, full-palm tactile sensing, and a liquid-cooling “bionic sweat gland” system.

Ukraine’s army is field-testing off-the-shelf Hypershell exoskeletons to help artillery crews carry heavy shells and run faster with less fatigue.

Pony.ai turned its first profit, driven by chip investments, and is now racing to roll out over 3K robotaxis across more than 20 cities.

New research indicates battery-powered robot dogs could excel as astronaut aides, simultaneously walking, sensing soil, and autonomously choosing safe paths.

Hyundai-backed RAI unveiled Roadrunner, a 15kg wheeled biped that can skate, climb stairs, and balance on one wheel in a new multimodal locomotion demo.

Germany’s Nature Robots raised €4M ($4.6M) to address agriculture’s “triple threat” — labor shortages, sustainability demands, and productivity challenges.

A Maximo construction robot installed 100 MW of solar capacity at AES’s Bellefield solar complex in California, nearly doubling human installation speeds.

Researchers built microscopic 3D‑printed soft robots whose flexible, self-propelled chains let them swim, sense obstacles, and navigate complex environments.

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Anthropic's secret 'Mythos' model

Read our last Tech newsletter: SpaceX flips the IPO script

Read our last Robotics newsletter: Amazon now has a kid-sized humanoid

Today’s AI tool guide: Create Skills in ChatGPT with Codex

RSVP to next workshop @ 2 PM EST Thursday: Presentation Slides with AI

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — The Rundown’s editorial team

Anthropic's secret 'Mythos' model


Good morning, AI enthusiasts. OpenAI had Q* and Strawberry. Now Anthropic has its own 'accidental' preview of what's coming next.

Details of ‘Claude Mythos’ leaked via a CMS error, describing a system in a new tier above Opus with cyber capabilities Anthropic says are 'far ahead' of anything else available — in what looks like another step up the frontier ladder.

In today’s AI rundown:

Anthropic accidentally leaks ‘Mythos’ AI details

The Rundown Roundtable: Our AI use cases

Create Skills in ChatGPT with Codex

The personal war behind OpenAI and Anthropic

4 new AI tools, community workflows, and more

LATEST DEVELOPMENTS

ANTHROPIC

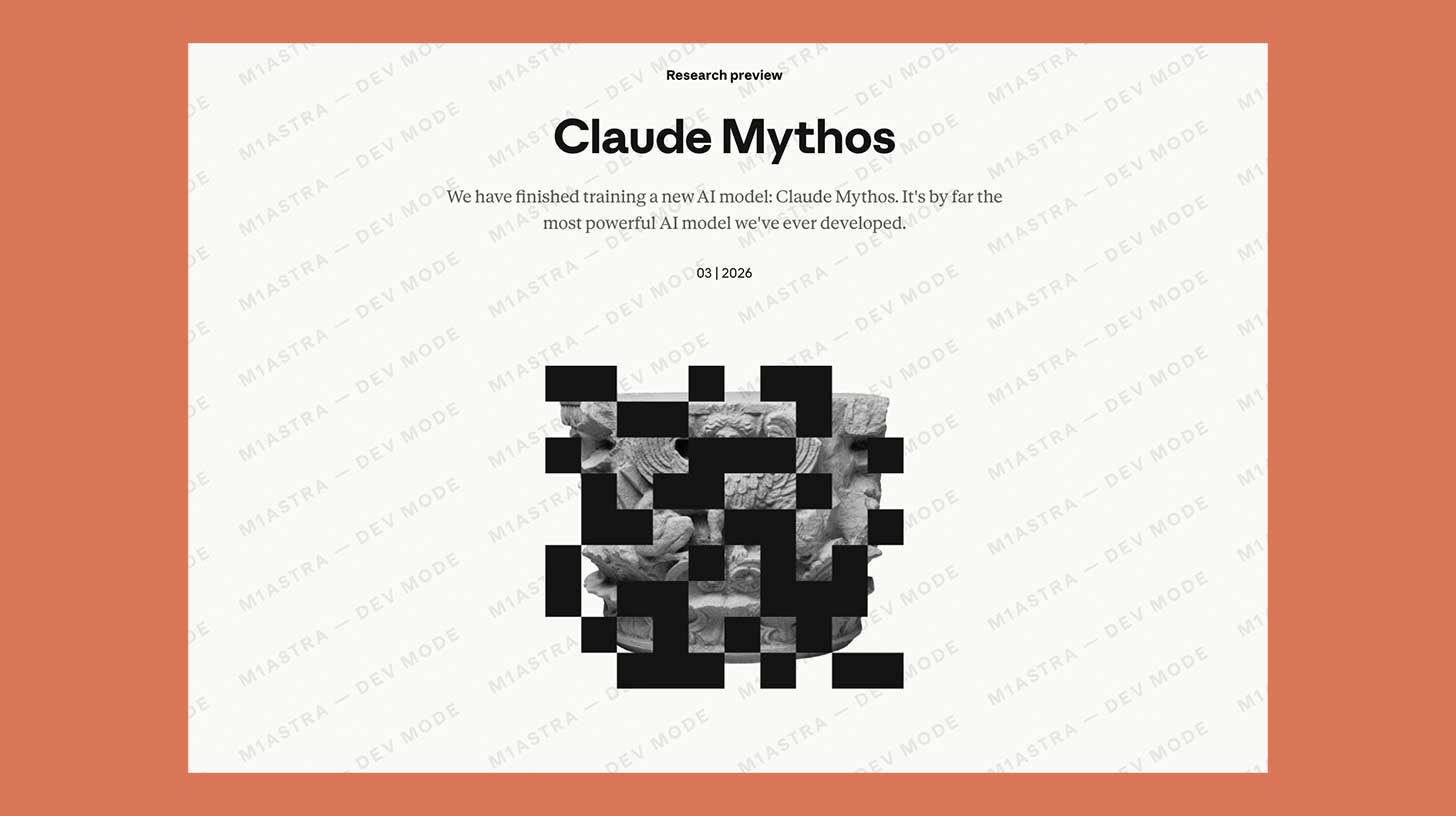

🔓 Anthropic accidentally leaks ‘Mythos’ AI details

Image source: @M1Astra on X

The Rundown: Details of Anthropic's next flagship AI, Claude Mythos, surfaced this week after the company's CMS left launch materials in an unsecured data store, with the leaked blog calling it 'a step change' and Anthropic’s most capable system to date.

The details:

A CMS configuration error left thousands of unpublished assets, including a draft blog post about the model, in a publicly accessible data cache.

The draft placed Mythos in a new “Capybara” tier that would sit above Anthropic’s Opus class, with models that are both larger and more expensive to run.

Anthropic flagged the model as “currently far ahead of any other AI model in cyber capabilities” and warned it could help hackers outpace defenders.

Anthropic confirmed to Fortune that a “new general purpose model with meaningful advances in reasoning, coding, and cybersecurity” is being tested.

Why it matters: A safety-focused AI lab 'accidentally' leaving its most powerful model's launch plans in a public data store has a similar vibe to OpenAI’s Q*-era leaks, where conveniently timed rumors doubled as free hype. Accidental or not, a new model tier above Opus sounds like another major step up the frontier ladder.

TOGETHER WITH PINECONE

🧠 Easily add knowledge to your AI workflows

The Rundown: Pinecone Assistant is an end-to-end knowledge service that handles the heavy lifting behind AI retrieval like chunking, embedding, search, and reranking, so you don't have to build and maintain your own stack. Just upload your data and start querying.

With the new Pinecone Assistant n8n node, you can:

Turn any data source into knowledge for your AI app without building a retrieval pipeline

Upload files like PDFs, DOCX, JSON, TXT, and Markdown, and start chatting with your documents instantly

Connect sources like Google Drive, Slack, and webhooks for accurate, real-time answers
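Pinecone Assistant handles chunking for you, and its exact strategy isn’t described here. For readers curious what that first retrieval step means, here is a minimal, generic sketch of one common approach: splitting a document into fixed-size word windows that overlap slightly, so text near a boundary appears in both neighboring chunks and keeps some of its context. `chunk_words` and its default sizes are illustrative assumptions, not Pinecone’s settings.

```python
def chunk_words(text: str, size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into windows of `size` words; neighbors share `overlap` words.

    Illustrative only; the defaults are arbitrary assumptions.
    """
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap  # how far each new window advances
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # this window already reached the end of the text
    return chunks
```

In a full pipeline, each chunk would then be embedded and indexed for search; production systems often split on headings or sentences rather than raw word counts.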

THE RUNDOWN ROUNDTABLE

💡 The Rundown Roundtable: Our AI use cases

The Rundown: The Rundown Roundtable is a weekly feature where we poll members of The Rundown staff about how we use AI in our work and daily lives.

Nate, University Educator: I continue to use Claude artifacts for all kinds of visualizations. Recently, when purchasing acoustic panels for my ceiling, I wasn't sure how many to buy or the right orientation to install them in.

I had Claude create a mock-up of my room and then lay them out in different orientations and different patterns to optimize the right number, the right order, and the right way to install them. I made sure to purchase the right amount, reduced waste and cuts, and was able to better estimate costs when comparing different options.

I can see this being super valuable for any home improvement project that involves estimating materials across an area, whether it's tile, carpeting, or paneling.
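Under the hood, Nate's comparison comes down to grid arithmetic: how many panels each orientation needs and how much material goes to waste. The Python sketch below is a minimal illustration, not what Claude actually generated. It assumes full-coverage grid layouts, offcuts that are never reused, and hypothetical room and panel dimensions in feet. The function names are illustrative.

```python
import math

def grid_count(area_w, area_l, piece_w, piece_l):
    # Round up on each axis: a partial piece at the edge still
    # consumes one full panel that then gets cut down.
    return math.ceil(area_w / piece_w) * math.ceil(area_l / piece_l)

def compare_layouts(area_w, area_l, piece_w, piece_l):
    # Compare the panel as-is against the panel rotated 90 degrees.
    # Waste = purchased panel area minus the area actually covered
    # (assumes offcuts are thrown away, not reused elsewhere).
    area = area_w * area_l
    results = {}
    for name, (w, l) in {"as_is": (piece_w, piece_l),
                         "rotated": (piece_l, piece_w)}.items():
        count = grid_count(area_w, area_l, w, l)
        results[name] = {"panels": count,
                         "waste_sqft": count * piece_w * piece_l - area}
    return results

# Hypothetical 10 x 13 ft ceiling with 2 x 4 ft panels.
layouts = compare_layouts(10, 13, 2, 4)
best = min(layouts, key=lambda k: layouts[k]["panels"])
print(best, layouts[best])  # as_is {'panels': 20, 'waste_sqft': 30}
```

The same function works for tile or carpet tiles by swapping in their dimensions. Real layouts with gaps between panels or staggered patterns would need a more detailed model.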

Shubham, Editor: Claude created my portfolio website in an instant. I prompted it with my links (LinkedIn and social), described what I wanted, and it built the entire thing in one sitting — design, deployment, DNS config, SEO, and Google indexing included. I made a handful of edits across the session, and it handled every single one without friction.

When things broke during deployment, it debugged them in real time and applied fixes by controlling the browser through its desktop extension. Zero code written by me.

AI TRAINING

💪 Create Skills in ChatGPT with Codex

The Rundown: In this guide, you will learn how to get repeatable "skill"-style workflows to work with ChatGPT. The idea is to use Codex Desktop as a skills playground, create a reusable skill there, and then run it on demand.

Step-by-step:

Install the Codex desktop app, open it on your PC, click add a new project (Cmd/Ctrl + O), then create or select a folder to act as your skills playground

Start a thread and ask Codex to create the needed skill. Example: “Make me a skill to use the AARR framework to evaluate existing startups or business ideas”

If the skill does not appear right away, quit and reopen the Codex app. Then type / and select your new skill to run it like any other repeatable workflow

Pro tip: Type /skill creator to make sure Codex creates it the same way each time. You can also specify whether you want it to be a global skill or a skill just for this folder.

PRESENTED BY CDATA

🔬 Most MCP servers fail more than you think

The Rundown: CData's benchmark ran 378 prompts across CRM, ERP, project management, and data warehouse platforms. Most MCP servers were accurate only 60-75% of the time, silently dropping date filters, half-applying multi-condition queries, and failing validation on write operations. CData's Connect AI achieved 98.5% accuracy.

CData’s report covers:

Where direct API translation fails and why

Common failure patterns by query complexity

How CData Connect AI achieved top scores

DARIO AMODEI & SAM ALTMAN

⚔️ The personal war behind OpenAI and Anthropic

Image source: India AI Impact Summit

The Rundown: The WSJ just laid out the personal grudges, power struggles, and broken promises between Sam Altman and Dario Amodei that trace back to an SF group house in 2016, with the fallout shaping the rivalry between the two AI leaders.

The details:

Dario (2016-2020) and Daniela Amodei (2018-2020) worked at OAI prior to Anthropic, with the WSJ detailing early issues with co-founder Greg Brockman.

Brockman reportedly once floated selling AGI to UN Security Council nuclear powers, a proposal Dario considered 'tantamount to treason.'

The WSJ also reported that Altman, in a private meeting with the board, accused the Amodeis of plotting against him, then denied it when confronted.

Amodei privately likened the Altman/Musk lawsuit to Hitler vs. Stalin, called Brockman's donation to a pro-Trump PAC 'evil,' and compared OAI to Big Tobacco.

Why it matters: Kudos to the WSJ for these nuggets, which paint a much deeper picture of the decade-long drama between Amodei and Altman. The grudges are entertaining, but they're also steering the trajectory of two of the most important AI companies, with impacts that ripple far beyond a personal rivalry.

QUICK HITS

🛠️ Trending AI Tools

🧠 Adapt - The AI computer for your company. It connects every tool, learns your business, and takes action for you directly in Slack*

🎨 Phota Studio - Phota's personalized photo editing and generation model

🧠 TRIBE v2 - AI that simulates brain responses to sights, sounds, language

🗣️ Cohere Transcribe - SOTA, open-source speech recognition model

*Sponsored Listing

📰 Everything else in AI today

Sam Altman reportedly told OAI staff he tried to "save" Anthropic during its Pentagon standoff, per Slack messages seen by Axios — even as OpenAI locked in its own deal.

xAI co-founder Ross Nordeen reportedly left the company this week. He was the last of the startup's original 11 co-founders still there besides Elon Musk.

Pharma giant Eli Lilly entered a $2.75B deal with Hong Kong's Insilico Medicine to license its AI-discovered drug pipeline, with 28 compounds already in development.

Anthropic won a federal injunction blocking the Trump administration's supply-chain-risk designation, with the judge calling it "classic illegal First Amendment retaliation."

Google expanded the rollout of its Live Translate feature to iOS, turning any pair of headphones into a real-time interpreter across 70+ languages.

COMMUNITY

🤝 Community AI workflows

Every newsletter, we showcase how a reader is using AI to work smarter, save time, or make life easier.

Today’s workflow comes from reader David B. in Wichita, KS:

"My dad passed away in February, leaving behind a large box of handwritten letters from the '50s and '60s. I photographed them with my phone and fed the images in bulk to Claude Code: it read the cursive, transcribed everything, and helped me build a family history website with the original photos alongside each transcription.

Something that would have stayed in a box is now a family archive grandkids can actually explore."

How do you use AI? Tell us here.

🎓 Highlights: News, Guides & Events

Read our last AI newsletter: Meta’s new open-source brain AI

Read our last Tech newsletter: SpaceX slips the IPO script

Read our last Robotics newsletter: Amazon now has a kid-sized humanoid

Today’s AI tool guide: Create Skills in ChatGPT with Codex

RSVP to next workshop @ 2 PM EST Thursday: Presentation Slides with AI

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — the humans behind The Rundown

